OpenAI has just announced the newest version of the technology powering popular AI-based tools such as ChatGPT and Microsoft's Bing Chat.

The newest version of the underlying technology is called GPT-4, a half-step up in version number from its predecessor, GPT-3.5. While the .5 may not sound like much at face value, the improvements in this upgrade shouldn't be understated. GPT-4 is many times more powerful than GPT-3.5 and comes with a range of new features that only add to its applicability. For example, GPT-4 can accept images as well as text as input, which OpenAI demonstrated during the company's announcement livestream.

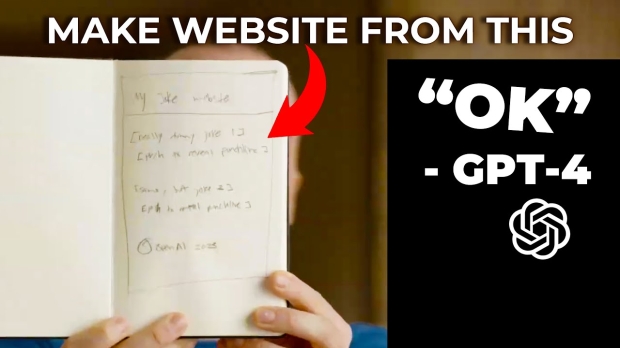

The demonstration above shows that a user can draw an idea for a website on any piece of paper, snap a photo of it, and upload the photo for the AI to analyze. OpenAI explains that when the image is uploaded, the user is actually communicating with a neural network that has been fed an "unimaginable" amount of content and is trained on "what to predict next".
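To give a rough sense of what being trained on "what to predict next" means, here is a minimal sketch of a toy next-word predictor. It is not how GPT-4 works internally: GPT-4 is a large neural network, while this example simply counts which word most often follows another in a tiny sample sentence. The function names `train_bigram_model` and `predict_next` and the sample text are hypothetical, chosen only for illustration.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for each word, which words follow it in the training text."""
    follower_counts = defaultdict(Counter)
    words = text.lower().split()
    # Pair every word with the word that comes right after it.
    for current_word, next_word in zip(words, words[1:]):
        follower_counts[current_word][next_word] += 1
    return follower_counts


def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Hypothetical training text; a real model is trained on vastly more content.
corpus = "the cat sat on the mat and the cat slept"
model = train_bigram_model(corpus)

print(predict_next(model, "the"))
```

In this sample, "the" is followed by "cat" twice and "mat" once, so the toy model predicts "cat". A model like GPT-4 applies the same basic idea of predicting what comes next, but at a vastly larger scale and with a far more capable mechanism than simple counting.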

The user asks the AI to convert what it has analyzed in the provided image into code, such as HTML or JavaScript, and the AI replies almost instantly, turning what was hand-drawn on the paper into a working website. As outlined by OpenAI during the livestream, this quick demonstration represents an almost endless number of possibilities for people to convert what they have drawn in real life into working code that can be used for a variety of applications such as apps, data analysis, websites, and more.

Furthermore, GPT-4 has achieved "human-level performance" in almost every simulated test that was thrown at it. OpenAI outlines on its website that while the differences between GPT-3.5 and GPT-4 appear subtle, GPT-4 comes with a new level of reliability, improved creative responses, the ability to handle much more nuanced instructions, and advanced reasoning capabilities. As for the simulated tests, GPT-4 scores in higher approximate percentiles than GPT-3.5, the model behind ChatGPT's current public version.

Additionally, OpenAI writes on its website that, according to its internal evaluations, GPT-4 is 82% less likely to respond to requests for disallowed content and 40% more likely to produce factual responses than GPT-3.5.

If you are interested in reading more about the capabilities of OpenAI's GPT-4, check out the article linked below, which compares the results of the new and old versions of the AI in simulated tests and gives a longer explanation of all of the differences.