Introduction

The Serial Cables SA-ENC12G-01A JBOD is designed for 2.5-inch HDD/SSD testing environments. The JBOD allows DAS use of 12Gb/s SAS and SATA devices, and it also provides backwards compatibility with the previous 6Gb/s and 3Gb/s generations. A major issue encountered by many manufacturers is signal integrity and 10b errors when testing 12Gb/s storage devices. These problems usually stem from too much signal loss between the host and the end device, and Serial Cables designed its JBOD to address this problem by providing the purest connection possible. The JBOD doesn't feature an expander chip or other components that affect latency.

Serial Cables focuses on providing testing equipment for design and validation engineering groups, along with a wide variety of cables and specialty items. The company offers a wide range of Fibre Channel cables and SFPs, cabling for HD SAS/SATA, SCSI, PCIe, and InfiniBand, and numerous adapters and accessories. Serialcables.com also provides pictures and wiring diagrams of all the cables it supplies.

The wait for 12Gb/s SAS infrastructure was a bit frustrating. We actually had 12Gb/s SSDs in the lab long before there were HBAs or RAID controllers available for testing. Once the required components began trickling in, we ran into problems finding dual-port SAS cabling and connection converters. Serial Cables led the way, and they often have cabling options and specialty items not carried by other vendors. Serial Cables is a certified LSI Channel Alliance Partner and SandForce Trusted Partner, and they provide the cabling supplied with LSI RAID controllers and HBAs.

Creating a refined solution for design and engineering groups, along with normal hardware users, requires attention to detail and a resilient design. 12Gb/s devices require higher quality PCB assemblies that have lower loss. The 12Gb/s SAS specification also calls for enhanced transmit and receive equalization. The JBOD meets these requirements with a 12Gb/s compliant Quarch backplane that provides connections for eight 2.5-inch drives with SFF-8680/SFF-8643 connectors. The SA-ENC12G-01A JBOD also supports dual-port connections to test all SAS features.

The JBOD provides a copious amount of airflow with a rating of 3.5 meters per minute. Typically, anything above two meters per minute is sufficient. The design of the JBOD includes slotted air intakes through the handles to optimize airflow. During our testing, all SSDs and HDDs remained well below the expected temperature range.

The SA-ENC12G-01A has an integrated 150W power supply to power the drives. We tested power consumption during our use of the JBOD. With the high-powered HGST SSD800MH SSDs, it peaked at 111 watts.

Serial Cables also provides a 19-inch rack mount for the JBOD, allowing the placement of two JBODs side-by-side in a rack. The slim design only requires 2U of space, and when stood vertically, the enclosure occupies very little space. The design is efficient and provides an excellent amount of storage density. The fans on the SA-ENC12G-01A are not quiet, but they also don't match the noise of most 1U and 2U server racks. In lab and development environments, noise is not a large concern. The current design doesn't allow fan adjustment, but for office environments, it would be nice to have the ability to dial down the fans.

Serial Cables has enjoyed significant success with this inaugural JBOD design, and they are now shipping the SA-ENC12G-018 tool-less 8-bay JBOD and the larger 24-bay SAS/SATA test box. The 24-bay design also has enhanced features, such as power measurement and individual on/off control, that can be programmed through USB, Telnet, and Ethernet.

We have actually been using the SA-ENC12G-01A for several months with a wide range of storage devices. The design and build quality are top-notch, and the JBOD is warrantied for one year. Let's take a closer look at the device and see how it fares in our testing.

SA-ENC12G-01A Internals

The SA-ENC12G-01A provides storage for eight 2.5-inch storage devices.

The front of the JBOD is simple. A latch on each drive sled releases the drives. The JBOD is constructed of very sturdy metal and has a solid feel to it. A button on the front silences the built-in alarm, and a 'fan-failed' LED indicates a fan problem. There is also a power indicator LED. This small enclosure is very resilient and stood up to the rigors of our test labs for several months with no issue.

The 5,700 RPM Delta 80x28mm fan cools the drives, providing 80 CFM, and it is rated for 52 dB. There is an on/off switch above the fan and four connections for the HD Mini-SAS cables. The power supply receptacle is next to the smaller PSU fan on the bottom of the JBOD.

Removing the side panel exposes the innards of the JBOD. There are two Quarch 12Gb/s backplanes connected to the drive bays. The bays also have slotted sides to facilitate efficient airflow. The backplanes are connected to the rear of the enclosure with a specialized connector, pictured at the bottom of this page. The Zippy EMACS P1S-5150V 150W mini power supply occupies the bottom of the case.

Two PCBs house the components for the front LEDs, alarm, and fan power connections.

One of the most impressive aspects of the SA-ENC12G-01A is the very smooth operation of the drive sleds. Many enclosures and JBODs have significant friction when installing the drives. Some enclosures require a bit of force when inserting the drive. We have actually broken the SAS/SATA connections on the drives during installation in cheap enclosures. The Serial Cables JBOD features a very smooth installation when we push the drives into the enclosure. The complete lack of resistance is impressive. The drive sled design utilizes thin metal and leaves a good amount of space open on the bottom to cool the drives.

The drive sleds have slots open on the front for smooth airflow.

This connector provides the bridge between the rear of the case and the Quarch backplanes. The Dual HD MiniSAS Receptacle (SFF-8644) to Dual Int HD MiniSAS Plug (SFF-8643) can also be bought separately from Serial Cables.

Test System and Methodology

We designed our approach to storage testing to target long-term performance with a high level of granularity. Many testing methods record peak and average measurements during the test period. These average values give a basic understanding of performance, but they fall short in providing the clearest view possible of I/O Quality of Service (QoS).

'Average' results do little to indicate performance variability experienced during actual deployment. The degree of variability is especially pertinent as many applications can hang or lag as they wait for I/O requests to complete. This testing methodology illustrates performance variability, and includes average measurements, during the measurement window.

While under load, all storage solutions deliver variable levels of performance. While this fluctuation is normal, the degree of variability is what separates enterprise storage solutions from typical client-side hardware. Providing ongoing measurements from our workloads with one-second reporting intervals illustrates product differentiation in relation to I/O QoS. Scatter charts give readers a basic understanding of I/O latency distribution without directly observing numerous graphs.

Consistent latency is the goal of every storage solution, and measurements such as Maximum Latency only illuminate the single longest I/O received during testing. This can be misleading as a single 'outlying I/O' can skew the view of an otherwise superb solution. Standard Deviation measurements consider latency distribution, but they do not always effectively illustrate I/O distribution with enough granularity to provide a clear picture of system performance. We utilize high-granularity I/O latency charts to illuminate performance during our test runs.
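To make the point about outliers concrete, here is a minimal Python sketch that summarizes a latency sample several ways. The function name, the nearest-rank percentile method, and the sample values are illustrative assumptions, not the tooling or data used in this review.

```python
import statistics


def latency_summary(latencies_ms):
    """Summarize per-I/O latencies (milliseconds) with several statistics."""
    ordered = sorted(latencies_ms)

    def percentile(p):
        # Nearest-rank percentile: the smallest sample with at least
        # p percent of all samples at or below it (integer ceiling).
        rank = max(1, (len(ordered) * p + 99) // 100)
        return ordered[rank - 1]

    return {
        "mean": statistics.fmean(ordered),
        "stdev": statistics.pstdev(ordered),
        "p99": percentile(99),
        "max": ordered[-1],
    }


# Hypothetical sample: 999 I/Os at 0.2 ms plus a single 50 ms outlier.
sample = [0.2] * 999 + [50.0]
summary = latency_summary(sample)
for name, value in summary.items():
    print(f"{name:>5}: {value:.4f} ms")
```

In this made-up sample, the maximum is 250 times the 99th percentile even though only one I/O in a thousand was slow, which is why a single summary number can misrepresent an otherwise consistent device.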

Our testing regimen follows key SNIA principles to ensure consistent, repeatable testing. The first page of results will provide the 'key' to understanding and interpreting our new test methodology. In replicated environments, RAID 0 can be a compelling choice for bleeding edge performance. RAID 5 provides a layer of data security that protects from the loss of a drive. We test RAID 0 and RAID 5 for this evaluation. During testing, we noted periodic performance irregularities that occurred with both controllers at similar intervals. Because the irregularities appeared with similar timing on both controllers, we concluded they stem from garbage collection (GC) or other internal functions of the HGST SSD test array.

To test the Serial Cables SA-ENC12G-01A JBOD, we required the utmost performance, and the SSD800MH provides the best result in steady state of the available 12Gb/s SSDs. The HGST SSD800MH SSDs feature sequential read/write speeds of 1200/750 MB/s and read/write IOPS of 145,000/100,000.

We paired the HGST SSDs with the Adaptec ASR-8885 12Gb/s RAID controller. Tests were conducted at default controller settings.

Benchmarks - RAID 0 4k Random Read/Write

We precondition the ASR-8885 RAID controller with an array of eight HGST SSD800MH for 9,000 seconds, or two and a half hours, receiving performance reports every second. We plot this data to illustrate the drives' descent into steady state.

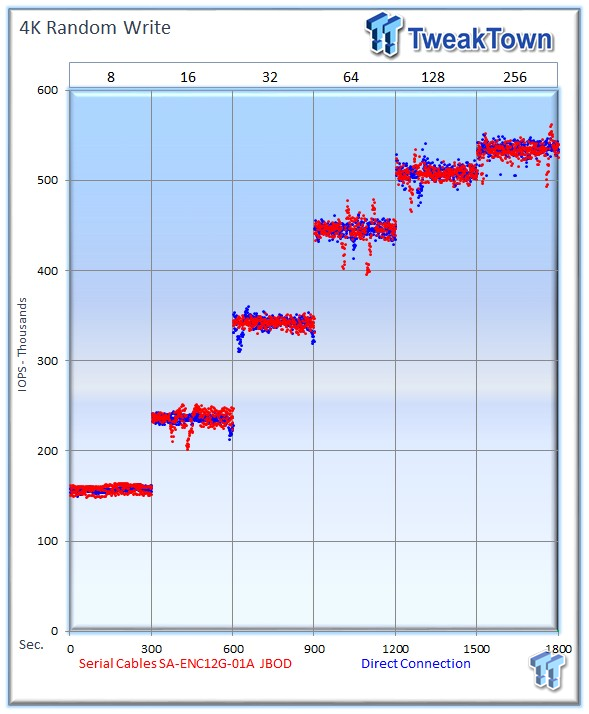

This chart consists of 18,000 data points. The red dots signify IOPS for the SA-ENC12G-01A JBOD, and the blue dots are the same array of SSDs connected directly to the ASR-8885 during the test. The lines through the data scatter are the average during the test. This type of testing presents standard deviation and maximum/minimum I/O in a visual manner.

This downward slope of performance only happens during the first few hours of use, and we present precondition results only to confirm steady state convergence.
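One simple way to confirm convergence from one-second reports is to compare the averages of the two most recent windows. The sketch below is only an illustration: the window length, the 1 percent tolerance, and the synthetic trace are assumptions, and it is not the formal SNIA steady-state criterion.

```python
def window_mean(samples, start, length):
    """Average of `length` samples beginning at index `start`."""
    window = samples[start:start + length]
    return sum(window) / len(window)


def has_converged(iops, window=300, tolerance=0.01):
    """True if the means of the last two windows differ by less than `tolerance`."""
    if len(iops) < 2 * window:
        return False  # not enough data to compare two full windows
    last = window_mean(iops, len(iops) - window, window)
    previous = window_mean(iops, len(iops) - 2 * window, window)
    return abs(last - previous) / previous < tolerance


# Hypothetical 9,000-second trace: a linear decline, then flat performance.
trace = [900_000 - 100 * t for t in range(3000)] + [600_000] * 6000

print(has_converged(trace[:600]))  # still declining early in the run
print(has_converged(trace))        # flat by the end of the run
```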

Each level tested includes 300 data points (five minutes of one second reports) to illustrate performance variability. The line for each OIO depth represents the average speed reported during the five-minute interval. 4k random speed measurements are an important metric when comparing drive performance as the hardest type of file access for any storage solution to master is small-file random. One of the most sought-after performance specifications, 4k random performance is a heavily marketed figure.
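The per-level average line can be reproduced from the raw one-second reports with a few lines of Python. In this sketch the list of OIO levels and the synthetic report values are assumptions for illustration; only the 300-samples-per-level figure comes from the text above.

```python
from statistics import fmean

SAMPLES_PER_LEVEL = 300  # five minutes of one-second reports


def average_per_level(reports, levels):
    """Split a flat list of one-second IOPS reports into per-OIO averages."""
    if len(reports) != SAMPLES_PER_LEVEL * len(levels):
        raise ValueError("expected 300 reports per OIO level")
    averages = {}
    for i, oio in enumerate(levels):
        chunk = reports[i * SAMPLES_PER_LEVEL:(i + 1) * SAMPLES_PER_LEVEL]
        averages[oio] = fmean(chunk)
    return averages


# Assumed OIO levels and made-up IOPS values, for illustration only.
levels = [1, 2, 4, 8, 16, 32, 64, 128, 256]
reports = []
for oio in levels:
    reports.extend([1000.0 * oio] * SAMPLES_PER_LEVEL)

averages = average_per_level(reports, levels)
for oio, mean_iops in averages.items():
    print(f"OIO {oio:>3}: {mean_iops:,.0f} IOPS")
```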

The SSDs inside the Serial Cables SA-ENC12G-01A 12Gb/s JBOD average 685,760 IOPS at 256 OIO, and with the SSDs directly attached to the ASR-8885, they average 685,203 IOPS. This slight difference falls well within the tolerances of repeated testing, and is well below a 1 percent variance. This workload produces the most IOPS of our tests and quickly sets the tone for the remainder of our testing. During this test, we are pushing the highest IOPS during our regimen, and the Serial Cables JBOD comes away with a perfect result. We test with other types of workloads to verify the results as mixed workloads can introduce jitter into our test results.
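The variance claim is easy to check by hand. The short sketch below uses the two averages quoted above; the function name is our own, and treating the direct connection as the baseline is an assumption about how the percentage is defined.

```python
def percent_difference(jbod, direct):
    """Absolute difference as a percentage of the direct-attached result."""
    return abs(jbod - direct) / direct * 100.0


# 4k random read averages at 256 OIO, as reported above.
diff = percent_difference(685_760, 685_203)
print(f"{diff:.3f} percent")
```

The gap of 557 IOPS works out to roughly 0.08 percent, comfortably under the 1 percent mark.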

Examining the latency results at a high level of granularity reveals no additional latency impact from deploying the 12Gb/s JBOD.

As we move into our mixed testing, we note some increased variability due to the nature of the solid state devices we are testing. The SSD800MH produces the most consistent performance of the 12Gb/s SAS SSDs, and the Serial Cables enclosure adds no performance impact. The JBOD averages 533,193 IOPS at 256 OIO, and the direct connected SSDs average 536,286 IOPS. Again, the variance is well below 1 percent.

Latency is unaffected by the addition of the JBOD into the storage subsystem.

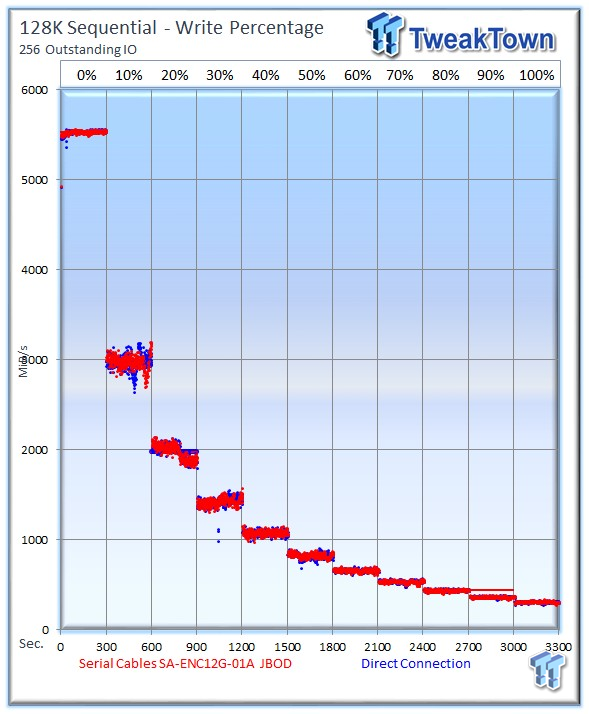

Our write percentage testing illustrates the varying performance of each solution with mixed workloads. The 100 percent column to the right is a pure 4k write workload, and 0 percent represents a pure 4k read workload.

Our write percentage testing shakes out the loose ends when we test all manner of storage devices, whether they are HDDs, SSDs, RAID controllers, or HBAs. Typically, if there is a significant weakness hiding in the performance, this test flushes it out. The performance of the 12Gb/s JBOD remains unaffected in comparison to the direct connection, delivering the same level of superb performance without impacting the storage subsystem.

Benchmarks - RAID 0 8k Random Read/Write

Server workloads rely heavily upon 8k performance, and we include this as a standard with each evaluation. Many of our server emulations also test 8k performance with various mixed read/write workloads.

The Serial Cables 12Gb/s JBOD averages 534,222 IOPS at 256 OIO, and the direct connection averages 537,601 IOPS. Note the dips at 256 OIO; these are from the internal garbage collection routines of the HGST SSD800MH SSDs. These same performance drops occur with both connection methods, verifying they are unrelated to the JBOD.

Latency consistency falls well within expectations.

The 12Gb/s JBOD provides an average of 399,579 IOPS at 256 OIO. The direct connection delivers an average of 400,577 IOPS at 256 OIO. The difference of less than 1,000 IOPS is superb.

Once again, the Serial Cables SA-ENC12G-01A delivers a superb connection for the SSDs, and it remains within the error tolerances of the test.

Benchmarks - RAID 0 128k Sequential Read/Write

128k sequential speed reflects the maximum sequential throughput. The 12Gb/s JBOD provides an outstanding sequential read speed of 5,534 MiB/s. The direct connection provides a lower average of 5,495 MiB/s, with the majority of the disparity occurring from an SSD GC cycle during the measurement period. This is an amazing amount of performance from both connection methods.

Latency testing reveals expected scaling.

The Serial Cables SA-ENC12G-01A provides an average of 4,713 MiB/s, and the direct connection nets 4,741 MiB/s.

The difference in performance below is negligible.

Write percentage testing reveals both connection methods are closely matched with mixed workloads.

Benchmarks - RAID 5 4k Random Read/Write

RAID 5 testing may seem a bit redundant--pun intended--but RAID 5 introduces another layer of complex I/O into the equation. We start RAID 5 testing with a 4k random read workload, and the Serial Cables SA-ENC12G-01A delivers an outstanding average of 679,146 IOPS at 256 OIO. The direct connection averages 679,021 IOPS at the highest workload. The variance of less than 150 IOPS is outstanding.

The 12Gb/s JBOD tops out at 31,176 IOPS at 64 OIO, and the direct connection averages 31,188 IOPS, a difference of just 12 IOPS.

Once again, the results are so close that the variances are barely detectable.

Benchmarks - RAID 5 8k Random Read/Write

The array goes through some internal activity for both connection methods during the 256 OIO measurement period. This skews the average performance of both connections during that measurement period significantly. Subsequent tests resulted in the same interference at this point in the test, so we use 128 OIO as our measurement for this test. The 12Gb/s JBOD averages 499,107 IOPS at 128 OIO, and the direct connection provides 499,109 IOPS. A variance of just 2 IOPS is amazing.

The 12Gb/s JBOD tops out at 29,492 IOPS at 64 OIO, while the direct connection reaches 29,488 IOPS at 64 OIO.

Outside of the 0 percent write workload, which had significant GC activities for both connection methods, the results are identical.

Benchmarks - RAID 5 128k Sequential Read/Write

The 12Gb/s JBOD delivers an average of 5,532 MiB/s in sequential read speed, and the direct connection yields 5,530 MiB/s.

The 12Gb/s JBOD averages 309 MiB/s at 256 OIO, and the direct connection provides 310 MiB/s.

The write percentage testing closes out our tests with very similar results.

Final Thoughts

Testing enterprise SSDs is a tricky business. SSDs deliver varying performance depending upon what state they are in. When SSDs are fresh, they deliver astronomical performance, but once full and subjected to heavy workloads, they drop to a lower level of performance referred to as steady state. Removing the test load from the SSDs allows them to begin recovery, and the best SSDs pull back up near the original fresh performance with extended idle time.

The key to testing flash-based storage solutions is to ensure repeatable results. With wild performance fluctuations from the SSDs, tests require design and execution in a manner that ensures the SSDs are in the same performance state during measurement periods. The SNIA committee has designed guidelines for the accurate testing of SSDs, and we adhere to the guidelines for preconditioning and test methodology. This provides us with accurate and repeatable results, which are key when comparing and contrasting the performance impact of different storage subsystem components.

The 12Gb/s JBOD is designed specifically for HDD/SSD testing environments, and this requires a transparent bridge between the DUT (Device Under Test) and the HBA/RAID controller. Pushing the limits of the 12Gb/s connection is required to ensure that the SA-ENC12G-01A doesn't incur any performance or latency penalties.

The common belief has always been that the best method of testing storage devices is with a direct connection. This provides the most transparent connection between the DUT and the HBA/RAID controller. We have adhered to this guideline for years. This leads to some messy wiring setups when pushing arrays of 24 SSDs, but it has always been considered necessary to remove the chance of interference from backplanes, JBODs, and enclosures. We don't go to the extremes of direct connection for massive setups for no reason; our testing with different enclosures and backplanes has rooted out significant negative performance impacts in the past.

It is safe to say the SA-ENC12G-01A has changed that perception for us. This is the first enclosure we have tested that provides nearly unmeasurable interference in test results. The tests speak for themselves; the variances are well below what we would expect from test to test even with a direct connection. There are so many factors at play, including the SSDs, RAID controller, and operating system, that we normally would not expect results this close in line with each other, even if testing with the same direct connection repeatedly. The Serial Cables enclosure simply blew away our expectations, and in some cases, it delivered slightly higher results than direct connection. Of course, this is due to the nature of SSD performance, and using the enclosure will not provide faster performance from the storage devices. However, this is indicative of a pure unadulterated connection.
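The headline averages quoted on the benchmark pages can be tallied to back this up. The sketch below collects each JBOD/direct pair in the order it appears in the text; the row labels are our own shorthand (the text does not always name the workload), and the direct connection is assumed as the baseline.

```python
# (label, JBOD average, direct average), in the order reported in the review.
# Units are IOPS for random tests and MiB/s for 128k sequential tests.
RESULTS = [
    ("RAID 0 4k (first result)", 685_760, 685_203),
    ("RAID 0 4k (second result)", 533_193, 536_286),
    ("RAID 0 8k (first result)", 534_222, 537_601),
    ("RAID 0 8k (second result)", 399_579, 400_577),
    ("RAID 0 128k (first result)", 5_534, 5_495),
    ("RAID 0 128k (second result)", 4_713, 4_741),
    ("RAID 5 4k (first result)", 679_146, 679_021),
    ("RAID 5 4k (second result)", 31_176, 31_188),
    ("RAID 5 8k (first result)", 499_107, 499_109),
    ("RAID 5 8k (second result)", 29_492, 29_488),
    ("RAID 5 128k (first result)", 5_532, 5_530),
    ("RAID 5 128k (second result)", 309, 310),
]


def percent_gap(jbod, direct):
    """Absolute difference as a percentage of the direct-attached result."""
    return abs(jbod - direct) / direct * 100.0


gaps = {label: percent_gap(jbod, direct) for label, jbod, direct in RESULTS}
worst_label = max(gaps, key=gaps.get)
jbod_higher = sum(1 for _, jbod, direct in RESULTS if jbod > direct)

print(f"Largest gap: {gaps[worst_label]:.2f} percent ({worst_label})")
print(f"JBOD ahead in {jbod_higher} of {len(RESULTS)} comparisons")
```

Every gap stays under 1 percent, and the JBOD is nominally ahead in five of the twelve comparisons, which is consistent with run-to-run noise rather than any real advantage or penalty.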

Build quality is superb. A common problem we experience with all manner of enclosures occurs when we install the drives. The latching devices usually have some small hitching, or require a bit of pressure, to seat correctly. We have even broken drive connectors in the past with low-quality enclosures. The Serial Cables JBOD has the smoothest function we have experienced in this respect; there is literally no friction when installing and latching the drives. The use of the enclosure in labs and development environments will result in thousands of opening/closing cycles, and the JBOD was obviously designed with that in mind.

The Serial Cables SA-ENC12G-01A delivers excellent performance that doesn't hamper test results and also has a superior build quality, meriting the TweakTown Editor's Choice Award.
