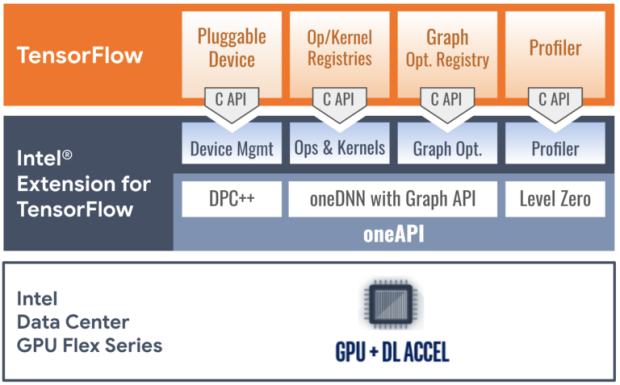

Intel has announced that its new Data Center GPU Flex Series cards have joined the family of TensorFlow PluggableDevices through a plug-in called Intel Extension for TensorFlow.

Intel Extension for TensorFlow is an open-source, high-performance deep learning extension that implements the TensorFlow PluggableDevice interface so that TensorFlow applications can run on Intel AI hardware. It makes Intel XPU (GPU, CPU, and more) devices readily accessible to TensorFlow developers.

With the new extension, Intel says developers can train TensorFlow models and run inference on Intel AI hardware with "zero code change". Intel Extension for TensorFlow is built on oneAPI software components, and most of the performance-critical graphs and operators are optimized by the Intel oneAPI Deep Neural Network Library (oneDNN), an open-source, cross-platform performance library for deep learning applications.
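To make the "zero code change" claim more concrete, here is a minimal sketch of how a script might confirm that the plug-in's device is visible to TensorFlow. It assumes that both `tensorflow` and the Intel extension package are installed and that the plug-in registers its devices under the device type `XPU`; the helper `pick_device_type` is a hypothetical name used only for illustration.

```python
# Hypothetical helper: choose the most preferred device type that is present.
# The "XPU" device type string is an assumption about how the plug-in
# registers its devices with TensorFlow.
def pick_device_type(available_types, preferred=("XPU", "GPU", "CPU")):
    for device_type in preferred:
        if device_type in available_types:
            return device_type
    return None


# TensorFlow is optional here so the helper can be tried without it.
try:
    import tensorflow as tf
except ImportError:
    tf = None

if tf is not None:
    # list_physical_devices() returns every device TensorFlow can see,
    # including devices contributed by installed PluggableDevice plug-ins.
    types = [d.device_type for d in tf.config.list_physical_devices()]
    print("Visible device types:", types)
    print("Would run on:", pick_device_type(types))
```

If the plug-in is loaded, existing model code should not need explicit device placement; a check like this is only a sanity test that the device was discovered.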

- Device management: Intel and Google developers implemented TensorFlow's StreamExecutor C API in C++ with SYCL, with some special support from the oneAPI SYCL runtime (DPC++ LLVM SYCL project). The StreamExecutor C API defines stream, device, context, and memory structures along with related functions, all of which map trivially onto corresponding implementations in the SYCL runtime.

- Op and kernel registration: TensorFlow's kernel and op registration C API allows device-specific kernel implementations and custom operations. To ensure sufficient model coverage, the development team matched the op coverage of TensorFlow's native GPU device, implementing most performance-critical ops by calling highly optimized deep learning primitives from the oneAPI Deep Neural Network Library (oneDNN). Other ops are implemented with SYCL kernels or with the Eigen math library, which the plug-in ports to C++ with SYCL so that it can generate programs to implement device ops.

- Graph optimization: The Flex Series GPU plug-in optimizes TensorFlow graphs in Grappler through the Graph C API and offloads performance-critical graph partitions to the oneDNN library through the oneDNN Graph API. It receives a protobuf-serialized graph from TensorFlow, deserializes the graph, identifies appropriate subgraphs and replaces them with a custom op, and sends the graph back to TensorFlow. When TensorFlow executes the processed graph, the custom ops are mapped to oneDNN's optimized implementation for their associated oneDNN Graph partitions.

- Profiler: The Profiler C API lets PluggableDevices communicate profiling data in TensorFlow's native profiling format. The Flex Series GPU plug-in takes a serialized XSpace object from TensorFlow, fills the object with runtime data obtained through the oneAPI Level Zero low-level device interface, and returns the object to TensorFlow. Users can display the execution profile of specific ops on the Flex Series GPU with TensorFlow's profiling tools, such as TensorBoard.
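The graph optimization step described above (find a subgraph, replace it with one custom op, hand the graph back) can be illustrated with a toy sketch. This is not the plug-in's code and does not use Grappler or oneDNN: the `Node` class, the `fuse_pattern` function, and the op name `HypotheticalFusedConv` are all assumptions made up for illustration, and the graph is a plain Python list rather than a protobuf.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    op: str
    inputs: list = field(default_factory=list)


def fuse_pattern(nodes, pattern=("Conv2D", "BiasAdd", "Relu"),
                 fused_op="HypotheticalFusedConv"):
    """Replace each linear chain of ops matching `pattern` with one node."""
    by_name = {n.name: n for n in nodes}
    consumers = {}
    for n in nodes:
        for src in n.inputs:
            consumers.setdefault(src, []).append(n.name)

    replaced = {}    # name of a chain's last node -> fused replacement node
    absorbed = set() # names of all nodes swallowed by a fused node
    for head in nodes:
        if head.op != pattern[0] or head.name in absorbed:
            continue
        chain = [head]
        for expected in pattern[1:]:
            users = consumers.get(chain[-1].name, [])
            # Only fuse when the intermediate result has exactly one consumer.
            if len(users) != 1 or by_name[users[0]].op != expected:
                break
            chain.append(by_name[users[0]])
        else:
            inner = {n.name for n in chain}
            external = [i for n in chain for i in n.inputs if i not in inner]
            # Reuse the last node's name so downstream inputs stay valid.
            replaced[chain[-1].name] = Node(chain[-1].name, fused_op, external)
            absorbed.update(inner)

    result = []
    for n in nodes:
        if n.name in replaced:
            result.append(replaced[n.name])
        elif n.name not in absorbed:
            result.append(n)
    return result


example = [
    Node("x", "Placeholder"),
    Node("w", "Const"),
    Node("b", "Const"),
    Node("conv", "Conv2D", ["x", "w"]),
    Node("bias", "BiasAdd", ["conv", "b"]),
    Node("relu", "Relu", ["bias"]),
    Node("out", "Identity", ["relu"]),
]
optimized = fuse_pattern(example)
for node in optimized:
    print(node.name, node.op, node.inputs)
```

The single-consumer check matters: if an intermediate tensor is read elsewhere in the graph, folding it into a fused op would remove a value another node still needs.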

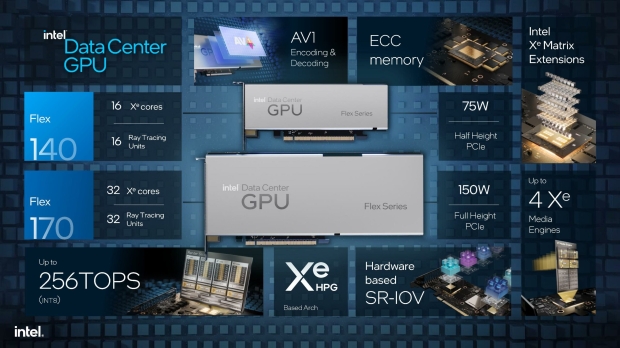

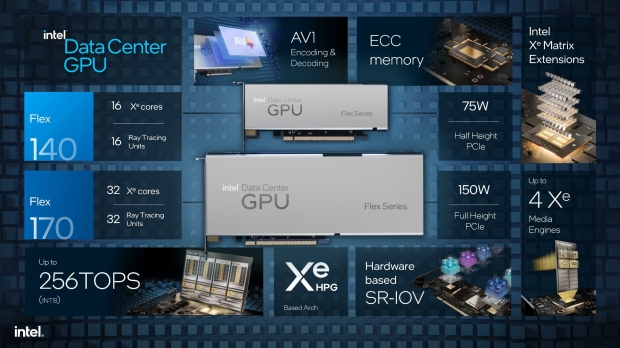

The Intel Data Center GPU Flex Series 170 is a full-height, single-wide, passively cooled card with a 150W TDP. It features a single GPU with 32 Xe Cores, up to 16 TFLOPS of FP32 compute performance, 16GB of GDDR6 memory, and a PCIe 4.0 interface.

Intel also has the Data Center GPU Flex Series 140, a half-height, single-wide, passively cooled card with a 75W TDP.

This card has two GPUs with 16 Xe Cores in total (8 Xe Cores per GPU), half as many as the single-GPU Flex Series 170. It offers only 8 TFLOPS of FP32 compute performance and 12GB of GDDR6 memory in total (6GB per GPU).