Intel and AMD have partnered to standardize a new x86 ISA extension to supercharge AI performance. Under the x86 Ecosystem Advisory Group (EAG), AI Compute Extensions (ACE) are engineered to deliver a 16x increase in compute density over standard AVX10 instructions.

Traditional vector (SIMD) hardware and instruction sets such as AVX operate on one-dimensional data. Machine learning, which is dominated by matrix multiply-accumulate (MMA) operations, has created demand for specialized hardware that operates on two-dimensional data. This need led to the rise of Tensor Cores in GPUs, and now ACE brings the same approach to the x86 CPU.

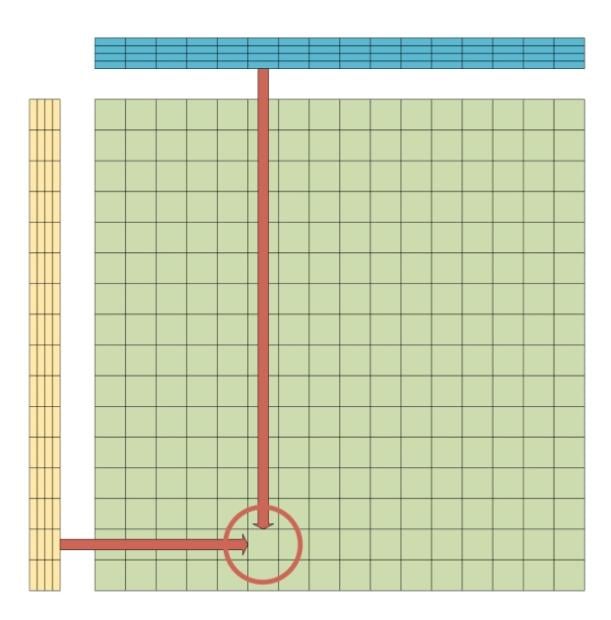

ACE uses an outer-product algorithm and eight new 2D Tile Registers, each holding a 16x16 grid of 32-bit values. Large AI datasets are split into sub-matrices, and the hardware consumes two 16x4 input matrices of 8-bit values (the Blue and Yellow matrices). At each intersection on the 16x16 grid, the hardware computes an inner product between a 1x4 vector from one input and a 4x1 vector from the other.
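To make the grid of inner products concrete, here is a minimal pure-Python sketch of one such step. It is a model of the arithmetic described above, not ACE code: the helper name `inner_product_grid`, the choice of signed 8-bit inputs, and the reading that row `i` of one input is paired with row `j` of the other are all assumptions made for illustration.

```python
import random

TILE = 16  # the tile register is 16x16, per the article
K = 4      # each input matrix is 16x4, per the article

def inner_product_grid(blue, yellow):
    """Return a 16x16 grid where cell (i, j) is the inner product of
    row i of `blue` (1x4) and row j of `yellow` (read as 4x1).
    Hypothetical helper for illustration; not an ACE instruction or API."""
    return [
        [sum(blue[i][k] * yellow[j][k] for k in range(K)) for j in range(TILE)]
        for i in range(TILE)
    ]

random.seed(0)
# Assumption: signed 8-bit inputs in [-128, 127]; the article only says "8-bit".
blue = [[random.randint(-128, 127) for _ in range(K)] for _ in range(TILE)]
yellow = [[random.randint(-128, 127) for _ in range(K)] for _ in range(TILE)]

green = inner_product_grid(blue, yellow)
print(len(green), "x", len(green[0]), "results")
```

Each cell is a sum of four products of 8-bit values, so its magnitude is at most 4 x 128 x 128 = 65,536, which fits comfortably in a 32-bit tile element.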

This setup produces 256 inner products simultaneously and stores them in the Tile Register (the Green matrix). Each inner product takes four multiplications, so in total the hardware performs 256 x 4 = 1,024 multiplications per clock cycle. The Tile Register is not overwritten on the next cycle. Instead, it accumulates results, adding new values to the existing ones.
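The accumulate behaviour can be sketched the same way. The example below models a tile register as a 16x16 list of integers and feeds it two consecutive 16x4 chunks of a wider input; the function name `tile_accumulate` and the toy input values are assumptions for illustration, not part of any published ACE interface.

```python
TILE = 16    # tile register is 16x16
K_STEP = 4   # each step consumes 16x4 inputs
K_TOTAL = 8  # toy depth: two steps' worth of input columns

def tile_accumulate(acc, a_chunk, b_chunk):
    """Model one step: acc[i][j] += dot(a_chunk[i], b_chunk[j]).
    Returns the number of scalar multiplications performed.
    Hypothetical helper; not an ACE instruction."""
    mults = 0
    for i in range(TILE):
        for j in range(TILE):
            for k in range(K_STEP):
                acc[i][j] += a_chunk[i][k] * b_chunk[j][k]
                mults += 1
    return mults

# Toy 16x8 inputs with small signed values (well inside the 8-bit range).
a = [[(i + k) % 7 - 3 for k in range(K_TOTAL)] for i in range(TILE)]
b = [[(j * k) % 5 - 2 for k in range(K_TOTAL)] for j in range(TILE)]

acc = [[0] * TILE for _ in range(TILE)]  # stands in for one tile register
mults_per_step = []
for start in range(0, K_TOTAL, K_STEP):
    a_chunk = [row[start:start + K_STEP] for row in a]
    b_chunk = [row[start:start + K_STEP] for row in b]
    mults_per_step.append(tile_accumulate(acc, a_chunk, b_chunk))

# Reference: the full-depth inner products computed in one go.
expected = [
    [sum(a[i][k] * b[j][k] for k in range(K_TOTAL)) for j in range(TILE)]
    for i in range(TILE)
]
print(mults_per_step, acc == expected)
```

Because the register adds to its existing contents rather than replacing them, two steps over 16x4 chunks give the same result as a single pass over the full 16x8 inputs, which is what allows a large matrix product to be built up from small sub-matrices.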

Traditional AVX, without optimizations, treats a 512-bit vector as a simple row of 64 8-bit elements (512 / 8 = 64), resulting in only 64 multiplications. The jump from 64 to 1,024 (1,024 / 64 = 16) is the source of the 16x performance claim. ACE is not a replacement for AVX, but an extension of the instruction set.
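The arithmetic behind the 16x figure can be checked in a few lines. This sketch uses only the numbers quoted in the article; the variable names are illustrative, and it counts multiplications only, so it says nothing about real-world throughput.

```python
VECTOR_BITS = 512    # AVX vector width, per the article
ELEMENT_BITS = 8     # 8-bit input data
TILE_ROWS = 16       # tile register is 16x16
TILE_COLS = 16
MULTS_PER_CELL = 4   # each cell is a 1x4 by 4x1 inner product

avx_mults = VECTOR_BITS // ELEMENT_BITS               # one multiply per 8-bit lane
ace_mults = TILE_ROWS * TILE_COLS * MULTS_PER_CELL    # multiplies per tile step
ratio = ace_mults // avx_mults

print(f"AVX: {avx_mults}, ACE: {ace_mults}, ratio: {ratio}x")
```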

Because the extension is a joint effort between the two x86 giants, ACE is poised to avoid the instruction fragmentation that hindered the rollout of AVX-512. The architecture is designed to handle lightweight AI workloads directly on the CPU, removing the need to engage a more power-hungry GPU and improving energy efficiency.

Currently, no CPUs with ACE support have been announced or seen in the wild. However, software enablement is underway, with a focus on optimized kernels, libraries, and ML runtime integrations. The team is adding ACE support to major libraries like NumPy and SciPy, as well as to AI frameworks such as PyTorch and TensorFlow.

Historically, the greatest strength of the x86 ecosystem has been its scale, and its greatest weakness has been fragmentation. By co-authoring ACE, Intel and AMD have established a consistent standard across the entire stack. This unified approach is meant to simplify software deployment, so that the same code runs identically whether it's on a consumer-grade laptop or in a rack-scale data center. However, it will likely be a while before this partnership bears fruit.