NVIDIA has just announced its new A30 Tensor Core GPU, which the company calls its "most versatile mainstream compute GPU for AI inference and mainstream enterprise workloads".

The new NVIDIA A30 Tensor Core GPU was built for AI inference at scale, packing an Ampere GPU with 24GB of HBM2 memory and 933GB/sec of memory bandwidth. NVIDIA includes third-gen NVLink support here, with 200GB/sec of bandwidth available between GPUs in multi-GPU configurations.

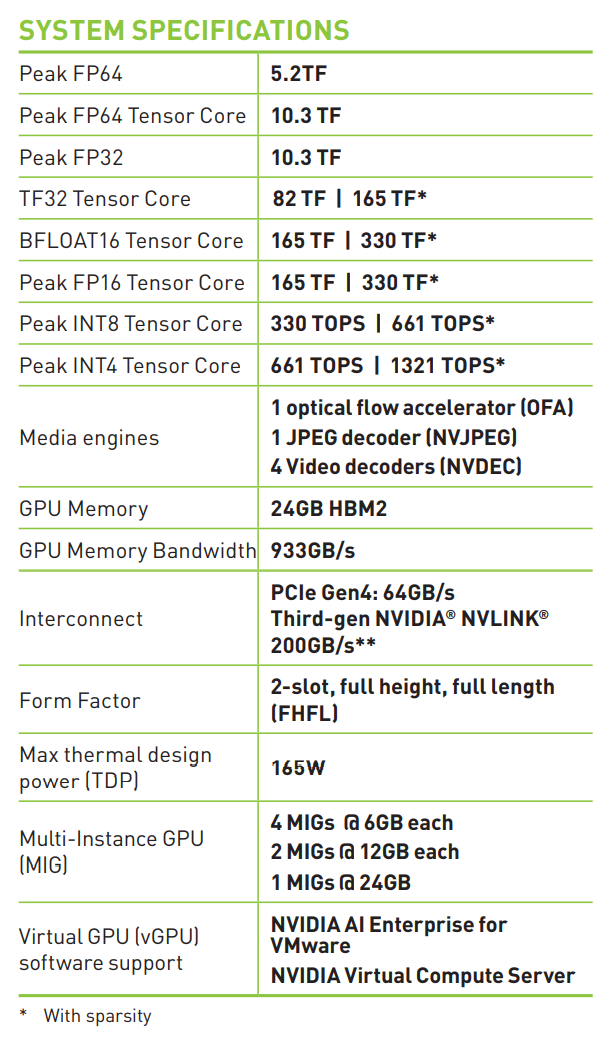

We have peak FP32 performance of 10.3 TFLOPs, peak FP64 performance of 5.2 TFLOPs, and peak FP64 Tensor Core performance of 10.3 TFLOPs, all within a low-power 165W TDP. Not only that, but the NVIDIA A30 Tensor Core GPU comes as a regular PCIe 4.0 card that will slot into mainstream servers.
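To put those figures in perspective, here is a rough sketch that divides each quoted peak number by the quoted 165W TDP. The function name `gflops_per_watt` is made up for illustration, and the result is only a theoretical ceiling based on the numbers above, not a measured efficiency figure.

```python
# Peak throughput and TDP as quoted in the article.
TDP_WATTS = 165
PEAK_TFLOPS = {
    "FP32": 10.3,
    "FP64": 5.2,
    "FP64 Tensor Core": 10.3,
}


def gflops_per_watt(tflops, watts=TDP_WATTS):
    """Convert peak TFLOPs to GFLOPs and divide by the board TDP.

    Hypothetical helper: this is plain arithmetic on spec-sheet peaks,
    not a benchmark result.
    """
    return tflops * 1000 / watts


for name, tflops in PEAK_TFLOPS.items():
    print(f"{name}: {gflops_per_watt(tflops):.1f} GFLOPs per watt (peak)")
```

Real-world efficiency will depend on the workload and how close it gets to peak throughput.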

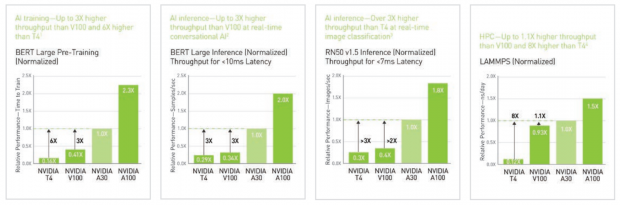

NVIDIA is showing off some huge upgrades over its T4 and V100 cards, with big uplifts in performance across a range of professional workloads and applications.

Depending on the software, you're looking at a 6x increase in performance over the NVIDIA T4 in BERT Large Pre-Training (Normalized), and up to an insane 8x increase over the T4 in LAMMPS (Normalized). That's quite the performance gain there, NVIDIA.

The new NVIDIA A30 Tensor Core GPU can be used in multi-instance GPU (MIG) scenarios, which will let developers get access to up to four GPU instances, each fully isolated at the hardware level with its own HBM2, cache, and compute cores. You could have 4 x MIGs with 6GB HBM2 each, 2 x MIGs with 12GB HBM2 each, or a single MIG with the full 24GB of HBM2.
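The three MIG layouts above are simply equal splits of the card's 24GB of HBM2. A minimal sketch of that arithmetic follows; `mig_layouts` is a hypothetical helper for illustration, not an NVIDIA API, and it assumes only equal-sized splits that halve from the four-instance maximum.

```python
# Figures quoted in the article.
TOTAL_HBM2_GB = 24
MAX_INSTANCES = 4


def mig_layouts(total_gb=TOTAL_HBM2_GB, max_instances=MAX_INSTANCES):
    """Return (instance_count, gb_per_instance) for each equal split.

    Hypothetical helper: starts at the maximum instance count and
    halves it each step, which reproduces the 4/2/1 options above.
    """
    layouts = []
    count = max_instances
    while count >= 1:
        layouts.append((count, total_gb // count))
        count //= 2
    return layouts


for count, gb in mig_layouts():
    print(f"{count} x MIG with {gb}GB HBM2 each")
```

This only models the memory split described in the article; actually creating MIG instances is done through NVIDIA's own tooling, which is not shown here.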