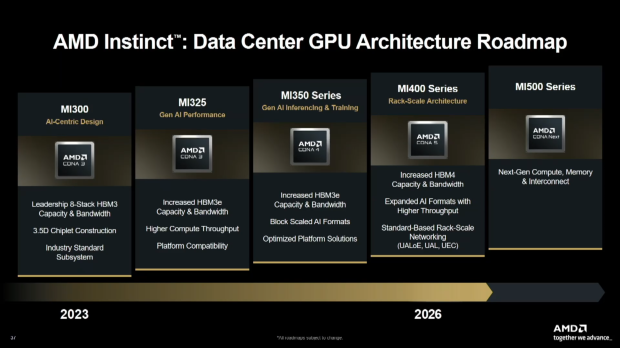

AMD has confirmed that its next-gen Instinct MI400 AI accelerators will arrive in 2026 on the new CDNA 5 architecture with a huge 432GB of HBM4 memory, where they will compete against NVIDIA's next-gen Vera Rubin AI platform.

During its Financial Analyst Day event, the company confirmed that the new MI400 series will come in multiple variants: the MI455X is for training and inference, while the MI430X is aimed at HPC.

AMD's new Instinct MI400 series features the new CDNA 5 architecture, huge amounts of ultra-fast HBM4 memory, more AI formats and higher throughput, and standards-based rack-scale networking (UALoE, UAL, and UEC).

AMD says that its new Instinct MI450 series is its "most advanced AI accelerator", with 40 PFLOPs of FP4 compute performance and 20 PFLOPs of FP8 compute performance, which works out to double the compute performance of the MI350 series.

The new Instinct MI450 series will also offer a 50% memory capacity upgrade over the MI350 series, with 432GB of next-gen HBM4 memory versus the 288GB of HBM3E on MI350.

AMD's new Instinct MI450 will have a huge 19.6TB/sec of memory bandwidth, which is more than double the 8TB/sec on the MI350 series. There's also 300GB/sec of scale-out bandwidth per GPU, so expect some big things when these chips arrive in AMD's next-gen Mega Pod systems.
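For readers who want to check the generational math, here is a minimal sketch that recomputes the memory uplifts from the figures quoted above. The dictionary layout and the `uplift` helper are illustrative names, not anything from AMD, and the numbers are only the ones stated in this article.

```python
# Figures quoted in this article; names and structure are illustrative only.
mi350 = {"memory_gb": 288, "bandwidth_tbs": 8.0}   # HBM3E
mi450 = {"memory_gb": 432, "bandwidth_tbs": 19.6}  # HBM4


def uplift(new, old):
    """Return the generational ratio new / old."""
    return new / old


memory_ratio = uplift(mi450["memory_gb"], mi350["memory_gb"])
bandwidth_ratio = uplift(mi450["bandwidth_tbs"], mi350["bandwidth_tbs"])

print(f"Memory capacity:  {memory_ratio:.2f}x")
print(f"Memory bandwidth: {bandwidth_ratio:.2f}x")
```

The capacity ratio matches the quoted 50% upgrade, and the bandwidth ratio comes out well above the "more than double" described in the text.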

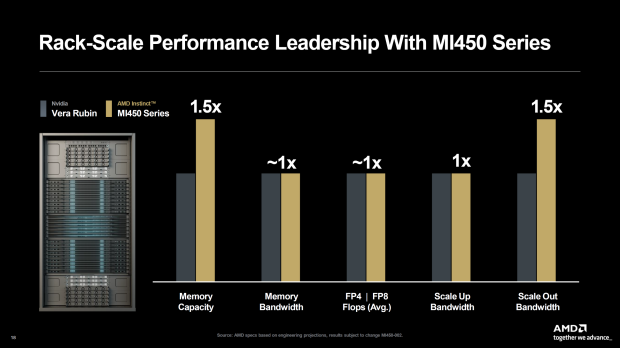

AMD is positioning its next-gen Instinct MI450 series GPUs directly against NVIDIA's next-gen Vera Rubin AI platform, and has published its own rack-scale performance comparison between the two. This is what that comparison looks like.

AMD Instinct MI450 series vs NVIDIA Vera Rubin rack-scale performance:

- 1.5x Memory Capacity vs Competition

- Same Memory Bandwidth vs Competition

- Same FP4 / FP8 FLOPs vs Competition

- Same Scale-Up Bandwidth vs Competition

- 1.5x Scale-Out Bandwidth vs Competition

However, AMD wasn't finished with its announcements and teases: it will launch its future-gen Instinct MI500 series in 2027, which will act like the "Ultra" versions of the AI GPUs that NVIDIA has been releasing. NVIDIA has its current-gen Blackwell GB200 and Blackwell Ultra GB300 AI chips, to be followed by its Rubin and beefed-up Rubin Ultra AI GPUs, with the Rubin Ultra chip reportedly running into thermal concerns at 2300W of power per chip.

According to recent rumors, AMD's next-gen Instinct MI450X AI accelerator "forced" NVIDIA to boost the TDP of its Rubin VR200 AI GPU. Rubin previously had an 1800W TGP, but was reportedly upgraded to 2300W to better compete against the MI450X and its 2300W TGP design, with AMD also recently upgrading the MI450X to 2500W.