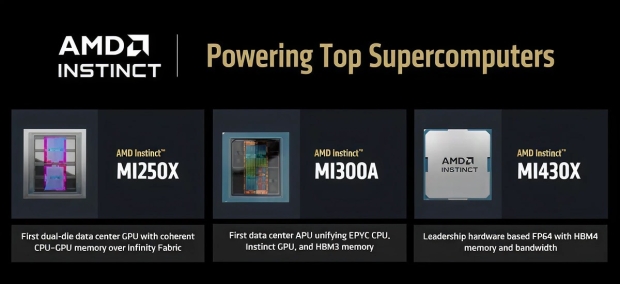

AMD has unveiled one of the first models in its next-gen Instinct MI400 AI GPU family: the Instinct MI430X, which is built for HPC system buildouts, is based on the new CDNA 5 architecture, and uses next-gen HBM4 memory.

In a new blog post, the company teased the Instinct MI430X, which is designed for large-scale AI environments, and shared some details on the new accelerator. The MI430X will feature the next-generation CDNA 5 architecture and 432GB of HBM4 memory with up to 19.6TB/sec of memory bandwidth.

AMD says its new MI430X is a "true successor" to the MI300A, with on-paper improvements over the older chip -- the new CDNA 5 architecture, more VRAM, and next-gen HBM4 with far more memory bandwidth -- making the MI430X ready for next-gen AI workloads.

AMD's new Instinct MI430X offers strong hardware-based FP64 capabilities, which is why the company is aiming it at HPC environments. Above the MI430X sits the upcoming MI455X AI GPU, which will go head-to-head with NVIDIA's next-gen Rubin AI GPU family in 2026.

Here's where AMD's new Instinct MI430X AI GPUs are set to be used so far:

- Discovery, at Oak Ridge National Laboratory, serves as one of the United States' first AI Factory supercomputers. Using AMD Instinct MI430X GPUs and next-gen AMD EPYC "Venice" CPUs on the HPE Cray GX5000 supercomputing platform, Discovery will enable U.S. researchers to train, fine-tune, and deploy large-scale AI models while advancing scientific computing across energy research, materials science, and generative AI.

- Alice Recoque, a recently announced exascale-class system in Europe, integrates AMD Instinct MI430X GPUs and next-gen AMD EPYC "Venice" CPUs using Eviden's newest BullSequana XH3500 platform to deliver exceptional performance for both double-precision HPC and AI workloads. The system's architecture leverages the GPUs' massive memory bandwidth and energy efficiency to accelerate scientific breakthroughs while meeting stringent energy efficiency goals.