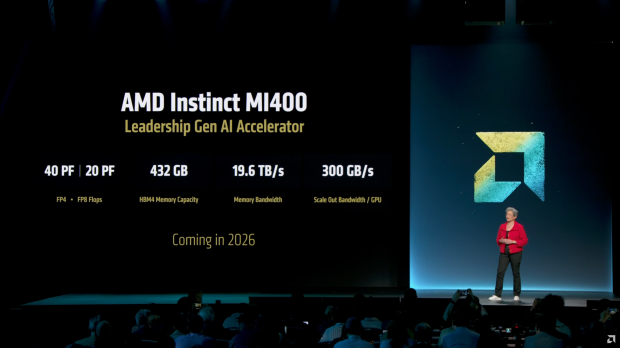

AMD has just teased its next-gen Instinct MI400 AI accelerator, which will double the AI compute performance over the just-announced MI350 series, with 50% more memory, and close to 2.5x the memory bandwidth thanks to the use of next-gen HBM4 memory.

The company shared fresh details on its next-gen Instinct MI400 series AI accelerator, which is rated at 40 PFLOPs (FP4) and 20 PFLOPs (FP8), double the AI compute of the new Instinct MI350 that was just launched today. The MI400 series will also boast 50% more memory capacity, with a huge 432GB of HBM4 memory compared to the 288GB of HBM3E on the MI350.

AMD's use of the new HBM4 standard will bring the company up to full competitiveness against NVIDIA, which will be using HBM4 on its upcoming Rubin R100 AI GPU. AMD's new Instinct MI400 AI chip and its 432GB of HBM4 will offer a huge 19.6TB/sec of memory bandwidth, up from just 8TB/sec on the MI350 series. The new AI GPU will also sport 300GB/sec of scale-out bandwidth per GPU, so we should expect big things from MI400 in 2026 as it battles Rubin R100 in the HBM4-powered AI fight.
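For readers who want to check the generational math, here is a minimal sketch that recomputes the memory uplifts from the figures quoted above. The dictionary layout and the `uplift` helper are illustrative names of our own, not anything AMD publishes, and the numbers are simply the ones reported in this article.

```python
# Figures as quoted in the article; the structure and names are illustrative.
MI350 = {"memory_gb": 288, "bandwidth_tb_per_sec": 8.0}
MI400 = {"memory_gb": 432, "bandwidth_tb_per_sec": 19.6}


def uplift(new: float, old: float) -> float:
    """Return the generational multiplier (new divided by old)."""
    return new / old


memory_uplift = uplift(MI400["memory_gb"], MI350["memory_gb"])
bandwidth_uplift = uplift(
    MI400["bandwidth_tb_per_sec"], MI350["bandwidth_tb_per_sec"]
)

print(f"Memory capacity:  {memory_uplift:.2f}x")     # 1.50x, i.e. 50% more
print(f"Memory bandwidth: {bandwidth_uplift:.2f}x")  # 2.45x, i.e. close to 2.5x
```

The 432GB figure works out to exactly 1.5x the MI350's 288GB, and 19.6TB/sec over 8TB/sec lands at 2.45x, which is where the "close to 2.5x" bandwidth claim comes from.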

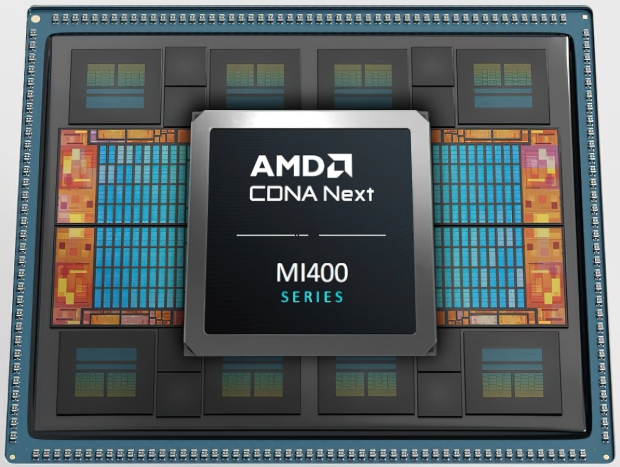

AMD's new Instinct MI400 AI accelerator will feature up to 4 x XCDs (Accelerator Complex Dies) per AID, which is double the XCD count of MI300 (2 x XCDs per AID). We should also expect two AIDs (Active Interposer Dies) on the Instinct MI400 series, as well as separate Multimedia and I/O dies.

Each of the AIDs has a dedicated MID tile, offering more efficient communication between the compute units and I/O interfaces than on AMD's previous-gen Instinct AI accelerators.

AMD will launch its next-gen Instinct MI400 series AI accelerators in 2026 and we'll be keeping an eye on them until then.