NVIDIA revised the spec for its Vera Rubin VR200 NVL72 AI server at CES 2026 a couple of weeks ago, raising HBM4 memory bandwidth by 10% so that it stays ahead of AMD's upcoming Instinct MI455X AI accelerator.

According to a new post on X from @SemiAnalysis, the updated Vera Rubin NVL72 AI server specs now list HBM4 memory bandwidth at 22.2TB/sec per GPU, a 10% increase over the specs NVIDIA disclosed at GTC 2025 last year.

The original 13TB/sec of HBM4 memory bandwidth in the Vera Rubin NVL144 specs unveiled last year was already impressive. NVIDIA initially asked for 9Gbps per pin from HBM4, then pushed for a faster 10-11Gbps. So why did NVIDIA upgrade the HBM4 bandwidth on Vera Rubin?
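To see how per-pin speed turns into a headline bandwidth number, here is a minimal Python sketch of peak bandwidth = stacks × interface width × per-pin rate. It assumes eight HBM4 stacks per Rubin GPU and JEDEC HBM4's 2,048-bit interface per stack (neither figure comes from the post above) and uses decimal terabytes. These are theoretical peak numbers, not sustained bandwidth.

```python
# Peak per-GPU HBM bandwidth from the per-pin data rate.
# Assumptions (not from NVIDIA's spec sheet): 8 HBM4 stacks per GPU,
# a 2048-bit interface per stack (JEDEC HBM4), decimal TB (1e12 bytes).

HBM4_BUS_WIDTH_BITS = 2048  # data pins per HBM4 stack


def peak_bandwidth_tbps(stacks: int, pin_gbps: float,
                        bus_bits: int = HBM4_BUS_WIDTH_BITS) -> float:
    """Return peak bandwidth in TB/s: stacks * bus width * pin rate, bits -> bytes."""
    bits_per_second = stacks * bus_bits * pin_gbps * 1e9
    return bits_per_second / 8 / 1e12


# Sweep the pin speeds mentioned above for a hypothetical 8-stack GPU.
for pin in (9.0, 10.0, 11.0):
    print(f"{pin:>4} Gbps/pin -> {peak_bandwidth_tbps(8, pin):.1f} TB/s")
```

Under those assumptions, 10-11Gbps per pin works out to roughly 20.5-22.5TB/sec, which brackets the new 22.2TB/sec figure.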

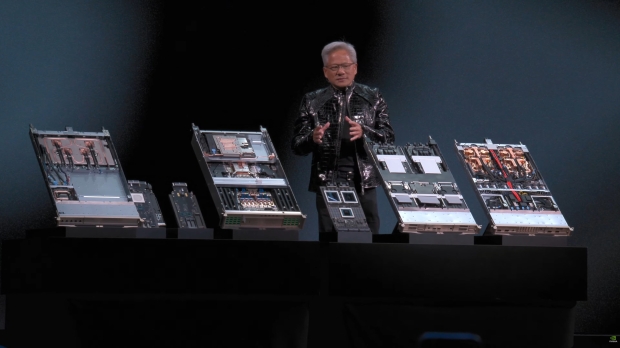

NVIDIA uses eight HBM4 stacks per Vera Rubin GPU, and with fewer stacks each one has to run faster to reach the same total bandwidth. That is why NVIDIA has been pushing HBM makers to exceed JEDEC's HBM4 rating and raise pin speeds to 11Gbps. AMD, on the other hand, uses 12 HBM4 stacks on the MI455X for 19.6TB/sec of memory bandwidth, so NVIDIA needed that extra 10%, now at 22.2TB/sec, to stay ahead with its upcoming Vera Rubin NVL72 AI servers.
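Stack height (8-Hi vs 12-Hi) sets capacity, not bandwidth. Bandwidth depends on how many stacks a GPU has and how fast each pin runs. This minimal Python sketch works backwards from the two headline figures to the per-pin rate each GPU implies. It assumes a 2,048-bit HBM4 interface per stack, eight stacks on Rubin and 12 on MI455X; the stack counts are assumptions, not figures from the post.

```python
# Implied per-pin data rate from a headline bandwidth figure and a stack count.
# Assumptions: 2048-bit HBM4 interface per stack; 8 stacks on Rubin,
# 12 stacks on MI455X; decimal TB/s; figures are peak, not sustained.

HBM4_BUS_WIDTH_BITS = 2048


def implied_pin_gbps(bandwidth_tbps: float, stacks: int,
                     bus_bits: int = HBM4_BUS_WIDTH_BITS) -> float:
    """Per-pin rate (Gbps) needed to reach bandwidth_tbps with the given stack count."""
    bits_per_second = bandwidth_tbps * 1e12 * 8  # bytes/s -> bits/s
    return bits_per_second / (stacks * bus_bits) / 1e9


rubin = implied_pin_gbps(22.2, stacks=8)    # assumed 8 stacks
mi455x = implied_pin_gbps(19.6, stacks=12)  # assumed 12 stacks
lead = (22.2 / 19.6 - 1) * 100              # Rubin's paper lead, in percent

print(f"Rubin:  {rubin:.2f} Gbps/pin")
print(f"MI455X: {mi455x:.2f} Gbps/pin")
print(f"Rubin bandwidth lead: {lead:.1f}%")
```

Under these assumptions, Rubin needs roughly 10.8Gbps per pin, above JEDEC's 8Gbps HBM4 baseline, while MI455X reaches its figure at roughly 6.4Gbps. That leaves Rubin about 13% ahead on paper.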