Introduction

I've spent over a week re-benching my entire stack of silicon with CD PROJEKT RED's ambitious game, Cyberpunk 2077, now that its new v1.5 update is here, bringing with it... an awesome built-in benchmark.

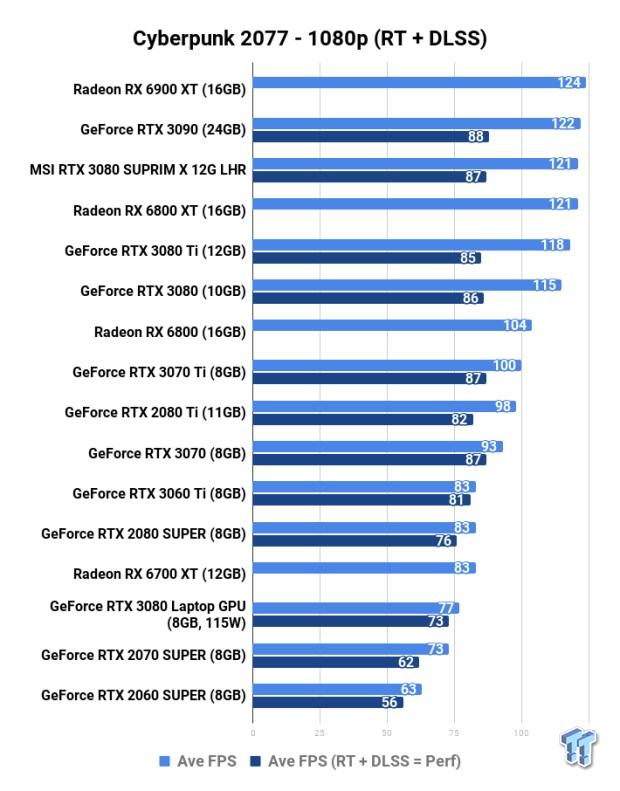

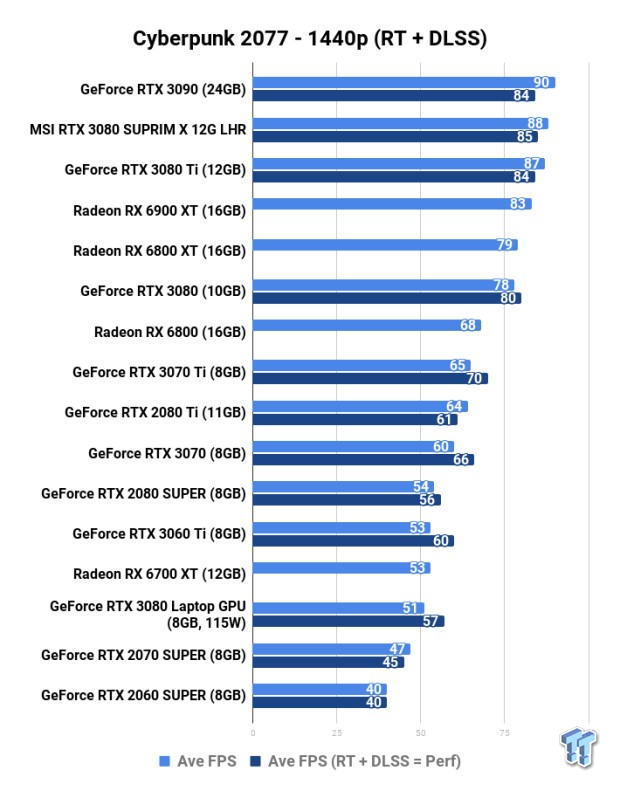

The initial wave of benchmarking saw me concentrate on raw performance, with neither ray tracing (RT) nor DLSS (Deep Learning Super Sampling) enabled. But with a lot more time, and a lot more numbers, I now have data on NVIDIA's flock of Turing and Ampere GPUs with both RT and DLSS enabled at 1080p, 1440p, and 4K.

Cyberpunk 2077 is one of the best-looking games you can play on the PC, especially when you've got the hardware grunt to dial everything up to the max and run it at 4K. But even if you do... the fastest CPU and GPU on the planet can't run Cyberpunk 2077 at native 4K and 60FPS. Nope, no way.

NVIDIA has some black magik that it calls DLSS, and Cyberpunk 2077 features DLSS 2.3 with some truly impressive results. DLSS frees up enough performance that you can enable RT alongside it and enjoy a better-looking Cyberpunk 2077 that still runs faster.

But now I'm back, and I have an arsenal of data to go through... so let's dive right into it.

Graphics Settings

I've used the same graphics settings as in my original article, but this time I've enabled ray tracing (on its "Psycho" setting) and run the NVIDIA GPUs through DLSS in both "Quality" and "Performance" modes. For the ray tracing benchmarks, DLSS is set to "Performance".

Test System Specs

Anthony's GPU Test System Specifications

The biggest upgrade to the GPU testbed is the AMD Ryzen 9 5900X processor, offering 12 cores and 24 threads of Zen 3-powered CPU grunt at up to 4.8GHz.

That's plenty of CPU power and a great upgrade over the Ryzen 7 3800X that I was using previously.

I will be upgrading this system in a few months, and may run it side-by-side with the new Alder Lake-powered Intel Core i9-12900K processor. I'm using one inside the Allied M.O.A.B.-I gaming PC that I reviewed a few months ago, and man, the 12900K is like the Godzilla of CPUs.

Sabrent is the most recent partner of mine to help build out my systems, sending me oodles of the fastest NVMe M.2 SSDs on the planet. I'm using Sabrent's flagship Rocket 4 Plus 4TB M.2 SSD, which offers 7GB/sec+ reads and writes and a huge 4TB of capacity.

ASUS has been a close partner of mine for a few years now, providing its huge 43-inch 4K 120Hz gaming monitors for my benchmarking and gaming needs. I'm using two of them at the moment: the ROG Strix XG438Q and the ROG Swift PG43UQ.

- CPU: AMD Ryzen 9 5900X

- Motherboard: ASUS ROG X570 Crosshair VIII HERO

- Cooler: CoolerMaster MasterLiquid ML360R RGB

- RAM: G.SKILL Trident Z NEO RGB 32GB (4x8GB) (F4-3600C18Q-32GTZN)

- SSD: Sabrent 4TB Rocket NVMe PCIe 4.0 M.2 2280

- PSU: be quiet! Dark Power Pro 11 1200W

- Case: InWin X-Frame 2.0

- OS: Microsoft Windows 11 Pro x64

- Display: ASUS ROG Swift PG43UQ (4K 120Hz)

Benchmarks - 1080p

Benchmarks - 1440p

Benchmarks - 4K

Final Thoughts

Let's face it: you want the GeForce RTX 3080 Ti or GeForce RTX 3090 for the ultimate Cyberpunk 2077 experience. CD PROJEKT RED has molded an absolute masterpiece, one that is amplified by ray tracing and given a big performance boost by DLSS, which is available only on GeForce RTX graphics cards.

4K 60FPS with ray tracing in a game as good-looking as Cyberpunk 2077 is about as good as it gets on the PC, and a game like Cyberpunk 2077 doesn't need much more than 60FPS to feel smooth. Sure, 120FPS is the goal for PC gaming (at least IMO, as an enthusiast), but a 60FPS minimum and maybe a 90FPS average in a game like this is perfect.

But then you add in NVIDIA's kick-ass DLSS technology... and you're gaining roughly another 20% average performance at 4K, even with RT enabled. NVIDIA's flagship GeForce RTX 3090 (well, for the next few days, until the RTX 3090 Ti launches) manages only 47FPS average at 4K without RT and without DLSS.

With both RT (all dials maxed out) and DLSS in Performance mode enabled, the RTX 3090 gains another 10FPS, reaching 57FPS average. That's with ray tracing enabled, but DLSS more than offsets that extra load on the GPU and injects a great dose of performance.
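As a quick sanity check on that roughly 20% figure, here is the RTX 3090's 4K uplift worked out from the two averages quoted here: 47FPS (no RT, no DLSS) and 57FPS (RT + DLSS Performance). It's simple arithmetic on these numbers only, not an additional benchmark result.

$$
\text{uplift}_{4K} = \frac{57 - 47}{47} \times 100\% = \frac{10}{47} \times 100\% \approx 21.3\%
$$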

The same thing happens across the board, but it's much more noticeable on the GeForce RTX 3080, RTX 3080 12GB, and RTX 3080 Ti. Enabling DLSS alongside ray tracing delivers both more performance and better graphics. It's a win-win.

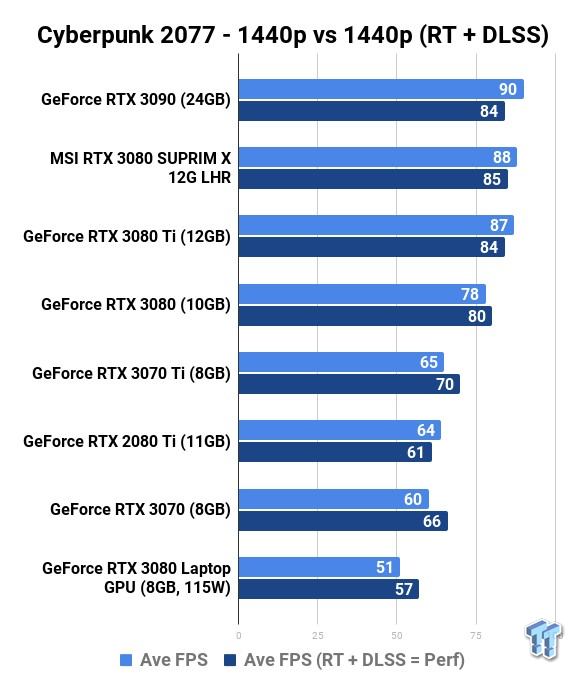

If you want to leap over a 60FPS average, you can drop down to 1440p and still keep RT enabled, although DLSS isn't helping as much here. All of NVIDIA's higher-end graphics cards (the GeForce RTX 3080, RTX 3080 12GB, RTX 3080 Ti, and RTX 3090) are capable of 80FPS average without RT or DLSS.

Enabling both RT and DLSS doesn't do much here, with the RTX 3090 only moving from 84FPS to 90FPS average. You can't complain about that, though: once again, ray tracing is enabled here, so Cyberpunk 2077 looks even better, and DLSS still delivers not just the same but even better performance at 1440p.
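For comparison with 4K, the RTX 3090's 1440p gain works out as follows, using only the 84FPS and 90FPS averages quoted here:

$$
\text{uplift}_{1440p} = \frac{90 - 84}{84} \times 100\% = \frac{6}{84} \times 100\% \approx 7.1\%
$$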