Google has developed three AI compression algorithms designed to reduce the memory footprint of large language models without sacrificing performance or quality. In a post on Google Research, the company describes the technology as a way to shrink an AI model's working memory, known as the key-value (KV) cache, using a form of vector quantization.
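
Google's post covers the math in detail. The basic idea of quantizing a KV cache can be illustrated with a much simpler technique: each cached vector is stored as a few low-bit integers plus an offset and a scale. The sketch below is a plain per-vector uniform quantizer. It is not TurboQuant, PolarQuant, or any of Google's algorithms, and the function names, the 128-dimension key, and the 3-bit setting are hypothetical choices made for illustration.

```python
import random

def quantize(vec, bits):
    """Map each float to an integer code in [0, 2**bits - 1] (uniform min-max quantizer)."""
    lo, hi = min(vec), max(vec)
    levels = 2 ** bits - 1
    scale = (hi - lo) / levels if hi > lo else 1.0  # width of one quantization step
    codes = [round((x - lo) / scale) for x in vec]
    return codes, lo, scale

def dequantize(codes, lo, scale):
    """Reconstruct approximate floats from the integer codes."""
    return [lo + c * scale for c in codes]

random.seed(0)
key = [random.gauss(0.0, 1.0) for _ in range(128)]  # hypothetical 128-dim key vector
codes, lo, scale = quantize(key, bits=3)
approx = dequantize(codes, lo, scale)
# Rounding to the nearest level keeps every error within half a step.
max_err = max(abs(a - b) for a, b in zip(key, approx))
print(f"max abs error: {max_err:.3f} (bound: {scale / 2:.3f})")
```

At 3 bits per value, the 128 codes take 48 bytes (plus the stored offset and scale) instead of 256 bytes at 16-bit precision. Schemes like Google's aim to get this kind of saving while losing far less accuracy than a naive quantizer like this one.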

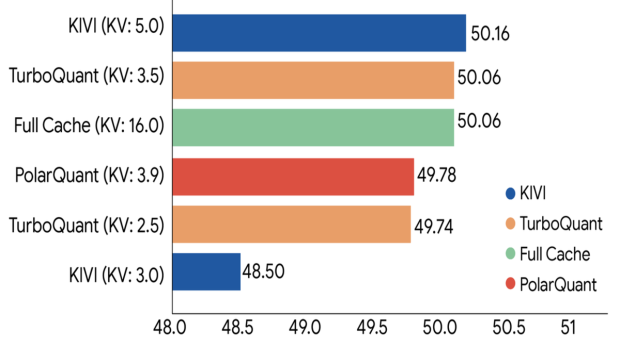

The company plans to present its findings, along with the three algorithms behind them (TurboQuant, PolarQuant, and Quantized Johnson-Lindenstrauss), at the ICLR 2026 conference next month.
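
As its name suggests, Quantized Johnson-Lindenstrauss (QJL) combines a Johnson-Lindenstrauss random projection with quantization. The sketch below shows a general version of that idea under stated assumptions: a Gaussian projection matrix, with only the sign bit of each projected key coordinate kept. The inner product between a full-precision query and the compressed key can then be estimated from those bits and the key's norm. This is a generic illustration with hypothetical names and sizes, not Google's implementation.

```python
import math
import random

def make_projection(m, d, seed=0):
    """Random Gaussian Johnson-Lindenstrauss matrix: m rows, each of dimension d."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(d)] for _ in range(m)]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def encode_key(S, k):
    """Keep only one sign bit per projected coordinate, plus the key's norm."""
    signs = [1 if dot(row, k) >= 0 else -1 for row in S]
    return signs, math.sqrt(dot(k, k))

def estimate_inner_product(S, q, signs, k_norm):
    """Estimate <q, k> from the key's sign bits; the query stays at full precision.

    For Gaussian rows s, E[sign(s.k) * (s.q)] = sqrt(2/pi) * <q, k> / ||k||,
    so rescaling the average by sqrt(pi/2) * ||k|| gives an unbiased estimate.
    """
    m = len(S)
    total = sum(sgn * dot(row, q) for sgn, row in zip(signs, S))
    return math.sqrt(math.pi / 2) * k_norm * total / m

rng = random.Random(1)
d, m = 128, 256                                  # hypothetical head dim and sketch size
k = [rng.gauss(0.0, 1.0) for _ in range(d)]      # a cached key vector
q = [x + 0.3 * rng.gauss(0.0, 1.0) for x in k]   # a query correlated with that key
S = make_projection(m, d)
signs, k_norm = encode_key(S, k)
exact = dot(q, k)
approx = estimate_inner_product(S, q, signs, k_norm)
print(f"exact {exact:.1f}, estimate {approx:.1f}")
```

Here the key is stored as 256 sign bits (32 bytes) plus one norm, compared with 256 bytes for 128 16-bit values. A larger projection size `m` tightens the estimate at the cost of more bits.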

TurboQuant would let AI models hold more information in memory while taking up less space and maintaining accuracy. The Google Research article goes into much more detail on how the compression works, but the results are the exciting part.

Google evaluated all three algorithms across a range of standard long-context benchmarks, including LongBench, Needle in a Haystack, ZeroSCROLLS, RULER, and L-Eval, using the open-source Gemma and Mistral LLMs. The results show that TurboQuant could make AI cheaper to run, shrinking the KV cache's memory footprint by "at least 6x" while maintaining strong performance across the board.

This is good news, but not for RAM prices. Shrinking this working memory doesn't mean AI data centers will need fewer resources overall. Instead, the aim is to cut the memory overhead of the KV cache in LLMs. This cache stores conversational context as users interact with AI chatbots, and it grows as a conversation gets longer.
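
A back-of-the-envelope calculation shows why the KV cache becomes a problem as conversations get longer: for every token in the context, each layer stores one key vector and one value vector per KV head. The sketch below assumes a hypothetical model configuration (32 layers, 8 KV heads, head dimension 128, 16-bit values), not the specs of Gemma, Mistral, or any other real model. It applies Google's reported "at least 6x" reduction as a simple divisor.

```python
def kv_cache_bytes(tokens, layers=32, kv_heads=8, head_dim=128, bytes_per_value=2):
    """KV cache size: one key and one value vector per token, per KV head, per layer.

    The default dimensions are hypothetical, chosen only for illustration.
    """
    return 2 * layers * kv_heads * head_dim * tokens * bytes_per_value

GIB = 1024 ** 3
for tokens in (4_096, 32_768, 131_072):
    full = kv_cache_bytes(tokens)
    compressed = full / 6  # the reported "at least 6x" reduction, applied as a flat divisor
    print(f"{tokens:>7} tokens: {full / GIB:5.2f} GiB -> {compressed / GIB:5.2f} GiB")
```

With these assumptions, each token costs 128 KiB, so a 131,072-token context needs 16 GiB of cache before compression and about 2.67 GiB after a 6x cut. The cache grows linearly with context length, which is why long conversations hit memory limits first.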

Shrinking the cache reduces the memory needed for AI inference workloads, making it easier for LLMs to run on consumer smartphones or mid-range laptops. It's similar to how DeepSeek R1 was so efficient that it could run on a single GPU. Since TurboQuant targets inference memory rather than training, where the real hardware crunch is happening, it won't ease the broader RAM shortage driven by AI development. At least not directly.

There's also a less comfortable angle to consider. Agentic AI systems, which can perform tasks autonomously, are just around the corner. If compression tech like this lets those systems run on lower-spec hardware, it could significantly accelerate the AI push. More deployment means more demand for training new models, which loops back into more pressure on the memory supply, not less. In other words, a more efficient inference method like this one could end up driving overall memory demand higher in the long run.

That said, TurboQuant is still a lab result and hasn't been broadly deployed. For now, the broader hardware crunch shows no signs of easing, with AI data centers already straining CPU supply and forcing Intel and AMD to raise CPU prices by up to 15%.