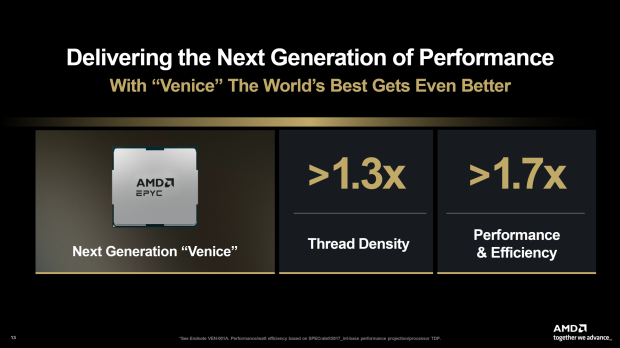

AMD will launch its next-generation Zen 6-based EPYC "Venice" CPUs in 2026, promising a huge 70% leap in performance and efficiency over the current-gen Zen 5 EPYC chips, 30% more thread density, and more.

AMD's upcoming Zen 6 EPYC "Venice" CPUs will be fabbed on TSMC's new bleeding-edge 2nm (N2P) process node, with up to 256 cores and 512 threads (256C/512T). According to AMD's official slides, EPYC Venice CPUs will deliver 70% more performance and efficiency, and a 30% improvement in thread density.

Zen 6 EPYC Venice CPUs will pack up to 256C/512T of processing power, a 33.3% increase in core count over Zen 5 EPYC Turin, which tops out at 192C/384T. On top of that, Zen 6 brings its own optimizations and improvements, and it's fabbed on TSMC's latest 2nm process node. The entire Zen 6 family of processors -- Olympic Ridge for desktop, Medusa for laptops -- is fabbed on TSMC N2P.
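The 33.3% figure follows directly from the two core counts. A minimal sketch of that arithmetic is below; the helper name `percent_increase` is purely illustrative and not taken from any AMD material.

```python
def percent_increase(old: float, new: float) -> float:
    """Return the relative increase from old to new, in percent."""
    return (new - old) / old * 100.0


# Top-end core counts quoted in the article.
turin_cores, venice_cores = 192, 256

core_uplift = percent_increase(turin_cores, venice_cores)
# Both chips run two threads per core, so the thread uplift is identical.
thread_uplift = percent_increase(turin_cores * 2, venice_cores * 2)

print(f"Core count uplift:   {core_uplift:.1f}%")
print(f"Thread count uplift: {thread_uplift:.1f}%")
```

Because both parts run two threads per core, the thread count grows by the same ratio as the core count.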

A 70% gain in performance and efficiency would be a great feat for AMD, with the improvements coming from a variety of factors. AMD is expected to combine IPC gains, higher clock rates, and architectural improvements with TSMC's bleeding-edge 2nm process node, which moves from FinFET to nanosheet gate-all-around (GAA) transistors and offers 10-15% more performance at the same power, 25-30% lower power consumption at the same performance, and up to 15% higher transistor density.
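To get a rough feel for how those factors might stack up, the sketch below splits the 70% headline figure into a core-count factor and a leftover per-core factor, and converts the node's quoted power savings into a performance-per-watt multiplier. It is a back-of-the-envelope illustration, not AMD's methodology. It assumes the 70% claim refers to throughput on the top 256-core part and that throughput scales linearly with core count. Neither assumption is confirmed by AMD's slides, and both function names are hypothetical.

```python
def implied_per_core_gain(total_gain: float, old_cores: int, new_cores: int) -> float:
    """Divide a total throughput multiplier by the core-count ratio.

    ASSUMPTION: throughput scales linearly with core count, which real
    workloads rarely achieve; treat the result as a rough estimate only.
    """
    core_factor = new_cores / old_cores
    return total_gain / core_factor


def perf_per_watt_gain(power_reduction: float) -> float:
    """Perf-per-watt multiplier when power drops by `power_reduction`
    (a fraction, e.g. 0.25) at unchanged performance."""
    return 1.0 / (1.0 - power_reduction)


# 1.70x total gain spread over 192 -> 256 cores (figures from the article).
per_core = implied_per_core_gain(1.70, 192, 256)

# The quoted 25-30% power saving at the same performance.
node_low = perf_per_watt_gain(0.25)
node_high = perf_per_watt_gain(0.30)

print(f"Implied per-core multiplier: {per_core:.3f}x")
print(f"Node perf/W multiplier: {node_low:.2f}x to {node_high:.2f}x")
```

Under these assumptions, the extra cores alone would not cover the 70% claim, so the remainder would have to come from IPC, clocks, architecture, and the process node.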

AMD won't just be launching its next-gen EPYC "Venice" CPUs: it will launch its next-gen Instinct MI400 AI accelerator series alongside them. During AMD's recent Q3 2025 earnings call, CEO Lisa Su said that the new MI400 series AI accelerators combine a new compute engine with industry-leading memory capacity -- 432GB of next-gen HBM4 memory and 19.6TB/sec of bandwidth -- and advanced networking capabilities to "deliver a major leap in performance for the most demanding AI training and inference workloads".