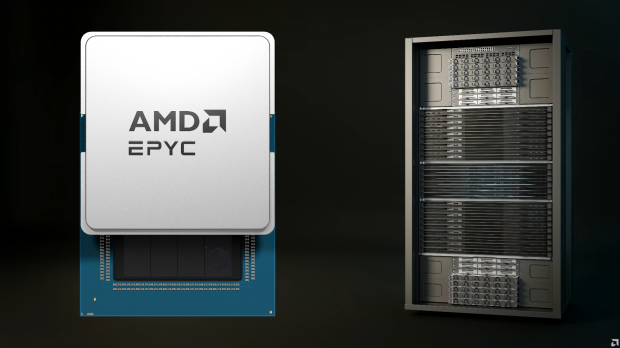

AMD has just shown off its next-gen EPYC "Venice" CPU, a world-first 2nm chip with Zen 6 cores, alongside its Instinct MI455X AI accelerator, both ready for its next-gen Helios AI racks.

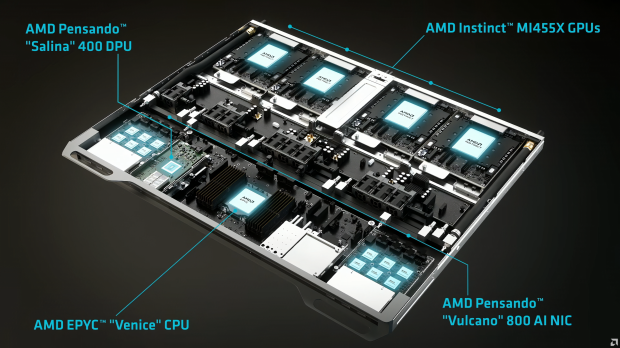

The company unveiled its new Helios AI rack at its recent Financial Analysts Day 2025, teasing more performance numbers and promising class-leading performance and efficiency for future AI workloads. The new AMD Helios AI rack features a fully liquid-cooled design with 4 x Instinct MI455X AI GPUs and a single Zen 6-based EPYC "Venice" CPU.

Helios AI racks use AMD's new Pensando "Salina" 400 DPU and Pensando "Vulcano" 800 AI NIC for networking and interconnection. AMD's next-gen EPYC "Venice" CPUs come with up to 256 cores based on the Zen 6c architecture, and each Instinct MI455X AI GPU packs a ton of GPU cores and next-gen HBM4 memory.

AMD can scale its Helios AI rack to up to 2.9 exaflops of AI compute power, 31TB of total HBM4 memory, 43TB/s of scale-out bandwidth, up to 4,600 CPU cores, and over 18,000 GPU cores.

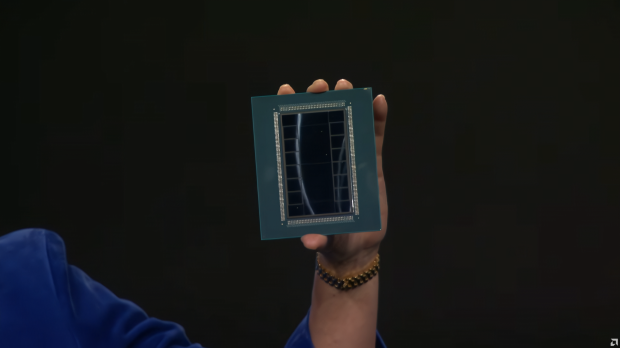

The photo above shows the Instinct MI455X chip, which is absolutely huge: two gigantic GCDs (Graphics Compute Dies) and two MCDs (Memory Controller Dies), with 16 x HBM4 sites. Its size really comes across when you see it in someone's hand, in this case that of AMD boss Dr. Lisa Su.

AMD has positioned its new Instinct MI400 series against NVIDIA's upcoming Vera Rubin AI platform, with the MI450X series AI accelerators featuring up to 40 PFLOPs of FP4 and 20 PFLOPs of FP8 compute performance, 432GB of HBM4 memory with up to 19.6TB/s of bandwidth per chip, 3.6TB/s of scale-up bandwidth, and 300GB/s of scale-out bandwidth.
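
As a rough sanity check, the rack-level totals can be divided by the per-chip figures to see how many GPUs and CPUs a fully scaled Helios rack would imply. The sketch below is back-of-the-envelope arithmetic only, not an AMD-published breakdown. It assumes the 2.9 exaflops figure refers to FP4, that every CPU is a 256-core part, and that the units are decimal (1TB = 1,000GB); the function and constant names are made up for illustration.

```python
# Figures quoted in the article (vendor peak numbers, not measurements).
RACK_AI_EXAFLOPS = 2.9   # assumed here to be FP4; the article does not say
RACK_HBM4_TB = 31
RACK_CPU_CORES = 4600
GPU_FP4_PFLOPS = 40
GPU_HBM4_GB = 432
CPU_MAX_CORES = 256      # assumes every CPU is the top 256-core part


def implied_counts():
    """Return (GPUs implied by compute, GPUs implied by memory, CPUs implied by cores)."""
    # Decimal units assumed: 1 exaflop = 1000 petaflops, 1 TB = 1000 GB.
    gpus_from_compute = RACK_AI_EXAFLOPS * 1000 / GPU_FP4_PFLOPS
    gpus_from_memory = RACK_HBM4_TB * 1000 / GPU_HBM4_GB
    cpus_from_cores = RACK_CPU_CORES / CPU_MAX_CORES
    return gpus_from_compute, gpus_from_memory, cpus_from_cores


if __name__ == "__main__":
    by_compute, by_memory, cpus = implied_counts()
    print(f"GPUs implied by compute: {by_compute:.1f}")
    print(f"GPUs implied by memory:  {by_memory:.1f}")
    print(f"CPUs implied by cores:   {cpus:.1f}")
    print(f"GPUs per CPU (approx):   {by_memory / cpus:.1f}")
```

Under those assumptions, both the compute and memory totals point to roughly 72 GPUs and about 18 CPUs, or around four GPUs per CPU, which lines up with the 4 x MI455X plus one "Venice" CPU configuration described earlier.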