NVIDIA's H100 Tensor Core GPUs have set new records in the latest round of the industry-standard MLPerf benchmarks, flexing their AI muscle once again.

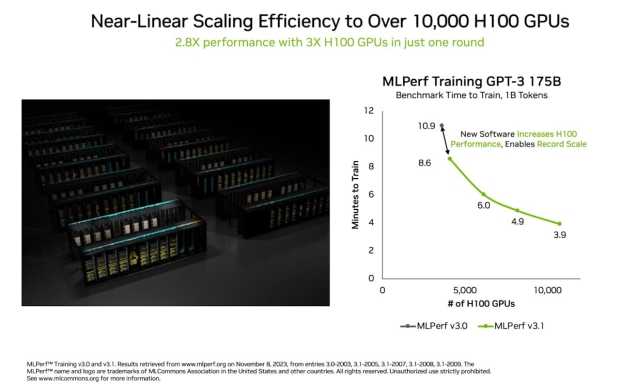

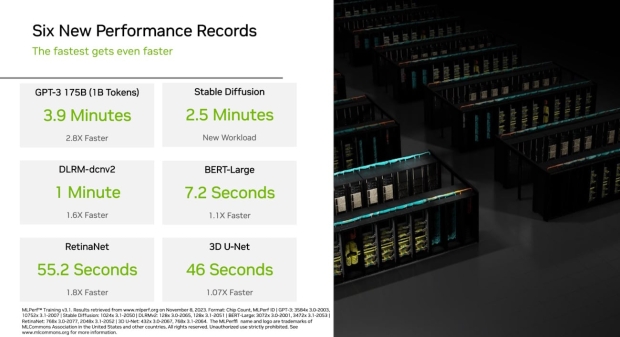

The NVIDIA Eos AI supercomputer packs an incredible 10,752 NVIDIA H100 Tensor Core GPUs linked by NVIDIA's Quantum-2 InfiniBand networking. It has just completed a training benchmark based on the GPT-3 model with 175 billion parameters, trained on 1 billion tokens, in just 3.9 minutes. That's nearly 3x faster than the previous world record of 10.9 minutes, which NVIDIA itself set. That's a full 7 minutes shaved off.
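For readers who want to check the "nearly 3x" figure, here is a minimal sketch of the arithmetic using only the two times quoted above. The function and variable names are made up for illustration and are not part of MLPerf or any NVIDIA tooling.

```python
# Times quoted in the article, in minutes (GPT-3 175B MLPerf training benchmark).
PREVIOUS_RECORD_MIN = 10.9
NEW_RECORD_MIN = 3.9


def speedup(old_minutes: float, new_minutes: float) -> float:
    """Return how many times faster the new run is than the old one."""
    return old_minutes / new_minutes


ratio = speedup(PREVIOUS_RECORD_MIN, NEW_RECORD_MIN)
minutes_saved = PREVIOUS_RECORD_MIN - NEW_RECORD_MIN

print(f"Speedup: {ratio:.2f}x")                   # Speedup: 2.79x
print(f"Time saved: {minutes_saved:.1f} minutes")  # Time saved: 7.0 minutes
```

A ratio of about 2.79x is what the article rounds to "nearly 3x".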

The MLPerf benchmark uses a portion of the full GPT-3 data set behind the super-popular ChatGPT service. NVIDIA is teasing that its Eos AI supercomputer could train on the full data set in just 8 days, which is a mind-blowing 73x faster than the previous state-of-the-art system powered by 512 NVIDIA A100 GPUs. The huge speed-up in training time means reduced costs, energy savings, and a faster time-to-market for companies using NVIDIA H100 AI GPUs for their AI products.

NVIDIA also highlights a new generative AI test in which 1,024 NVIDIA H100 GPUs completed a training benchmark based on the Stable Diffusion text-to-image model in just 2.5 minutes, which sets a "high bar" on this new workload, according to NVIDIA.

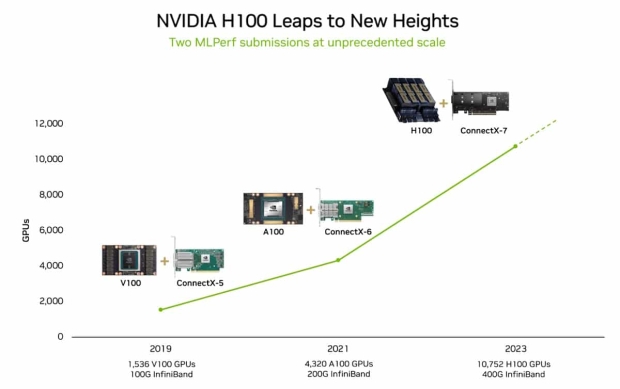

Before this new record-setting benchmark, the previous MLPerf record was set with 3,584 NVIDIA H100 AI GPUs, while the new record uses way more AI silicon with 10,752 NVIDIA H100 GPUs, a 3x increase. That 3x increase in GPU count delivers a 2.8x increase in performance, which works out to a 93% scaling efficiency. NVIDIA says this is thanks partly to software optimizations.
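The 93% figure follows from dividing the performance gain by the increase in GPU count. Below is a rough sketch of that calculation, assuming the 10.9-minute and 3.9-minute GPT-3 times reported earlier in this article correspond to the 3,584-GPU and 10,752-GPU runs. The helper name `scaling_efficiency` is hypothetical and not an MLPerf or NVIDIA API.

```python
def scaling_efficiency(gpus_before: int, gpus_after: int,
                       minutes_before: float, minutes_after: float):
    """Return (GPU ratio, performance ratio, efficiency) for two runs.

    Efficiency is the achieved speedup divided by the ideal (linear)
    speedup you would get if performance grew exactly with GPU count.
    """
    gpu_ratio = gpus_after / gpus_before
    perf_ratio = minutes_before / minutes_after
    return gpu_ratio, perf_ratio, perf_ratio / gpu_ratio


# Figures quoted in the article; pairing times with GPU counts is an assumption.
gpu_ratio, perf_ratio, efficiency = scaling_efficiency(3584, 10752, 10.9, 3.9)

print(f"GPU increase:  {gpu_ratio:.1f}x")   # GPU increase:  3.0x
print(f"Perf increase: {perf_ratio:.1f}x")  # Perf increase: 2.8x
print(f"Efficiency:    {efficiency:.0%}")   # Efficiency:    93%
```

Perfectly linear scaling would give 100%, so 93% means very little of the extra hardware is lost to communication and other overhead.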

Efficient scaling is very important for generative AI because LLMs (large language models) are growing by an order of magnitude every year, and NVIDIA is the only company in the world with AI GPUs that are up to the task of meeting the insatiable AI GPU demand.

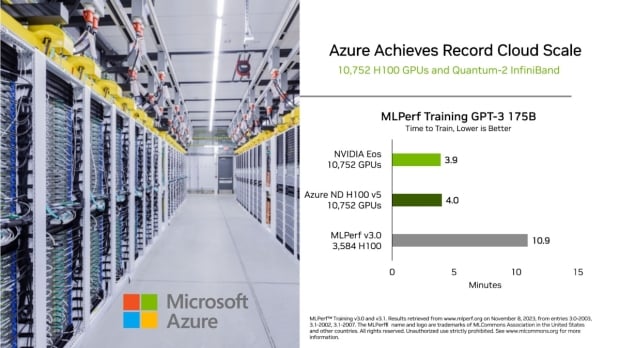

The new world-record MLPerf result is thanks to a full-stack platform of innovations in accelerators, systems and software that both NVIDIA's Eos AI supercomputer and Microsoft Azure used in the latest round. Microsoft's new Azure supercomputer is powered by the same amount of AI hardware, with 10,752 NVIDIA H100 AI GPUs inside.

Now that there are two H100 AI GPU deployments at the 10,752 GPU milestone, the two systems can be compared directly on MLPerf Training GPT-3 175B. The NVIDIA Eos AI supercomputer hits 3.9 minutes, while the Microsoft Azure ND H100 v5 AI supercomputer is just 0.1 minutes behind at 4.0 minutes. That compares to 10.9 minutes for the 3,584 H100 AI GPUs, remember.

NVIDIA also set other new records in this round, in addition to making advances in generative AI. The company says that H100 GPUs were 1.6x faster than in the prior round at training recommender models, which are widely employed to help users find what they're looking for online. Performance on RetinaNet, a computer vision model, is up to 1.8x faster now. These increases are thanks to a combination of advances in software and scaled-up hardware.

NVIDIA also points out something important: it was the only company to run all MLPerf tests, with H100 GPUs delivering the fastest performance and the greatest scaling in each of the 9 benchmarks run. Impressive for NVIDIA, and something the company should be very proud of indeed.

There were 11 system makers using the NVIDIA AI platform in their submissions this time around, including ASUS, Dell Technologies, Fujitsu, GIGABYTE, Lenovo, QCT, and Supermicro. These companies use the MLPerf benchmark because they know it's a valuable tool for customers evaluating AI platforms and vendors.