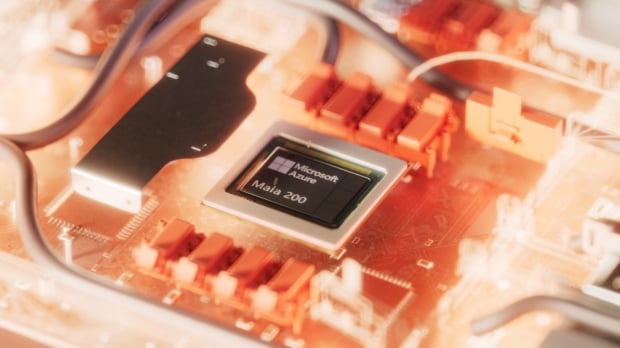

Microsoft has unveiled the Maia 200, its latest AI accelerator for inference and token generation. The chip is built on TSMC's 3nm process with native FP8 and FP4 tensor cores and an overhauled memory system featuring 216GB of HBM3e at 7 TB/s and 272MB of on-chip SRAM. Microsoft describes the Maia 200 as the "most performant, first-party silicon from any hyperscaler."

Microsoft claims three times the FP4 performance of Amazon's third-generation Trainium accelerator and higher FP8 performance than Google's seventh-generation TPU. It adds that the Maia 200 is its most efficient AI inference accelerator to date, with 30% better performance-per-dollar than the "latest generation hardware" it currently deploys.

Set to become part of the company's AI infrastructure, Maia 200 will serve OpenAI's latest GPT-5.2 models and support Microsoft Foundry and Microsoft 365 Copilot. The company will also use Maia 200 to train next-gen in-house models using synthetic data.

Microsoft has confirmed that Maia 200 has already been deployed in its US Central datacenter near Des Moines, Iowa, and its US West 3 datacenter near Phoenix, Arizona, where it integrates with Azure. A closer look at the specs shows that the chip, built on TSMC's 3nm process, packs over 140 billion transistors and delivers over 10 petaFLOPS at 4-bit precision (FP4) and over 5 petaFLOPS at 8-bit precision (FP8), within a 750W SoC TDP.
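To put those headline numbers in perspective, here is a rough back-of-the-envelope sketch in Python. It uses only the figures quoted in this article (peak FP4/FP8 throughput, the 750W TDP, and the 7 TB/s HBM3e bandwidth) and treats the "over" values as lower bounds. The function names are illustrative, and the results are theoretical peaks rather than measured performance.

```python
# Figures quoted in the article; "over N" values are treated as lower bounds.
FP4_FLOPS = 10e15        # >10 petaFLOPS at FP4
FP8_FLOPS = 5e15         # >5 petaFLOPS at FP8
TDP_WATTS = 750          # SoC TDP
HBM_BYTES_PER_S = 7e12   # 7 TB/s HBM3e bandwidth


def tflops_per_watt(flops: float, watts: float) -> float:
    """Peak throughput per watt, in teraFLOPS/W."""
    return flops / watts / 1e12


def ridge_point(flops: float, bytes_per_s: float) -> float:
    """FLOPs a kernel must perform per byte read from HBM before the chip
    becomes compute-bound rather than bandwidth-bound (roofline model)."""
    return flops / bytes_per_s


print(f"FP4: {tflops_per_watt(FP4_FLOPS, TDP_WATTS):.1f} TFLOPS/W")   # 13.3
print(f"FP8: {tflops_per_watt(FP8_FLOPS, TDP_WATTS):.1f} TFLOPS/W")   # 6.7
print(f"FP4 ridge: {ridge_point(FP4_FLOPS, HBM_BYTES_PER_S):.0f} FLOP/byte")  # 1429
print(f"FP8 ridge: {ridge_point(FP8_FLOPS, HBM_BYTES_PER_S):.0f} FLOP/byte")  # 714
```

The high ridge point suggests why the large on-chip SRAM matters for inference: token generation tends to be memory-bound, so keeping data close to the compute units is as important as raw FLOPS.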

Maia 200 is also designed to scale: Microsoft confirms clusters of up to 6,144 accelerators, each with 2.8 TB/s of bidirectional bandwidth. As part of the announcement, Microsoft is formally inviting developers, AI startups, and academics to begin using Maia 200 through a preview program of its SDK.
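For a sense of scale, the sketch below multiplies the per-chip figures quoted in this article across a maximum-size cluster. It is simple arithmetic under optimistic assumptions: perfect scaling, chip TDP only (no cooling, networking, or host power), and decimal units. The names are illustrative, not part of any Microsoft SDK.

```python
# Per-chip figures quoted in the article; "over N" values are lower bounds.
CHIPS = 6_144            # maximum cluster size
FP4_PFLOPS = 10          # petaFLOPS per chip at FP4
HBM_GB = 216             # GB of HBM3e per chip
TDP_WATTS = 750          # SoC TDP per chip


def cluster_totals(chips: int) -> dict:
    """Aggregate theoretical peaks, assuming perfect linear scaling."""
    return {
        "fp4_exaflops": chips * FP4_PFLOPS / 1_000,   # PFLOPS -> EFLOPS
        "hbm_petabytes": chips * HBM_GB / 1_000_000,  # GB -> PB (decimal)
        "chip_power_mw": chips * TDP_WATTS / 1e6,     # W -> MW
    }


totals = cluster_totals(CHIPS)
print(f"Peak FP4:   {totals['fp4_exaflops']:.2f} exaFLOPS")   # 61.44
print(f"Total HBM:  {totals['hbm_petabytes']:.2f} PB")        # 1.33
print(f"Chip power: {totals['chip_power_mw']:.2f} MW")        # 4.61
```

Real-world throughput will be lower, since interconnect overhead and workload behavior both eat into the theoretical peak.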

"Our Maia AI accelerator program is designed to be multi-generational," Scott Guthrie, Microsoft Executive Vice President for Cloud and AI, writes. "As we deploy Maia 200 across our global infrastructure, we are already designing for future generations and expect each generation will continually set new benchmarks for what's possible and deliver ever better performance and efficiency for the most important AI workloads."