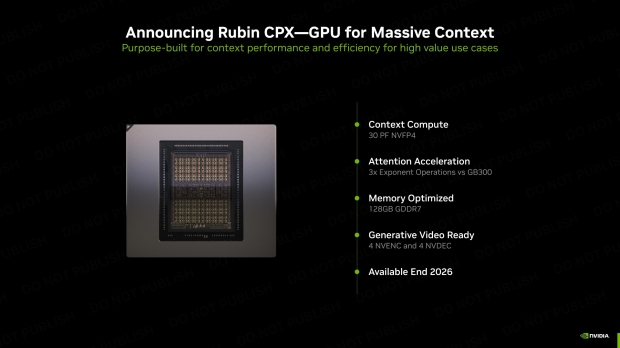

NVIDIA has announced Rubin CPX, a new specialized accelerator in its next-generation Rubin family of AI chips. It is built specifically for massive-context AI models and carries a huge 128GB of GDDR7 memory.

Rubin CPX delivers 30 petaflops of NVFP4 compute on a single monolithic die. That marks a shift away from the dual-GPU packages NVIDIA uses on its current Blackwell and Blackwell Ultra AI GPUs, a design the rest of the Rubin family will also follow.

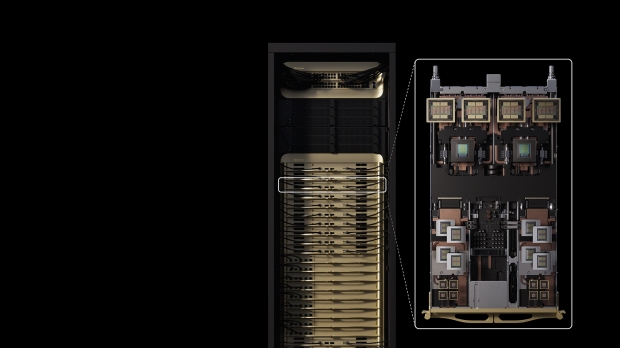

Rubin CPX works alongside NVIDIA Vera CPUs and Rubin GPUs inside the new NVIDIA Vera Rubin NVL144 CPX platform. The integrated NVIDIA MGX system packs 8 exaflops of AI compute, which NVIDIA says delivers 7.5x the AI performance of its GB300 NVL72 system, along with 100TB of fast memory and 1.7PB/sec of memory bandwidth, all in a single rack.
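To put the rack-level numbers in perspective, here is some back-of-the-envelope arithmetic using only the figures NVIDIA quoted. Treat it as a rough sketch under stated assumptions, not an official spec: the implied GB300 NVL72 figure simply divides 8 exaflops by the claimed 7.5x, and the "memory sweep" time assumes decimal units and treats all 100TB as one uniform pool read at the full 1.7PB/sec, which a real rack combining different memory types would not achieve. The function names are hypothetical.

```python
# Back-of-the-envelope arithmetic from NVIDIA's quoted Vera Rubin NVL144 CPX figures.
# Assumption: decimal (SI) units, i.e. 1 EF = 1e18 FLOPS, 1 TB = 1e12 bytes, 1 PB = 1e15 bytes.

NVL144_CPX_EXAFLOPS = 8.0   # quoted AI compute for the rack
SPEEDUP_VS_GB300 = 7.5      # quoted uplift over GB300 NVL72
FAST_MEMORY_TB = 100.0      # quoted fast memory per rack
BANDWIDTH_PB_PER_S = 1.7    # quoted memory bandwidth per rack


def implied_gb300_exaflops(rack_ef: float, speedup: float) -> float:
    """Hypothetical helper: baseline compute implied by the claimed speedup."""
    return rack_ef / speedup


def full_memory_sweep_ms(capacity_tb: float, bandwidth_pb_s: float) -> float:
    """Hypothetical helper: idealized time to read every byte once at peak bandwidth.

    Assumes one uniform memory pool running at full bandwidth, which is a simplification.
    """
    seconds = (capacity_tb * 1e12) / (bandwidth_pb_s * 1e15)
    return seconds * 1e3


if __name__ == "__main__":
    baseline = implied_gb300_exaflops(NVL144_CPX_EXAFLOPS, SPEEDUP_VS_GB300)
    sweep = full_memory_sweep_ms(FAST_MEMORY_TB, BANDWIDTH_PB_PER_S)
    print(f"Implied GB300 NVL72 compute: {baseline:.2f} EF")
    print(f"Idealized full-memory sweep: {sweep:.1f} ms")
```

Run as a script, it prints an implied baseline of about 1.07 exaflops and an idealized sweep of about 58.8 ms.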

Jensen Huang, founder and CEO of NVIDIA, said: "The Vera Rubin platform will mark another leap in the frontier of AI computing - introducing both the next-generation Rubin GPU and a new category of processors called CPX. Just as RTX revolutionized graphics and physical AI, Rubin CPX is the first CUDA GPU purpose-built for massive-context AI, where models reason across millions of tokens of knowledge at once".

NVIDIA says Rubin CPX delivers the highest performance and token revenue for long-context processing, far beyond what today's systems were designed to handle. In the company's view, this turns AI coding assistants from simple code-generation tools into sophisticated systems capable of comprehending and optimizing large-scale software projects.

The company explains that, to process video, AI models can "take up to 1 million tokens for an hour of content, pushing the limits of traditional GPU compute. Rubin CPX integrates video decoder and encoders, as well as long-context inference processing, in a single chip for unprecedented capabilities in long-format applications such as video search and high-quality generative video".
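NVIDIA's video figure is easier to grasp as a rate. The short sketch below assumes the quoted upper bound of 1 million tokens per hour applies uniformly across a clip; actual token counts will vary from model to model, and the helper names are hypothetical.

```python
# Rough token-rate arithmetic from NVIDIA's "up to 1 million tokens for an hour of content" figure.
# Assumption: the quoted upper bound applies uniformly; real tokenization varies by model.

TOKENS_PER_HOUR = 1_000_000
SECONDS_PER_HOUR = 3600


def tokens_per_second(tokens_per_hour: int = TOKENS_PER_HOUR) -> float:
    """Hypothetical helper: average tokens generated per second of video."""
    return tokens_per_hour / SECONDS_PER_HOUR


def tokens_for_video(minutes: float, tokens_per_hour: int = TOKENS_PER_HOUR) -> int:
    """Hypothetical helper: approximate token count for a clip of the given length."""
    return round(tokens_per_hour * minutes / 60)


if __name__ == "__main__":
    print(f"{tokens_per_second():.1f} tokens per second of video")
    print(f"90-minute film: {tokens_for_video(90)} tokens")
```

At that rate, an hour of video works out to roughly 278 tokens per second, and a 90-minute film would approach 1.5 million tokens.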

NVIDIA adds: "Built on the NVIDIA Rubin architecture, the Rubin CPX GPU uses a cost-efficient, monolithic die design packed with powerful NVFP4 computing resources and is optimized to deliver extremely high performance and energy efficiency for AI inference tasks".

NVIDIA's new Rubin CPX is expected to be available at the end of 2026.