If you're one to get a little spicy over FaceTime, those days may be coming to an end: Apple has updated the feature to automatically pause video and audio when nudity is detected.

The new FaceTime behavior was discovered in the iOS 26 beta. When Apple unveiled iOS 26 last month, it said new features were coming to child accounts, but it now appears that some of those features are reaching adult accounts as well. 9to5Mac reports that as part of the expansion of features, "Communication Safety expands to intervene when nudity is detected in FaceTime video calls, and to blur out nudity in Shared Albums in Photos."

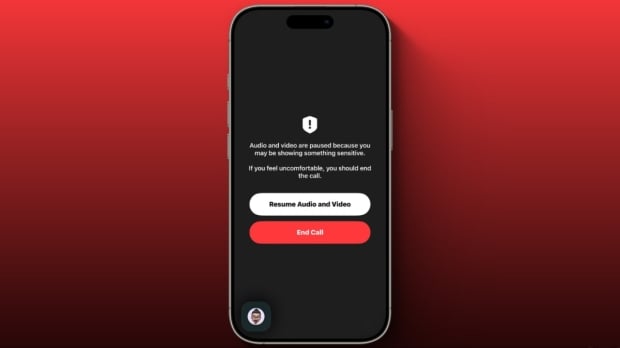

What happens when FaceTime detects nudity? Both the video and audio of the call freeze, and the app shows the following message: "Audio and video are paused because you may be showing something sensitive. If you feel uncomfortable, you should end the call." The screen offers two options: "Resume Audio and Video" and "End Call". Presumably, users can simply resume the call and carry on with their spicy activity.

However, the fact that nudity was detected at all, along with Apple's decision to interrupt the call, has raised serious questions about privacy. Notably, Communication Safety uses on-device machine learning to analyze photo and video attachments and determine whether they appear to contain nudity.

Apple writes, "Because the photos and videos are analyzed on your child's device, Apple doesn't receive an indication that nudity was detected and doesn't get access to the photos or videos as a result."

For now, it remains unclear whether the feature is meant only for child accounts and a bug in the iOS 26 beta has inadvertently extended it to adult accounts. Hopefully that is the case, as enforcing this on adult accounts would seem like an overreach, with Apple encroaching on people's privacy under the guise of user protection.