When more cores are just not enough

The CPU market is now flooded with multi-core and multi-threaded CPUs. We see them in everything from smartphones (with ARM's dual-core Cortex-A9) to the highest-end notebooks and desktops, where the Core i7-980X is now king. But the question many people are asking is: what will more cores give me? So let's take a brief look at why, even with 6- and 12-core CPUs in the channel, we do not see better multi-threading and multi-tasking support in our applications and operating systems.

What more cores get me is an important question, and one that needs to be answered by the big software companies. Go back about ten years and you will see that, until very recently, even big names like Microsoft did not seriously consider multiple CPUs or cores in their products. True, Windows NT would support more than one CPU, but the scheduler that handled the tasks was very basic. Still, it achieved the end result and let you use more than one CPU through the OS.

In the professional space this was great and helped with production and content creation. In the consumer space there were very few applications that could deal with multiple CPUs, so it was up to the very immature task scheduler to handle them in whatever way it judged most efficient. From Windows NT we moved to Windows 2000 and then to Windows XP (yes, I know, I am skipping over a few years here). Of these two, only XP had rudimentary support for consumer-level SMP and SMT systems. But again, this was not a very mature or efficient product.

In fact, there was quite a large issue with the Home version of XP and anything with more than one CPU. The problem centered on the poorly written task scheduler and the power management. If you had a dual-core CPU (or a dual-CPU system) and only one core was active, the power management system would still try to throttle down both cores. This caused many systems to slow down dramatically or simply lock up. This was one of the reasons most gamers and enthusiasts used Windows XP Professional over Home (there were others, but this was a big one).

Again, even with support for more than one CPU in Windows XP, it was very limited. In fact, the original kernel only supported two CPUs, the same type of SMP support that had existed since the days of Windows NT Workstation. This was still the case while quad-core CPUs from Intel and AMD were hitting the market. It was very interesting to see quad-core CPUs reviewed with software and games that could not even deal with two cores, especially considering that Windows XP itself still could not handle them efficiently. Some of that changed when many sites moved to Windows XP x64, which is based on the Server 2003 kernel. You still did not have many applications outside the professional world that could use more than two cores, but the platform was getting better.

With the release of Windows Vista, we saw Microsoft try its hand at SMT/SMP for the masses again. The scheduler in Vista was much more efficient and a big improvement over anything (other than server operating systems) that had been available on the consumer market before. However, Vista was not well received. Due to some internal issues combined with terrible press, it was not widely adopted across the consumer space. It can also be said that Microsoft's insistence on making so many different SKUs for the OS hurt. When people think of an OS, they think of it by name. They have Vista, not Vista Basic, or Vista Home, or Vista Home Basic Premium Lite. This caused major confusion and only hurt the adoption of better SMT and SMP support in the consumer space.

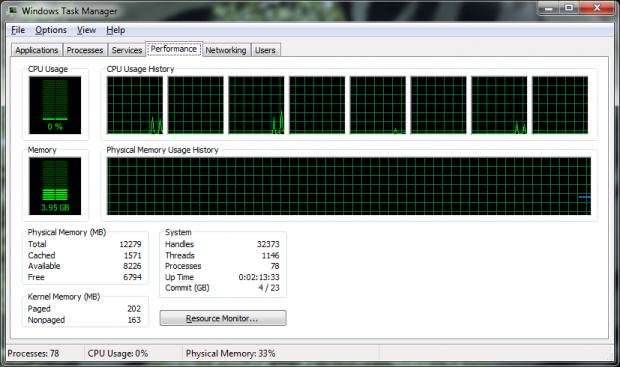

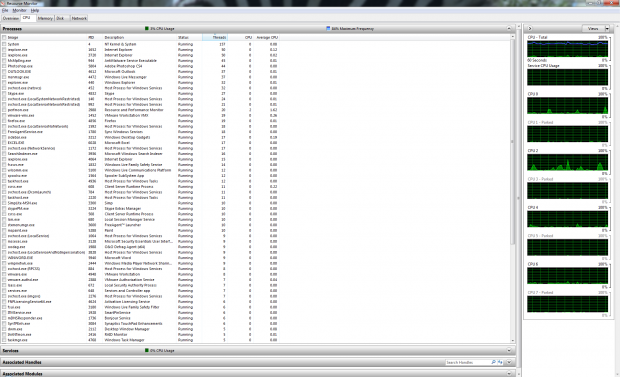

It is only now, at the beginning of 2010, that we see a widely adopted operating system with good, solid multi-thread and multi-CPU support in the consumer space. Windows 7 has a great task scheduler; it is very efficient at managing applications and giving them CPU time based on demand and need. It's not 100% perfect, but it is pretty damn good compared to what we had before. There are some things I would like to see in future versions. Preset core affinity would be nice, as would being able to set application priority at launch instead of having to do it after the fact in Task Manager. But I am digressing here.

Multiple CPUs and threads: the odd-numbered 'CPUs' are really just extra threads

The reason I touched on the path of SMT and SMP in the operating system is that it explains the major reason most consumer applications have almost no support for multi-core systems. To put it simply and rather bluntly, software companies have not seen the need to spend the money on it. After all, if the OS does not support it, why should they? I have said this over and over again: money drives the market. You can put any friendly name on it you want. Consumer demand, adoption rate, call it what you will; it all equals sales and money.

Software development costs money, so adding even rudimentary support for multiple CPUs or threads to an existing application that does not already have it is not worth the time for most companies. They will happily keep pushing out the same core product with a few minor updates, all the while unknowingly limiting their own customers' performance on new systems. If you think about it, every application that was pushed out to consumers while XP was king was fighting for the same single CPU core and its resources. You had to rely on the dodgy task scheduler in Windows XP to hopefully open the new application instance on another free CPU core, but that did not always happen. The same issue was present in almost every game. I mean, come on guys; we saw SMP and SMT support going all the way back to Quake III and Half-Life! Why can't we see it now, when dual core is the standard and quad cores are not far behind? To be honest, it makes no sense at all.
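To make "rudimentary support for multiple CPUs" a bit more concrete, here is a minimal sketch in Python (our choice; the article names no language). It splits one CPU-bound job into chunks so that the OS scheduler can run them on separate cores. The function names are hypothetical. `count_primes` is only a stand-in for real work, such as encoding one chunk of a video. The sketch uses processes rather than threads because CPython's global interpreter lock keeps threads from executing Python code on more than one core at a time.

```python
import multiprocessing
import os
from concurrent.futures import ProcessPoolExecutor


def count_primes(bounds):
    """Hypothetical CPU-bound task: count primes in [lo, hi) by trial division."""
    lo, hi = bounds
    count = 0
    for n in range(max(lo, 2), hi):
        if all(n % d for d in range(2, int(n ** 0.5) + 1)):
            count += 1
    return count


def split(lo, hi, parts):
    """Cut [lo, hi) into at most `parts` contiguous chunks, one per worker."""
    step = max(1, -(-(hi - lo) // parts))  # ceiling division, at least 1
    return [(a, min(a + step, hi)) for a in range(lo, hi, step)]


def serial(lo, hi):
    # A single thread does all the work, so only one core is ever busy
    return count_primes((lo, hi))


def parallel(lo, hi, workers=None):
    workers = workers or os.cpu_count() or 1
    # Prefer "fork" where available (Linux/macOS) so workers need not re-import
    # this script; Windows only offers "spawn", so fall back to the default there
    ctx = (multiprocessing.get_context("fork")
           if "fork" in multiprocessing.get_all_start_methods() else None)
    with ProcessPoolExecutor(max_workers=workers, mp_context=ctx) as pool:
        # Each chunk runs in its own process, which the OS can put on its own core
        return sum(pool.map(count_primes, split(lo, hi, workers)))


if __name__ == "__main__":
    limit = 100_000
    assert serial(0, limit) == parallel(0, limit)
    print(parallel(0, limit))
```

Both versions compute the same answer. The serial one leaves every core but one idle, while the parallel one gives each core a chunk. Any real speedup depends on the workload and on the cost of moving data between processes, which is part of why retrofitting this into an existing application is not free.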


Developers are not the problem

Now here is the thing that is going to really confuse the issue. It is not the guys hammering away on the keyboard late into the night who are the problem. The actual developers know the need for SMT and SMP support and also know what can be done with it. The bean counters at the top usually have no idea, and see any time devoted to this as a waste of resources (which is another way to say money). So even though the people doing the work understand the issue, they are never allowed to do anything about it because of a lack of understanding at the corporate level. This is true for just about all types of software and almost doubly true for games.

Shoddy multi-thread support even in a 'new' game

Remember that in the world of game development, the console is king. That is not to say that consoles are better than PCs; in fact, the opposite is true. It just means that the console is the bigger money maker. The current consoles on the market are built around an old, tried and tested friend: DX9-class graphics. This means that to make a new game there is no requirement to develop a new engine; developers simply recycle the old one and drop in a few tweaks. Distribution companies can charge for upgrades and map packs, making the console game the cash cow of the industry.

Since we know the software industry is all about money, it makes sense that we will see this trend increase. But there is more to this than map pack sales. Because development companies can stick with DX9, many are reluctant to use anything newer like DX10 or DX11. That holds even though DX11 in particular adds multithreaded rendering, which lets an engine spread rendering work across CPU cores. Instead, what we get is a handful of graphical features from the new APIs, and that is about it.

We spoke with a couple of developers who told us they wish they could do a ground-up redesign of their engines, which would let them improve them in more ways than just graphically. When you start a new project, you can use lessons learned from past projects and avoid many issues you might have had to patch before. Working this way, you can end up with a cleaner and more efficient product. You can also build new features and API support in from the beginning instead of shoehorning them in after the fact.

The downside of this approach is, once again, money. A ground-up new build is time-consuming and costly. Even if you get support from companies like NVIDIA, AMD or Intel, it is still going to cost you. But here is another monkey wrench to throw into the works: NVIDIA does not want more work offloaded to the CPU, since that would take away from its push to move more work onto the GPU. Yes, you heard right: NVIDIA does not want any game engine to be more CPU efficient, and it probably does not want any application to be more CPU efficient either. Why? The first reason is, again, money. If games and applications were more CPU efficient, NVIDIA would not be able to sell Tesla and CUDA as easily. There are other reasons, but none as large as the ability to sell GPU-based computing. Now, this is not to say that CPUs are as powerful or efficient as GPUs at massively parallel work. They are not, and there are reasons for that, too. Still, with a little bit of effort, CPUs could give a much better showing.

The same model is applied to normal software, but on a more aggressive scale. Here the people running the business absolutely do not want to spend the money to develop applications with multi-thread support. They argue that consumers do not want it, or do not even know they lack it. They get away with this type of thinking because of consumer ignorance of the issues at hand. For example, if a new game or application runs poorly, the OS, the CPU, the GPU and everything else but the actual application get the blame. It is hardly ever seen as the fault of the software or its lack of proper support for the environment it runs in. If more people knew this, there would be greater demand on ISVs to get to work on providing this support.

The game changes at the bottom level

But what we have touched on so far is not the whole picture. There is a growing movement toward multi-threaded support, coming from the smaller software development houses, the ones putting out transcoding applications and the like. These applications are becoming more and more SMT aware and very efficient at what they do.

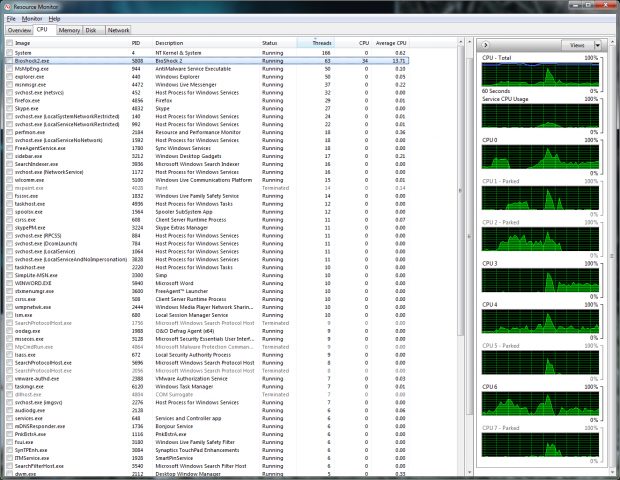

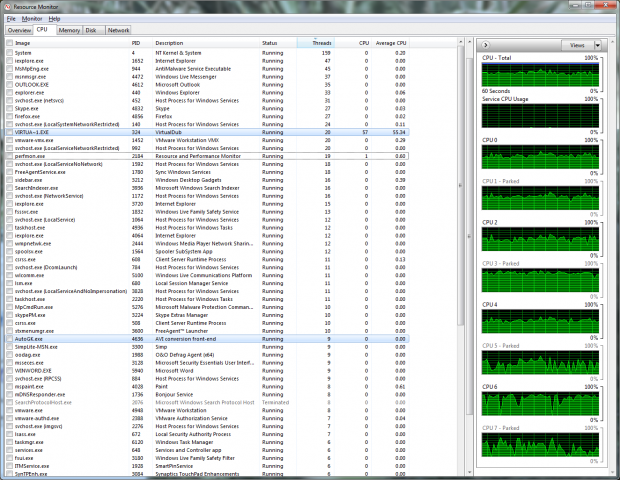

But then again, many of these shops are run by the same people who do the actual coding; they see the benefit of faster and more efficient products at the ground level. This is the opposite of the corporate model we talked about above. Just look at applications like AVS, AutoGK and even Videora. They are all highly threaded and very efficient at the tasks they perform. They also support the latest codecs, like DivX and Xvid, and you see upgrades that incorporate new changes in technology on a routine basis, not just bug fixes.

AutoGK 2.53b shows true multi-threading performance in a free app

So, the next time you wonder why you see no improvement in performance when you drop in a CPU with more cores than your old one, think about the state of the software industry. It was not until the latter half of 2009 that Windows 7 brought a more scalable scheduler to the consumer space. Game companies do not want to spend the money to start over from scratch. ISVs think there is no need because consumers are not directly asking for it (and they also do not want to spend the money). Some companies may even be working to prevent the move to more thread-efficient applications.

Still, it is inevitable that for the near future both Intel and AMD will be pushing more and more cores at you. This may change in 2011-2012 when both companies release their next generation of CPUs, but that does not help people now. This is especially true for the entry-level and mainstream market. There the change will not hit for a few more years, more like 2014-2015, as the next-gen CPUs trickle down.

There is, however, a ray of hope for the consumer: the small development shops. They have the right idea and are working with the changes in technology instead of against them. Maybe one day the ISVs and game distribution companies will wake up and start giving consumers what they need to make the most of the systems they have. But I suppose until there is obvious money in it, and until they are shown to be the source of the problem (instead of dumping the blame on the OS or hardware), we will get the same old business as usual.
