Introduction

The LSI Nytro WarpDrive BLP4-800 is one member of the LSI Nytro Application Acceleration Products family. The Nytro family of enterprise storage products leverages PCIe application accelerators to give customers a wider range of options for addressing performance challenges. The BLP4-800 directly accelerates the user's primary storage, while the other Nytro products take different approaches.

The Nytro XD solution provides caching software that allows LSI Nytro WarpDrive products to intelligently cache and accelerate traditional HDD SAN and DAS storage environments. The final piece of the three-tiered approach is the LSI Nytro MegaRAID Application Acceleration Card, which provides on-board NAND flash to accelerate HDD-based DAS environments.

LSI supplies the Nytro Predictor Software Tool for customers who need help deciding which product would work best for their workload and environment. The software collects and analyzes traces of the workload and identifies potential bottlenecks, which allows it to predict which product would best accelerate the workload.

LSI began providing application acceleration products with the first-generation LSI WarpDrive SLP-300. This initial effort teamed LSI with SandForce to develop a 300GB SLC product powered by six SF-1500 processors. The collaboration forged a good working relationship between the two companies, culminating in LSI purchasing SandForce in October of 2011 for $355 million.

The purchase of SandForce sends a clear message that LSI is serious about its entrance into the SSD market. The merger is mutually beneficial, with the IP and new technologies offered by SandForce complementing LSI's core competencies in semiconductor and software development. Coupled with LSI's renowned validation and reputation for reliability, the combined company emerges as a serious contender in the SSD market.

The fusion of the two companies allows LSI to develop products with more of the components under its direct control. This streamlines R&D, integration of the two technologies, and customer support should any issues arise.

SSDs promise lower power, cooling, space, and management costs than HDD solutions can offer. Adding a PCIe SSD is by far the simplest of upgrades, and keeping the data as close to the processor as possible results in excellent latency. Overall, bringing flash acceleration to the server offers many benefits, from reduced TCO to higher CPU utilization in existing infrastructure.

Specifications, Pricing and Availability

The WarpDrive's offloaded architecture handles all flash processing onboard the device itself. This is important, as some other PCIe solutions require the host computer to handle wear leveling and other flash management tasks. That approach can raise system requirements over time and creates a need for high-end CPUs to deliver full performance.

One of the greatest benefits of flash in virtualized environments is the increase in VM density, up to 300% in some cases with the WarpDrive. With the proliferation of virtualized environments, every CPU cycle counts. The WarpDrive imposes minimal overhead on the server, keeping CPU cycles and RAM free to handle other applications. Solutions that incur host overhead rob the system of potential density increases and do not lend themselves well to multi-card deployments.

The overall TCO of an SSD solution can be far lower than that of a comparable HDD-based solution, and this holds true with the WarpDrive. The half-height half-length card sips just 25W, so it requires no auxiliary power and keeps heat generation to a minimum.

The demanding workloads placed upon enterprise-class storage solutions require powerful flash management techniques, and this is where the SandForce processors shine. The DuraWrite feature compresses data before it is stored, which reduces write amplification because less data is physically written to the NAND flash than the host sends. Writing less data provides higher performance, lessens garbage collection requirements, and increases the endurance of the underlying NAND.
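To make the write-amplification argument concrete, here is a minimal Python sketch. It uses zlib purely as a stand-in compressor (DuraWrite's actual algorithm is not public), assumes a block that does not shrink is stored as-is, and ignores garbage collection and metadata, so it shows the direction of the effect rather than the WarpDrive's real write amplification. The helper names are hypothetical.

```python
import os
import zlib

BLOCK = 4096  # one 4K host write

def physical_bytes(block: bytes) -> int:
    """Bytes a compressing controller might commit to NAND for one host block.

    Assumption: zlib stands in for DuraWrite, whose algorithm is not public,
    and a block that does not shrink is written uncompressed.
    """
    return min(len(zlib.compress(block)), len(block))

def write_amplification(blocks: list[bytes]) -> float:
    """NAND bytes written divided by host bytes written (GC and metadata ignored)."""
    host = sum(len(b) for b in blocks)
    nand = sum(physical_bytes(b) for b in blocks)
    return nand / host

incompressible = [os.urandom(BLOCK) for _ in range(64)]  # random payloads
compressible = [bytes(BLOCK) for _ in range(64)]          # all-zero payloads

print(f"incompressible: WA ~ {write_amplification(incompressible):.2f}")
print(f"all zeros:      WA ~ {write_amplification(compressible):.3f}")
```

On a real drive, garbage collection pushes write amplification above these figures; compression lowers the baseline it starts from.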

The SandForce IP also brings powerful ECC and CRC, which work in conjunction with the RAISE (Redundant Array of Independent Silicon Elements) feature to prevent, identify, and repair data corruption. If ECC and CRC cannot repair the data, RAISE uses its built-in parity to do so, providing an extra layer of data protection.
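RAISE's internal layout is not public, but the principle of parity-based recovery can be sketched with the RAID-5-style XOR scheme below. Treat it as an analogy under that assumption: the page contents, stripe width, and function names are hypothetical, and the real controller combines parity with ECC and CRC in ways this sketch does not model.

```python
from functools import reduce

def xor_pages(pages):
    """Byte-wise XOR of equal-length pages."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*pages))

def build_stripe(data_pages):
    """Append one parity page computed across the data pages (RAID-5 style)."""
    return list(data_pages) + [xor_pages(data_pages)]

def rebuild(stripe, lost_index):
    """Recover the page at lost_index by XOR-ing every surviving page."""
    survivors = [p for i, p in enumerate(stripe) if i != lost_index]
    return xor_pages(survivors)

# Hypothetical pages spread across three flash dies, plus one parity page.
data = [b"die0data", b"die1data", b"die2data"]
stripe = build_stripe(data)
print(rebuild(stripe, lost_index=1))  # b'die1data'
```

XOR parity can rebuild any single lost page in a stripe, which is the kind of die-level failure an array of silicon elements is meant to survive.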

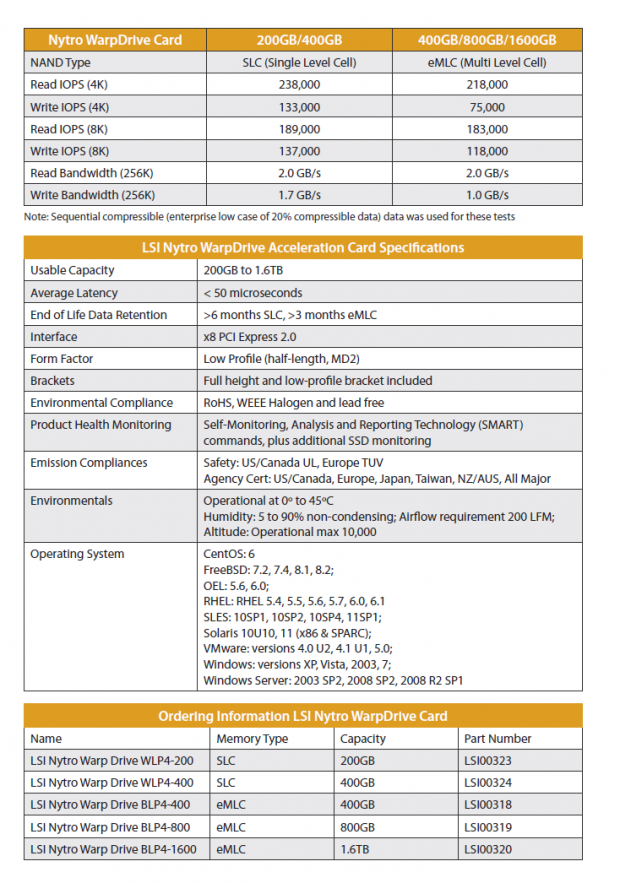

The BLP4-800 sports four SF-2582 controllers and, paired with Toshiba Toggle eMLC NAND, can deliver latency as low as 50 microseconds. The 800GB model is rated for 214,000 4K read IOPS and 75,000 4K write IOPS. Sequential bandwidth with 256K transfers tops out at 2GB/s read and 1GB/s write. Five models are available: 200GB and 400GB versions with SLC NAND, and 400GB, 800GB, and 1.6TB versions with eMLC NAND.
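Random IOPS and sequential bandwidth ratings are easier to compare once both are in the same unit. The Python sketch below converts the rated figures, assuming 4K = 4,096 bytes, 256K = 262,144 bytes, and decimal megabytes (10^6 bytes); the spec sheet's own unit conventions are not stated here.

```python
KIB = 1024  # bytes per KiB

def iops_to_mb_per_s(iops: float, block_bytes: int) -> float:
    """Throughput in decimal MB/s implied by an IOPS figure at a fixed block size."""
    return iops * block_bytes / 1e6

def mb_per_s_to_iops(mb_per_s: float, block_bytes: int) -> float:
    """IOPS implied by a throughput figure at a fixed block size."""
    return mb_per_s * 1e6 / block_bytes

print(round(iops_to_mb_per_s(214_000, 4 * KIB)))  # 877 (4K read rating, MB/s)
print(round(iops_to_mb_per_s(75_000, 4 * KIB)))   # 307 (4K write rating, MB/s)
print(round(mb_per_s_to_iops(2000, 256 * KIB)))   # 7629 (256K reads at 2GB/s)
```

By this arithmetic, the 4K read rating corresponds to less than half of the 2GB/s sequential read ceiling.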

WarpDrive Management

The LSI SAS MPT integrated drivers allow for plug-and-play capability, easing installation into the host computer. The half-height half-length form factor opens this card up for deployment in increasingly popular low-profile servers, and upon installation the WarpDrive presents itself as one volume to the host OS with no user configuration required.

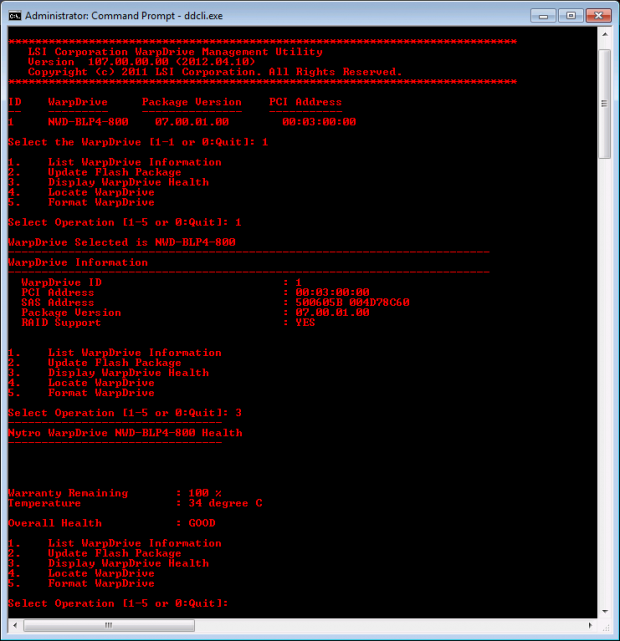

Managing the WarpDrive itself is simple with the WarpDrive Management Utility. This basic program has few bells and whistles.

The utility is very straightforward, and here we can see a typical session. On startup the utility asks the user to select which WarpDrive to manage. Querying the drive for health information returns the remaining life, expressed as a percentage of the warranty remaining, along with the temperature and overall health of the unit.

There is no access to the drive's SMART data, which would help users track relevant metrics such as the number of reallocated sectors and the amount of data written to the device. The utility surely takes this type of data into consideration when determining the overall health of the device, but it would be nice for users to have access to that level of granularity.

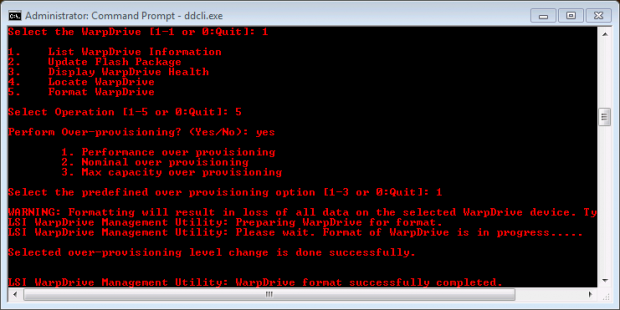

Formatting the drive is also as simple as it gets, with a few different levels of overprovisioning available to the end user. Higher overprovisioning trades some capacity for a speed increase. The Performance Overprovisioning setting gives the user 595.05 GB of usable capacity, and the Nominal Overprovisioning setting yields 745.06 GB. We conducted our testing with the Nominal Overprovisioning setting.
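The two capacity figures are easier to interpret with a unit check. 800 decimal gigabytes (10^9 bytes each) works out to exactly 745.06 binary gibibytes (2^30 bytes each), which suggests, though LSI does not say so here, that the utility reports GiB. A minimal Python sketch of the conversion under that assumption:

```python
GIB = 2**30  # bytes in one gibibyte

def decimal_gb_to_gib(gb: float) -> float:
    """Convert decimal gigabytes (10**9 bytes) to gibibytes (2**30 bytes)."""
    return gb * 1e9 / GIB

def gib_to_decimal_gb(gib: float) -> float:
    """Convert gibibytes back to decimal gigabytes."""
    return gib * GIB / 1e9

nominal_gib = 745.06      # Nominal Overprovisioning, as reported by the utility
performance_gib = 595.05  # Performance Overprovisioning, as reported

print(f"{decimal_gb_to_gib(800):.2f} GiB")             # 745.06 GiB
print(f"{gib_to_decimal_gb(performance_gib):.1f} GB")  # 638.9 GB
print(f"{1 - performance_gib / nominal_gib:.1%} less capacity than Nominal")  # 20.1%
```

If the GiB reading is correct, the Performance setting exposes roughly 639 decimal GB, about a fifth less than the Nominal setting.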

This much-needed feature should be included on other enterprise-class SSDs as well. Giving users an easy means of setting variable levels of overprovisioning cuts configuration time and provides excellent benefits in write-heavy environments.

All other drive management tasks are handled in the server manager, as usual.

LSI Nytro WarpDrive

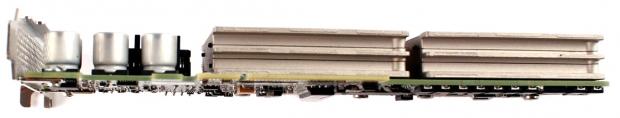

The Nytro WarpDrive connects to the host via a standard PCIe 2.0 x8 connection. There is quite a bit of heft to the card due to the large metal heatsinks that house the NAND.
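PCIe 2.0 signals at 5 GT/s per lane with 8b/10b encoding, so a quick calculation shows how much headroom the x8 link leaves above the rated 2GB/s sequential read. The Python sketch below computes per-direction bandwidth after line encoding only; the packet and protocol overhead it ignores lowers the usable figure further.

```python
def pcie_link_mb_s(gt_per_s: float, lanes: int, payload_bits: int, symbol_bits: int) -> float:
    """Per-direction bandwidth in decimal MB/s after line encoding.

    Packet and protocol overhead are ignored, so real throughput is lower.
    """
    bits_per_s = gt_per_s * 1e9 * lanes * payload_bits / symbol_bits
    return bits_per_s / 8 / 1e6

gen2_x8 = pcie_link_mb_s(5.0, lanes=8, payload_bits=8, symbol_bits=10)  # 8b/10b
print(f"PCIe 2.0 x8: {gen2_x8:.0f} MB/s per direction")     # 4000
print(f"2GB/s read rating uses {2000 / gen2_x8:.0%} of it")  # 50%
```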

Three LEDs, which indicate data activity, drive life, and the status of the WarpDrive, shine through the rear bracket.

The WarpDrive is surprisingly small for the high capacity that it offers, coming in a half-height half-length form factor.

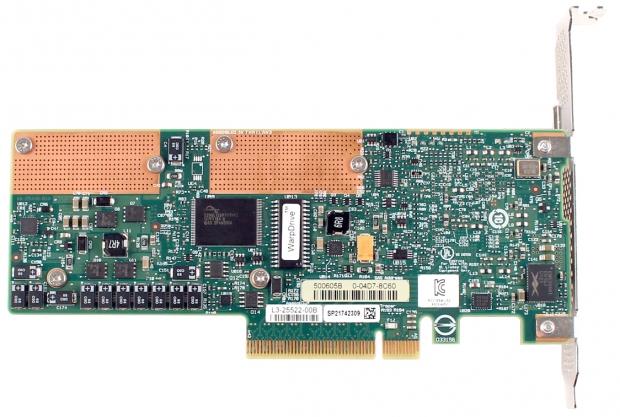

The processor hiding under the black heatsink is the proven LSI SAS2008 PCIe-to-SAS bridge. The SAS2008 is supported by OEM integrated drivers in nearly every operating system. Flanking the processor are four power regulation capacitors.

The rear of the card holds few surprises, with a firmware chip and power management circuitry. Host power-loss protection capacitors also line the bottom of the card.

One of the most telling aspects is what is not there: DRAM packages. SandForce processors do not use an external DRAM cache, which provides consistent performance and higher resilience to power failures.

LSI Nytro WarpDrive Internals

Pulling the WarpDrive apart, we can observe that each large metal heatsink holds two SSD modules. This design focuses on providing efficient thermal dissipation in high-heat server environments. With the temperature thresholds rising in many datacenters to ease power consumption for cooling, heat mitigation is important to extend the longevity of the NAND and controllers.

Each module plugs into a port that is connected to the main PCB by a ribbon cable.

Upon closer inspection, we note that each metal plate has large pink thermal pads that sandwich each SSD module on both sides, further improving heat transfer from the components to the heatsink.

Both sides of each PCB are in view in this picture. The SandForce SF-2582 FSP (Flash Storage Processor) is located at the bottom of the PCB on the right. The obscured markings are due to the oily residue from the thermal pads.

The Toshiba Toggle eMLC NAND packages are each 32GB in capacity, totaling 256GB of raw flash per SSD module. After RAISE and standard overprovisioning, each module provides 200GB of user-addressable capacity, for an aggregate of 800GB across the entire WarpDrive.
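The capacity figures above can be combined into a rough spare-area estimate. The Python sketch below assumes the 32GB package and 256GB per-module figures are binary (GiB) raw NAND while the 200GB user capacity is decimal, a common convention but an assumption here. It also cannot split the spare area between RAISE parity and overprovisioning, since that breakdown is not published.

```python
GIB = 2**30  # bytes per gibibyte

# Figures from this page; binary raw vs. decimal user capacity is an assumption.
MODULES = 4
PACKAGE_GIB = 32          # each eMLC package
RAW_PER_MODULE_GIB = 256  # raw flash per SSD module
USER_PER_MODULE_GB = 200  # user-addressable capacity per module (decimal GB)

packages_per_module = RAW_PER_MODULE_GIB // PACKAGE_GIB
raw_bytes = MODULES * RAW_PER_MODULE_GIB * GIB
user_bytes = MODULES * USER_PER_MODULE_GB * 10**9

# Spare area = raw flash not exposed to the host (RAISE parity + overprovisioning).
spare_share_of_raw = 1 - user_bytes / raw_bytes
spare_relative_to_user = raw_bytes / user_bytes - 1

print(f"{packages_per_module} packages per module")
print(f"spare area: {spare_share_of_raw:.1%} of raw, "
      f"{spare_relative_to_user:.1%} relative to user capacity")
```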

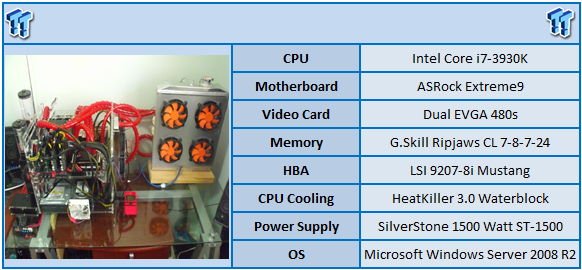

Test System and Methodology

We use a new approach to HDD and SSD storage testing here at TweakTown for our Enterprise Test Bench. The LSI Nytro WarpDrive is the first SSD to go through our new methods, and comparisons to other SSDs will follow as we test new models from various manufacturers.

Designed specifically to target the long-term performance of solid state storage with a high level of granularity, our new SSD testing regimen applies to a wide variety of flash devices. From standard form-factor SSDs to the hottest PCIe application accelerators available, we use this regimen to provide accurate performance measurements across a variety of parameters.

Many forms of testing rely on peak and average measurements over a given time period. While these averages can give a basic understanding of the performance of a storage solution, they fall short of providing the clearest possible view of the Quality of Service (QoS) of the I/O.

The problem with average results is that they do little to indicate the variability experienced during actual deployment of the device. The degree of variability is especially pertinent, as many applications can hang or lag while they wait for a single I/O to complete. Our testing illustrates the performance variability expected in these scenarios while also including a host of other relevant data, such as the average measurements during the measurement window.

In reality, all storage solutions deliver variable levels of performance under load. While this fluctuation is normal, the degree of fluctuation is what separates enterprise storage solutions from typical client hardware. By providing continuous measurements from our workloads at one-second reporting intervals, we can illustrate how products differ in the consistency of their QoS under load. Scatter charts give readers a basic understanding of the latency distribution of the I/O stream without the need to study numerous graphs.
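Each point on our scatter charts is a one-second sample. As a sketch of how such samples can be derived, the Python function below buckets hypothetical I/O completion timestamps (seconds from the start of the run) into one-second IOPS counts. The function name and input format are assumptions for illustration; the benchmarking software itself is not shown.

```python
from collections import Counter

def iops_per_second(completion_times_s):
    """Count completed I/Os in each one-second bucket, including empty seconds."""
    buckets = Counter(int(t) for t in completion_times_s)
    if not buckets:
        return []
    last = max(buckets)
    return [buckets.get(sec, 0) for sec in range(last + 1)]

# Hypothetical completions: two in second 0, one in second 1, two in second 2.
print(iops_per_second([0.1, 0.5, 1.2, 2.9, 2.95]))  # [2, 1, 2]
```

Empty seconds are reported as zero so that stalls show up on the chart instead of silently disappearing.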

Consistent latency is the goal of every storage solution, and a Maximum Latency measurement only illuminates the single longest I/O received during testing. This can be misleading, as a single outlying I/O can skew the view of an otherwise superb solution. Standard Deviation takes the spread of the I/O around the average into consideration, but does not always illustrate the entire I/O distribution with enough granularity to provide a clear picture of system performance. We use histograms to illuminate the latency of every single I/O issued during our test runs, showing the actual percentage of I/O requests that fall within each latency range.
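The Python sketch below shows why a histogram tells more than a maximum alone. The bin edges include every range quoted in this review; the bins between them are assumptions, and the 100-sample latency list is made up for illustration.

```python
from bisect import bisect_right

# Bin edges in milliseconds. The ranges quoted in this review are included;
# the remaining edges are assumed.
EDGES_MS = [0, 0.02, 0.04, 0.06, 0.08, 0.1, 0.2, 0.4, 0.6, 0.8,
            1, 2, 4, 6, 8, 10, 20, 40, 60, float("inf")]

def latency_histogram(latencies_ms):
    """Return (low_ms, high_ms, percent_of_ios) for each non-empty bin."""
    counts = [0] * (len(EDGES_MS) - 1)
    for lat in latencies_ms:
        # Bin i covers [EDGES_MS[i], EDGES_MS[i + 1]).
        counts[bisect_right(EDGES_MS, lat) - 1] += 1
    total = len(latencies_ms)
    return [(EDGES_MS[i], EDGES_MS[i + 1], 100 * c / total)
            for i, c in enumerate(counts) if c]

# Made-up run: 99 I/Os at 1.5 ms and a single 50 ms outlier.
sample = [1.5] * 99 + [50.0]
print("max latency:", max(sample), "ms")
for low, high, pct in latency_histogram(sample):
    print(f"{low}-{high} ms: {pct:.0f}%")
```

The maximum alone reports 50 ms, while the histogram shows that 99% of requests completed in the 1-2 ms range.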

Our testing regimen follows SNIA principles to ensure consistent, repeatable testing. Due to the very nature of NAND devices, it is important that we test under steady state conditions. We reach steady state convergence when the device's performance does not range more than 20% from the average speed measured during the measurement window.
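The convergence criterion can be expressed as a simple check. The Python sketch below reads "does not range more than 20% from the average" as: the spread between the highest and lowest samples in the window must not exceed 20% of the window's mean, similar to the range test in the SNIA Solid State Storage PTS, which also applies a slope test that is omitted here. That interpretation, the five-sample window, and the sample values are assumptions for illustration.

```python
def is_steady_state(window, max_excursion=0.20):
    """True if (max - min) across the window is within max_excursion of its mean."""
    if not window:
        raise ValueError("empty measurement window")
    mean = sum(window) / len(window)
    return max(window) - min(window) <= max_excursion * mean

def first_steady_window(samples, size=5, max_excursion=0.20):
    """Start index of the first steady window of `size` samples, or None."""
    for start in range(len(samples) - size + 1):
        if is_steady_state(samples[start:start + size], max_excursion):
            return start
    return None

# Hypothetical per-round average IOPS during preconditioning.
iops_rounds = [300, 250, 200, 150, 120, 110, 112, 108, 111, 109]
print(first_steady_window(iops_rounds))  # 4
```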

We test below QD32 only to illustrate the scaling of the device. Low-QD testing of enterprise-class storage solutions is a frivolous activity if it is not presented alongside higher-QD results. Administrators who have optimized their infrastructure correctly sustain high QD levels, capitalizing on the performance of the premium storage tier that SSDs provide. Especially with the explosion of virtualization in the datacenter, high-QD performance is the most important metric.
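Queue depth, IOPS, and average latency are tied together by Little's law: outstanding I/Os = IOPS x mean latency. The Python sketch below applies it to a few QD 256 averages from this review, assuming the queue stays full for the whole window. It yields only a mean and cannot reproduce the spread that our histograms show. The Webserver figure approximates "just under 100,000 IOPS".

```python
def mean_latency_ms(queue_depth: int, iops: float) -> float:
    """Little's law (outstanding I/Os = throughput x mean latency), solved for latency."""
    return queue_depth / iops * 1000.0

# QD 256 averages quoted in this review (Webserver value approximated).
results = {
    "4K random write, incompressible": 17_583,
    "8K random read": 139_767,
    "Webserver (approx.)": 100_000,
}
for label, iops in results.items():
    print(f"{label}: ~{mean_latency_ms(256, iops):.2f} ms mean latency")
```

For example, the roughly 14.6 ms estimate for incompressible 4K writes falls inside the 10-20ms range that held the largest share of those I/Os.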

The first page of results will provide the 'key' to understanding and interpreting our new test methodology.

4K Random Read/Write

We preconditioned the WarpDrive with a heavy 4K random write workload for 18,000 seconds, or five hours, receiving reports on several workload performance parameters every second. We then plot this data to illustrate the drive's descent into steady state.

This chart consists of 36,000 data points: one IOPS and one latency measurement for each of the 18,000 seconds. The red dots signify IOPS during the test, and the blue dots show the latency encountered during the test period. We plot latency on a logarithmic scale to bring it into comparison range. This is a dual-axis chart, with IOPS on the left axis and latency on the right. The lines through the data scatter are moving averages. This type of testing presents standard deviation and maximum I/O visually.

Note that the IOPS and latency plots are nearly mirror images of each other. This illustrates that viewing IOPS at one-second intervals gives our readers a good feel for the latency distribution. Keep this in mind when viewing our read and write results below.

We provide histograms below for further latency granularity. This descent in performance occurs only rarely in the lifetime of the device, and we present these results only to confirm that the device has reached steady state convergence.

Each QD for each parameter tested includes 300 data points (five minutes of one-second reports) to illustrate the degree of performance variability. Dark blue data points are incompressible data, red data points are 80% compressible data, and light blue data points are 100% compressible data; this key appears to the left of the axis. The line for each QD represents the average speed reported during the five-minute interval.
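The three data types differ only in how compressible the payload is. The review does not name the data generator, so the Python sketch below uses a hypothetical one: it fills a fraction of each buffer with zeros and the rest with random bytes, then measures how far zlib shrinks it. Other tools define "X% compressible" differently, so treat the ratios as illustrative.

```python
import os
import zlib

def make_buffer(size: int, compressible_fraction: float) -> bytes:
    """Hypothetical test buffer: a run of zeros followed by random bytes."""
    zero_len = int(size * compressible_fraction)
    return bytes(zero_len) + os.urandom(size - zero_len)

def compressed_ratio(buf: bytes) -> float:
    """Compressed size divided by original size, using zlib's default level."""
    return len(zlib.compress(buf)) / len(buf)

for fraction in (0.0, 0.8, 1.0):  # incompressible, 80%, 100% compressible
    buf = make_buffer(64 * 1024, fraction)
    print(f"{fraction:.0%} compressible -> ratio {compressed_ratio(buf):.3f}")
```

Random bytes do not shrink at all, while the zero-filled portion nearly vanishes, so the compressed ratio tracks the random share of the buffer.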

4K random performance is an important metric when comparing drives, as small-block random access is the hardest type of access for any storage solution to master. As one of the most sought-after performance specifications, 4K random performance is a heavily marketed figure.

In 4K random write with incompressible data, the WarpDrive delivers an average of 17,583 IOPS; 80% compressible data comes in at 20,251 IOPS, and 100% compressible data averages 37,031 IOPS during the QD 256 measurement window.

The tests are conducted in reverse order (QD 256, 128, 64, 32, 16, 8, 4) to keep the device under load and hold steady state conditions as long as possible. At lower QD especially, the device can begin to recover from steady state.

With SandForce devices, however, once the workload switches to fully compressible data, the device begins to recover regardless of QD. This explains the apparently lower performance at higher QD in some scenarios. In actual deployment with 100% compressible data this will not always be the case, and the higher-QD results may be in line with the QD 128 results.

4K random read is largely unaffected by data compressibility, and at QD256 the WarpDrive delivers an impressive average of 145,779 IOPS.

The histogram shows, as percentages, the latency of every single I/O during the QD256 test period.

During incompressible testing, the largest share of writes, 19% of requests (4,091,467 I/Os), fell into the 10-20ms range.

For 80% compressible data, 27% of the I/O (6,377,634 I/Os) fell into the 6-8ms range.

For 100% compressible data, 52% of accesses (27,405,087 I/Os) fell into the 4-6ms range.

8K Random Read/Write

8K random read and write speed is not often tested in consumer reviews, but it is an important aspect of performance in enterprise environments. Because several different workloads rely heavily upon 8K performance, we include it as a standard part of each evaluation. Many of our Server Emulations below also test 8K performance with various mixed read/write workloads.

The 8K random write results are very consistent, averaging around 140,000 IOPS for both 80% and 100% compressible data at QD 256. With incompressible data, 8K random write averages 17,730 IOPS at QD 256.

The 8K random read speed averages 139,767 IOPS at QD256.

There was a wide range of write latency during the QD 256 test with incompressible data, but 33% of requests (3,654,428 I/Os) fell into the 10-20ms range.

For 80% compressible data, 62% of requests (6,266,218 I/Os) fell into the .02-.04ms range.

For 100% compressible data, 86% of requests (74,055,424 I/Os) fell into the 1-2ms range.

8K write latency is very consistent, which bodes well for the many server workloads that rely heavily on 8K access.

128K Sequential Read/Write

The 128K sequential results reflect the maximum sequential throughput of the SSD using a realistic transfer size actually encountered in enterprise scenarios.

With incompressible data, 128K sequential write speed averages 590MB/s at QD 256. 80% compressible data averages 775MB/s, and 100% compressible data averages 1450MB/s at QD 256, peaking as high as 1700MB/s at QD 128.

The tests are conducted in reverse order (QD 256, 128, 64, 32, 16, 8, 4) to keep the device under load and hold steady state conditions as long as possible. At lower QD especially, the device can begin to recover from steady state.

With SandForce devices, however, once the workload switches to fully compressible data, the device begins to recover regardless of QD. This explains the apparently lower performance at higher QD in some scenarios. In actual deployment with 100% compressible data this will not always be the case, and the higher-QD results may be in line with the mid-range QD results.

In 128K sequential read, the WarpDrive averages 1700MB/s at QD 256.

For incompressible data 72% of requests (998,105 I/Os) fell into the 40-60ms range.

For 80% compressible data, 70% of requests (1,320,988 I/Os) fell into the 20-40ms range.

For 100% compressible data, a remarkable 79% of requests (2,727,615 I/Os) fell into the 20-40ms range.

OLTP and Webserver

This test emulates database and On-Line Transaction Processing (OLTP) workloads. Used heavily in the financial sector, OLTP is in essence the processing of transactions such as credit card purchases. With their low latency and high random performance, enterprise SSDs are well suited to the financial sector. Databases are the bread and butter of many enterprise deployments. These are demanding workloads, with 8K random access in a 66% read and 33% write distribution, that can bring even the highest performing solutions down to earth.
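To make the profile's shape concrete, the Python sketch below generates a hypothetical OLTP-style request stream: 8K-aligned random offsets with a 66% read share, so writes take the remaining 34%. The device size, seed, and function name are assumptions; this shows the access pattern only and is not the emulation tool used in our testing.

```python
import random
from collections import Counter

BLOCK = 8 * 1024  # 8K transfers

def oltp_requests(n, device_bytes, read_pct=66, seed=0):
    """Yield (op, offset) pairs: 8K-aligned random offsets, read_pct% reads."""
    rng = random.Random(seed)  # seeded so runs are repeatable
    blocks = device_bytes // BLOCK
    for _ in range(n):
        op = "read" if rng.randrange(100) < read_pct else "write"
        yield op, rng.randrange(blocks) * BLOCK

DEVICE_BYTES = 800 * 10**9  # hypothetical 800GB target
mix = Counter(op for op, _ in oltp_requests(100_000, DEVICE_BYTES))
print(f"read share: {mix['read'] / 100_000:.3f}")
```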

OLTP and database data compresses very well, so the compressible-data results will be the most indicative of performance in deployment.

At QD 256 with incompressible data the WarpDrive averages 52,375 IOPS, with 80% compressible data it averages 61,612 IOPS, and for 100% compressible it averages 118,167 IOPS.

For incompressible data 32% of requests (73,746,667 I/Os) fell into the .6-.8ms range.

For 80% compressible data, 64% of requests (24,017,791 I/Os) fell into the 2-4ms range.

For 100% compressible data 59% of requests (41,727,091 I/Os) fell into the 1-2ms range.

The Webserver profile is a read-only test with a wide range of file sizes. Web servers are responsible for generating content for users to view over the internet, much like the very page you are reading. The speed of the underlying storage system has a massive impact on the speed and responsiveness of the server that is hosting the websites and thus the end-user experience.

The Webserver results were remarkably consistent, with all three data types averaging just under 100,000 IOPS at QD 256. This is a read-only test, and the WarpDrive performed admirably.

In the Webserver test, 86% of requests fell into the same 2-4ms range for all three data types.

Fileserver and Workstation

The File Server profile represents typical workloads encountered in file servers. This profile tests across a wide variety of different file sizes simultaneously, with an 80% read and 20% write distribution.

With incompressible data the WarpDrive averages 128,890 IOPS at QD 256, with 80% compressible the average was 128,602 IOPS, and with 100% compressible data the device averaged 128,671 IOPS.

In the Fileserver profile, roughly 68% of requests fell within the 1-2ms range for all three data types.

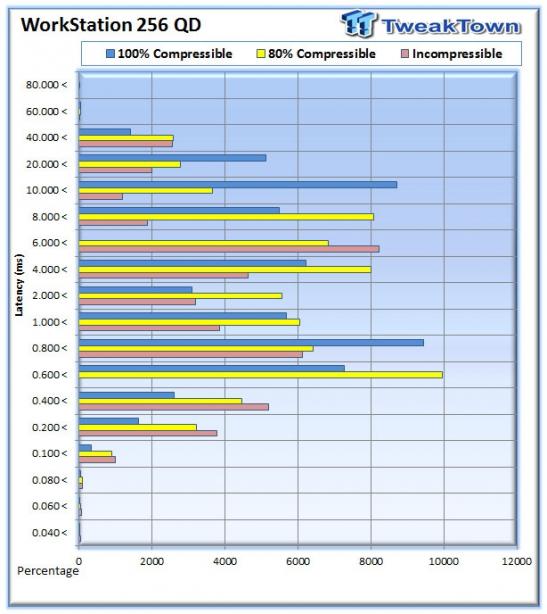

The Workstation profile represents the type of file access requested by a high-performance workstation. PCIe accelerators are making their way into video editing, medical imaging, and geological applications, and these types of workloads are expanding. The workload consists of 8K access with an 80% read and 20% write distribution.

The incompressible results at QD 256 average 71,040 IOPS, with 80% compressible data they average 74,659 IOPS, and for 100% compressible data the drive averages 120,679 IOPS.

The Workstation profile produced a very wide latency distribution.

Final Thoughts

During our evaluation of the LSI Nytro WarpDrive, we also validated a new testing regimen that takes an extended amount of time to complete; a single round of tests can take up to four days. We repeated these tests multiple times with different configurations to dial in the correct settings, which we will apply to all flash products that come through our lab. After almost a month of continual testing, we feel that we have a solid test regimen. Throughout this period, the LSI WarpDrive BLP4-800 was under load almost constantly.

Most impressive was the consistency of performance we were able to elicit from the WarpDrive over this extended test period. Even across the three data compressibility levels we tested, the results were consistent and repeatable. The performance of the LSI Nytro WarpDrive was simply exemplary, with rock-solid steady state performance.

This type of consistent, repeatable, and reliable performance is the hallmark of any enterprise-class storage solution. Installation was simple, and drive management was easy to understand. We would like to see more detailed SMART data included in the user interface, but the simplicity of the WarpDrive Management Utility speaks to just how easy this Application Accelerator is to use and manage.

SandForce processors have made their way into the enterprise space in various incarnations, from PCIe solutions to standard 2.5" devices. Their deployment in this segment has proven them durable and reliable, with admirable data protection characteristics. The Nytro WarpDrive also carries a three-year warranty to alleviate users' concerns.

Many have questioned the practicality of utilizing compression in certain scenarios. For many typical enterprise workloads, our results are clear: because so many workloads are either highly or partially compressible, the WarpDrive delivers strong acceleration.

By offering a number of products in its Nytro line, LSI is serving the needs of several customer bases simultaneously, regardless of their present infrastructure. According to Gartner Research, server-side solid-state drive deployment is growing at 55% per year and is projected to reach nearly 10 million units per year over the next several years. LSI is gaining an early foothold in this emerging market, so expect the company to keep expanding its presence here.

The expansion of virtualization has exposed storage limitations that have lain hidden in the past. There is a strong need for devices such as these to provide the performance that allows administrators to increase density and realize higher CPU utilization from existing infrastructure. With sales wins such as the EMC VFCache product, along with collaborations with Proximal Data and Cisco, the WarpDrive has already begun to penetrate the server market.

As a primary storage solution, the WarpDrive is simply outstanding, and we look forward to testing its performance with the Nytro XD caching software. We have absolutely no reservations in giving the LSI Nytro WarpDrive BLP4-800 the TweakTown Editor's Choice Award.