Introduction and Quick Specs

Techman SSD is a relatively new company looking to penetrate enterprise and consumer SSD markets with high-quality, high-performance products that offer value that is a cut above the ordinary. Techman's design team includes experts in computer science, NAND flash technology, electronics design, validation, and quality assurance. Techman's mission statement is to "design and deliver SSD solutions with speed, security, and service for the data-exploding universe and to become the world's number one SSD solution provider". Techman is already a well-known brand in Asian markets and is looking to expand their reach worldwide.

Techman's XC100E5C Series SSDs leverage Microsemi's (formerly PMC) 16-core, 16-channel "Princeton" NVMe controller paired with Toshiba 15nm eMLC NAND flash and a 2-4GB DDR3 1600MHz DRAM cache. Microsemi's "Princeton" ASIC (Application Specific Integrated Circuit) controller is highly programmable, allowing Techman's engineering team to customize features through software and firmware to meet the specific needs of their clientele. The XC100E5C Series NVMe SSDs are designed to meet the need for high-performance storage in today's datacenter. They use the NVMe 1.1b software stack over a PCIe Gen3 x4 interface to deliver ultra-low latencies by moving data closer to the CPU and reducing CPU overhead with a streamlined command stack. A single XC100E5C PCIe SSD can deliver the performance of up to ten SATA-based SSDs while at the same time reducing space requirements and operating costs.
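To put the PCIe Gen3 x4 interface in perspective, here is a rough sketch of its theoretical ceiling. It relies on the PCIe 3.0 signalling rate of 8 GT/s per lane and 128b/130b line encoding, and it ignores packet and protocol overhead, so real-world throughput will be somewhat lower. The function name is ours, for illustration only.

```python
def pcie3_bandwidth_mb_s(lanes: int) -> float:
    """Theoretical one-direction PCIe 3.0 bandwidth in decimal MB/s."""
    gt_per_s = 8.0        # PCIe 3.0 signalling rate per lane
    encoding = 128 / 130  # 128b/130b line encoding efficiency
    gb_per_s_per_lane = gt_per_s * encoding / 8  # bits -> bytes
    return gb_per_s_per_lane * 1000 * lanes


ceiling = pcie3_bandwidth_mb_s(4)
print(f"x4 ceiling: {ceiling:.0f} MB/s")
# Share of the link used by the 3200 MB/s sequential read spec quoted in this review
print(f"share used at 3200 MB/s: {3200 / ceiling:.0%}")
```

By this estimate, the drive's rated sequential read speed uses roughly four fifths of what an x4 link can carry before overhead.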

Techman NVMe SSDs are engineered for today's high-speed computing environments such as OLTP, Analytics, HFT, and Cloud computing. The XC100E5C sports a hefty endurance rating of seven drive writes per day for five years, or 10.2PB for 800GB, 20.4PB for 1.6TB and 40.8PB for 3.2TB. The XC100E5C series employs a whole host of reliability and security measures including embedded XOR, Strong BCH ECC, Advanced NAND flash management, End-to-End Data Protection, Adaptive RAID Protection, Thermal Throttling, Power Loss Protection, Metadata and Firmware Protection, High Performance FTL, Global Wear-Leveling, TRIM, Garbage Collection, and a proprietary NVMe driver. Techman's proprietary driver is not required for the drive to run at factory specs, but it will provide superior performance and functionality when installed.
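The endurance figures follow directly from the drive-writes-per-day rating. As a quick check, the sketch below multiplies capacity by seven drive writes per day over five years. It assumes 365-day years and decimal units (1 PB = 1,000 TB); the function name is ours.

```python
def petabytes_written(capacity_tb: float, dwpd: float = 7.0, years: float = 5.0) -> float:
    """Total writes implied by a DWPD rating, in decimal petabytes."""
    days = years * 365  # assumption: 365-day years, leap days ignored
    return capacity_tb * dwpd * days / 1000.0  # TB -> PB


for capacity_tb in (0.8, 1.6, 3.2):
    print(f"{capacity_tb} TB: {petabytes_written(capacity_tb):.2f} PB")
```

The results come out at roughly 10.2, 20.4, and 40.9 petabytes, in line with the rated figures once those are read as truncated rather than rounded.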

Techman's SSD toolbox is currently under construction. The toolbox used in conjunction with Techman's NVMe driver will facilitate useful functions such as thermal monitoring, self-health check, and many more drive management features.

Techman was kind enough to send over two 1.6TB XC100E5C HHHL Add-In Cards for testing. Having two XC100E5C SSDs on hand affords us the opportunity to test a single disk as well as a RAID array. Both Techman and we are interested in comparing the performance of a two-drive XC100E5C 1.6TB array to that of Intel's potent DC P3608, a RAID-capable, dual-controller SSD. We will be creating a Windows Server Storage Spaces RAID 0 array with a partition on the virtual volume for this testing. Usually, we test without a partition on the drive, and that is how we will be testing the single drive. However, to test a two-drive software RAID array, we had to partition the volume for it to be recognized by Iometer. Introducing a partition into the mix also introduces Storage Spaces' built-in write caching, which boosts overall performance significantly. This is why, in many cases, the two-drive array will deliver more than double the performance of a single drive.

Quick Specs

The Techman XC100E5C 1.6 TB HHHL AIC we have on the bench today sports the following hardware and steady-state performance specifications: 4K Random Read / Write = 750K/115K. Sequential Read / Write = 3200 / 1400 MB/s. Power consumption = 21W Active/ 8W idle. Controller = Microsemi 16-Channel ASIC NVMe controller. NAND = Toshiba 15nm eMLC. DRAM Cache = 2GB on the 1.6TB model. Onboard Power-loss Protection = Yes.

Techman backs the XC100E5C 1.6TB for five years or 20.4 Petabytes Written.

Techman XC100E5C 1.6TB PCIe NVMe SSD Photos and Specs

Photos

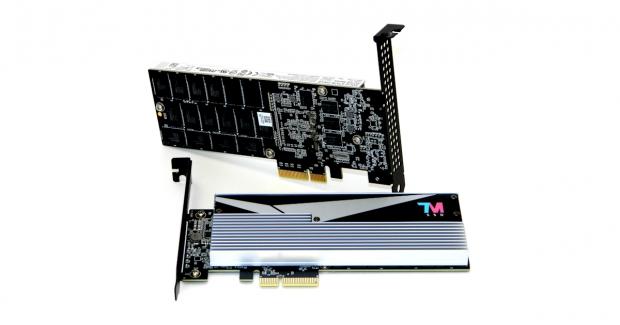

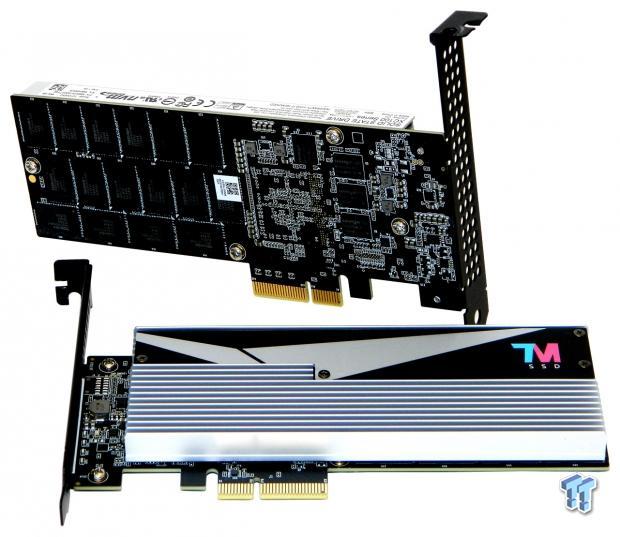

The top half of the drive's all-black PCB is covered by a full-length aluminum heat sink. There is a sheet aluminum cover fused to a daughterboard PCB with a Techman logo on it. The cover attaches with four small screws.

The bottom half of the PCB has no shielding; the components are exposed. This half of the PCB houses 16 of the drive's 32 Toshiba 15nm eMLC flash packages and four of the drive's eight Nanya DRAM cache packages.

There is a full-length manufacturer's label located on this side of the drive's aluminum heat sink. The label lists the drive's model number, part number, serial number, and shipping firmware.

From this angle, we can see the drive's perforated full-height bracket. The drive ships with a half-height bracket; Techman was kind enough to send along full-height brackets for our use.

From this angle, we can see the channels in the heat sink where airflow is to be directed through to the perforated mounting bracket.

Here is a shot of the drive with the daughterboard removed.

Removing the heat sink reveals that the drive's controller and major components are fitted with thermal pads to wick heat away. This side of the PCB houses the drive's controller, 16 of its 32 NAND flash packages, and four 256MB DRAM cache packages. There is an additional 256MB DRAM package dedicated to data ECC. This additional package further guarantees data integrity at the expense of some write speed.

A detailed view of the drive's 16-channel, 16-core Microsemi (formerly PMC) 500MHz ASIC "Princeton" controller.

A detailed view of some of the drive's 64GB Toshiba 15nm eMLC BGA flash packages (32 in total). Thirty-two 64GB flash packages give the drive a total NAND array capacity of 2048GB. The drive is over-provisioned by 28%, leaving 1.6TB available to the end-user before formatting.
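The over-provisioning figure can be reproduced from the package count. This is a minimal sketch that assumes raw and user capacity are expressed in the same (decimal) gigabytes and that over-provisioning is measured relative to user capacity; the function name is ours.

```python
def over_provisioning_pct(raw_gb: int, user_gb: int) -> float:
    """Spare area as a percentage of user-visible capacity."""
    return (raw_gb - user_gb) * 100.0 / user_gb


raw_gb = 32 * 64  # 32 flash packages of 64GB each
print(f"raw capacity: {raw_gb} GB")
print(f"over-provisioning: {over_provisioning_pct(raw_gb, 1600):.0f}%")
```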

A detailed view of some of the drive's (eight in total) 256MB Nanya 1600MHz DDR3 DRAM cache packages. Eight 256MB DRAM packages give the 1.6TB XC100E5C a total of 2GB onboard data cache.

Specifications and Features

We are going to go over the 1.6TB XC100E5C's specifications; for other capacities, please refer to the above product specification sheet. Techman's XC100E5C 1.6TB Enterprise PCIe NVMe SSD comes in a half-height, half-length (HHHL) Add-in Card (AIC) form factor as well as a 2.5" U.2 (SFF-8639) variant. Factory specifications for both form factors are identical. We note that the random write figures on the product sheet are FOB (fresh out of box) rather than steady-state, as are the figures given for mixed 4K 70/30 R/W.

Features include a PCIe Gen 3.0 x4 interface and Toshiba eMLC NAND flash memory. End-to-end data protection, with XOR parity protection and advanced ECC bit correction, covers all internal and external memories in the data path for protection at every layer. Enhanced power-loss management protects against unplanned power loss, or PLI (Power Loss Imminent), through a proprietary combination of hardware, firmware algorithms, and robust validation. Background garbage collection and optional AES 256-bit encryption round out the list.

Techman XC100E5C 1.6TB PCIe NVMe SSD steady-state factory specs:

- Sequential 128KB Read (up to): 3200 MB/s

- Sequential 128KB Write (up to): 1400 MB/s

- Random 4KB Read (up to): 750,000 IOPS

- Random 4KB Write (up to): 115,000 IOPS

- Read/Write latency: 90/17us (typical)
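To relate the random IOPS figures above to the sequential throughput figures, the sketch below converts IOPS at a 4KB transfer size into MB/s. It assumes 4KB means 4,096 bytes and MB means 1,000,000 bytes; the function name is ours.

```python
def iops_to_mb_per_s(iops: int, block_bytes: int) -> float:
    """Convert an IOPS figure to throughput in decimal MB/s."""
    return iops * block_bytes / 1_000_000


read_mb_s = iops_to_mb_per_s(750_000, 4096)
write_mb_s = iops_to_mb_per_s(115_000, 4096)
print(f"4KB random read:  {read_mb_s:.0f} MB/s")
print(f"4KB random write: {write_mb_s:.0f} MB/s")
```

At spec, 4KB random reads move nearly as much data as the 3200 MB/s sequential read rating, while steady-state 4KB random writes move roughly a third of the 1400 MB/s sequential write rating.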

Reliability: MTBF 1.2 million device hours, UBER: 1E-17, end-to-end data protection, enhanced power-loss data protection, SMART monitoring, bad block management, TRIM, and wear-leveling.

Endurance: PBW (Petabytes Written): 1.6TB = 20.4 PBW equivalent to seven drive writes per day for five years.

Pricing: Techman's XC100E5C is set to retail for approximately $2 per gigabyte.

Test System Setup and Testing Methodology

Jon's Enterprise SSD Review Test System Specifications

- Motherboard: ASRock Rack EPC612D8A-TB (Intel C612 chipset)

- CPU: Intel Xeon E5-2699 V3 18 Core 36 Thread

- Cooler: Supermicro Air Cooling

- Memory: Samsung 64GB DDR4 ECC 2133MHz

- Video Card: Onboard Video

- Power Supply: Seasonic Platinum 1000 Watt

- OS: Microsoft Windows Server 2012 R2 64 Bit

- Drivers: Microsoft AHCI

- Drivers: Techman Proprietary NVMe driver

We would like to thank ASRock Rack, Crucial, Intel, Samsung, Seagate, and Seasonic for making our test system possible.

TweakTown's Enterprise SSD testing methodology replicates typical enterprise workload environments as closely as possible. Our test systems use strictly enterprise-based hardware: enterprise chipsets, Intel Xeon processors, ECC DRAM, and standard air-cooling. Storage drivers are Windows standard drivers, except as otherwise required for the test device to operate as designed.

TweakTown strictly adheres to industry-accepted Enterprise Solid State Storage testing procedures. Each test we perform repeats the same sequence of the following four steps:

- Secure Erase SSD

- Write entire capacity of SSD a minimum of 2x with 128KB sequential write data, seamlessly transition to next step

- Precondition SSD at maximum QD measured (QD32 for SATA, QD256 for PCIe) with the test specific workload for a sufficient amount of time to reach a constant steady-state, seamlessly transition to next step

- Run test specific workload for 5-minutes at each measured Queue Depth, record results
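The four steps above can be sketched as a simple test plan. This is an illustration only, not the tooling actually used: the step names, the `build_plan` and `fill_seconds` helpers, and the power-of-two queue-depth ladder are our assumptions (the methodology states only the maximum QD per interface).

```python
from dataclasses import dataclass

# Maximum measured queue depth per interface, as stated in the methodology.
MAX_QD = {"sata": 32, "pcie": 256}


@dataclass
class Step:
    name: str
    detail: str


def queue_depths(interface: str) -> list[int]:
    """Assumed ladder: powers of two from 1 up to the interface maximum."""
    qds, qd = [], 1
    while qd <= MAX_QD[interface]:
        qds.append(qd)
        qd *= 2
    return qds


def build_plan(capacity_gb: int, interface: str, workload: str) -> list[Step]:
    """Lay out the four-step sequence for one test."""
    qds = queue_depths(interface)
    return [
        Step("secure_erase", "return the drive to a fresh state"),
        Step("fill", f"write {2 * capacity_gb} GB of 128KB sequential data"),
        Step("precondition", f"{workload} at QD{qds[-1]} until steady-state"),
        Step("measure", f"{workload} for 5 minutes at each QD in {qds}"),
    ]


def fill_seconds(capacity_gb: int, write_mb_s: float) -> float:
    """Time to write the full capacity twice at a given sequential rate."""
    return 2 * capacity_gb * 1000 / write_mb_s


for step in build_plan(1600, "pcie", "4K random write"):
    print(f"{step.name}: {step.detail}")
print(f"fill time at 1400 MB/s: {fill_seconds(1600, 1400) / 60:.0f} minutes")
```

At the drive's rated 1400 MB/s sequential write speed, the 2x fill of a 1.6TB drive alone takes roughly 38 minutes before any workload-specific preconditioning begins.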

We chart workload preconditioning IOPS or MB/s and latency for each specific test. We plot workload preconditioning using scatter charts with each recorded 1-second data point represented on the chart, allowing us to see some of the performance variability exhibited by our test subjects. We chart workloads using line charts plotting average workload IOPS or MB/s and latency at each measured QD. Utilizing line charts provides a good visual perspective of the test subject's performance curve.
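We read steady-state off the scatter charts by eye. For readers who want to automate that judgment on their own 1-second logs, here is one possible approach, loosely modeled on the range-and-slope style of criterion found in industry test specifications. The 60-second rounds, 5-round window, and 20%/10% thresholds are assumptions chosen for illustration, not part of our methodology.

```python
from statistics import mean


def round_averages(samples: list[float], round_len: int) -> list[float]:
    """Collapse 1-second samples into per-round averages."""
    return [
        mean(samples[i:i + round_len])
        for i in range(0, len(samples) - round_len + 1, round_len)
    ]


def is_steady(window: list[float], max_range: float = 0.20, max_drift: float = 0.10) -> bool:
    """Check one window of round averages against range and drift limits."""
    n = len(window)
    avg = mean(window)
    x_mean = (n - 1) / 2
    # Least-squares slope of the window against the round index.
    slope = sum((i - x_mean) * (y - avg) for i, y in enumerate(window)) / sum(
        (i - x_mean) ** 2 for i in range(n)
    )
    drift = abs(slope) * (n - 1)  # change along the fitted line across the window
    spread = max(window) - min(window)
    return spread <= max_range * avg and drift <= max_drift * avg


def first_steady_round(samples: list[float], round_len: int = 60, window: int = 5):
    """Index of the first round that completes a steady window, or None."""
    rounds = round_averages(samples, round_len)
    for end in range(window, len(rounds) + 1):
        if is_steady(rounds[end - window:end]):
            return end - 1
    return None
```

Averaging into rounds first keeps isolated outlying IOs from dominating the decision, while the drift check rejects windows that are still trending downward.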

To summarize, we test with Enterprise hardware, Windows Server Operating System, and we strictly adhere to industry-accepted Enterprise SSD testing procedures. Our goal is to provide results that are consistent, reliable, and repeatable.

Benchmarks – 4K Random Write/Read

4K Random Write/Read

We precondition the drive for 16,000 seconds, or 4.44 hours, receiving performance data every second. We plot this data to observe the test subject's descent into steady-state. We plot both IOPS and Latency. We plot IOPS (represented by blue scatter) in thousands and Latency (represented by orange scatter) in milliseconds. We observe steady-state is achieved at 5,000 seconds of preconditioning for a single drive; 4,000 seconds for a two-drive array. We do note a significant number of outlying IOs.

With our configuration, we exceeded Techman's 4K random write factory specification of 115,000 IOPS. The XC100E5C delivers 4K random write performance that exactly matches that of Samsung's XS1715. The two-drive XC100E5C array delivers well over double the performance of a single drive in this test, as we previously explained would happen in certain test scenarios. Intel's DC P3700 outperforms the DC P3608 at queue depths of up to 64. Our two-drive XC100E5C array eviscerates the DC P3608 at QD256 by 127K IOPS.

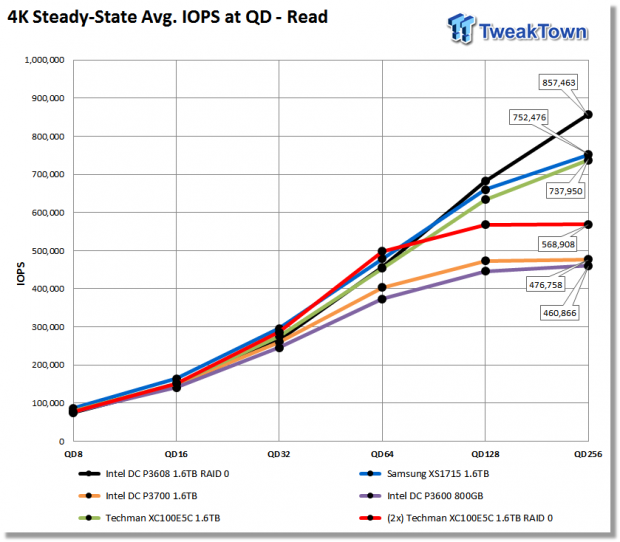

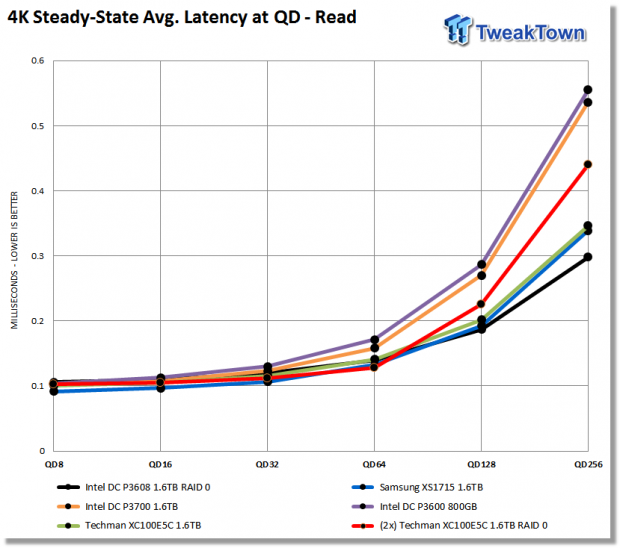

Turning to 4K random reads, the XC100E5C outperforms Intel's DC P3700 across the board, while Samsung's XS1715 outperforms the XC100E5C across the board. Our two-drive XC100E5C array hits a wall at QD128-256. We aren't exactly sure why the array hits this barrier, but this is the only test where it happens. Intel's DC P3608 beats all the competitors by over 100K IOPS at QD256. The XC100E5C performs very well, and very similarly to Samsung's XS1715. The XC100E5C array outperforms the DC P3608 up to QD64.

Conclusion (TL;DR): The XC100E5C handily outperforms Intel's DC P3700 with 4K random reads. The reverse is true for 4K random writes.

Benchmarks - 8K Random Write/Read

8K Random Write/Read

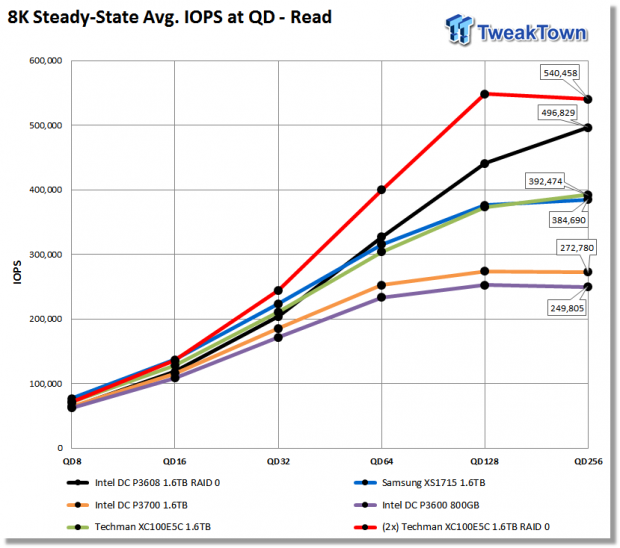

We precondition the drive for 16,000 seconds, or 4.44 hours, receiving performance data every second. We plot this data to observe the test subject's descent into steady-state. We plot both IOPS and Latency. We plot IOPS (represented by blue scatter) in thousands and Latency (represented by orange scatter) in milliseconds. We observe steady-state is achieved at 4,000 seconds of preconditioning for a single drive; 5,000 seconds for a two-drive array. We do note a significant number of outlying IOs.

8K random is a more demanding workload than 4K. The XC100E5C outperforms Samsung's XS1715 from QD16 on up to QD256. Intel's DC P3700 delivers the second best performance of the drives in our test pool. The XC100E5C two-drive array is benefitting greatly from write caching in this test, delivering 2.4X the performance of a single XC100E5C. More importantly, the two-drive XC100E5C array is generating 78% more performance than Intel's DC P3608 at QD256.

As expected, the XC100E5C dominates Intel's DC P3700 from start to finish with the read portion of our 8K testing. At QD256, the XC100E5C outperforms the DC P3700 by 44%. The XC100E5C trails Samsung's XS1715 slightly up to QD128; at QD256, the XC100E5C surpasses the XS1715 by 8K IOPS. A single XC100E5C outperforms Intel's DC P3608 at queue depths of up to 32. The two-drive XC100E5C array again dominates the DC P3608 and the rest of the test pool, with the greatest performance disparity occurring at QD128.

Conclusion (TL;DR): The XC100E5C delivers far superior performance to that of the DC P3700 at all measured random read queue depths culminating in a 100K lead at QD256. The two-drive XC100E5C array again handily outperforms Intel's DC P3608 running in RAID 0 mode.

Benchmarks - 128K Sequential Write/Read

128K Sequential Write/Read

We precondition the drive for 6,500 seconds, or 1.8 hours, receiving performance data every second. A sequential steady-state is achievable in a much shorter span of time than a random steady-state. We plot both MB/s and Latency. We plot MB/s using blue scatter and Latency using orange scatter. We observe that a single XC100E5C as well as the two-drive array both reach steady-state at 0 seconds of preconditioning, indicating that the previous 2x LBA fill phase achieved a sequential steady-state. The XC100E5C is performing well above its factory sequential write specification of 1400 MB/s. We observe a small number of outliers dropping below 1000 MB/s for the single drive and well below 2000 MB/s for the two-drive array.

Techman's XC100E5C handily outperforms Samsung's XS1715 across the board. We note very little variability from the XC100E5C at QD8-QD256. As we've seen so far in our testing, Intel's DC P3700 delivers better write performance than the XC100E5C. The DC P3608 delivers the second best performance of the bunch cranking out 1970 MB/s at QD16. However, the DC P3608 is greatly overshadowed by our two-drive XC100E5C array. At QD256, the two-drive array is 62% faster than the DC P3608.

The XC100E5C fares extremely well when workloads are sequential in nature. Again, the XC100E5C handily outperforms both Samsung's XS1715 and Intel's DC P3700. Intel's DC P3608 leads our test pool at QD8-16. At queue depths of 64-256, the XC100E5C array outperforms the DC P3608 by over 1000 MB/s. We observe very good scaling from our two-drive XC100E5C array. The two-drive array delivers 97% scaling in comparison to a single XC100E5C.
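For clarity on how a scaling percentage can be computed, the sketch below treats scaling as the extra performance the array delivers relative to one drive, so a perfect two-drive stripe would score 100%. The input values are placeholders chosen for illustration, not our measured results, and the function name is ours.

```python
def scaling_pct(single: float, array: float) -> float:
    """Extra performance from the array, as a percentage of one drive."""
    return (array - single) * 100.0 / single


# Placeholder values for illustration only, not measured results.
single_mb_s = 2000.0
array_mb_s = 3940.0
print(f"scaling: {scaling_pct(single_mb_s, array_mb_s):.0f}%")
```

By this definition, any result above 100% from two drives points to help from something other than striping, such as the Storage Spaces write caching described in the introduction.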

Conclusion (TL;DR): Techman's XC100E5C handily outperforms Samsung's XS1715 with sequential read/write workloads. Intel's DC P3700 outperforms the XC100E5C with sequential write workloads but is soundly outperformed by the XC100E5C with sequential read workloads.

Mixed Workload Benchmarks – Email Server

Email Server

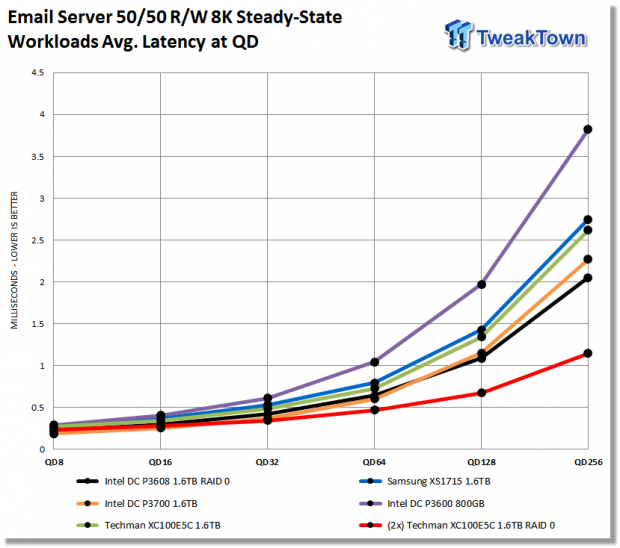

We precondition the drive for 16,000 seconds, or 4.44 hours, receiving performance data every second. We plot this data to observe the test subject's descent into steady-state. We plot both IOPS and Latency. We plot IOPS (represented by blue scatter) in thousands and Latency (represented by orange scatter) in milliseconds. We observe steady-state is achieved at 5,000 seconds of preconditioning for a single drive; 4,000 seconds for a two-drive array. We do note a significant number of outlying IOs.

An Email Server workload is a demanding 8K test with a 50 percent R/W distribution. This application gives a good indication of how well a drive will perform in a write heavy workload environment.

Intel's DC P3700 shows off its write prowess at QD8-16 where it leads the field; it is even able to outperform the DC P3608 at queue depths of up to 64. The XC100E5C shows itself to be a better choice than Samsung's XS1715 for this demanding type of workload. At queue depths of 32 and beyond, the XC100E5C array asserts its dominance over Intel's DC P3608. At QD256, the XC100E5C array outperforms Intel's DC P3608 by nearly 100K IOPS or 98%. A two-drive XC100E5C array does take an additional slot in comparison to the DC P3608, but it uses the same number of PCIe lanes (8), and cost per GB is similar.

Conclusion (TL;DR): Techman's XC100E5C is a better choice than Samsung's XS1715 with this particular type of workload. A two-drive XC100E5C array provides nearly twice the performance of Intel's DC P3608 at QD256.

Mixed Workload Benchmarks – OLTP/Database

OLTP/Database

We precondition the drive for 16,000 seconds, or 4.44 hours, receiving performance data every second. We plot this data to observe the test subject's descent into steady-state. We plot both IOPS and Latency. We plot IOPS (represented by blue scatter) in thousands and Latency (represented by orange scatter) in milliseconds. We observe steady-state is achieved at 4,000 seconds of preconditioning for a single drive; 3,000 seconds for a two-drive array. We do note a significant number of outlying IOs.

An On-Line Transaction Processing (OLTP) / Database workload is a demanding 8K test with a 66/33 percent R/W distribution. OLTP is the online processing of financial transactions, such as credit card payments and high-frequency trading in the financial sector. Database workloads are challenging for any storage solution.

In terms of pecking order, this test follows exactly what we saw from our email testing. Techman's XC100E5C again outperforms Samsung's venerable XS1715. Intel's DC P3700 outperforms the rest of the test pool at QD8-32, and the two-drive Techman array handily outperforms Intel's DC P3608 at queue depths of 32 and beyond.

Conclusion (TL;DR): Techman's XC100E5C is a better choice than Samsung's XS1715 with this particular type of workload.

Mixed Workload Benchmarks - Web Server

Web Server

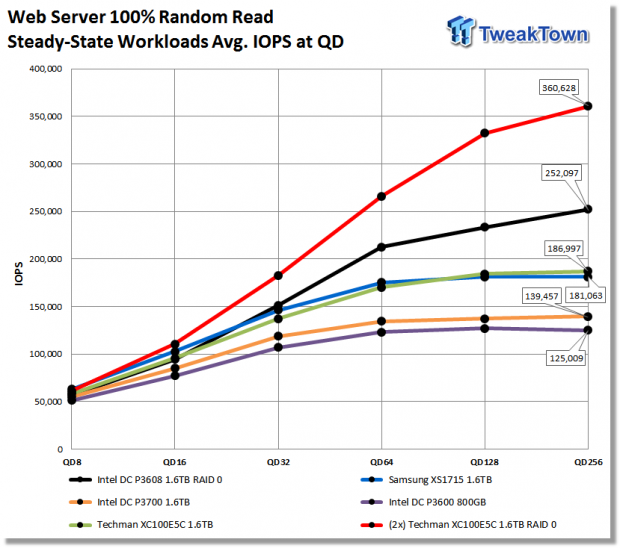

We precondition the drive for 16,000 seconds, or 4.44 hours, receiving performance data every second. We plot this data to observe the test subject's descent into steady-state. We plot both IOPS and Latency. We plot IOPS (represented by blue scatter) in thousands and Latency (represented by orange scatter) in milliseconds. We observe steady-state is achieved at 4,000 seconds of preconditioning for a single drive; 3,000 seconds for a two-drive array. We do note a significant number of outlying IOs.

The Web Server workload is a pure random read test with a wide range of file sizes. Our test consists of the following file sizes and corresponding percentage of the overall 100 percent workload file size: 512B = 22 percent, 1KB = 15 percent, 2KB = 8 percent, 4KB = 23 percent, 8KB = 15 percent, 16KB = 2 percent, 32KB = 6 percent, 64KB = 7 percent, 128KB = 1 percent, and 512KB = 1 percent.
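The transfer-size mix above is easy to sanity-check in a few lines. The sketch below encodes the percentages exactly as listed, confirms they add up to 100, and works out the average transfer size. It assumes binary sizes (1KB = 1,024 bytes); the names are ours.

```python
# Transfer size in bytes -> share of the workload in percent (from the list above).
WEB_SERVER_MIX = {
    512: 22,
    1024: 15,
    2048: 8,
    4096: 23,
    8192: 15,
    16384: 2,
    32768: 6,
    65536: 7,
    131072: 1,
    524288: 1,
}


def total_percent(mix: dict[int, int]) -> int:
    """Sum of all shares; should be 100 for a complete access spec."""
    return sum(mix.values())


def mean_transfer_bytes(mix: dict[int, int]) -> float:
    """Share-weighted average transfer size in bytes."""
    return sum(size * pct for size, pct in mix.items()) / total_percent(mix)


small_share = sum(pct for size, pct in WEB_SERVER_MIX.items() if size <= 8192)
print(f"total: {total_percent(WEB_SERVER_MIX)} percent")
print(f"8KB or smaller: {small_share} percent")
print(f"mean transfer: {mean_transfer_bytes(WEB_SERVER_MIX) / 1024:.1f} KB")
```

Although most requests are 8KB or smaller, the handful of large transfers pulls the average up to roughly 16KB, which is why this test stresses throughput as well as small-block IOPS.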

With a 100% random read workload, Intel's DC P3700 is handily outperformed by the Techman XC100E5C. Samsung's XS1715 delivers slightly better performance than the XC100E5C at queue depths of up to 64. The XC100E5C just edges out the XS1715 at queue depths of 128-256. Intel's DC P3608 delivers excellent performance as expected with this 100% read workload. However, the DC P3608 is greatly eclipsed by our two-drive Techman array. At QD256, the XC100E5C array is delivering 98K more IOPS than Intel's DC P3608.

Conclusion (TL;DR): Techman's XC100E5C is a far better choice for this type of workload in comparison to Intel's DC P3700.

Final Thoughts

Techman is looking to penetrate the enterprise SSD market, and we believe they are off to a good start with their XC100 series PCIe NVMe SSDs. We are pleasantly surprised that Techman's XC100E5C is capable of outperforming Samsung's potent XS1715 NVMe PCIe SSD in most of our testing. Techman's engineering team has been able to take the blank slate that is Microsemi's Princeton NVMe controller and design an SSD with performance characteristics that are most desirable for the datacenter ecosystem.

Comparing Techman's XC100E5C with Intel's DC P3700 reveals that the XC100E5C is better suited for read-centric workloads than the DC P3700. This is what we would expect to see. The XC100E5C possesses significantly better sequential read performance, the P3700 significantly better sequential write performance. This carried over into our mixed workload testing where we saw the P3700 deliver better performance with mixed workloads that involve large doses of random writes mixed in with random reads. Looking at our web server testing which is 100% random read, we see that the XC100E5C possesses a distinct advantage over Intel's P3700 and even a slight advantage over Samsung's XS1715 at queue depths of 128-256.

We were blown away by the performance we got from a two-drive Techman XC100E5C array. Compared to Intel's monster DC P3608, our two-drive XC100E5C array delivered vastly superior performance. The array scaled exceptionally well, with the lone exception of pure 4K random reads at QD128-256. Aside from that lone exception, the array delivered 97% to 128% more performance at QD256 in comparison to a single XC100E5C.

Overall, Techman's XC100E5C performs at or above expectation and has robust data protection features, a high endurance rating, and a rich enterprise-class feature set. Optional AES 256-bit encryption assures data security. Techman's XC100E5C possesses everything required for a superior datacenter SSD. Techman's XC100E5C 1.6TB NVMe HHHL AIC is TweakTown recommended.

Pros:

- Available as HHHL AIC and U.2 versions

- Sequential Read/Write

- Data Protection Scheme

Cons:

- Outlying IOs