The insatiable demand for AI GPUs and all things AI throughout 2023 has handed memory makers a gigantic 500% spike in the average selling price of HBM chips. Not bad at all for HBM makers Micron, Samsung, and SK hynix.

HBM chips are one of the most important parts of an AI GPU, with AMD and NVIDIA both using bleeding-edge HBM on their respective AI GPUs. Market research firm Yole Group has now chimed in, predicting that HBM supply will grow at a compound annual rate of 45% from 2023 to 2028, with HBM prices expected to remain high "for some time," given how hard it is to scale up HBM production to keep pace with the crazy demand.
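To put that 45% compound annual rate in perspective, here is a minimal sketch of the arithmetic. It assumes the rate compounds once per year over the five years from 2023 to 2028; the function name `compound_growth` is purely illustrative and not something from Yole Group's report.

```python
def compound_growth(rate: float, years: int) -> float:
    """Return the total growth multiple after compounding `rate` annually."""
    return (1 + rate) ** years


# Assumption: five compounding periods between 2023 and 2028.
years = 2028 - 2023
multiple = compound_growth(0.45, years)

print(f"HBM supply in 2028 vs 2023: about {multiple:.2f}x")
# prints "HBM supply in 2028 vs 2023: about 6.41x"
```

In other words, if the forecast holds, HBM supply in 2028 would be a little over six times what it was in 2023.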

Samsung and SK hynix are the dominant players in the HBM business; between them, the two South Korean companies hold 90% of the HBM market, leaving Micron with the scraps. SK hynix and TSMC (Taiwan Semiconductor Manufacturing Company) are teaming up, so expect some interesting developments as the months (and years) go on.

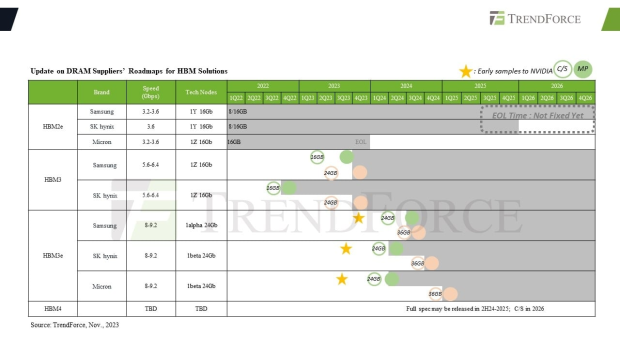

SK hynix recently teased that its next-gen HBM4 memory will be in mass production in 2026, ready for next-next-gen AI GPUs beyond NVIDIA's upcoming Blackwell B100 AI GPU. HBM development isn't slowing down: as a key part of AI GPUs, it's not just faster HBM that AI GPU makers want (and need), it's far more HBM on board.

NVIDIA's upcoming H200 AI GPU will have up to 141GB of HBM3e memory on board, while the H100 has 80GB of HBM3 memory. AMD's new Instinct MI300X AI GPU has 192GB of HBM3 memory on board, leaving NVIDIA with the faster HBM3e but AMD with more VRAM (192GB versus 141GB).

HBM4 is going to be a huge leap for whichever company adopts it (I don't see how NVIDIA wouldn't be using it for the AI GPU after Blackwell), and it's going to be nuts.

SK hynix teased its HBM4 memory late last year, saying development would kick off in 2024, with GSM team leader Kim Wang-soo explaining: "With mass production and sales of HBM3E planned for next year, our market dominance will be maximized once again. As development of HBM4, the follow-up product, is also scheduled to begin in earnest, SK Hynix's HBM will enter a new phase next year. It will be a year where we celebrate."

We should see the first HBM4 memory samples with 36GB per stack, allowing for up to a huge 288GB of HBM4 memory on a truly next-gen AI GPU. NVIDIA's next-gen Blackwell B100 AI GPU will be using the latest HBM3e memory, so the next-next-gen architecture, codenamed Vera Rubin, should feature HBM4 memory. We should also expect some upgraded Blackwell AI GPUs to use HBM4 down the track.
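The 288GB figure follows from simple stack arithmetic. As a rough sketch, the snippet below works out how many 36GB stacks that total implies. The stack count is not stated outright above, so treat it as an inference from the two numbers, and the helper name `stacks_for_capacity` is hypothetical.

```python
def stacks_for_capacity(total_gb: int, gb_per_stack: int) -> int:
    """Return how many equal-sized HBM stacks add up to `total_gb`."""
    stacks, remainder = divmod(total_gb, gb_per_stack)
    if remainder:
        raise ValueError(
            f"{total_gb}GB is not a whole multiple of {gb_per_stack}GB stacks"
        )
    return stacks


# Figures quoted in the article: 36GB per HBM4 stack, up to 288GB per GPU.
HBM4_STACK_GB = 36
HBM4_TOTAL_GB = 288

print(stacks_for_capacity(HBM4_TOTAL_GB, HBM4_STACK_GB))
# prints "8"
```

So the 288GB ceiling would correspond to eight 36GB HBM4 stacks on a single AI GPU, assuming every stack is the same size.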

NVIDIA is refreshing its HBM3-based H100 AI GPU with the beefed-up H200 AI GPU, which uses the latest HBM3e memory standard, so something similar could happen with Blackwell: a refresh that moves from HBM3e up to HBM4, or possibly to a faster HBM3e variant planned for the years to come.