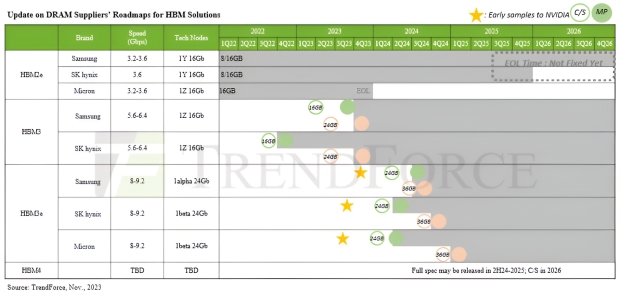

NVIDIA is set to soak up most of the HBM3, HBM3e, and future-gen HBM4 memory supply for its current and next-gen AI GPUs over the next few years, according to a new report from TrendForce.

TrendForce's latest research into the HBM market sees NVIDIA planning to diversify its HBM suppliers for "more robust and efficient supply chain management". TrendForce sees NVIDIA buying most of its HBM memory from Samsung because it will be ready first, while SK hynix and Micron will have their new HBM memory ready in the second half of 2024.

Samsung's new HBM3 (24GB) is expected to complete verification with NVIDIA in the coming weeks. HBM3e memory in 8-Hi stacks (24GB) arrives in stages: Micron's will reach NVIDIA by the end of July 2024 and SK hynix's in mid-August 2024, while Samsung will be last, delivering its HBM3e memory to NVIDIA in October 2024.

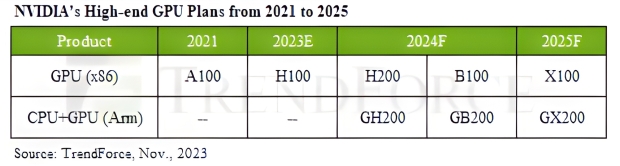

NVIDIA's current-gen H100 AI GPU uses HBM3 memory, while the just-announced H200 and the upcoming B100 AI GPUs, due in 2024, use HBM3e memory. NVIDIA has also teased its next-next-gen X100 AI GPU on datacenter roadmaps for 2025. NVIDIA is tapping Micron's new HBM3e memory for its beefed-up H200, with up to 141GB of HBM3e memory per GPU.

The AI boom has driven demand for high-speed HBM3, HBM3e, and HBM4 memory for AI GPUs from both AMD and NVIDIA, with AMD's upcoming Instinct MI300X series of AI accelerators featuring up to 192GB of HBM3 memory. TrendForce reiterates that HBM4 memory will launch in 2026 and will mark the first use of a 12nm process for its bottommost logic die (the base die), which will be supplied by foundries.

We can expect HBM4 12-Hi stacks to launch in 2026, with HBM4 16-Hi stacks following a year later in 2027.