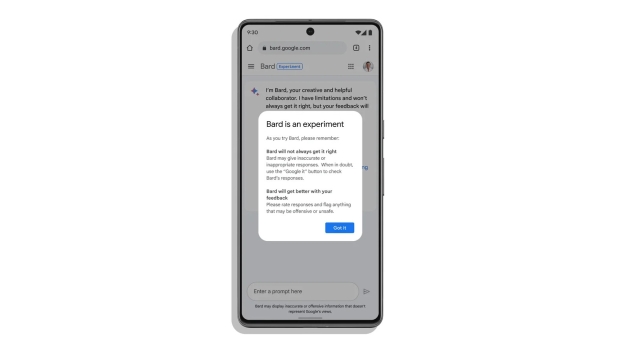

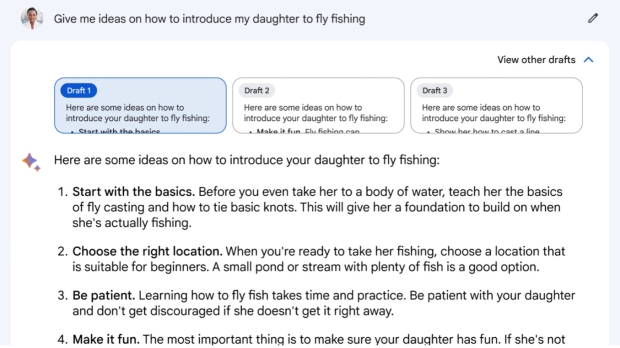

Google staff members weren't too impressed with the state of Bard when asked to test the AI before its launch, and indeed warned the company that the AI wasn't ready to meet its public.

This is according to a Bloomberg report, which relayed information from some 18 current and former employees of Google, as well as from internal documents. The overall vibe is of stark warnings that Bard needed more polish.

Bard was labeled 'cringeworthy' and a 'pathological liar', and was also called 'worse than useless' in an internal message to thousands of staffers, many of whom agreed. The 'worse than useless' comment was followed by a plea not to launch Bard, we're told.

Among the problems cited were inaccurate answers, some of them dangerous, such as advice on scuba diving that would "likely result in serious injury or death."

More worryingly, the report further claims that Google ignored an internal risk evaluation that concluded Bard wasn't ready for release.

Furthermore, we're informed that staff concerned with the ethics and safety of new products at Google have been told to keep their noses out when it comes to generative AI tools.

It's unsurprising to hear that staff purportedly had these fears, given that the launch of Bard did misfire, in what was clearly a rush to release.

The problem for Google is that it was caught off guard by the launch of the ChatGPT-powered Bing AI, which even in its early days swiftly started boosting traffic to the Bing search site. So, Google clearly felt it needed to catch up quickly, or risk being perceived as falling behind Microsoft. The danger is that the launch blunders have arguably done more PR damage than that perception would have.

Google is hoping to recover, though, and appears to be throwing a whole lot of extra resources behind the Bard AI (at the cost of Google Assistant).

What's worrying, though, is the reported line of thinking regarding the ethics team, which has been told to stay away from Bard. Microsoft is guilty of this attitude too, and even more so, in fact. As you may recall, Microsoft actually canned its ethics team within the AI group last month.

The trouble is that long-term thinking about ethics and responsibility concerning AI development doesn't exactly marry up with the heated Bing versus Bard AI arms race currently playing out. But hey, what could possibly go wrong if, at a foundational level with AI, the proper safeguards aren't observed...

This is where the open letter cautioning about the potential future threat AI could represent to society comes in. You know, the one which Elon Musk signed before announcing his take on AI: TruthGPT.