NVIDIA's Audio2Face technology is part of its push to create lifelike 3D avatars powered by generative AI. Within that pipeline, Audio2Face generates real-time facial animation for digital characters, which can be used in games or even as customer service agents. You can see it in action in the video below.

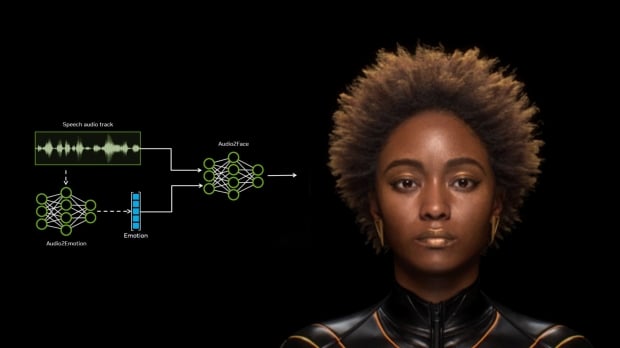

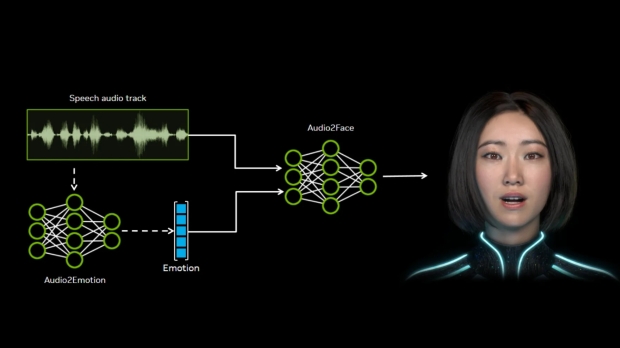

Audio2Face takes a speech audio track and generates facial animation by analyzing its speech patterns and sounds, as well as its emotion and intonation. The latter is crucial because it separates Audio2Face from simple lip-syncing: the animation attempts to capture and visualize the emotional intent and detail in the speech.
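To make the audio-in, animation-out idea concrete, here is a minimal toy sketch of the data flow. It is not NVIDIA's model or the Audio2Face SDK API: the function names, the `jawOpen` and `browRaise` weight names, and the `emotion_gain` parameter are all hypothetical, and a simple loudness measure stands in for the neural network that Audio2Face actually uses.

```python
import math

def frame_rms(samples, frame_size):
    """Split samples into fixed-size frames and return the RMS loudness of each."""
    frames = [samples[i:i + frame_size] for i in range(0, len(samples), frame_size)]
    return [math.sqrt(sum(s * s for s in f) / len(f)) for f in frames if f]

def audio_to_weights(samples, frame_size=4, emotion_gain=1.0):
    """Map each audio frame to hypothetical facial weights in [0, 1].

    Loudness drives the jaw; emotion_gain is an illustrative stand-in for
    the emotional intensity a real model would infer from the audio.
    """
    weights = []
    for rms in frame_rms(samples, frame_size):
        jaw = min(1.0, rms)                        # louder audio opens the jaw wider
        brow = min(1.0, jaw * emotion_gain * 0.5)  # emotion scales the brow movement
        weights.append({"jawOpen": jaw, "browRaise": brow})
    return weights

# One silent frame followed by one frame of "speech" (samples in [-1, 1]).
silence = [0.0] * 4
speech = [0.5, -0.5, 0.5, -0.5]
animation = audio_to_weights(silence + speech, frame_size=4, emotion_gain=1.5)
```

Each entry in `animation` is one frame of facial weights that a renderer could apply to a character rig. The real system differs in that it learns this mapping from data rather than using a hand-written rule.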

For game developers, Audio2Face could become an invaluable tool for animating large numbers of digital characters, shortening development time and opening the door to a new level of fidelity for smaller teams. To that end, NVIDIA has announced that it is open-sourcing the Audio2Face models and SDK so that "every game and 3D application developer can build and deploy high-fidelity characters with cutting-edge animations."

Additionally, NVIDIA is open-sourcing the training framework for Audio2Face, allowing developers to fine-tune and customize the models for specific use cases. For example, a fantasy or cartoon-style version could exaggerate facial animation to suit a more vibrant tone. The Audio2Face SDK also includes plug-ins for popular 3D animation app Autodesk Maya, as well as a plug-in for Unreal Engine 5.

"Open sourcing technology allows developers, students, and researchers to learn from and build upon state-of-the-art code," NVIDIA's Ike Nnoli writes. "This creates a feedback loop where the community can add new features and optimize the technology for diverse use cases. We're excited to make high-quality facial animation more accessible and can't wait to see what the community creates with it."

In the gaming world, Audio2Face is already used by a range of developers, including Convai, Codemasters, GSC Game World, Inworld AI, NetEase, Reallusion, Perfect World Games, Streamlabs, and UneeQ Digital Humans. High-profile titles that use it include F1 25, Alien: Rogue Incursion Evolved Edition, and Chernobylite 2: Exclusion Zone.