Micron has announced that it has started shipping its new HBM4 36GB 12-Hi memory to "multiple key customers", with NVIDIA and its next-gen Rubin R100 AI GPU likely to be first in line.

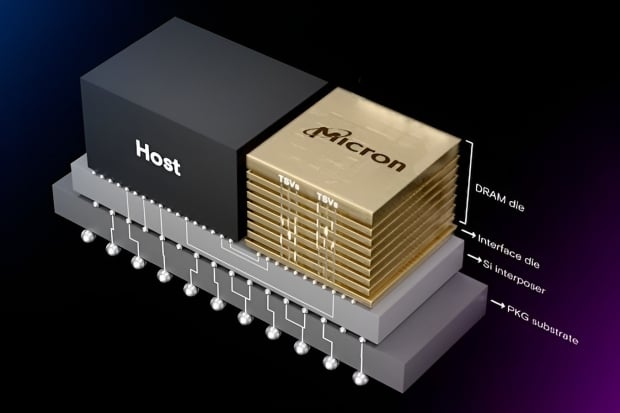

In a press release, the US-based memory maker said it's now shipping HBM4 36GB 12-Hi memory to select customers, built on its well-established 1b DRAM process node, 12-high advanced packaging technology, and highly capable memory built-in self-test (MBIST) feature. Micron says its new HBM4 provides seamless integration for customers and partners developing next-generation AI platforms.

Micron's new HBM4 has a 2048-bit memory interface that delivers more than 2.0TB/sec per memory stack, over 60% more performance than its previous-generation memory. That extra bandwidth is aimed squarely at AI, where HBM4 will sit alongside AI GPUs inside servers.
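For a rough sense of what those figures imply, peak bandwidth per stack is simply the interface width multiplied by the per-pin data rate. The sketch below works that arithmetic in both directions. Micron's announcement does not quote a per-pin rate here, so the 8Gb/s input is an illustrative assumption, and the function names are hypothetical.

```python
def stack_bandwidth_tb_per_s(interface_bits: int, pin_rate_gbps: float) -> float:
    """Peak bandwidth of one memory stack in TB/s (decimal units)."""
    # bits per second across all pins -> bytes (divide by 8) -> GB/s to TB/s (divide by 1000)
    return interface_bits * pin_rate_gbps / 8 / 1000


def implied_pin_rate_gbps(interface_bits: int, bandwidth_tb_per_s: float) -> float:
    """Per-pin data rate in Gb/s needed to reach a given per-stack bandwidth."""
    return bandwidth_tb_per_s * 1000 * 8 / interface_bits


# A 2048-bit interface needs this per-pin rate to reach 2.0TB/sec per stack.
print(implied_pin_rate_gbps(2048, 2.0))

# Assumed per-pin rate of 8Gb/s (illustrative, not a Micron figure).
print(stack_bandwidth_tb_per_s(2048, 8.0))
```

In other words, "more than 2.0TB/sec" over 2048 bits works out to roughly 7.8Gb/s or more per pin, which is a derived figure rather than one taken from the press release.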

Micron says its new HBM4 memory is over 20% more power-efficient than its HBM3E memory, an improvement meant to deliver maximum throughput at the lowest power consumption and so maximize data center efficiency.

Raj Narasimhan, senior vice president and general manager of Micron's Cloud Memory Business Unit, explains: "Micron HBM4's performance, higher bandwidth and industry-leading power efficiency are a testament to our memory technology and product leadership. Building on the remarkable milestones achieved with our HBM3E deployment, we continue to drive innovation with HBM4 and our robust portfolio of AI memory and storage solutions. Our HBM4 production milestones are aligned with our customers' next-generation AI platform readiness to ensure seamless integration and volume ramp."