Introduction, Specifications, and Pricing

Seagate's hard disk drive division has been on a winning streak for the last several years. Capacity has ramped up faster than the competition since the 8TB models came to market. Around the same time, the company took a bold step in the feature category by synchronizing platter rotation to 7,200 RPM across the NAS-optimized product line (starting with the 6TB IronWolf). A strong roadmap featuring dual independent actuator arms and even lasers mounted to heads that heat the platters prior to writing data shows Seagate doesn't plan to slow innovation.

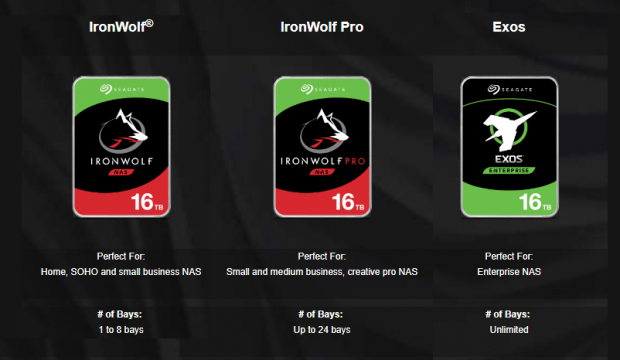

Before images of Austin Powers' laser sharks start dancing in our heads, we get to talk about the current product lines, which just expanded to 16TB. Seagate's three NAS-optimized series, IronWolf, IronWolf Pro, and Exos X, now ship in the new capacity. Other than the label, the three series look identical, and oftentimes pricing is similar. Today we will look at what differentiates the three and then see each in action in its native environment, over a network.

Specifications

There are many data points to go over in the specifications that explain why Seagate offers three NAS-optimized models. We will be starting with the IronWolf and IronWolf Pro from the Guardian Series of consumer and prosumer products.

The IronWolf design supports systems with up to eight drive bays, and the IronWolf Pro supports up to twenty-four (recently upgraded from sixteen). The number has more to do with the amount of vibration from the disks in the NAS than with performance. The more drives you add to the system, or even at the rack level, the more each drive has to compensate for the vibration. Our testing today will show the optimizations are likely not what you think.

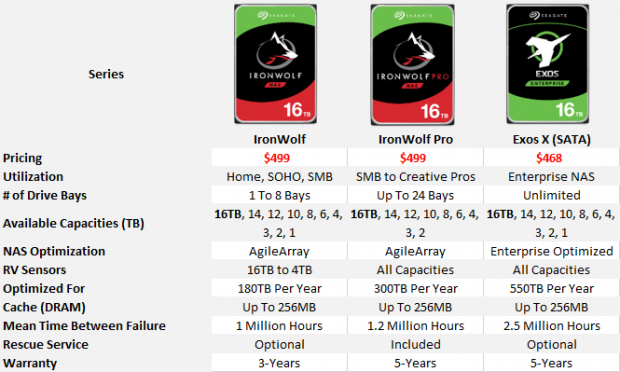

The 1TB to 4TB IronWolf models use 5,900-RPM platter rotation, but the 6TB and larger capacities shifted to 7,200 RPM, just like all capacities of the IronWolf Pro series. The IronWolf base model competes head to head with Western Digital's Red (base series), which still uses a 5,400-RPM spindle speed. The IronWolf Pro is the direct competitor to the Red Pro series with 7,200-RPM speed. The advantage becomes very clear in the user experience and performance.

There are other differences between the IronWolf and IronWolf Pro. The more consumer-focused IronWolf carries a 180-terabyte write rating per year, has an MTBF of 1 million hours, and comes with a shorter warranty that we will look at further down the page. The Pro model increases endurance to 300 terabytes per year and bumps the MTBF up to 1.2 million hours.

The Seagate Exos X is another animal altogether. This series comes from Seagate's enterprise product line designed for hyperscale and cloud datacenters. It ships in both SATA (6Gbps) and SAS (12Gbps) interfaces but expect to pay more for the SAS model. The series supports an unlimited number of drives in a system and is what you want to use when deploying racks of servers full of disks. The Exos X uses different firmware optimization to support the increased vibration, has industry-leading 550-terabyte endurance and a massive 2.5 million hour MTBF.
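To put the MTBF and endurance figures above in more familiar terms, here is a minimal Python sketch that converts them into an approximate annualized failure rate and a daily workload budget. It rests on several assumptions that are ours and not Seagate's: a constant failure rate (the simple exponential model), drives powered on all 8,760 hours of the year, and the Exos X 550-terabyte figure being a per-year rating like the two IronWolf numbers. The function and dictionary names are hypothetical.

```python
import math

HOURS_PER_YEAR = 8760  # assumption: the drive is powered on around the clock

# Figures quoted in the review text. The Exos X rating is ASSUMED to be
# per year, on the same basis as the two IronWolf figures.
SPECS = {
    "IronWolf": {"mtbf_hours": 1_000_000, "rated_tb_per_year": 180},
    "IronWolf Pro": {"mtbf_hours": 1_200_000, "rated_tb_per_year": 300},
    "Exos X": {"mtbf_hours": 2_500_000, "rated_tb_per_year": 550},
}


def annualized_failure_rate(mtbf_hours: float) -> float:
    """Approximate AFR under a constant-failure-rate (exponential) model."""
    return 1.0 - math.exp(-HOURS_PER_YEAR / mtbf_hours)


def rated_tb_per_day(rated_tb_per_year: float) -> float:
    """Spread a yearly rating evenly across 365 days."""
    return rated_tb_per_year / 365


for name, spec in SPECS.items():
    afr = annualized_failure_rate(spec["mtbf_hours"])
    daily = rated_tb_per_day(spec["rated_tb_per_year"])
    print(f"{name}: AFR about {afr:.2%}, about {daily:.2f} TB per day")
```

Treat the output as a rough way to compare the three series on paper, not as a prediction of real-world failure rates.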

Performance

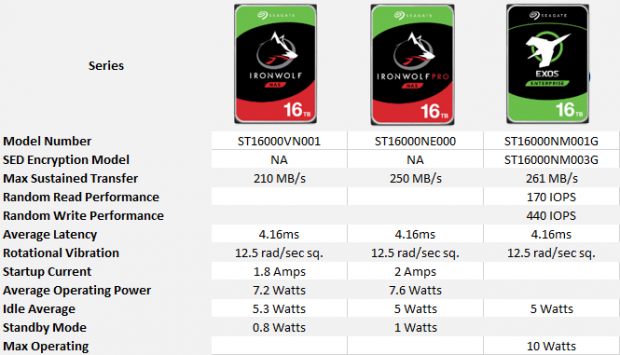

Seagate doesn't publish every specification in public datasheets, so we had to piece a chart together from the available data. On paper, the IronWolf Pro outperforms the base IronWolf series in sequential transfers by 40 MB/s. In previous reviews, we've found the base IronWolf often outperforms the IronWolf Pro in smaller deployments where the Pro's additional vibration optimization doesn't play as strong a role. The Exos X leads the sequential performance category with up to 261 MB/s. This model is the only one of the three with published random performance, with numbers reaching up to 170 IOPS read and 440 IOPS write. Neither IronWolf series has published random performance data.
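For a sense of scale, the quoted sequential ceiling can be turned into a best-case time for one full pass over a 16TB drive. The short Python sketch below assumes decimal units (as drives are marketed) and a transfer that holds the peak 261 MB/s from the first sector to the last, which real drives do not do because throughput falls toward the inner tracks. The function name is hypothetical.

```python
def full_pass_hours(capacity_tb: float, throughput_mb_s: float) -> float:
    """Best-case hours to read or write an entire drive sequentially."""
    capacity_bytes = capacity_tb * 1e12       # decimal terabytes
    bytes_per_second = throughput_mb_s * 1e6  # decimal megabytes per second
    return capacity_bytes / bytes_per_second / 3600


# Exos X 16TB at its quoted peak sequential rate.
print(f"{full_pass_hours(16, 261):.1f} hours")
```

The result is a lower bound, so anything that touches every sector, such as an array rebuild, should be expected to take longer.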

Pricing, Warranty and Rescue

Things start to get jittery when it comes to pricing. We looked at Newegg and Amazon for pricing guidance. Newegg had the best prices at the time of writing. Normally we expect to see a cadence with IronWolf on the low-end, IronWolf Pro in the middle and Exos X at the top with the highest cost per drive. As you can see, that is not the case right now.

Newegg has both the IronWolf 16TB and IronWolf Pro 16TB at $499 right now. We usually see around a $100 difference between the new base and Pro IronWolf models during a product launch. We rarely get to observe Exos X pricing alongside them because the release cadence doesn't always line up.

The 16TB Exos X currently undercuts both the IronWolf Pro and IronWolf at Newegg with a $468 price per drive. The price is for the SATA model (ST16000NM001G). The SED encryption SATA model (ST16000NM003G) bumps the price per drive up to $586, and the 12Gbps SAS model (ST16000NM002G) sells for $479.

For comparison, the WD Red 12TB (5,400 RPM and the largest available at the time of writing) sells for $349.99, and the Red Pro 12TB (7,200 RPM) sells for $429.99. HGST, a Western Digital company, ships a 14TB model of the Ultrastar DC HC530 for $549.99. All prices are taken from Newegg at the time of writing.
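Because the drives above come in different capacities, cost per terabyte is the fairer comparison. The Python sketch below ranks the Newegg prices quoted in this section by dollars per terabyte. The prices are copied from the text, while the list layout and function names are our own illustration.

```python
# (label, capacity in TB, price in USD), as quoted from Newegg in the text.
LISTINGS = [
    ("Seagate IronWolf 16TB", 16, 499.00),
    ("Seagate IronWolf Pro 16TB", 16, 499.00),
    ("Seagate Exos X 16TB SATA", 16, 468.00),
    ("Seagate Exos X 16TB SATA SED", 16, 586.00),
    ("Seagate Exos X 16TB SAS", 16, 479.00),
    ("WD Red 12TB", 12, 349.99),
    ("WD Red Pro 12TB", 12, 429.99),
    ("HGST Ultrastar DC HC530 14TB", 14, 549.99),
]


def price_per_tb(capacity_tb: float, price_usd: float) -> float:
    """Dollars per decimal terabyte."""
    return price_usd / capacity_tb


def ranked(listings):
    """Return the listings sorted from cheapest to most expensive per TB."""
    return sorted(listings, key=lambda item: price_per_tb(item[1], item[2]))


for label, capacity_tb, price_usd in ranked(LISTINGS):
    print(f"{label}: ${price_per_tb(capacity_tb, price_usd):.2f} per TB")
```

On these numbers the SATA Exos X lands within a few cents per terabyte of the much slower WD Red 12TB, which shows how unusual the current pricing is.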

Seagate worked with every major NAS vendor to implement an in-NAS health monitoring feature called IronWolf Health Management (IHM). The feature goes way beyond the typical SMART reporting. It's more like ESP, with the system monitoring hundreds of parameters, analyzing the data, and recommending replacements before tragedy strikes. Asustor, QNAP, QSAN, Synology, TerraMaster, and Thecus support IronWolf Health Management.

When you register your IronWolf or IronWolf Pro, you trigger Seagate's Rescue Data Recovery Service. This is a free feature for the IronWolf Pro series for two years and an optional add-on for IronWolf and Exos X.

A Closer Look

On the outside, all three series look identical other than the label so we won't bore you with repetitive pictures.

You will notice there is very little room for the circuit board on the bottom of the drive. To reach 16TB, Seagate increased the number of platters inside the drive. The 16TB drives use nine conventional magnetic recording (CMR) platters. This pushes the need for slower shingled magnetic recording (SMR) back at least a generation.

Beginning with the 14TB capacity, Seagate moved to a new chassis for the high-capacity drives that differs from the 10TB and 12TB models. The easiest way to spot the difference is the black paint on the bottom of the larger drives. The 10TB and 12TB used all-silver enclosures.

We can get back to the lasers again. Seagate laser welds the two pieces together to form a perfect seal that ensures the helium stays inside.

CIFS Performance Testing

Testing Notes

Today we get to use our QSAN XCubeNAS XN8012R for the first time outside of its feature review. This is my system of choice for testing hard disk drives, solid state drives, and advanced cache products designed for use in storage servers.

The system connects to our Supermicro SSE-X3348TR switch with both 10GbE and 40GbE connectivity. The workload comes from a modified Quanta (QCT) MESOS CB220 server.

In the NAS, we use eight drives from each series in a RAID 6 array without an SSD cache. The QSAN XN8012R uses the ZFS file system and a 10-gigabit Ethernet connection to the network.
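As a rough guide to what this configuration yields, the Python sketch below estimates the usable capacity of an eight-drive RAID 6 array of 16TB disks and the raw ceiling of a 10-gigabit link. It assumes two drives' worth of parity and ignores ZFS metadata, padding, and network protocol overhead, so real figures will be lower. The function names are hypothetical.

```python
def raid6_usable_tb(drive_count: int, drive_tb: float) -> float:
    """Usable decimal terabytes with two drives' worth of parity."""
    if drive_count < 4:
        raise ValueError("RAID 6 needs at least four drives")
    return (drive_count - 2) * drive_tb


def tb_to_tib(terabytes: float) -> float:
    """Convert decimal terabytes to binary tebibytes, as many OSes report."""
    return terabytes * 1e12 / 2**40


def link_ceiling_mb_s(gigabits_per_second: float) -> float:
    """Raw line rate in decimal MB/s, before any protocol overhead."""
    return gigabits_per_second * 1000 / 8


usable = raid6_usable_tb(8, 16)
print(f"Usable: {usable:.0f} TB, about {tb_to_tib(usable):.1f} TiB")
print(f"10GbE ceiling: {link_ceiling_mb_s(10):.0f} MB/s")
```

The link ceiling is worth keeping in mind when reading the sequential charts. Six data drives at the Exos X's quoted 261 MB/s peak would add up to more than a single 10GbE connection can carry, so the network may be one reason the three arrays post similar sequential numbers.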

Sequential Read Performance

Hard disk drives deliver inconsistent performance under heavy workloads compared to enterprise solid state drives. The sequential read charts show us that as we pull data from the arrays with increasing intensity. Each dot on the chart represents an IO.

The two IronWolf Series drives outperform the Exos X slightly in the sequential read test, but there are plenty of outliers with all three series.

Sequential Write Performance

Even though we don't have flash sitting in front of the arrays today, we still show the preconditioning and steady-state charts that will allow you to compare these three products to other products and array types later.

In the sequential write test, we see very similar performance between the three arrays. The IronWolf Pro and Exos X show a distinct improvement over the base IronWolf at 4 OIO, though.

Sequential Mixed Workloads

The mixed workload and 70% read charts show us more of the performance inconsistency at very high queue depths. These are worst-case numbers for HDDs since the heavy workloads compound latency between each IO.

Random Read Performance

We start to see significant performance variation in the random workloads. The first thing you will notice is the Exos walking away from the two IronWolf models, in some workloads doubling and tripling random read performance. Less obvious is the IronWolf outperforming the IronWolf Pro. For many, this is unexpected, but we've noticed the IronWolf performing better in some workloads over the years with other capacities.

The IronWolf Pro uses optimizations to ensure steady and reliable performance in environments with more vibration. Slightly reducing performance in some key areas allows that to happen. If we tested the IronWolf and IronWolf Pro in a rack full of storage systems or even with 24 drives in a server, the IronWolf Pro would perform better than the base model.

Random Write Performance

We see similar findings in the random write tests. The Exos quickly surpasses the IronWolf models and becomes the real value leader for random workloads. The base IronWolf slightly overtakes the IronWolf Pro in our eight-drive array, but if we used a larger array, the two would reverse with the Pro extending the lead with each drive we add to the system.

Random Mixed Workloads

Random 4KB mixed workloads, and the 70% read test, give us a good indication of virtualized desktops running off network storage. This, as well as database and miscellaneous cloud storage, is where the Exos X stands tall. The two IronWolf products still perform well for their respective markets. Most IronWolf drives simply fall into mass storage roles, holding cold data for end users, be they consumers, creators, or businesses.

Server Workloads

Database

The synthetic benchmark results from the previous page carry over to many of the server workload tests with the HDDs we have on the charts today.

The Exos X doubles the IOPS at every OIO level compared to the two IronWolf series. This doesn't come as a surprise since this is the series' home turf.

OLTP

The OLTP charts are a nearly identical image of the database test, with the Exos standing strong in this heavily randomized workload.

The tables start to turn in the email charts as the workload falls closer to the conditions where the two IronWolf Series thrive.

Archival File Server

The archive test is my favorite because it uses a very wide range of reads, writes, sequential, and random datasets. The test comes from Dell's performance lab and resembles the load from a large office with several users working from the NAS. It's also similar to my own NAS workload, although my family doesn't hit the system with the same intensity.

Web Server

The web server test shows similar performance between the three sets of drives tested today. The Exos X shows a little more inconsistency compared to the two IronWolf series in preconditioning with a heavy workload. This is likely due to the different cache algorithm.

Final Thoughts

People claim that hard disk drives are dead, but the facts don't show that happening anytime soon. Across April, May, and June 2019, nearly 80 million units shipped, according to TrendFocus, Inc. Seagate led the charge with 40% market share and saw 10% growth from the previous quarter. Seagate, Western Digital, and Toshiba combined to ship nearly 210 exabytes of hard disk drive capacity in just three months. The flash companies combined to produce just 114 exabytes of NAND in Q1 2019. The two coexist now and will continue to do so for a long time.

Hard disk drive placement has changed. Cloud storage continues to rise as end users (consumers, prosumers, and businesses) flock to one-click storage with a low monthly cost. More advanced users with an eye on personal privacy continue to invest in in-house NAS products that hold the data closer to home and provide faster access. The three products we tested today cover all of these areas, and that's likely why Seagate chose to focus the 16TB launch here first rather than on other product lines like SkyHawk and BarraCuda.

If you follow my other IronWolf reviews featuring the smaller capacities, you already know the IronWolf is slightly faster than the IronWolf Pro in smaller array deployments. The new information in this article is how the Exos X series fits in the mix to round out the NAS-optimized trio.

The Exos X delivers superior random performance for cloud and rack-scale deployments but can also benefit smaller install bases. Medium-sized and small businesses can access these drives through the channel and use them to increase performance in virtualized environments, and even different degrees of server workloads.

Current pricing makes the Exos X series more attractive in performance-focused deployments, but you do lose some consumer/prosumer features like two years of free data recovery found on the IronWolf Pro, and advanced disk health monitoring found on both IronWolf and IronWolf Pro series.

The base Seagate IronWolf is still the best value for home and small business users. The series now ships in two capacities not found in the Western Digital Red series, 14TB and 16TB, and has the performance advantage thanks to its higher platter rotational speed.

The IronWolf Pro has the same capacity advantages over the Red Pro, and the dollars per gigabyte value is favorable, as well. The series works better in large disk deployments that exceed the recommended drive count of the base IronWolf. Seagate recently increased the maximum recommended number of drives to twenty-four from sixteen and that increases the series' usefulness for cold storage.

The real issue at this time with the IronWolf Pro is the current pricing pressure from Exos X in large capacities. The Exos X in the 16TB capacity is the lowest price we've seen on a flagship model from the enterprise side. The current pricing may just be a fluke from market waves made from new emerging capacities, but as of right now, I would purchase the Exos X over the IronWolf Pro.