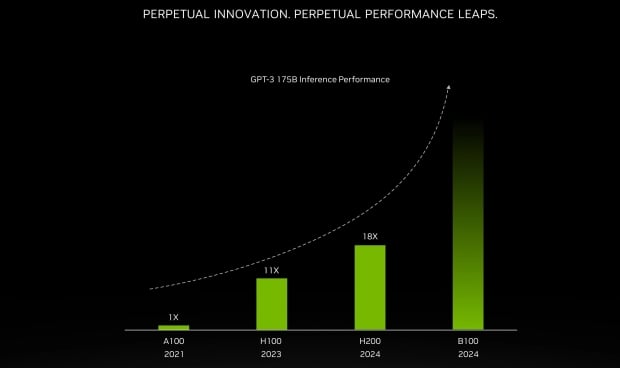

NVIDIA will unveil its next-generation Blackwell GPU architecture at GTC 2024 tomorrow, if you can believe it, detailing its new B100 AI GPU and teasing the beefed-up B200 AI GPU expected in 2025.

In a new post on X, "XpeaGPU" says that NVIDIA's new B100 is truly a monster: two GPU dies on TSMC's latest CoWoS-L (Chip-on-Wafer-on-Substrate-L) 2.5D packaging technology, which allows companies to design and manufacture larger chips. NVIDIA's next-gen B100 will have up to 192GB of ultra-fast HBM3E memory on 8-Hi stacks, while the beefed-up B200 AI GPU will feature a huge 288GB of HBM3E memory.

NVIDIA's current H100 AI GPU ships with 80GB or 141GB of HBM3 memory, while its competitor, the AMD Instinct MI300X, ships with 192GB of HBM3 memory. The release of the B100 AI GPU will see NVIDIA match AMD on HBM capacity, but NVIDIA's new B100 will have the new ultra-fast HBM3E memory and will be the first GPU with HBM3E to market.

NVIDIA will follow up on its B100 AI GPU with the new B200, which will reportedly feature 12-Hi stacks of HBM3E memory, enabling a whopping 288GB of ultra-fast HBM3E memory for the ultimate AI GPU. AMD is also rumored to be planning an upgrade for its Instinct MI300 AI GPU that would move it from the HBM3 it ships with today to HBM3E memory.
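For a rough sense of how the jump from 8-Hi to 12-Hi stacks gets from 192GB to 288GB, here is a back-of-the-envelope sketch. The post does not say how many HBM3E stacks each GPU carries or how big each DRAM die is, so the 8 stacks per GPU and 3GB (24Gb) per die used below are assumptions chosen because they line up with the rumored totals, not confirmed specs. The function name is made up for illustration.

```python
# Back-of-the-envelope HBM capacity math.
# ASSUMPTIONS (not from the article): 8 HBM3E stacks per GPU,
# and 3 GB (24 Gb) per DRAM die in each stack.
GB_PER_DIE = 3
STACKS_PER_GPU = 8


def hbm_capacity_gb(dies_per_stack, stacks=STACKS_PER_GPU, gb_per_die=GB_PER_DIE):
    """Total HBM capacity in GB: dies per stack x GB per die x number of stacks."""
    return dies_per_stack * gb_per_die * stacks


b100_gb = hbm_capacity_gb(8)    # 8-Hi stacks, as rumored for B100
b200_gb = hbm_capacity_gb(12)   # 12-Hi stacks, as rumored for B200

print(f"8-Hi:  {b100_gb}GB")
print(f"12-Hi: {b200_gb}GB")
```

Under those assumptions, taller stacks alone account for the 50% capacity bump, with no change to the number of stacks on the package.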

NVIDIA will host its GTC 2024 keynote with CEO Jensen Huang tomorrow, March 18, from 1pm to 3pm PDT.