NVIDIA CEO Jensen Huang has been doing the rounds in Asia, recently visiting Beijing, where he took in the New Year festivities and met with several of NVIDIA's Chinese clients, including Alibaba and Tencent, to further cement NVIDIA's dominance in AI GPU technology.

Huang then flew to Taiwan, where the NVIDIA founder met with several Taiwanese companies, including TSMC (Taiwan Semiconductor Manufacturing Company) and Wistron, key suppliers that NVIDIA relies on and will need even more for its next-gen AI GPUs. Huang was checking on the mass-production status of the company's new Hopper H200 and Blackwell B100 AI GPUs.

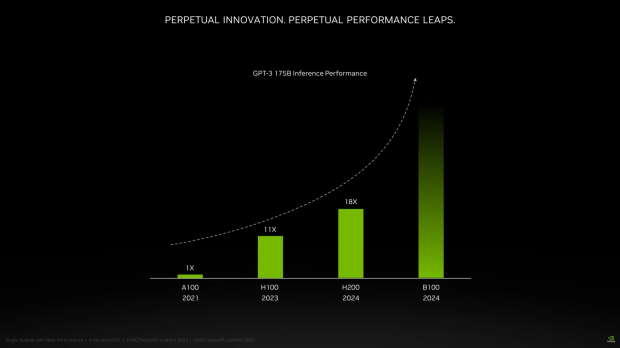

AI has taken over the world, and NVIDIA leads the AI GPU market with a dominant share of 90% or more, although AMD, Intel, and others are fighting for a piece of it. NVIDIA's beefed-up Hopper H200 AI GPU is coming very soon, while its next-gen Blackwell B100 AI GPU hasn't been detailed yet but is due for release this year.

NVIDIA's upcoming Hopper H200 AI GPU will feature Micron's new HBM3e memory, with up to 141GB on board and a huge 4.8TB/sec of memory bandwidth. This additional memory bandwidth will allow NVIDIA to almost double AI inference performance compared to its H100 AI GPU in workloads like Llama 2 (a 70-billion-parameter LLM).
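To put those memory numbers in context, here is a rough back-of-the-envelope sketch in Python. The H200 figures come from the paragraph above; the H100 figures (80GB, 3.35TB/sec) are an assumption taken from NVIDIA's published H100 SXM specifications, not from this article. The per-token estimate uses a simplified model in which every generated token has to read all of the model's weights from memory once, so treat it as a lower bound rather than a benchmark. The helper names are purely illustrative.

```python
# Rough memory-bound comparison of H200 vs. H100 for LLM inference.
# H200 numbers are from the article; H100 numbers are an ASSUMPTION
# based on NVIDIA's public H100 SXM spec sheet.
H200 = {"mem_gb": 141, "bw_tb_s": 4.8}
H100 = {"mem_gb": 80, "bw_tb_s": 3.35}

LLAMA2_70B_PARAMS = 70e9  # 70 billion parameters


def weights_gb(params: float, bytes_per_param: int) -> float:
    """Size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params * bytes_per_param / 1e9


def min_ms_per_token(weight_gb: float, bw_tb_s: float) -> float:
    """Lower bound on decode latency per token in milliseconds,
    assuming each token reads every weight from memory exactly once."""
    seconds = weight_gb / (bw_tb_s * 1000)  # TB/s -> GB/s
    return seconds * 1000


bw_ratio = H200["bw_tb_s"] / H100["bw_tb_s"]
cap_ratio = H200["mem_gb"] / H100["mem_gb"]
print(f"Bandwidth ratio H200/H100: {bw_ratio:.2f}x")
print(f"Capacity ratio  H200/H100: {cap_ratio:.2f}x")

for label, bytes_per_param in (("FP16", 2), ("FP8", 1)):
    size = weights_gb(LLAMA2_70B_PARAMS, bytes_per_param)
    for name, gpu in (("H100", H100), ("H200", H200)):
        fits = size <= gpu["mem_gb"]  # ignores KV cache and activations
        ms = min_ms_per_token(size, gpu["bw_tb_s"])
        print(f"{label} weights {size:.0f}GB on {name}: "
              f"fits on one GPU={fits}, >= {ms:.1f} ms/token")
```

Under these assumptions, raw bandwidth alone accounts for a gain of well under 2x, so the "almost double" figure presumably also leans on the larger memory capacity (for example, fitting bigger batches or a full model on fewer GPUs) and on software optimizations. That last part is an inference, not something the article states.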

Hopper H200 will make up most of NVIDIA's AI GPU shipments this year, while Blackwell B100 will be released later this year and will most likely be detailed at GTC 2024 (GPU Technology Conference) in March, so it won't be long until we know what is going on inside the Blackwell GPU architecture and the B100 AI GPU.

The industry will see not just the new NVIDIA H200 and B100 AI GPUs but also NVIDIA's new AI supercomputer systems, with DGX H200 and DGX GB200 expected to launch this year. AI GPU shipments are expected to surge to between 1.2 million and 1.5 million units this year, and because all of these new GPUs use CoWoS packaging, TSMC's new CoWoS production capacity is in massive demand, too.

NVIDIA's new H200 AI GPU will be the first GPU with HBM3e memory and is expected to ship as early as the second quarter of this year. Werder is a subsidiary of Quanta, a long-term partner of NVIDIA, and will handle the first batches of H200 AI GPUs. Memory manufacturers, including Winbond, Etron, Jinghaoke, and Apple, are all fighting for HBM business opportunities, while GIGABYTE, Quanta, Wistron, Wiwynn, and others are pushing for H200 orders.