Introduction

Note: I'm not advocating buying NVIDIA's new GeForce RTX 3090 Ti graphics card for crypto mining, but I can't stop it. It'll happen, we know it'll happen -- especially since the GA102 GPU is NOT gimped by LHR -- and I have the GPUs here, so I thought I would play around with them in some ETH mining.

NVIDIA's new flagship GeForce RTX 3090 Ti rocks some refreshed GDDR6X from Micron, with the data rate driven up to 21Gbps from the 19.5Gbps GDDR6X on the RTX 3090. We have the same 24GB of GDDR6X -- but Micron is using its latest 2GB (16Gb) modules -- allowing NVIDIA to keep all of the GDDR6X modules on the front of the card.

The GeForce RTX 3090 had its GDDR6X memory modules on both sides, which saw the backplate of the RTX 3090 running ridiculously hot. That's not the case for any of the GeForce RTX 3090 Ti graphics cards, as all of the GDDR6X memory is on the front.

Why is this so important? Well, crypto mining really pushes the VRAM on GPUs... and with the GeForce RTX 3090 Ti enjoying fast 21Gbps GDDR6X that runs cooler and has some overclocking (OC) headroom, we have a monster crypto mining GPU.

It doesn't cook eggs when you're mining, and you're not having to sit there worrying about your super-expensive GPU being ruined in the future with its GDDR6X being pushed to its limits 24/7.

I was able to squeeze a GDDR6X memory overclock of more than +1000MHz out of both the air-cooled MSI GeForce RTX 3090 Ti SUPRIM X and the water-cooled ASUS ROG Strix LC GeForce RTX 3090 Ti OC Edition. But I'll go into more detail on that later on in the article, with some benchmark charts and more data, including GPU + GDDR6X temps, power consumption, and more.

But man... the MSI card kills the ASUS card when it comes to GDDR6X memory overclocking. On the ASUS card, there's just no juice in the tank to put the pedal to the metal, let alone let it unleash on the highway. MSI's custom RTX 3090 Ti SUPRIM X on the other hand... man, it is built to handle a huge GDDR6X memory OC.

I was pushing past 24Gbps...

Still, I wouldn't be buying any GeForce RTX 3090 Ti for crypto mining.

New 16-pin PCIe 5.0 Power Connector

NVIDIA's new GeForce RTX 3090 Ti does one thing very differently from the previous flagship GeForce RTX 3090: where the custom MSI GeForce RTX 3090 SUPRIM X used 3 x 8-pin PCIe power connectors, the new RTX 3090 Ti uses a single 16-pin PCIe power connector.

But... how will you plug your PSU into the new 16-pin PCIe power connector, which you don't have? Well, that's why you'll use a 1 x 16-pin to 3 x 8-pin PCIe power adapter. Your PSU might be able to handle it, but I would suggest looking at upgrading your PSU for the new GeForce RTX 3090 Ti... especially with the RTX 40 series GPUs right around the corner, and man they're going to need all the power they can get.

That's why MSI sent over their new MSI MPG A1000G power supply, pumping 1000W of power with 80 PLUS Gold-certified efficiency. It also looks great, with MSI using design patterns that blend in beautifully with the MSI MPG VELOX 100P AIRFLOW PC case and MSI MPG Z690 CARBON WIFI motherboard, all with tons of power at your disposal.

MSI's new MPG A1000G PSU has a single-railed design, which will deliver clean currents under heavy loads... making it perfect for MSI's new flagship GeForce RTX 3090 Ti SUPRIM X graphics card. MSI's new MPG A1000G PSU is also "future-proof" as it meets the new standards for the upcoming PCIe 5.0 specification... for even higher-wattage GPUs.

I used MSI's new MPG A1000G PSU for all of my GeForce RTX 3090 Ti reviews, starting here with MSI's new flagship GeForce RTX 3090 Ti SUPRIM X. I will also be using the MPG A1000G PSU for NVIDIA's new GeForce RTX 3090 Ti Founders Edition, and the new flagship ASUS ROG Strix LC GeForce RTX 3090 Ti that should be with me any minute now.

RTX 3090 Ti 24GB Tech Specs

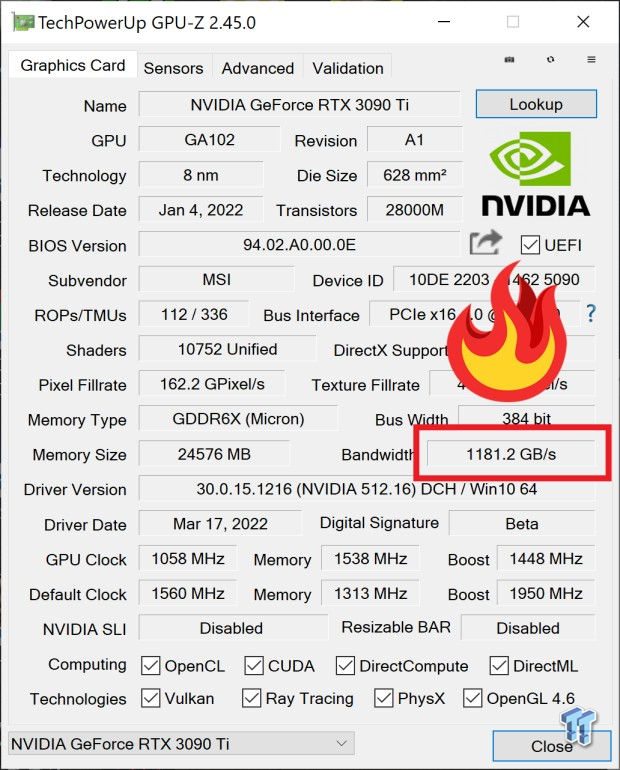

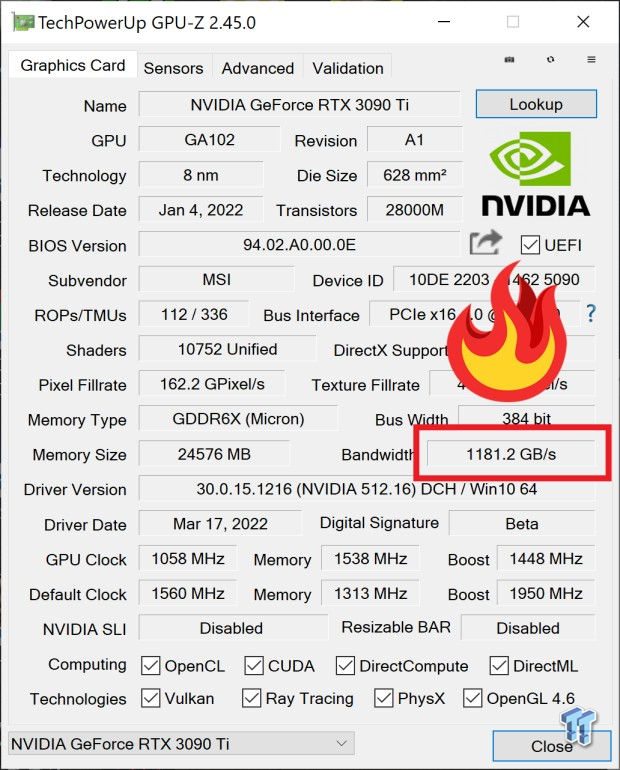

NVIDIA is using the full GA102 on its new GeForce RTX 3090 Ti, with 10,752 CUDA cores compared to the 10,496 CUDA cores on the cut-down GA102 GPU inside the GeForce RTX 3090. There are other tweaks, with 84 RT cores (82 on the RTX 3090) and 336 Texture Units (328 TUs on the RTX 3090).

There's the same 24GB of GDDR6X on the GeForce RTX 3090 Ti as the previous GeForce RTX 3090, on the same 384-bit memory interface, but with a big change: it's clocked at 21Gbps. That provides just over 1TB/sec (1008GB/sec) of memory bandwidth, up from the 936GB/sec on the RTX 3090.
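For anyone who wants to sanity-check those bandwidth numbers, here's a minimal sketch of the usual peak-bandwidth math: per-pin data rate multiplied by the bus width, divided by 8 bits per byte. The function name is my own for illustration, and the result is a theoretical peak, not a measured figure.

```python
def memory_bandwidth_gbs(data_rate_gbps: float, bus_width_bits: int) -> float:
    """Theoretical peak memory bandwidth in GB/sec.

    data_rate_gbps: per-pin data rate (e.g. 21.0 for 21Gbps GDDR6X)
    bus_width_bits: memory interface width (e.g. 384 for a 384-bit bus)
    """
    # Each pin moves data_rate_gbps gigabits per second; divide by 8 for bytes.
    return data_rate_gbps * bus_width_bits / 8


rtx_3090 = memory_bandwidth_gbs(19.5, 384)
rtx_3090_ti = memory_bandwidth_gbs(21.0, 384)

print(f"RTX 3090:    {rtx_3090:.0f} GB/sec")     # 936 GB/sec
print(f"RTX 3090 Ti: {rtx_3090_ti:.0f} GB/sec")  # 1008 GB/sec
```

Plug in an overclocked data rate and the same formula gives the theoretical ceiling for that memory OC.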

However, I've overclocked every custom GeForce RTX 3090 graphics card that I've had -- before, and leading up to, this review -- and pushed the 24GB of GDDR6X past 21Gbps, so I've enjoyed 1TB/sec of memory bandwidth for the last year or more.

Another huge upgrade over the RTX 3090 is that the new RTX 3090 Ti has its spiffy new 16-pin PCIe 5.0-ready power connector with up to 450W available on the RTX 3090 Ti... up from the 350W on the RTX 3090. NVIDIA is making plenty of references to its near 10-year-old TITAN RTX, and sure it kinda is... but it's replacing the RTX 3090, not the Ampere-based TITAN RTX that never materialized.

And if this is the Ampere TITAN RTX... then maybe it should've been called that. I will not be referring to the TITAN RTX (even though I could, as I recently tested it on my Ryzen 9 5900X), because no gamer in their right mind still has one... they'd have a new RTX 30 series GPU so they have RT + DLSS... right?!

Right, NVIDIA?!

Overclocked GDDR6X is the Key

I've got some testing notes that I had typed in here and was going to remove them, but I thought I'd keep them here as a digital scrapbook of the hours I put into the OC adventures with the GDDR6X memory on these custom GeForce RTX 3090 Ti graphics cards.

MSI RTX 3090 Ti SUPRIM X:

- +1346MHz = 11615 = 1138.9GB/sec (1.1TB/sec)

- +1620MHz = 11871 = 1163.6GB/sec

- +1864MHz = 12115 = 1187.3GB/sec

- 124.5MH/s

- up to insane 134MH/s (flexes between 125/126)

- 129.6MH/s stable AF

- rock solid, while ASUS crashing multiple times @ 1337MHz or so on GDDR6X

- -try reduce below 1300

- -OK lol try reduce below 1200, wtf ASUS

ASUS ROG Strix LC RTX 3090 Ti:

- +1175MHz = OK

- +1200MHz = testing (seems OK)

- +1250MHz = testing (124MH/s) -- NOT OK -- POS (visual glitches across the screen)

- +1337 = seems OK = 11587 = 1136.6GB/sec (some slight glitches)

- (not OK -- crashed in the end, whole system reset)

- +1390 = visual glitch instantly

Test System Specs

Anthony's GPU Test System Specifications

The biggest upgrade to the GPU testbed is the AMD Ryzen 9 5900X processor, offering 12 cores and 24 threads of Zen 3-powered CPU grunt at up to 4.8GHz.

That's plenty of CPU power and offers a great upgrade over the Ryzen 7 3800X that I was using previously.

I will be upgrading this system in a few months, and maybe running it side-by-side with the new Alder Lake-powered Intel Core i9-12900K processor. I'm using one inside of the Allied M.O.A.B.-I gaming PC that I reviewed a few months ago, and man the 12900K is like the Godzilla of CPUs.

Sabrent is the most recent partner of mine to help build out my systems, sending me oodles of the fastest NVMe M.2 SSDs on the planet. I'm using Sabrent's flagship Rocket 4 Plus 4TB M.2 SSD, which offers 7GB/sec+ reads and writes with a huge 4TB of capacity.

ASUS has been a tight partner of mine for a few years now, providing their huge 43-inch 4K 120Hz gaming monitors for my benchmarking and gaming needs. I'm using two of them at the moment, the ROG Strix XG438Q and the ROG Swift PG43UQ gaming monitors.

- CPU: AMD Ryzen 9 5900X

- Motherboard: ASUS ROG X570 Crosshair VIII HERO

- Cooler: CoolerMaster MasterLiquid ML360R RGB

- RAM: G.SKILL Trident Z NEO RGB 32GB (4x8GB) (F4-3600C18Q-32GTZN)

- SSD: Sabrent 4TB Rocket NVMe PCIe 4.0 M.2 2280

- PSU: be quiet! Dark Power Pro 11 1200W

- Case: InWin X-Frame 2.0

- OS: Microsoft Windows 11 Pro x64

- Display: ASUS ROG Swift PG43UQ (4K 120Hz)

ETH Mining Benchmark Results

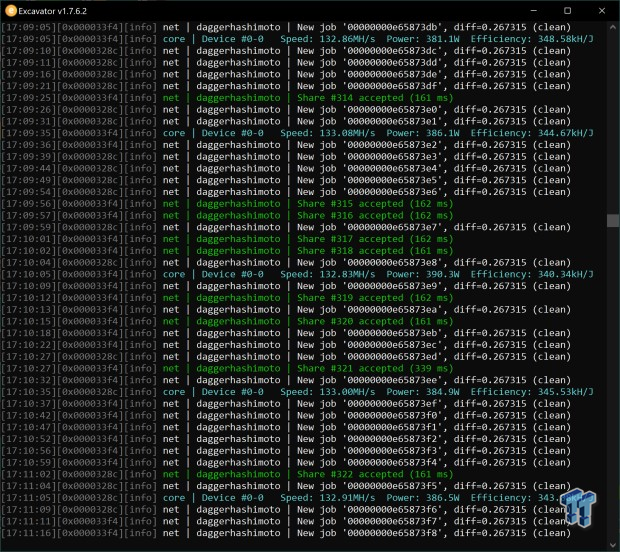

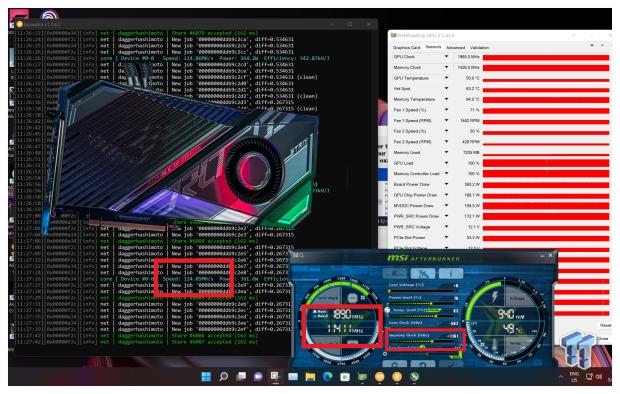

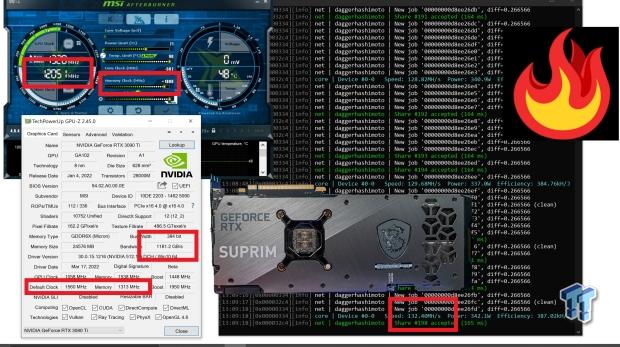

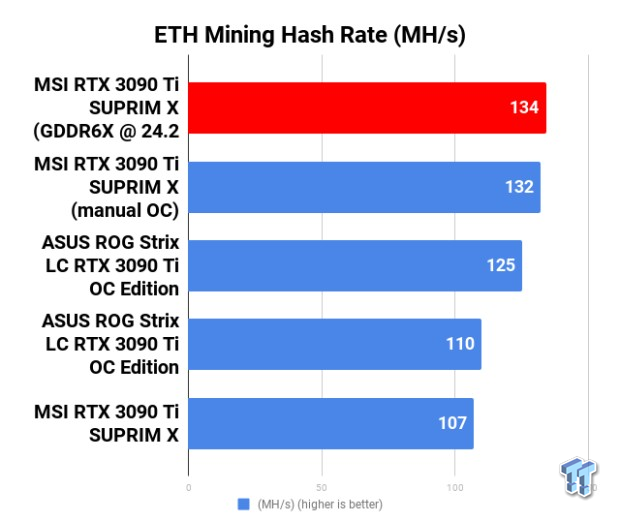

MSI's air-cooled GeForce RTX 3090 Ti SUPRIM X reigns supreme in ETH benchmarking, with an average of 130-132MH/s thanks to its massive GDDR6X memory overclock to 24Gbps. I was topping out at around 134.5MH/s, which was freaking awesome to see, but it sat between 132.5MH/s and 133.2MH/s all day long at a tad over 24Gbps on the GDDR6X memory.

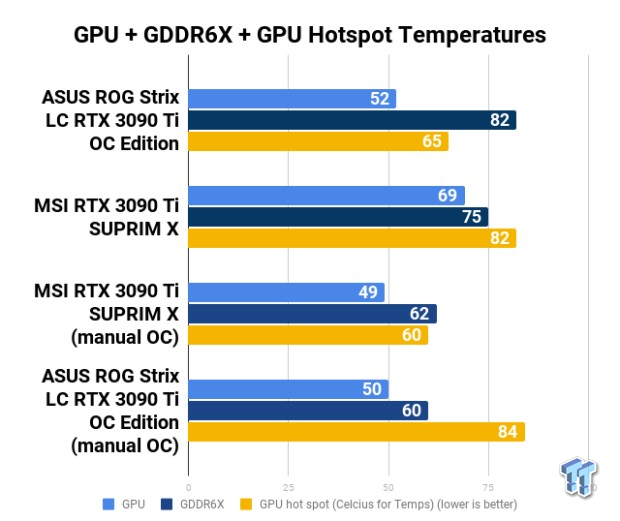

Compare that to the mild GDDR6X overclock on the ASUS ROG Strix LC RTX 3090 Ti OC Edition, which was spitting out around 125-126MH/s max, though with chiller GPU temps (just) thanks to its bulky AIO cooler. If you were outfitting a GPU crypto mining farm, it would be a hard choice between the cards.

On one hand you've got better hash rates on the MSI RTX 3090 Ti SUPRIM X, but the AIO cooler is great on the ASUS ROG Strix LC RTX 3090 Ti. You know what? 20 x each would be nice, or 20 x any RTX 3090 Ti for ETH mining because you're doing 120MH/s easy.

At stock settings, the ASUS outperformed the MSI card with around 110MH/s right out of the box... the MSI card was tapping out at 107MH/s before it gets unleashed with some GDDR6X memory overclocking.

Power & Temps

There's no escaping the huge power draw on the GeForce RTX 3090 Ti, which easily hits 450-500W on its own if you let it. But if you rein in the power limit to somewhere between 65-75%, where the card is still stable, things improve: I was getting the GPU power consumption on the MSI RTX 3090 Ti SUPRIM X down to around 340-345W, and the ASUS ROG Strix LC RTX 3090 Ti OC Edition down to around 360-365W.
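To put those power numbers in perspective, here's a rough sketch of the efficiency math in MH/s per watt. It pairs the low end of the overclocked hash rates quoted elsewhere in this article (128MH/s for the MSI, 125MH/s for the ASUS) with the low end of the power ranges above. That pairing is an assumption for illustration, the function name is made up, and the wattage is GPU power only.

```python
def mh_per_watt(hash_rate_mhs: float, gpu_power_w: float) -> float:
    """Mining efficiency: hash rate (MH/s) per watt of GPU power."""
    return hash_rate_mhs / gpu_power_w


# (hash rate in MH/s, GPU power in W) -- assumed pairings, see note above
cards = {
    "MSI RTX 3090 Ti SUPRIM X": (128.0, 340.0),
    "ASUS ROG Strix LC RTX 3090 Ti OC": (125.0, 360.0),
}

for name, (mhs, watts) in cards.items():
    print(f"{name}: {mh_per_watt(mhs, watts):.3f} MH/s per watt")
```

On those assumed figures the MSI card comes out ahead on efficiency as well as raw hash rate, since it hashes faster while pulling less power.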

Final Thoughts

I've got two custom GeForce RTX 3090 Ti graphics cards here in the GPU lab, the air-cooled MSI GeForce RTX 3090 Ti SUPRIM X and the water-cooled ASUS ROG Strix LC GeForce RTX 3090 Ti OC Edition. You'd think that out of the two of them, the water-cooled ASUS ROG Strix LC (the LC stands for Liquid Cooled) would be better.

Nope, the MSI kicks its ass -- purely from the GDDR6X memory being far more overclockable.

MSI's custom GeForce RTX 3090 Ti SUPRIM X was pushing a +1800MHz memory offset, resulting in a data rate of over 24Gbps on the new GDDR6X -- up from its already ultra-fast 21Gbps. Pushing 128-129MH/s with the air-cooled MSI RTX 3090 Ti SUPRIM X for around 340W of power is impressive AF; that GDDR6X @ 24Gbps+ gives me tingles.

I can see waves of crypto mining farms powered by either the water-cooled ASUS ROG Strix LC RTX 3090 Ti OC Edition or air-cooled MSI RTX 3090 Ti SUPRIM X, with 10 of them pushing 1.2GH/s for around 3400W of power. That's respectable.
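Here's a quick back-of-the-envelope sketch of that farm math, assuming each card holds 128MH/s at around 340W of GPU power. The function name is hypothetical, and the wattage ignores the rest of the system (CPU, fans, PSU losses), so real wall power would be higher.

```python
def farm_totals(cards: int, mh_per_card: float, watts_per_card: float) -> tuple:
    """Return (total hash rate in GH/s, total GPU power in watts)."""
    total_gh = cards * mh_per_card / 1000  # convert MH/s to GH/s
    total_watts = cards * watts_per_card
    return total_gh, total_watts


total_gh, total_watts = farm_totals(cards=10, mh_per_card=128, watts_per_card=340)
print(f"{total_gh:.2f} GH/s at {total_watts:.0f} W")  # 1.28 GH/s at 3400 W
```

Swap in the per-card numbers for whichever RTX 3090 Ti you're running to see how the totals scale.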

After spending the last few days overclocking and tweaking the two custom GeForce RTX 3090 Ti graphics cards with some ETH mining, I'm walking away impressed. NVIDIA's use of Micron's new GDDR6X memory clocked at 21Gbps makes the RTX 3090 Ti faster for ETH mining than any other card on the market, but overclocked on the MSI RTX 3090 Ti SUPRIM X up to 24Gbps... wowzers.

GDDR7 is coming in the new wave of next-gen GPUs, offering data rates of 32Gbps+ right out of the gate. We would be looking at 180MH/s and then some from a single GPU, but you won't be mining ETH on any GDDR7-based GPUs of the future, as ETH is slated to go proof-of-stake in Q2 2022 (end of June 2022).

For now, all I wanted to do was push that insane new GDDR6X to new heights -- and hitting 24Gbps is a beautiful new height -- in ETH mining. It's not game-stable at 24Gbps, but for ETH mining it can take that 24Gbps GDDR6X overclocking pounding all day. There were some hiccups, where reducing it to 23.8Gbps