Introduction

Intel, with their E series SSDs, has already led the push for enterprise SSDs with class-defining speed, endurance and reliability. Taking this a step further, Intel is coming into the PCIe flash market with their 910 PCIe series. Intel has been in the storage business for some time now by partially owning NAND production fabs in partnership with industry heavyweight Micron (IMFT) and also selling consumer and enterprise SSDs.

Intel has always stated their intention of pushing forward SSD technology as a means to get more performance out of users' computers in both the client and enterprise space. For Intel it hasn't always been about having top-performing SSDs and proprietary controllers; their goal has been to be the leader in bringing about the mass adoption of flash to the customer. Spurring the mass production of NAND by owning the foundries, then placing this flash into the hands of other companies to develop their own flash-based devices, has served Intel well.

A key here for Intel is the pricing approach. Current PCIe SSDs can be prohibitively expensive. With Intel coming into this market with a much lower price structure, the other players will be forced to compete. Intel has the heft to throw their weight around and actually force the overall price of these types of SSDs even lower, stimulating wider implementation. Intel also happens to own a stake in one of the largest NAND foundries in partnership with Micron. Selling more NAND on both the front and back end is a great deal for Intel.

There are plenty of existing players in this space that are already deeply entrenched and many more that are waiting in the wings to jump in as well. Currently the PCIe SSD market is white hot and with several of the larger OEMs jumping into the fray, the time is right for this type of storage to gain deeper penetration into the datacenter. The reasons that this approach will succeed are clear. Flash offers dramatic increases in performance over its HDD ancestors. These massive performance gains come in much smaller packages that consume less power and space and also generate less heat than HDDs.

One method of deploying SSDs into the server rack is by simply using a dedicated appliance that is connected to a server. This approach has its drawbacks, however, as every device placed between the SSD and the server brings a latency penalty along with it. Bringing the SSDs into the rack also isn't always the best option. The high-powered HBAs, RAID controllers and backplanes that are needed to benefit from the increased speed of the SSDs can involve large upfront capital expenditures when deployed into existing infrastructure.

The simplest method of slipping in more power and performance is to have a small form factor PCIe SSD. Physically the Intel 910 takes up little space, with a half-height and half-length footprint. This can be slipped into popular smaller servers easily and places the flash as close to the CPU as possible. Placing the SSD onto the PCIe interface creates a straight path to the CPU, keeping the latency low. Keeping the host system overhead light is another requirement for success. With the integrated OEM LSI SAS drivers, which are preinstalled in every major operating system, it is as simple as plug and play to get the 910 up and running.

Ease of use is another important requirement that is addressed with the installation. Many PCIe flash solutions involve intense system management requirements which require users to jump through hoops to get up and running correctly. Those looking to rapidly multiply their performance with an existing solution require a quick and easy solution that involves as little downtime as possible.

Specifications, Pricing and Availability

One of the most important aspects of any enterprise-class device is the endurance and reliability of the components, as the device is only going to be as strong as its weakest link. This really illustrates the wisdom of using the LSISAS2008. This processor is stable and proven, with a long track record of reliability under demanding conditions. Its ability to provide low latency access, along with the LSI OEM drivers that are integrated into every operating system, makes this an excellent choice.

Intel and Hitachi have partnered in developing the EW29AA31AA1 SSD controller which is currently also utilized in Hitachi Ultrastar SSDs. These enterprise class controllers handle all of the heavy lifting that is required for the NAND management onboard the 910. The wear leveling, ECC, garbage collection and other processes are handled by these controllers, keeping the overhead on the host system low.

The unfortunate fact is that as NAND ages it requires more intensive management. Some PCIe SSDs use the power of the host systems' CPU to handle the NAND management. These solutions will require ever-increasing amounts of system resources to handle these background tasks, robbing the host system of precious resources.

HET MLC is Intel's High Endurance Technology MLC. This provides SLC-like endurance at MLC prices. Sporting an industry-leading AFR (Annual Failure Rate) for its flash products, Intel offers a warranty of ten full drive writes per day for five years with the 910. This is an endurance improvement of 30X over the standard Intel MLC. HET MLC offers an impressive 7 Petabytes of write endurance for the 400GB model and 14 Petabytes for the 800GB model when measured with a random full-span 8k write workload.
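The endurance figures follow from the warranty terms with simple arithmetic. The sketch below assumes decimal units (1 PB = 1,000,000 GB) and a 365-day year; the function name is illustrative and not part of any Intel tool.

```python
def rated_endurance_pb(capacity_gb: float, drive_writes_per_day: float, years: float) -> float:
    """Total data written over the warranty period, in decimal petabytes."""
    days = years * 365                      # assumption: no leap days
    total_gb = capacity_gb * drive_writes_per_day * days
    return total_gb / 1_000_000             # 1 PB = 1,000,000 GB in decimal units


# Ten full drive writes per day for five years, per the warranty terms above.
for capacity in (400, 800):
    print(f"{capacity}GB model: {rated_endurance_pb(capacity, 10, 5):.1f} PB")
```

This gives roughly 7.3 PB and 14.6 PB, in line with the quoted 7 and 14 Petabytes.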

The drive is rated for 180,000 random read and 75,000 random write IOPS, along with 2GB/s sequential read and 1GB/s sequential write for the 800GB model. The 910 specifications are measured when the SSD is under Steady State conditions and filled to 100% capacity. Intel does not market F.O.B. (Fresh Out of Box) specifications for the 910.

There is also the option to select a 'Performance Mode' for this device. This allows the user to select a higher average power draw for the SSD which results in a sequential write performance increase up to 1.5GB/s from the standard 1GB/s. This does increase the average power draw from 25 Watts up to 28 Watts and the peak output to 38 Watts.

The 800GB model has an MSRP of $3859 and the 400GB is listed for $1929. This is an impressive price point, coming in at $4.82 per GB, outstripping much of the competition easily.

Intel 910 SSD

The Intel 910 comes in a single slot form factor PCIe 2.0 x8 card that consists of three densely packed PCBs. The top two PCBs are close to each other and contain large banks of NAND. There is a larger gap between the bottom and the top PCBs to facilitate airflow to the processing components on the bottom PCB. The holes on the rear PCIe bracket allow the air to pass through the SSD and out the rear of the case.

The device does generate heat that requires some active cooling to dissipate. 200 LFM (Linear Feet per Minute) of airflow is actually not an excessively high standard and most servers already meet this requirement easily. Under the Maximum Performance mode the requirement rises to 300 LFM.

The bottom of the card contains a large chip in the center that holds the firmware for the LSISAS2008 controller. Eight individual Micron DDR2 SDRAM chips, which provide caching for each individual SSD controller, line the bottom of the card. Each chip has a counterpart on the other side of the PCB as well.

Once the card is pulled apart we can get a better look at the components. The top two PCBs contain the banks of NAND and the bottom PCB contains the LSISAS2008 processor and the four individual SSD controllers.

The 910 presents itself to the host operating system as four individual 200GB drives. The LSISAS2008 processor under the silver heatsink merely passes these controllers onto the system itself and does not actually perform any RAID calculations. The Intel 910 is not a bootable device, which keeps the driver stack lean, minimizing latency.

This graphic illustrates the architecture that is utilized on the 910 to present the controllers and NAND to the host system.

Intel 910 SSD Continued

The four large Aluminum Electrolytic power capacitors that are directly above the PCIe power connector are used to hold enough charge (330uF) for the SSD controllers to flush any data to the NAND in the event of a power loss. Many datacenters are now beginning to operate with higher heat ceilings. This lowers overall cooling costs, but makes high heat endurance a definite must. This type of capacitor is specifically designed for high heat environments, which is crucial for today's datacenter use.

Many of the current crop of PCIe SSDs do not have power capacitors integrated into the design which can effectively limit their use in mission-critical applications. The integration of power capacitors is a definite advantage for the Intel 910.

The bottom PCB mating connectors are composed of five rows of female connecting slots that attach to the male equivalents. These provide a bridge for communication between each of the PCBs. A clever design allows both of the uppermost PCBs to connect directly to the 'engines' of the unit that are contained on the lower PCB. These connectors must remain tight, as servers can be subjected to a high amount of vibration. There are strategically placed fasteners next to each mating connector and a clasp on the end of the card to secure the components together.

The middle PCB is packed with IMFT 25nm HET (High Endurance Technology) NAND modules on both sides. HET MLC NAND is binned at the Fab and specifically processed for the highest endurance. This also provides a much lower price point that is more palatable than SLC pricing for customers. HET MLC is certainly furthering its push into the datacenter, as SLC is still priced far too high for all but the most intense high write applications.

The Intel 910 will initially come in two capacities of 400GB and 800GB. The 400GB model will consist of only two PCBs, with the uppermost PCB likely being the one that is eliminated.

The top PCB also contains only large banks of NAND flash. These large daughterboards are essentially 'dumb' modules that pass the NAND onto the bottom PCB. The most interesting aspect of the bottom of this PCB is what is NOT there. There are six empty slots that are ready and waiting for NAND packages and the middle board also has six empty slots. These 12 slots are a sign of the impending higher capacity versions of the Intel 910 that will be hitting the market soon.

Test System and Methodology

The Intel 910 comes in both 400GB and 800GB capacities. The 800GB 910 presents itself to the host operating system as four individual 200GB volumes, while the 400GB version only supplies two of the 200GB volumes. We will be testing both and providing results for the performance of both models.

There is the possibility of using Windows RAID, or a third party program, to aggregate the performance of all of the drives into one large RAID 0 volume. This would be a risky deployment in most applications, but when paired with strong backup schemes in high read and write scenarios, RAID 0 can be a compelling solution. Parity can also be achieved with RAID 5, providing data redundancy at the sacrifice of capacity and write speed.
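The capacity trade-off between the two RAID levels can be sketched as follows. This is a minimal illustration using the standard RAID 0 and RAID 5 capacity rules applied to the four 200GB volumes of the 800GB card; the function name is hypothetical, and real software RAID will reserve a small amount of additional space for metadata.

```python
def raid_usable_gb(level: int, volumes: int, volume_gb: float) -> float:
    """Usable capacity for a simple RAID 0 or RAID 5 set of equal-size volumes."""
    if level == 0:
        # Striping only: every volume contributes its full capacity.
        return volumes * volume_gb
    if level == 5:
        if volumes < 3:
            raise ValueError("RAID 5 needs at least three volumes")
        # One volume's worth of space is consumed by distributed parity.
        return (volumes - 1) * volume_gb
    raise ValueError("only RAID 0 and RAID 5 are modelled in this sketch")


print(raid_usable_gb(0, 4, 200))  # all four 200GB volumes striped
print(raid_usable_gb(5, 4, 200))  # one volume's worth lost to parity
```

With four volumes, RAID 5 gives up a quarter of the raw capacity in exchange for surviving the loss of a single volume.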

The configuration that we have tested with provides the best latency results and does a good job of showing the base performance of the 910 itself. This is simply configuring it as four separate LUNs and accessing each individually. This provides low overall latency in conjunction with much lower maximum latencies than RAID 0.

There is an optional Maximum Performance mode that increases the sequential write speed from 1GB/s to 1.5GB/s. This increases the power draw from 25W average to 28W average, with a peak of 38W. We tested with this variable enabled to highlight the maximum performance of the Intel 910. Only Sequential Write speed is affected.

Testing Enterprise Solid State Storage (SSS)

When assessing enterprise flash products the parameters are vastly different than the type of testing conducted upon consumer SSDs and PCIe flash devices. With consumer devices the capacity of the SSD isn't always used at 100% fill and the drive is rarely put under a sufficient load to drive it down into its worst performance levels.

Flash is a premium tier in professional environments, costing orders of magnitude more money per GB than HDD or tape storage. Every penny that is spent on these high performance EFDs (Enterprise Flash Drives) must be utilized to its fullest potential. This entails using every bit of the storage space available to full capacity and keeping the device under a constant workload for the duration of its lifetime. Unfortunately this type of high-level usage lines up exactly with the worst case scenario for SSDs in terms of both performance and endurance.

All SSDs rely upon spare NAND (Overprovisioning) to complete the majority of their internal functions, keeping the SSD performance at the highest levels possible. Spare area also provides a higher endurance over the lifetime of the SSD as well. Since enterprise SSD devices are typically used to full capacity, there are usually extra levels of overprovisioning to enhance wear levelling, endurance and performance above that of mainstream devices.

Several fundamental aspects of SSD operation are actually hurdles to delivering enterprise class flash devices that yield predictable and sustained performance. Idle time is also used in many consumer SSDs to conduct housekeeping routines, but this is not a reality in an enterprise environment where the SSD will be kept under full load constantly. There are many different approaches that enterprise SSD manufacturers use to mitigate the fact that after prolonged usage the very nature of NAND flash leads to performance degradation.

After prolonged use, all flash-based devices will start to slow down and reach Steady State. Steady State is the 'final' level of performance that the SSD will come to when it is placed under continuous load for an extended period of time. This final level of performance has little variability and is far below the performance level that is attained when the SSD is brand new and Fresh Out of Box (F.O.B.).

Finding this level of performance isn't always easy and it is here that we defer to several vital methodologies from SNIA.

Storage Networking Industry Association (SNIA)

SNIA is an industry-accepted group that has defined several parameters crucial for assessing enterprise SSDs. In reality, FOB (Fresh Out of Box) test results are rather useless for determining the performance of these devices, so it is critical that we test under the correct parameters. We have adopted several of SNIA's central approaches into our testing program here at TweakTown, which will ensure that we observe the devices under the appropriate Steady State conditions.

Steady State is attained by applying a heavy-write workload over the full span of the SSD over an extended period of time. Steady State can also vary depending upon the type of workload that is placed upon the SSD, so using the correct type of loading is also important.

The three steps of the process are to apply Workload Independent Preconditioning (WIP), Workload Based Preconditioning (WBP) and then verification of Steady State Convergence.

During the WBP we log the performance data to ensure that Steady State has been attained - the slope is less than 10% min/max. Once we have confirmed Steady State Convergence is within the desirable test range on the graph above, we begin data logging.
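A convergence check of this kind can be sketched in a few lines. The interpretation used here is an assumption: a least-squares line is fitted through a window of logged results, and the window counts as Steady State when the line's total rise or fall across the window stays within 10% of the window average. The function name and the sample values are hypothetical and are not taken from our logs.

```python
def is_steady_state(window, max_slope_excursion=0.10):
    """Return True if the best-fit line across the window moves by no more
    than max_slope_excursion (as a fraction) of the window average."""
    n = len(window)
    if n < 2:
        raise ValueError("need at least two measurements")
    mean_x = (n - 1) / 2
    mean_y = sum(window) / n
    # Ordinary least-squares slope, with x = 0, 1, ..., n-1 as the round index.
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(window))
    slope = sxy / sxx
    # Total change of the fitted line from the first round to the last.
    excursion = abs(slope) * (n - 1)
    return excursion <= max_slope_excursion * mean_y


# Hypothetical IOPS logs: one still falling, one that has settled.
print(is_steady_state([200, 170, 140, 120, 100]))  # still degrading
print(is_steady_state([100, 101, 99, 100, 100]))   # settled
```

A window that is still trending downward fails the check, which is the signal to keep preconditioning before any data is logged.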

Base Product Specifications

The five major measurements of base performance of any Solid State Storage solution are latency, random read/write and sequential read/write speed. These are the most common measurements that are posted by manufacturers to advertise storage performance.

We begin with a measurement of the latency of the device. The industry standard for measurement of latency is 4K Random Access at a Queue Depth (QD) of 1.

Both of the 910's capacities are illustrated here, but the lines overlap so closely at the lower QDs that there is hardly any difference between the two. The 800GB model has a latency of 0.142 and scales up accordingly as we apply higher QD to the devices. The listing of QD4 is actually QD1 of each volume for the 800GB version and QD2 per volume with the 400GB model. At QD1 the 400GB comes in with 0.140.

4K random read speed measurements are an important metric when comparing drive performance, as the hardest type of file access for any storage solution is small-file random. We test with a five second ramp time to eliminate any burst results. Read speeds are largely unaffected by extra Overprovisioning, so we only include standard results.

The 4K random read speeds indicate solid scaling as we pass up through to the higher QDs. The 800GB tops out at QD256 at 227,247 IOPS, which is 26% above the rated 180,000 IOPS!

The 400GB version peaks at 113,489 IOPS at QD128, also 26% over the rated specification of 90,000. Intel has only advertised Steady State specifications for the 910, and obviously conservative ones at that.

This also highlights the nearly perfect scaling that we will see over the course of the benchmarks. The LSISAS2008 PCIe bridge scales exceptionally well with each of the four controllers, so the 400GB results are always nearly half that of the 800GB.

As we move into the random write results we begin to include our 20% Overprovisioning results, indicated as "OP" in our graphs. OP consists of leaving 20% of the drive unformatted. By increasing the amount of available spare area, the SSD can perform at higher write speeds. This represents an optional performance boost for users at the cost of some capacity.

The 800GB results with standard Steady State peak at 85,768 IOPS at QD32. This is 14% higher than the rated 75,000. The OP result reaches 100,342 IOPS at QD32. Reaching over 100,000 IOPS in Steady State 4K write is certainly nothing to sneeze at and one of the most impressive results in this entire evaluation.

The 400GB plateaus at QD32 with 42,971 IOPS, 13% higher than the rated 38,000. The OP results reach 52,421 IOPS at QD16.
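The margins over the rated specifications in the 4K random results can be recomputed directly from the measured and rated figures. The helper below is a small sketch with an illustrative name; the numbers are the ones quoted in this section.

```python
def percent_over_rated(measured: float, rated: float) -> float:
    """How far a measured result exceeds its rated specification, in percent."""
    return (measured - rated) / rated * 100


# (measured IOPS, rated IOPS) as quoted in the 4K random results.
results = {
    "800GB 4K random read": (227_247, 180_000),
    "400GB 4K random read": (113_489, 90_000),
    "800GB 4K random write": (85_768, 75_000),
    "400GB 4K random write": (42_971, 38_000),
}

for name, (measured, rated) in results.items():
    print(f"{name}: {percent_over_rated(measured, rated):.1f}% over rated")
```

The read margins are nearly identical for both capacities, as are the write margins, which is another way of seeing the near-linear scaling between the two models.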

The 128K sequential read speeds reflect the maximum sequential throughput of the SSD using a realistic file size that will actually be encountered in an enterprise scenario.

The 800GB scores at 1956MB/s, right below the advertised 2.0GB/s. We can observe similar results with the 400GB, coming in at 973MB/s, a tad below the rated 1GB/s.

The 128K Sequential Write speeds of the 800GB top out at 1480MB/s, right below the rated 1.5GB/s using the Maximum Performance mode. The default power mode hits 1GB/s with sequential write access. The 400GB reaches 745MB/s, right at the rated spec of 0.75GB/s.

8K Randoms & Server Emulations

8K random read and write speed is a metric that is not tested for consumer use, but for enterprise environments this is an important aspect of performance. With several different workloads relying heavily upon 8K performance we include this as a standard with each evaluation. Many of our Server Emulations below will also test 8K performance with various mixed read/write workloads.

For 100% Random Read the 800GB version scores 104,700 IOPS at QD128 and the 400GB version tops out at 52,166 IOPS at QD128.

The 8K Random write speed in Steady State with the 800GB model tops out at QD64 with 23,995 IOPS and gets a boost up to 26,000 with extra Overprovisioning. The 400GB model scores 12,275 at QD32 in Steady State and with extra Overprovisioning scores 13,607 at QD32.

This test emulates Database and On-Line Transaction Processing (OLTP) workloads. OLTP is in essence the processing of transactions such as credit cards and is used heavily in the financial sector. Enterprise SSD is uniquely well suited for the financial sector with low latency and high random workload performance. Databases are the bread and butter of many enterprise deployments. These are demanding workloads with 8K random of 66% read and 33% write distribution that can bring even the highest performing solutions down to earth.

The 800GB model reaches 344MB/s at QD64 with regular Steady State and 359MB/s with OP.

The 400GB model reaches 172MB/s in Steady State at QD64 and 178MB/s with OP.

This test emulates a typical Email Server with a 50% Read and 50% write distribution of 8K random files.

The 800GB model scores 290MB/s at QD64 with normal Steady State and 305MB/s with extra OP.

The 400GB model reaches 221MB/s at QD32 in Steady State and 155MB/s at QD32 with OP.

The File Server profile represents typical workloads that will be encountered in file servers. This profile tests across a wide variety of different file sizes simultaneously, with an 80% read and 20% write distribution.

The Webserver profile is a read-only test with a wide range of file sizes. This test illustrates the performance of the storage solution with a gamut of random reads.

The 800GB model scores 976MB/s at QD64 in Steady State and 995MB/s with OP.

The 400GB model scores a similar 970MB/s in Steady State and 993MB/s with OP.

Power Measurements and Thermal Monitoring

One of the most overlooked areas in many enterprise evaluations of storage solutions is the power consumption and the amount of heat the unit generates. Heat generation must be dealt with via a range of different types of active cooling methods. This constant need to dissipate heat away from the datacenter results in one of the highest ongoing expenses in these environments. Active cooling requires power and lots of it.

For every watt of power that is consumed in a datacenter there also has to be a redundancy for that power as well. This will provide the datacenter the ability to continue operating during power 'events'. This usually consists of large banks of batteries and generators that can be a very expensive proposition.

By limiting the amount of heat that is introduced into the datacenter, the power used for climate control, and the cost of redundancy for that power, are also lowered.

Power consumption, of both the device itself and the power needed to deal with any heat generation, sometimes costs more than the purchase of the unit itself over the lifespan of the device. Power and heat generation are significant measurements to take into consideration when making purchasing decisions.

The Intel 910 has a 200LFM (Linear Feet per Minute) requirement for the default power mode and 300LFM for the maximum performance mode. These requirements are easily met by the majority of cooling solutions already integrated into servers. Temperature monitoring can be handled with the Intel Data Center Tool, a Command Line Interface (CLI) for management of the SSD and monitoring of SMART and thermal data. We were also able to use AIDA 64 software to monitor the temperatures in real-time, highlighting the flexibility given through use of the LSI drivers.

The testing of the Intel 910 consists of both 400GB and 800GB models, but this is actually accomplished through the use of the 800GB SSD only. Simply using only two of the four available volumes allows us to simulate the performance of the 400GB model. The other two controllers and banks of NAND still generate some heat, even if they are only idling. Unfortunately this does not allow us to test the thermal envelope of the 400GB model.

Our thermal observations were conducted with both the default power setting and the optional maximum power settings. This increased power draw did not equate to large differences in heat generation (within 1C), so we are only including the results with the maximum performance mode. These results were generated with 200LFM of airflow (+/- 10%). The workload testing for heat generation was conducted at a QD of 128. The results are displayed as T-Delta to Ambient. This allows for a higher level of accuracy as it accounts for any small variations in room temperature.

There is little variation in the amount of heat generated during the different workloads, with the largest difference being three degrees Celsius while under load.

Overall keeping a low power threshold is the holy grail of high performance enterprise storage solutions. IOPS/Watts is a calculation that is used to determine the amount of IOPS that are given per Watt of power consumed. This is important, taking into consideration that typically for every watt of power consumed there is also an accompanying increase in heat that the device generates. This creates a vicious cycle of overall power consumption as the additional heat generated must also be cooled.
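The calculation itself is a single division. In the sketch below the IOPS value is our measured 4K random read peak for the 800GB model, while the 28W figure is the rated average draw in Maximum Performance mode, used here only as a stand-in for a measured power reading; the function name is illustrative.

```python
def iops_per_watt(iops: float, watts: float) -> float:
    """IOPS delivered per watt of power consumed."""
    if watts <= 0:
        raise ValueError("power draw must be positive")
    return iops / watts


# Measured 4K random read peak (800GB) against the rated 28W average draw.
# The 28W value is an assumption standing in for a measured figure.
print(round(iops_per_watt(227_247, 28)))
```

Substituting a measured wattage for each workload gives the per-workload figures that this metric is meant to compare.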

We compare both the 4K and 8K IOPS/Watts levels for random read and write with standard Steady State and Overprovisioning. The 910 performs well in these regards, with near linear scaling between the 400GB and 800GB models. The 8K random really benefits the most from the Overprovisioning. The 4K random does not realize as much of a gain as the 400GB, but a gain of 10% with Overprovisioning is good.

The 910 overall performs well in this area with solid marks across the board.

Final Thoughts

The Intel 910 overall is a compelling solution that is certainly going to set a class-defining price point. TCO (Total Cost of Ownership) is the big story here. Price has always been one of the major inhibitors for companies looking to upgrade to enterprise-class flash products and Intel is helping to break down those boundaries.

Intel is an industry 'heavy' that has the ability to come in and change the landscape and with the 910 launch, the game is definitely going to change for the competition. Intel has come in with such a low price point that others will be forced to attempt to match. Intel enjoys an advantage in pricing, which isn't always going to be easy for competitors who do not own foundries. The low pricing of this SSD easily outstrips other PCIe solutions and could even become a threat to some 2.5" SSDs. The 800GB model has an MSRP of $3859 and the 400GB is listed for $1929.

Some of the industry leading SLC SSDs can cost around $7000 for half of the capacity of the Intel 910. This leads to lopsided comparisons in the price-per-GB area, with some SLC SSDs commanding $17.50 per GB in contrast to $4.82 per GB for the 910. When comparing Dollar per IOPS measurements, the 910 becomes an even clearer winner, with two cents per read IOP compared to as high as eight cents per IOP for some SLC SSDs.
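These value comparisons reduce to two ratios. The sketch below uses the MSRPs and our measured 4K random read result quoted in this review; the SLC figure is the approximate $7000 for 400GB mentioned above, and the function names are illustrative.

```python
def price_per_gb(price_usd: float, capacity_gb: float) -> float:
    """Purchase price divided by capacity, in dollars per GB."""
    return price_usd / capacity_gb


def cents_per_iops(price_usd: float, iops: float) -> float:
    """Purchase price divided by delivered IOPS, in cents per IOPS."""
    return price_usd / iops * 100


print(f"910 800GB: ${price_per_gb(3859, 800):.2f} per GB")
print(f"910 400GB: ${price_per_gb(1929, 400):.2f} per GB")
print(f"SLC 400GB: ${price_per_gb(7000, 400):.2f} per GB")
print(f"910 800GB: {cents_per_iops(3859, 227_247):.1f} cents per read IOPS")
```

Both 910 capacities land at the same price per GB, so the choice between them comes down to capacity and performance rather than value.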

Value-oriented and easier to use products can help to bring forth wider adoption. Ease of use is important to minimize downtime. The LSI drivers provide a flexible platform that has deep compatibility roots, allowing for plug-and-play capability. The amount of time that it takes to configure and manage the device is also important. Once the device is slipped into the waiting PCIe slot, all that is left is volume management.

The lightweight drivers allow for high performance with minimal host overhead and also excellent overall latency figures. By having multiple volumes presented to the host system, there are a number of different configurations that can be utilized and one particularly effective usage would be for tiering models. This type of implementation can reap huge benefits for users with existing infrastructure. Tiering and caching software would allow the 910 to boost the performance of large arrays of HDDs simply by writing the hot files to the 910 as a caching device. This allows the CPU of the system to be used to its fullest, maximizing performance for the whole system.

At the end of the day, one has to remember that Intel is primarily a CPU manufacturer. With multicore processors the norm, the key for Intel to sell more units is to get more use out of the high powered CPUs that they sell. Flash is a means to do this for them, as it can break the storage bottlenecks that have hamstrung the power and speed of the CPUs for several generations now. What is the point of having an ultra-high performance CPU if it is strangled by storage bandwidth? Virtualization, tiering and caching models, cloud computing servers and the financial sector are areas that the 910 is designed for, but there are other uses that the price point allows for. For professional video and audio editing, this would be a dream drive.

The high endurance of the device is provided from using high quality 25nm HET MLC flash direct from Intel's own foundries and they can also set aside the highest binned NAND for their own SSD products. Just a few short years ago 14 Petabytes of endurance from MLC flash was simply unheard of and is still impressive today. Tying this endurance in with high-grade power capacitors that help protect the data results in a reliable SSD that is backed by a five year warranty. The five year warranty covers the SSD for 10 full drive writes per day.

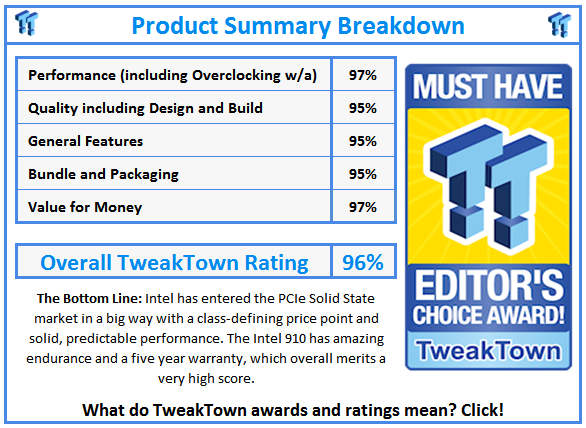

Finally we get down to the performance aspect and here Intel beats all of their own marketed specifications easily. We attained 227,247 4K random read IOPS, well above the rated 180,000 IOPS. The 4K random write IOPS reached 85,768, also higher than the rated 75,000. The capability of 100,000 4K random write IOPS in Steady State with 20% Overprovisioning tells the story for the possibility of configuring the device for high random workloads as well.

Overall it is rare that we see a device that wins on all counts, but the Intel 910 is definitely in a class of its own.