The Bottom Line

Pros

- + Absolutely amazing 4K 120FPS gaming performance.

- + DLSS 3 is bonkers... 2-3x performance in compatible games.

- + 3.0GHz+ GPU clocks on Ada!

- + Runs cool, doesn't use more than 450W.

- + The best GPU money can buy right now.

Cons

- - It's expensive... but not 2021-GPU-pricing-is-nuts expensive.

- - Navi 31 isn't far away.

Should you buy it?

Introduction

The day is finally here... NVIDIA's new GeForce RTX 4090 graphics card has been unleashed, with the first embargo NDA allowing GPU reviewers across the world to reveal their GeForce RTX 4090 Founders Edition review... and man, what a graphics card it is.

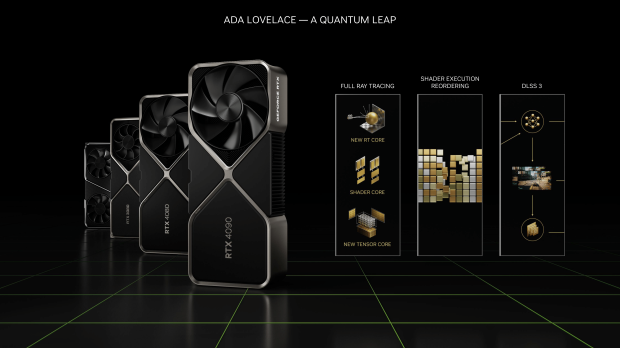

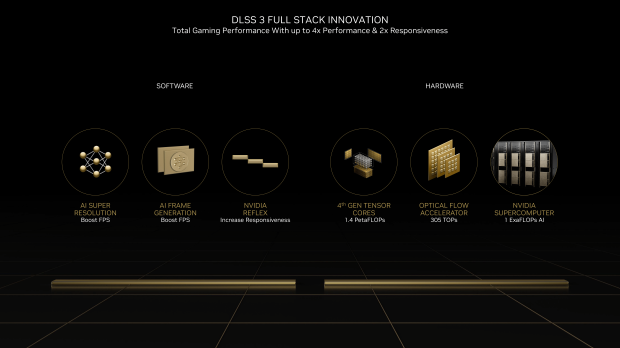

NVIDIA's new GeForce RTX 4090 Founders Edition has so many new things going on inside of it, I don't think anyone is going to be able to cover everything in a single review. We have a new GPU architecture, new Streaming Multiprocessors, 4th Generation Tensor Cores, Optical Flow, 3rd Generation RT Cores, Shader Execution Reordering (SER), DLSS 3, NVIDIA Studio, and AV1 encoders... and that my friends, is just for starters.

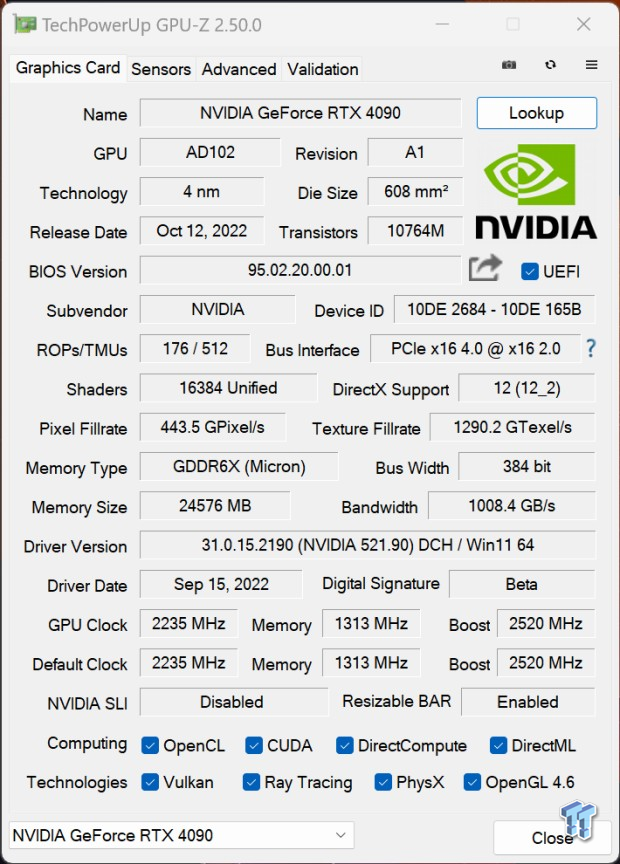

Inside, the new Ada Lovelace GPU architecture arrives in the form of the AD102-300 GPU, which packs

NVIDIA is all about ray tracing -- for obvious reasons -- with its new GeForce RTX 4090 graphics card, and all of the upcoming RTX 40 series GPUs, but not all gamers use ray tracing. Over the coming months, I'll be tweaking our benchmark runs to include more games with ray tracing, and especially DLSS 3, given that there's so much untapped potential inside of Ada.

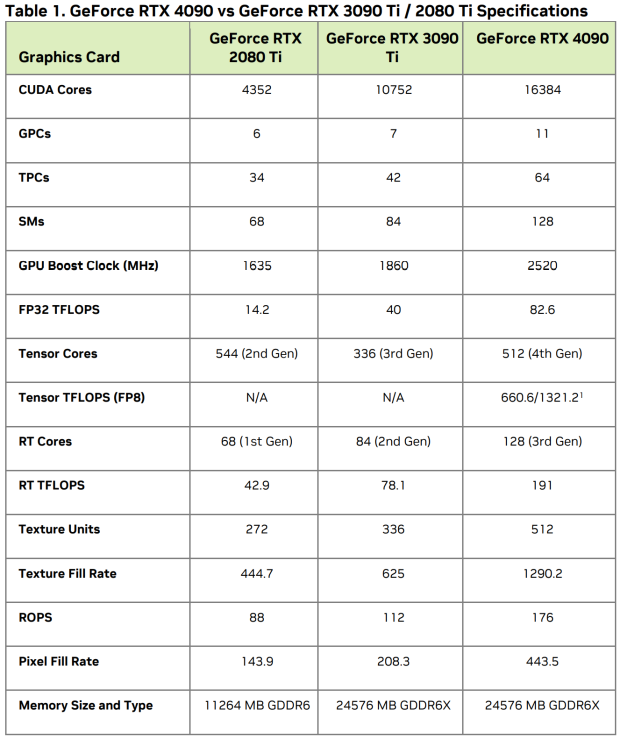

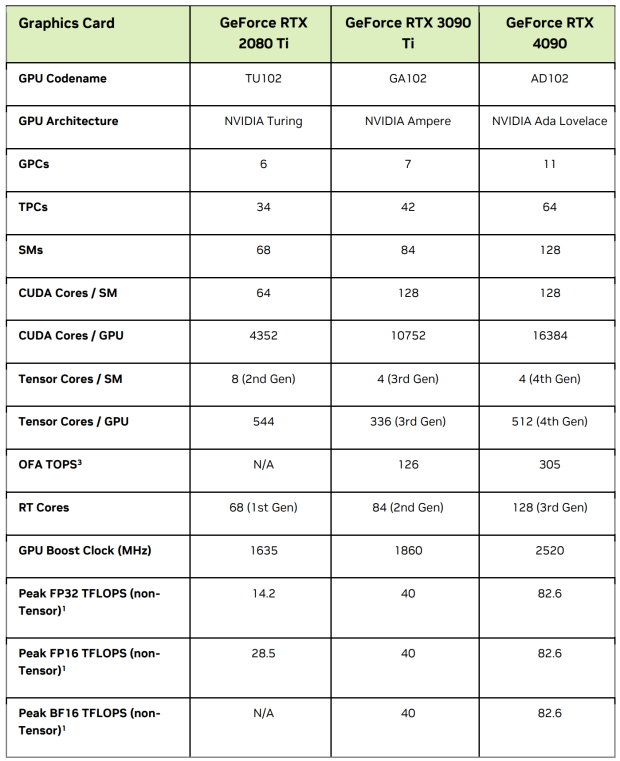

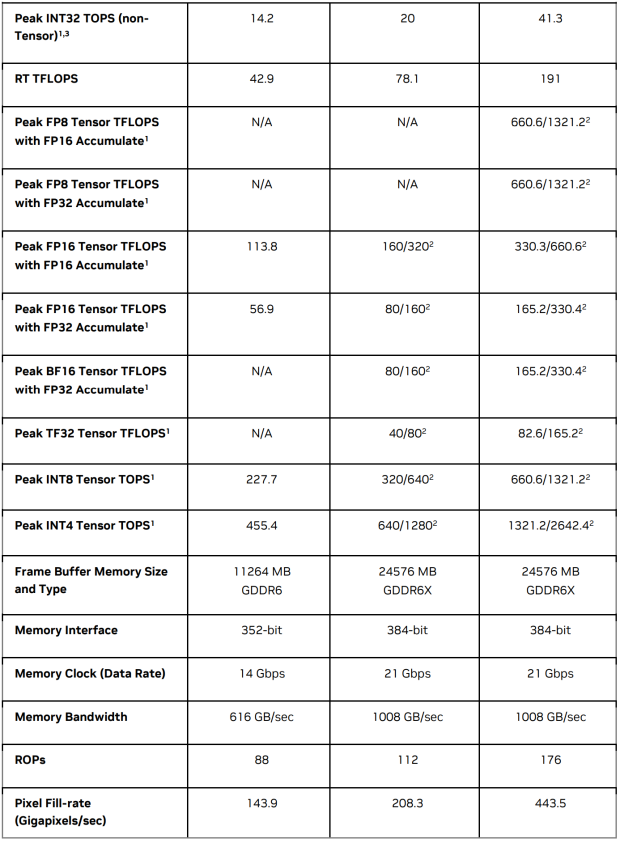

There is a huge injection of virtually everything inside of the new GeForce RTX 4090, with NVIDIA cramming in an incredible 16384 CUDA cores (up from the 10752 CUDA cores inside of the RTX 3090 Ti, and up from the puny 4352 CUDA cores inside of the RTX 2080 Ti). We have the same 24GB of GDDR6X memory on a 384-bit memory bus providing over 1TB/sec of memory bandwidth, and a LOT of overclocking potential on that AD102 GPU.

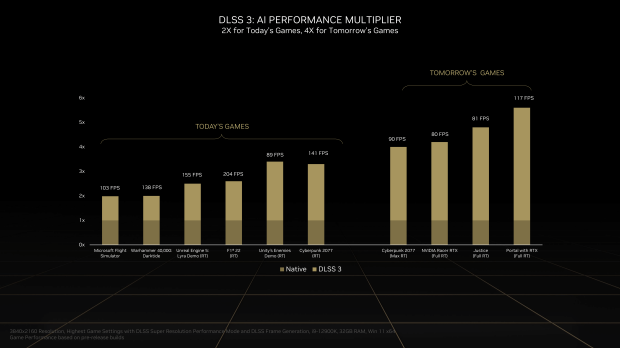

NVIDIA has claims of "4x RTX 3090 Ti" performance with its new RTX 4090, but you'll see none of that shiz here in my review. Yes, there are instances where performance is truly mind-f***ing-blowing, but 4x performance requires a particular game, particular update, ray tracing enabled, DLSS 3 enabled... most gamers won't be doing that, at least not yet.

- Read more: MSI GeForce RTX 3090 Ti SUPRIM X Review

- Read more: NVIDIA GeForce RTX 3090 Founders Edition Review

- Read more: NVIDIA GeForce RTX 3080 Founders Edition Review

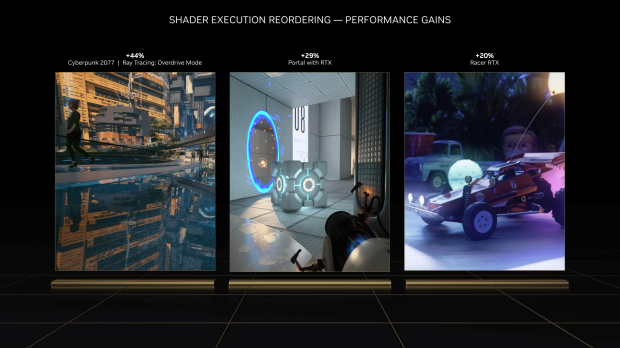

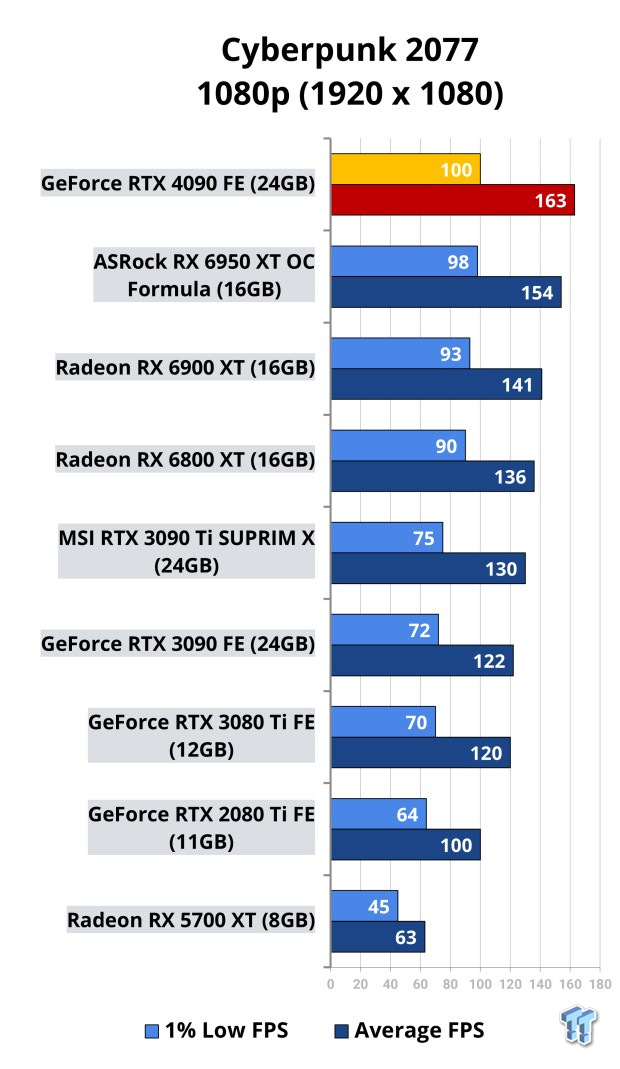

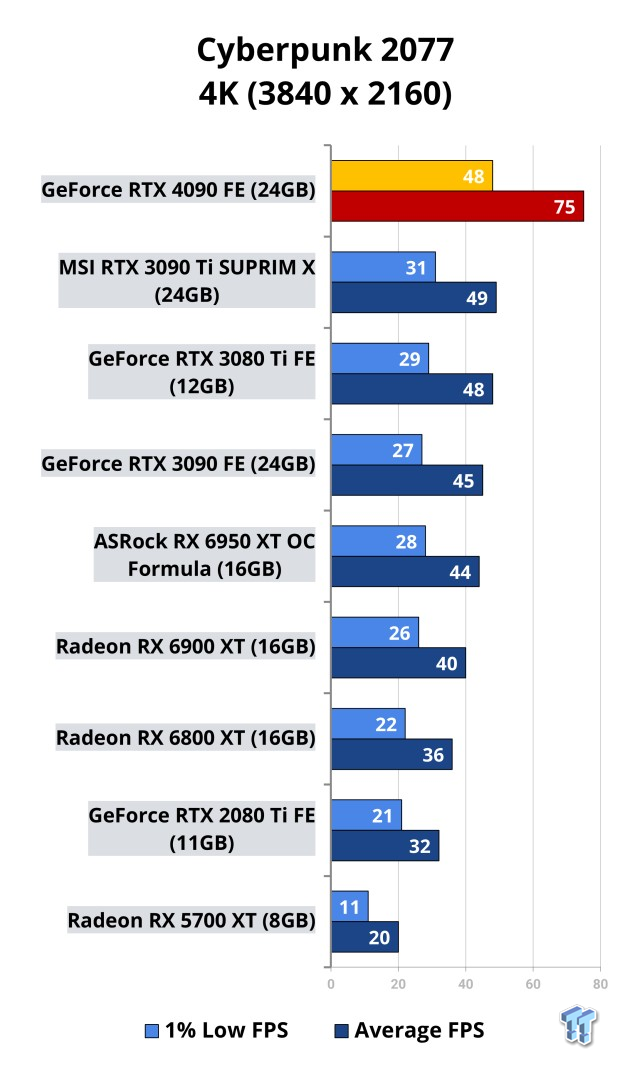

Cyberpunk 2077 and Flight Simulator were two of the games NVIDIA suggested and pushed during its marketing for Ada Lovelace and the new GeForce RTX 4090, but these two games are part of my benchmark runs anyway so off I went. Cyberpunk 2077 and Flight Simulator can both enjoy 2-3x the performance at 4K, but when you're gaming at 8K... man, those performance increases are seriously nice to see.

HDMI 2.1 debuted on the RTX 30 series, handling 4K 120Hz and 8K 60Hz through a single cable, but the next-gen DisplayPort 2.0 standard (which handles a huge 4K 240Hz and 8K 120Hz through a single DP2.0 cable) isn't present here on the new Ada Lovelace GPU architecture and GeForce RTX 4090 graphics card (or any of the RTX 40 series graphics cards). Very, very disappointing, NVIDIA.
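To put those display modes in perspective, here is a rough sketch of the uncompressed pixel data rate each one needs. It assumes 8-bit RGB (24 bits per pixel) and ignores blanking intervals, link-encoding overhead, and Display Stream Compression, none of which are covered in this review, so treat the numbers as ballpark only. For reference, the raw maximum link rates are 48Gbps for HDMI 2.1 and 80Gbps for DisplayPort 2.0.

```python
# Rough uncompressed video data rate for the display modes mentioned above.
# Assumptions (not from the review): 24 bits per pixel, no blanking,
# no link-encoding overhead, no Display Stream Compression (DSC).

def pixel_data_rate_gbps(width, height, refresh_hz, bits_per_pixel=24):
    """Return the raw pixel data rate in gigabits per second."""
    return width * height * refresh_hz * bits_per_pixel / 1e9

MODES = {
    "4K 120Hz": (3840, 2160, 120),
    "8K 60Hz": (7680, 4320, 60),
    "4K 240Hz": (3840, 2160, 240),
    "8K 120Hz": (7680, 4320, 120),
}

for name, (w, h, hz) in MODES.items():
    print(f"{name}: {pixel_data_rate_gbps(w, h, hz):.1f} Gbps")
```

The two DP2.0-class modes need roughly double the data rate of the HDMI 2.1-class modes, which is why the missing connector matters for next-gen high-refresh panels.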

NVIDIA's new GeForce RTX 4090 Founders Edition will start from $1599, and is available October 12 worldwide where you'd usually find Founders Edition graphics cards.

Who Is Ada Lovelace

Augusta Ada King, Countess of Lovelace, aka Ada Lovelace, was born on 10 December 1815 and passed away on 27 November 1852. She was an English mathematician and writer, mostly known for her work on Charles Babbage's proposed mechanical general-purpose computer, the Analytical Engine.

Ada was the first to recognize that the machine had applications and uses beyond its pure calculation, publishing the first algorithm intended to be used by the machine... and because of this, Ada Lovelace is regarded as the first computer programmer.

In 1835, Ada married William King, and in 1838 King was made Earl of Lovelace... which is how Ada became the Countess of Lovelace.

Between 1842 and 1843, Ada translated an article by Luigi Menabrea, an Italian military engineer, about the Analytical Engine, helping it along with an elaborate set of notes which she simply called "Notes". These notes might not seem important when you're reading about them now, but they matter a great deal in the history of computers: they contain what most consider to be the first computer program -- an algorithm that was designed to be carried out by a machine.

Ada also had a vision of the capabilities of computers in the future, beyond simple calculations and number-crunching. Babbage used to only focus on the capabilities of computers at the time, meanwhile, Ada had a "poetical science" mindset that made her question the Analytical Engine (her own notes even prove this) examining how individuals and society relate to technology as a collaborative tool.

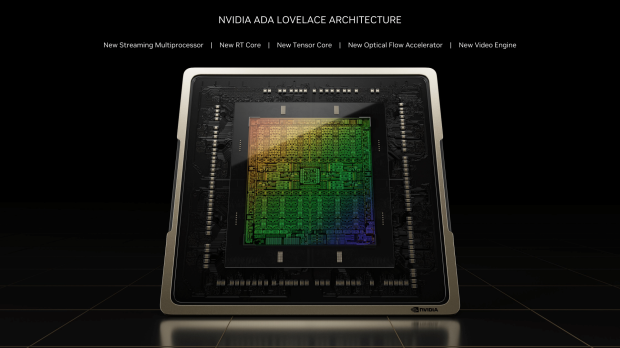

Ada Lovelace GPU architecture

NVIDIA's new Ada Lovelace GPU architecture has new Streaming Multiprocessors (SMs), new RT Cores, new Tensor Cores, a totally new Optical Flow Accelerator, and a new Video Engine... and we're just getting started.

What a beauty.

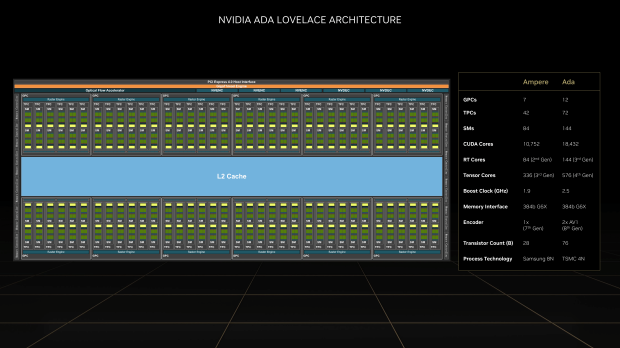

NVIDIA's new Ada Lovelace GPU architecture has (up to) 12 GPCs, 72 x TPCs, 144 x SMs, and up to a huge 18432 CUDA cores, 144 x RT Cores (3rd Gen), and 576 Tensor Cores (4th Gen). NVIDIA has up to a 2.5GHz GPU clock on the new Ada GPU inside of the RTX 4090 (it pushes up to and over 3.0GHz, btw) with 24GB of GDDR6X memory on a 384-bit memory bus, as well as 2 x AV1 encoders, and a huge 76 billion transistors made on TSMC's new 4N process node.
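Those full-chip numbers break down neatly per block. The sketch below is just arithmetic on the figures quoted above, and it assumes the units are split evenly across every GPC, TPC, and SM (an assumption for illustration, not something NVIDIA's block diagram is being quoted on here). It also works out how much of the full chip the RTX 4090's 16384 CUDA cores represent.

```python
# Full Ada (AD102) configuration as quoted in the review.
GPCS = 12
TPCS = 72
SMS = 144
CUDA_CORES = 18432
RT_CORES = 144
TENSOR_CORES = 576

# Assumption: units are divided evenly across the hierarchy.
tpcs_per_gpc = TPCS // GPCS            # TPCs in each GPC
sms_per_tpc = SMS // TPCS              # SMs in each TPC
cuda_per_sm = CUDA_CORES // SMS        # CUDA cores in each SM
tensor_per_sm = TENSOR_CORES // SMS    # Tensor Cores in each SM
rt_per_sm = RT_CORES // SMS            # RT Cores in each SM

# The RTX 4090 has 16384 CUDA cores enabled (figure from the review).
rtx4090_cuda = 16384
rtx4090_sms = rtx4090_cuda // cuda_per_sm
enabled_fraction = rtx4090_sms / SMS

print(f"{tpcs_per_gpc} TPCs per GPC, {sms_per_tpc} SMs per TPC")
print(f"{cuda_per_sm} CUDA, {tensor_per_sm} Tensor, {rt_per_sm} RT core(s) per SM")
print(f"RTX 4090: {rtx4090_sms} of {SMS} SMs enabled ({enabled_fraction:.1%})")
```

In other words, the RTX 4090 leaves a chunk of the full-fat Ada GPU switched off, which is where the "over 18,000 CUDA cores" headroom comes from.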

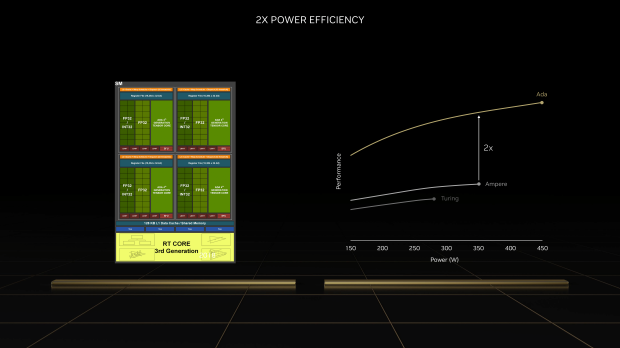

Ada is 2x more power efficient than the Turing and Ampere GPUs with some commanding performance for 450W (which is the TDP of the current king: RTX 3090 Ti).

There is a lot of new stuff going on under the hood of Ada and the next-gen GeForce RTX 40 series GPUs.

Like, a lot.

But man, when you get to DLSS Super Resolution and DLSS 3... things get real, real exciting for Ada.

DLSS 3, Frame Generation, Ray Tracing

DLSS 3

DLSS 3 is some true GPU black magik, with NVIDIA putting the hard yards into its AI-based rendering technology, offering some next-gen gaming performance on top of the next-gen GPU performance you're already getting with Ada Lovelace and the GeForce RTX 4090.

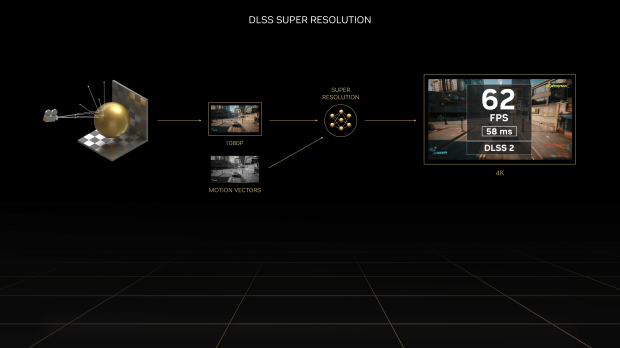

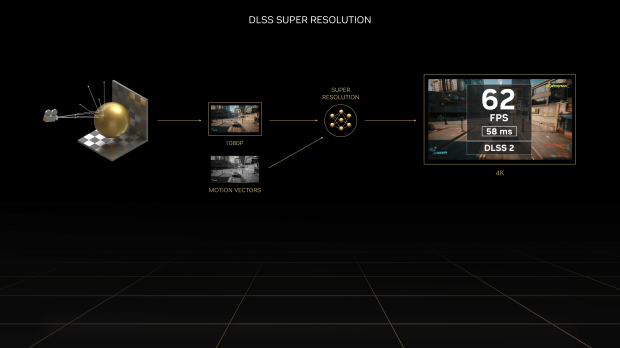

DLSS (Deep Learning Super Sampling) uses AI super resolution and Tensor Cores on GeForce RTX series GPUs to super-boost frame rates, as well as improve the image quality of your games beyond the native resolution (especially as you use games with higher versions of DLSS, like DLSS 2.x and DLSS 3).

The original DLSS debuted back in early 2019 and while it wasn't an overnight success, it is now an amazing piece of technology that resides inside GeForce RTX series GPUs. DLSS 1.0 started off with just a few games: Battlefield V and Metro Exodus. After that, Remedy Entertainment shipped out Control, which featured ray tracing and an improved version of DLSS, but it didn't use Tensor Cores.

DLSS 2.0 was a huge step forward, with improvements made to image quality and performance, with a generalized neural network that adapts to all games and scenes without specific training required. DLSS 2 is pumping along inside of over 200 games and apps right now, with support from major game engine technologies like Epic Games' Unreal Engine and Unity.

Control got an update that provided DLSS 2.0 support, using Tensor Cores this time, and NVIDIA said the AI no longer needed to be trained on each game specifically. From there... DLSS 2.0 evolved into DLSS 2.1, 2.2, 2.3, and now 2.4 which recently shipped in Marvel's Spider-Man Remastered.

NVIDIA's AI supercomputer continuously has DLSS 2 training on it, with 4 major updates released that help image quality and performance... but then there's DLSS 3.

DLSS 3

DLSS 3 is a major leap forward, with NVIDIA explaining it as a "revolutionary breakthrough in AI-powered graphics that massively boosts performance while maintaining great image quality and responsiveness" and they are NOT wrong. AMD has its work cut out for it with FSR in the future, and Intel... well, we'll leave those guys out of the race with Arc for now because they can only dream of having a GPU as powerful as AD102 and future-gen DLSS 3 tech.

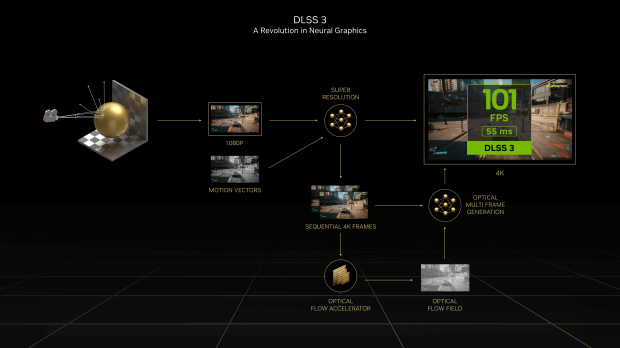

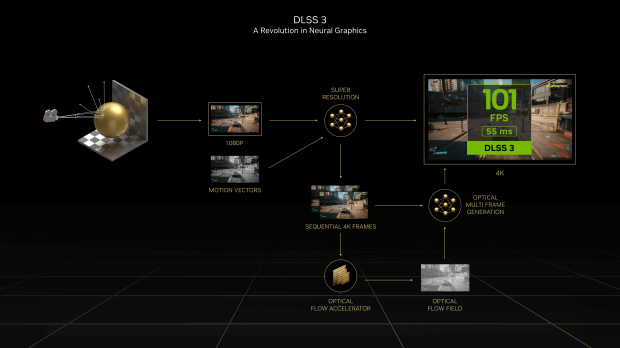

NVIDIA is building on top of the DLSS Super Resolution blocks with DLSS 3, which adds Optical Multi Frame Generation that generates entirely new frames, as well as integrating NVIDIA Reflex low latency technology for the absolute peak in responsiveness.

DLSS 3 is super-powered by NVIDIA's new 4th Gen Tensor Cores and Optical Flow Accelerator inside of the next-gen Ada Lovelace GPU architecture, which is the star of the show here with the new GeForce RTX 40 series. You can't run DLSS 3 on older-gen GeForce RTX series GPUs, but you can run older DLSS versions in games on your new RTX 40 series GPU.

DLSS Frame Generation

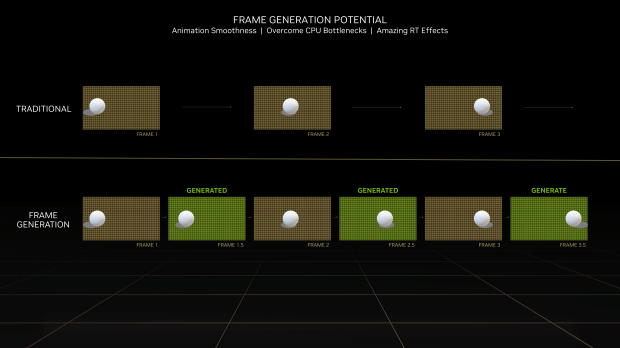

NVIDIA's new Ada Lovelace GPU architecture has something new with the new DLSS Frame Generation convolutional autoencoder taking in 4 inputs -- current, and prior game frames, an optical flow field generated by Ada's Optical Flow Accelerator, and game engine data including motion vectors and depth.

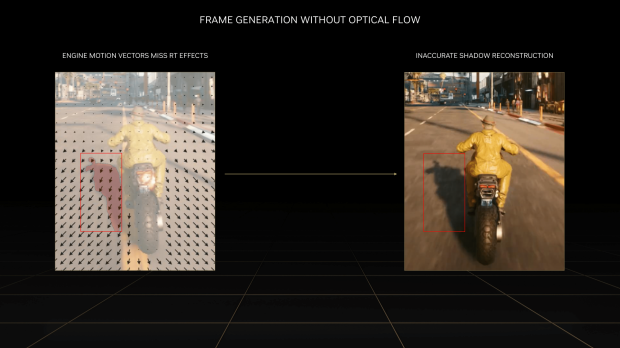

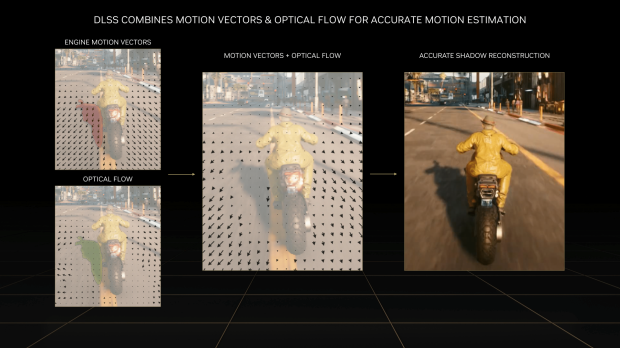

Ada's Optical Flow Accelerator analyzes two sequential in-game frames and calculates an optical flow field. The optical flow field captures the direction and speed at which pixels are moving from frame 1 to frame 2. The Optical Flow Accelerator is able to capture pixel-level information such as particles, reflections, shadows, and lighting, which are not included in game engine motion vector calculations. In the motorcycle example below, the motion flow of the motorcyclist accurately represents that the shadow stays in roughly the same place on the screen with respect to their bike.

Whereas the Optical Flow Accelerator accurately tracks pixel level effects such as reflections, DLSS 3 also uses game engine motion vectors to precisely track the movement of geometry in the scene. In the example below, game motion vectors accurately track the movement of the road moving past the motorcyclist, but not their shadow. Generating frames using engine motion vectors alone would result in visual anomalies like stuttering on the shadow.

For each pixel, the DLSS Frame Generation AI network decides how to use information from the game motion vectors, the optical flow field, and the sequential game frames to create intermediate frames. By using both engine motion vectors and optical flow to track motion, the DLSS Frame Generation network is able to accurately reconstruct both geometry and effects, as seen in the picture below:

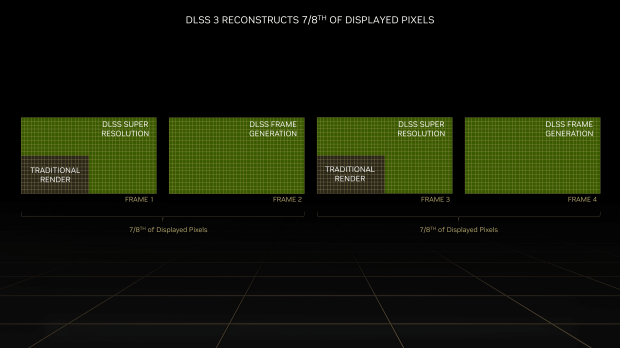
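To make the "move pixels along a flow field" idea concrete, here is a deliberately tiny 1D toy. It is not NVIDIA's DLSS Frame Generation network (which is an AI model combining optical flow, engine motion vectors, and both frames); it is a plain forward warp, and the function name and data layout are made up for illustration. It only shows how a pixel with a known displacement can be placed halfway along its path to build an in-between frame.

```python
# Toy 1D illustration of flow-based frame interpolation.
# NOT NVIDIA's DLSS Frame Generation network: this is a simple forward warp
# with hypothetical names, used only to show the basic idea.

def interpolate_midframe(prev_frame, flow):
    """Build an in-between frame by moving each lit pixel half of its flow vector.

    prev_frame: list of pixel values (0 means empty background).
    flow: per-pixel displacement (in pixels) from prev_frame to the next frame.
    """
    width = len(prev_frame)
    mid = [0] * width
    for x, value in enumerate(prev_frame):
        if value == 0:
            continue  # nothing to move for background pixels
        target = x + flow[x] // 2  # halfway along the motion vector
        if 0 <= target < width:
            mid[target] = value
    return mid

prev_frame = [0, 9, 0, 0, 0, 0]  # a bright pixel at index 1
flow = [0, 4, 0, 0, 0, 0]        # it moves 4 pixels to the right by the next frame
print(interpolate_midframe(prev_frame, flow))  # [0, 0, 0, 9, 0, 0]
```

A real frame generator has to cope with things this toy ignores, such as occlusions, pixels that collide, and effects like shadows that move differently from the geometry, which is exactly why DLSS 3 mixes the optical flow field with engine motion vectors.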

With DLSS 3 enabled, AI is reconstructing three-fourths of the first frame with DLSS Super Resolution, and reconstructing the entire second frame using DLSS Frame Generation. In total, DLSS 3 reconstructs seven-eighths of the total displayed pixels, increasing performance significantly!
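The seven-eighths figure falls straight out of the two fractions above. A minimal sketch of the arithmetic, assuming one Super Resolution frame (one quarter of its pixels rendered natively) is followed by one fully generated frame:

```python
from fractions import Fraction

# Share of pixels the GPU renders traditionally in each displayed frame.
rendered_first = Fraction(1, 4)   # Super Resolution reconstructs the other 3/4
rendered_second = Fraction(0)     # Frame Generation creates the whole frame
total_frames = 2

rendered = (rendered_first + rendered_second) / total_frames
reconstructed = 1 - rendered

print(rendered, reconstructed)  # 1/8 7/8
```

So only one in every eight displayed pixels is rendered the traditional way, and the remaining seven are produced by AI.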

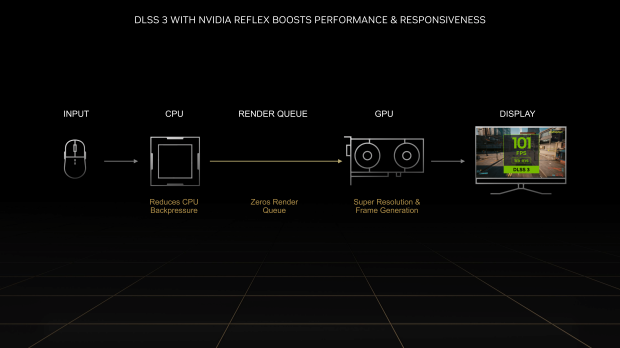

DLSS 3 also incorporates NVIDIA Reflex, which synchronizes the GPU and CPU, ensuring optimum responsiveness and low system latency. Lower system latency makes game controls more responsive and ensures on-screen actions occur almost instantaneously once you click your mouse or other control input. When compared to native, DLSS 3 can reduce latency by up to 2X.

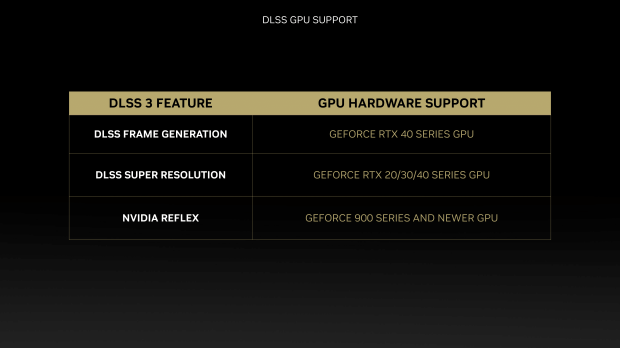

- Optical Multi Frame Generation - RTX 40 series GPU only

- Super Resolution - RTX 40, RTX 30, RTX 20 series GPUs

- NVIDIA Reflex - GTX 900 series GPUs and up supported

DLSS 3 is perfect for ray tracing games, adding performance when image quality settings (turning RAY TRACING on) reduce FPS... enabling DLSS 3 doesn't just bring you back to parity (the same perf with RT *off*), it injects a serious amount of FPS into the game, negating the cost of any visual bells and whistles you've enabled (like ray tracing).

So, you can enable ray tracing in something like Cyberpunk 2077... reduce your FPS by a huge chunk, but enable DLSS 3 on your new GeForce RTX 40 series graphics card and you'll get MORE PERFORMANCE than you would at native rendering resolution + NO ray tracing enabled. It's like magic, but for game rendering.

Now, what about promised performance with DLSS 3? Yeah, that's where the money shot is in terms of benchmark charts, check this bad boy out:

Speaking of ray tracing... let's dive into Ada Lovelace's advances in ray tracing, with NVIDIA cementing its commanding role in the world of ray tracing in games.

Ray Tracing

It wouldn't be a new NVIDIA GeForce RTX series GPU without next-gen ray tracing upgrades, where Ada Lovelace really delivers on huge upgrades to ray tracing.

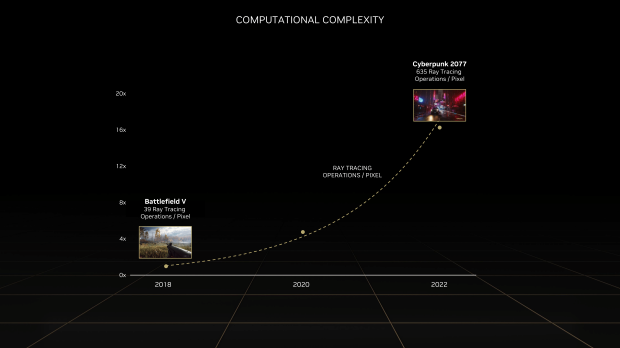

NVIDIA kicked off the Ray Tracing game back in 2018 and early 2019 with Battlefield V featuring 39 ray tracing operations per pixel, up to an insane 635 ray tracing operations per pixel for Cyberpunk 2077.

NVIDIA's next-gen GeForce RTX 40 series GPUs all support DLSS 3, so if you're using a game that supports ray tracing and it supports DLSS 3... then you're going to get a better-looking game with far more performance. It's pretty much black magik, something I've often referred to DLSS as.

It's simply magical, and I'm sure NVIDIA will team with Disney in the future for some crazy good-looking (on GeForce GPUs of course) animated movie. In the way Frozen changed the world with "Let It Go", NVIDIA changes it with DLSS.

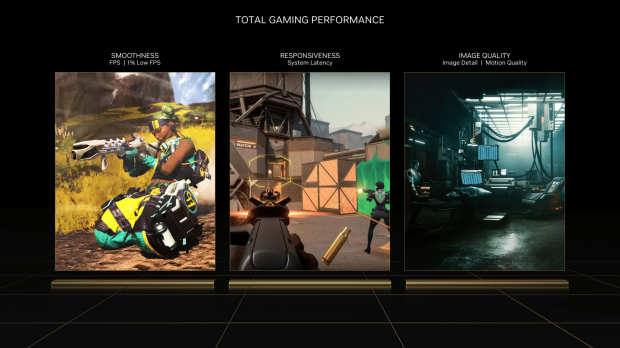

If you want the best smoothness (highest FPS with the best 1% low FPS) mixed with the best responsiveness (overall system latency) and the best image quality (detail and motion quality) then you're going to get that with Ada + RT + DLSS 3 technologies combined.

CD PROJEKT RED will soon push out an update for Cyberpunk 2077 that will enable a new "Overdrive Mode" for ray tracing (up from the "Psycho" mode the game already has) and will usher in 635 ray tracing operations per pixel, and the second that update drops I'll be pumping the GeForce RTX 4090 graphics card through it.

Detailed Specifications

NVIDIA's new GeForce RTX 4090 graphics card is an absolute behemoth, with the AD102-300 GPU inside featuring some powerhouse specs that crush the still-powerful GeForce RTX 3090 and RTX 3090 Ti graphics cards. NVIDIA is still using the same 24GB of GDDR6X memory, at the same 21Gbps data rate, with the same 1TB/sec+ memory bandwidth as its RTX 3090 Ti.
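That 1TB/sec+ figure is easy to sanity check from the 384-bit memory bus and the 21Gbps GDDR6X speed. A quick sketch, using the usual peak-bandwidth formula (bus width in bytes multiplied by the per-pin data rate); the function name is just for illustration:

```python
def memory_bandwidth_gb_per_s(bus_width_bits, data_rate_gbps):
    """Peak memory bandwidth in GB/sec: bytes per transfer times per-pin data rate."""
    return bus_width_bits / 8 * data_rate_gbps

# 384-bit bus and 21Gbps GDDR6X, both figures from the review.
print(memory_bandwidth_gb_per_s(384, 21))  # 1008.0
```

That works out to 1008GB/sec, which is where the "over 1TB/sec" claim comes from.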

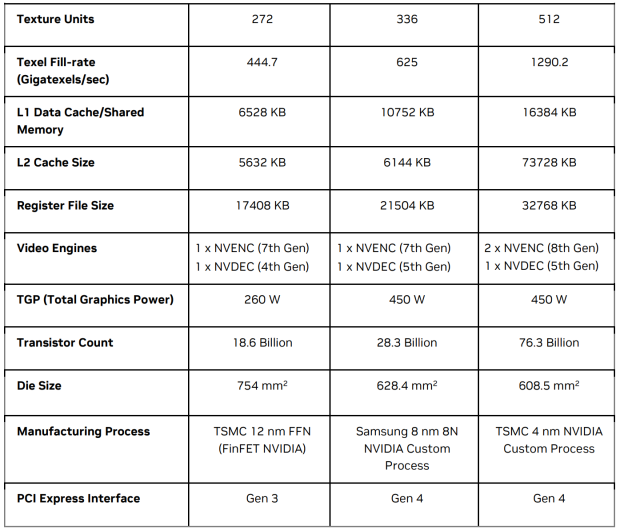

But other than that, it's an overhaul of specifications that include a huge 12x the L2 cache of the RTX 3090 Ti, with the RTX 4090 packing a huge 72MB of L2 cache in total.

NVIDIA has finally, finally shifted away from the Samsung 8nm process node for its Ampere GPUs over to TSMC using its custom 4N process node (5nm in reality, but it sounds cooler with TSMC 4N). AD102-300 packs a huge 76.3 billion transistors, and 16384 CUDA cores (over 18,000 in the full-fat Ada GPU).

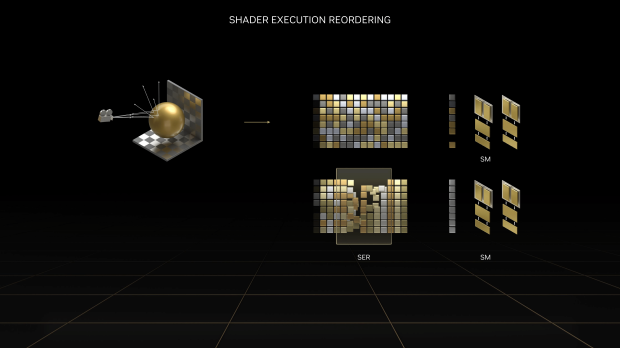

- Programmable Shader: 83 Shader-TFLOPS, over 2X compared to Ampere. Ada's SM includes a major new technology, called Shader Execution Reordering (SER), which reschedules work on-the-fly, giving a 2x speed-up for ray tracing. SER is as big an innovation as out-of-order execution was for CPUs.

- 4th Generation Tensor Core: New Tensor Core in Ada includes the NVIDIA Hopper FP8 Transformer Engine, delivering over 1.3 PetaFLOPS in the RTX 4090 for AI inference workloads. Compared to FP16, FP8 halves the data storage requirements and doubles AI performance. The RTX 4090 delivers more than 2x the total Tensor Core processing power of the RTX 3090 Ti.

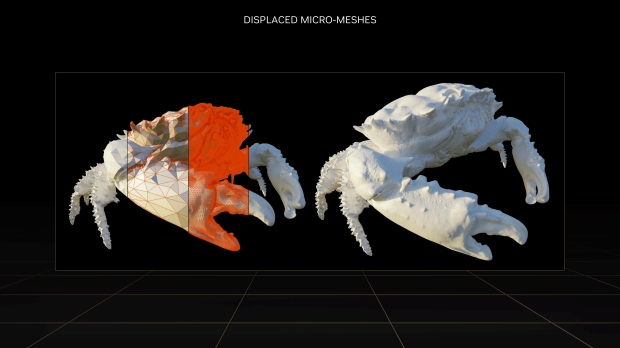

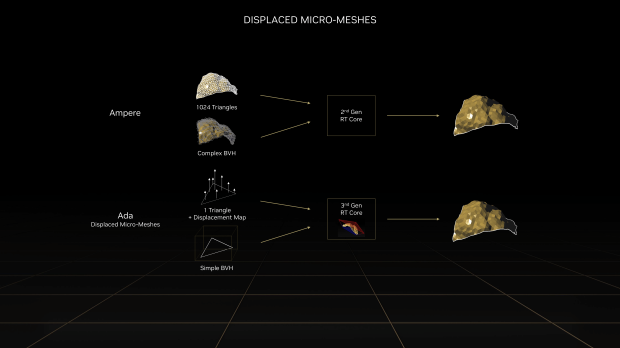

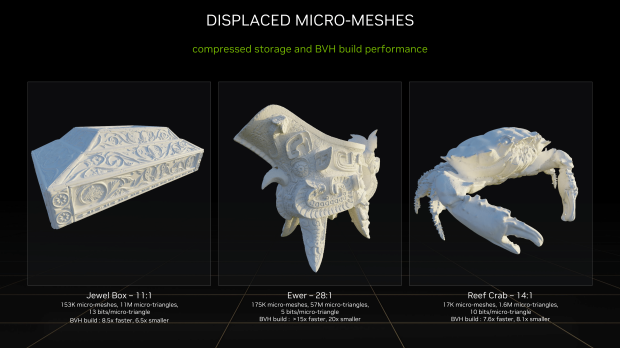

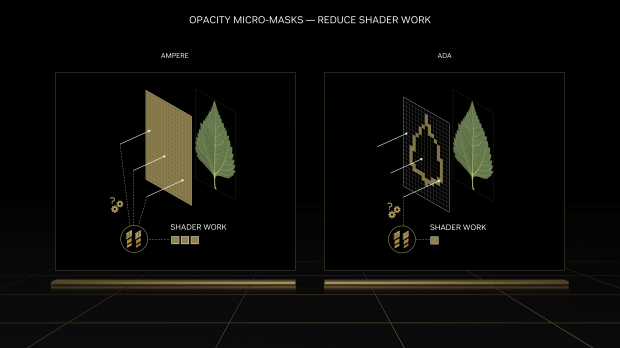

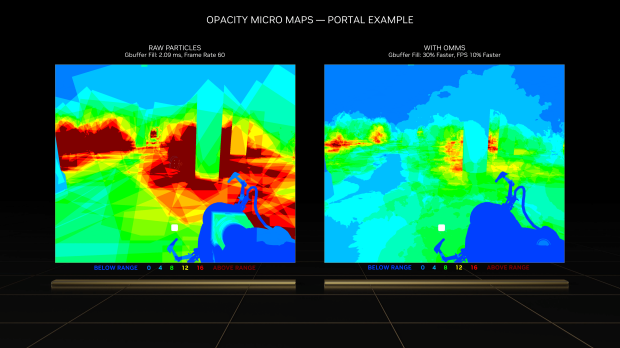

- 3rd Generation RT Core: A new Opacity Micromap Engine speeds up ray tracing of alpha-tested geometry by a factor of 2x, and a new Micro-Mesh Engine, which increases geometric richness without the BVH build and storage cost. Ada ray-triangle intersection throughput delivers 191 RT-TFLOPS, compared to Ampere's 78 RT-TFLOPS.
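The 83 Shader-TFLOPS number in the list above can be roughly reproduced from the CUDA core counts quoted earlier. This sketch assumes each CUDA core does one fused multiply-add (2 FLOPs) per clock, and it uses rated boost clocks of 2.52GHz for the RTX 4090 and 1.86GHz for the RTX 3090 Ti; those two clock values are assumptions brought in for the calculation, not figures measured in this review.

```python
def shader_tflops(cuda_cores, boost_clock_ghz, flops_per_core_per_clock=2):
    """Peak FP32 throughput in TFLOPS (one FMA counts as 2 FLOPs per clock)."""
    return cuda_cores * flops_per_core_per_clock * boost_clock_ghz / 1000

# Core counts are from the review; boost clocks are assumed rated values.
rtx_4090 = shader_tflops(16384, 2.52)
rtx_3090_ti = shader_tflops(10752, 1.86)

print(round(rtx_4090, 1))               # 82.6
print(round(rtx_3090_ti, 1))            # 40.0
print(round(rtx_4090 / rtx_3090_ti, 2)) # 2.06
```

That lands within rounding distance of NVIDIA's 83 Shader-TFLOPS figure, and a little over 2x the RTX 3090 Ti under the same assumptions.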

Detailed Look

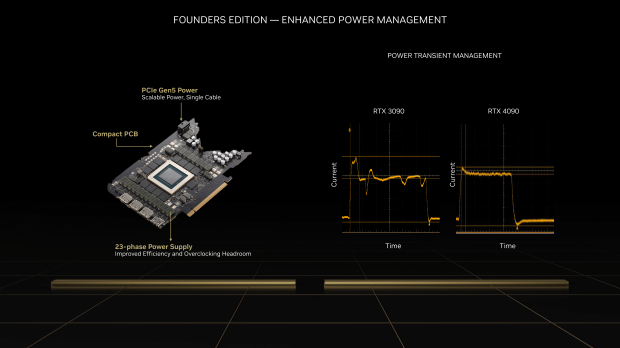

Founders Edition - Enhanced Power Management

NVIDIA's new flagship GeForce RTX 4090 Founders Edition shows how much work the company puts into the evolution of its Founders Edition graphics cards, with the new RTX 4090 FE featuring enhanced power management (this is a big one) and enhanced cooling (just as important).

The enhanced power management on the new GeForce RTX 4090 Founders Edition graphics card is a big upgrade from the current-gen GeForce RTX 3090 Founders Edition and the souped-up RTX 3090 Ti, which had power draw that smashed 450W and then some. The older RTX 3090 FE has inferior power transient management to the new RTX 4090 FE, which offers much more stable power to the card.

This stops those slumps and jumps in power to the card, which results in Ada Lovelace GPUs pushing far higher GPU clocks (2200MHz or so for the RTX 3090 Ti versus 2800MHz through to 3000MHz+ on the RTX 4090) for the same (if not lower) power.

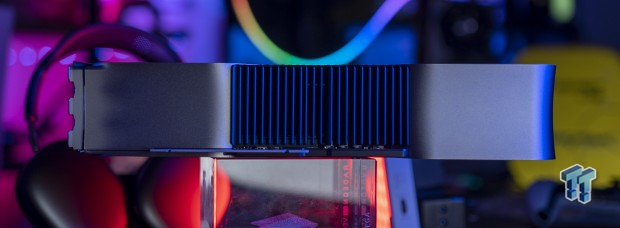

Founders Edition - Enhanced Cooling

NVIDIA's new GeForce RTX 4090 Founders Edition graphics card has a flow-through thermal design (the same as the RTX 30 FE series GPUs) but with larger fans that allow for 20% more airflow. NVIDIA has made improvements to the unibody design, which has been designed for strength and rigidity.

The new GeForce RTX 4090 FE also has enhanced memory efficiency, with lower-power GDDR6X modules used and improved airflow and temperature sensing. The original RTX 3090 used double-sided GDDR6X modules which meant not just the front of the card, but the back of the RTX 3090 FE used to get ridiculously hot with GDDR6X temperatures easily sitting at 110C all day and night long.

Not anymore...

Detailed Look @ RTX 4090 FE

NVIDIA's new GeForce RTX 4090 Founders Edition graphics card from the front doesn't look that different to the RTX 3090 FE, but in saying that, I'm still a huge fan of this design. The RTX 4090 FE design is one of my favorites, and I have custom RTX 4090 models from ASUS, MSI, COLORFUL, GAINWARD, and others.

On top of the RTX 4090 FE you've got that spiffy PCIe 5.0 16-pin power connector, which is ready to push 600W with its 4 x 8-pin PCIe power adapter that's included.

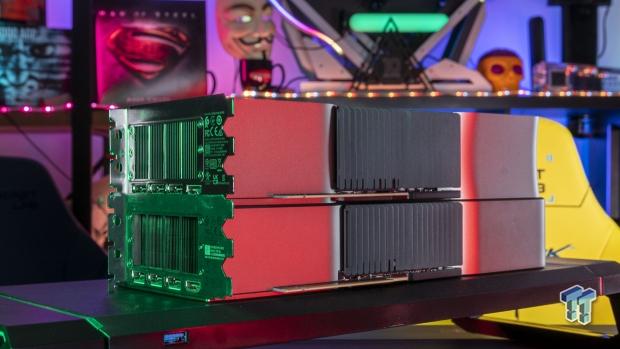

RTX 4090 FE vs RTX 3090 FE

This is the battle to end all battles in the RTX x090 Founders Edition graphics cards, so I've got both of NVIDIA's in-house GeForce RTX 3090 Founders Edition and the new GeForce RTX 4090 Founders Edition in some comparison side-by-side (and sometimes on top of each other). I don't have an RTX 3090 Ti FE card, so the RTX 3090 FE + RTX 4090 FE cards will have to do.

NVIDIA's new GeForce RTX 4090 FE is actually a tad shorter than the GeForce RTX 3090 FE, with the "RTX 4090" and its new font taking up less space on the card than the "RTX 3090". You can see the RTX 4090 FE has a slightly bigger fan, longer than the RTX 3090 FE fan, right up to the edge of the card.

The new RTX 4090 FE might be a little shorter, but it's definitely thicker than the RTX 3090 FE. Don't get me wrong, both cards are triple-slot GPU beasts, and while the RTX 4090 FE is the thicker of the two, it's not as crazy as what the AIBs have done with their custom GeForce RTX 4090 graphics cards.

You can see just how thick when you're up close, with the RTX 3090 FE on the left and the new RTX 4090 FE on the right.

Here you can see the RTX 4090 FE (on top) is shorter and thicker while the RTX 3090 FE (on the bottom) is slightly longer, and slightly thinner.

Once again from the side, the RTX 4090 FE is on top here, and the RTX 3090 FE is on bottom.

Test System Specs

I've recently upgraded my major GPU test bed for 2022, but I will be upgrading again soon enough once Intel launches its new 13th Gen Core "Raptor Lake" CPUs and Z790 motherboards, and AMD with its upcoming Ryzen 7000 series "Zen 4" CPUs and X670E motherboards.

The new upgrades include the shift to the Intel Core i9-12900K processor, ASUS ROG Maximus Z690 Extreme motherboard, 64GB of Sabrent Rocket DDR5-4800 memory, and 8TB of Sabrent Rocket 4 Plus PCIe 4.0 M.2 SSD goodness. Intel's flagship Core i9-12900K is a beast, with the Alder Lake CPU packing 8 Performance cores (P-cores) and 8 Efficient cores (E-cores) at up to 5.2GHz.

Motherboard: ASUS ROG Maximus Z690 Extreme

I've got that installed into the bigger-than-life ASUS ROG Maximus Z690 Extreme motherboard, which is so loaded to the brim with technologies and features that it houses everything you need. We're talking about one of the best-looking designs on a motherboard yet, PCIe 5.0 support, enthusiast-grade 10GbE networking, and oh-so-much more.

RAM: 64GB Sabrent Rocket DDR5-4800

Sabrent helped out in a huge way by sending over 64GB of DDR5-4800 memory in the form of 4 x 16GB DDR5-4800 modules of its new Sabrent Rocket DDR5 memory. The company also helped out in an even bigger way, supplying us with a gigantic and super-fast 8TB model of its Sabrent Rocket 4 Plus PCIe 4.0 NVMe M.2 SSD.

SSD: 8TB Sabrent Rocket 4 Plus M.2

We're talking about 7.5GB/sec+ (7500MB/sec) from a single M.2 SSD, along with a gigantic 8TB of capacity. The 2TB drives aren't big enough for all of our game installs for GPU testing... the 4TB is much better, but the 8TB gives us room to move into 2023 without worrying about installing multiple games that are 200GB+ in size.

Some glory shots, of course.

Displays: ASUS ROG Strix 43-inch 4K 120Hz

ASUS has been a tight partner of TweakTown for many years, with the fine folks at ASUS Australia sending over their ROG Strix XG438Q and ROG Swift PG43UQ gaming monitors for our GPU test benches. They're both capable of 4K 120Hz+ through their DisplayPort 1.4 connectivity.

I will be upgrading these in the near future, over to some DisplayPort 2.0-capable panels and some new HDMI 2.1-enabled 4K 165Hz panels in OLED form of course... given that next-gen GPUs are right around the corner, there has been no better time to upgrade your display or TV.

I've been working on this system for a while now, but now we're stretching its legs with the newly-released PC port of Marvel's Spider-Man Remastered. Not just in 1080p or 1440p, not even in just 4K... but at 8K with a native resolution of 7680 x 4320. I've run through some of the very fastest GPU silicon on the planet.

- CPU: Intel Core i9-12900K

- Motherboard: ASUS ROG Maximus Z690 Extreme

- Cooler: CORSAIR iCUE H150i ELITE LCD Display

- RAM: Sabrent Rocket 64GB DDR5-4800 (4 x 16GB)

- SSD: Sabrent 8TB Rocket 4 Plus PCIe 4.0 NVMe M.2 SSD

- PSU: MSI MPG A1000G Gaming Power Supply 1000W

- Case: InWin X-Frame 2.0

- OS: Microsoft Windows 11 Pro x64

- Display: ASUS ROG Swift PG43UQ (4K 120Hz)

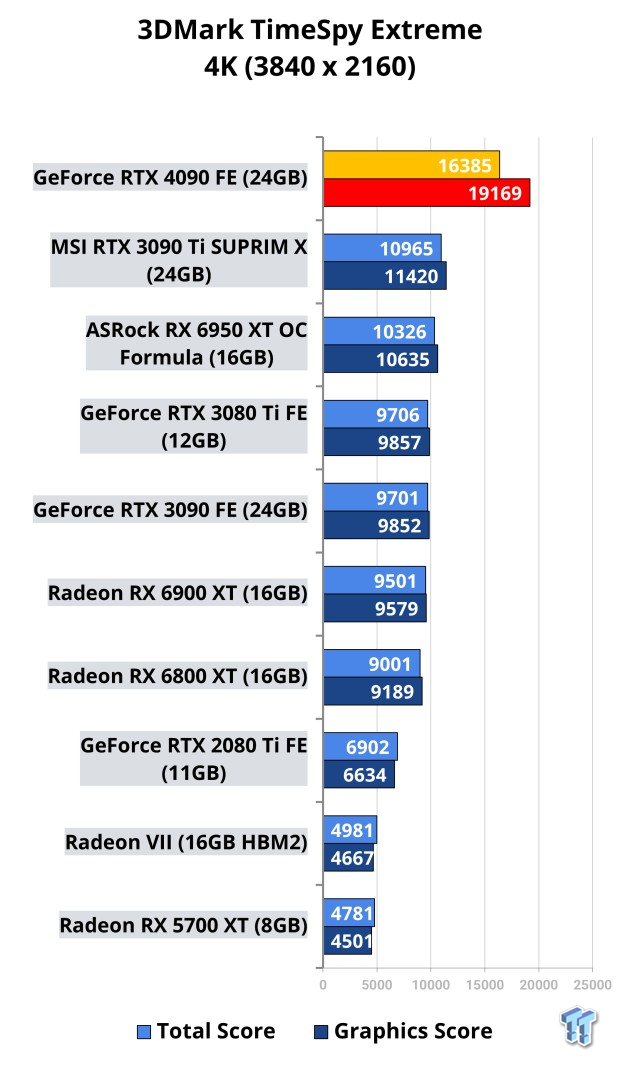

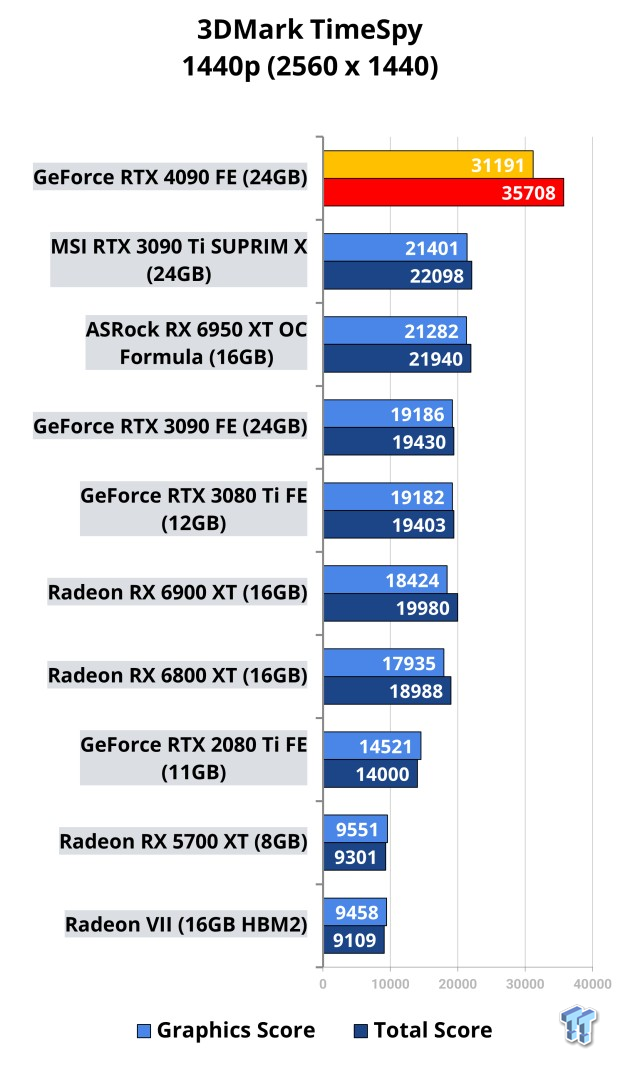

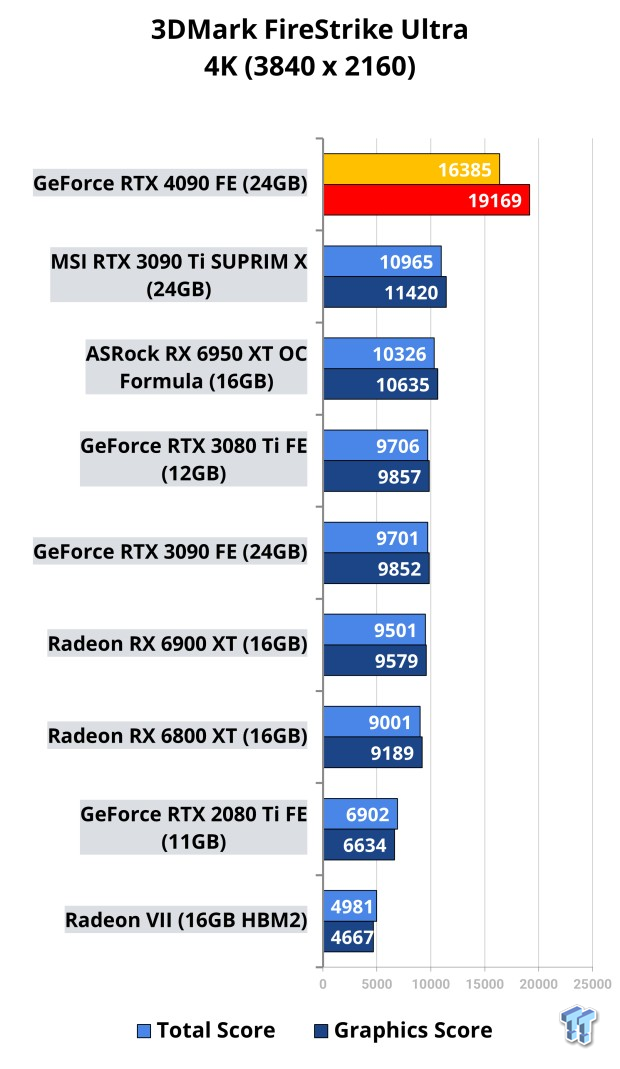

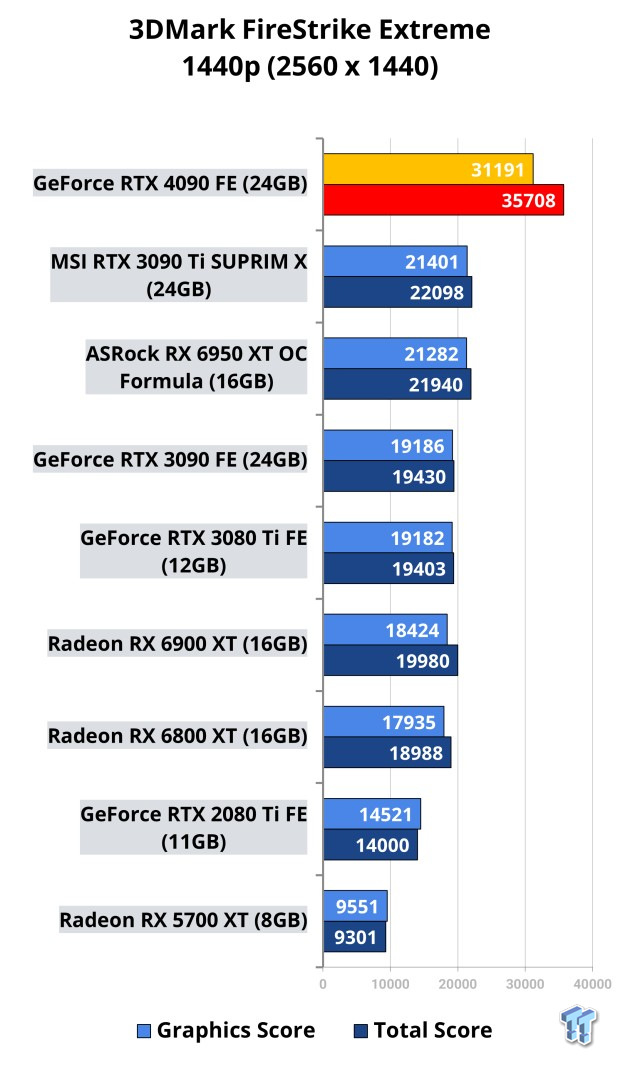

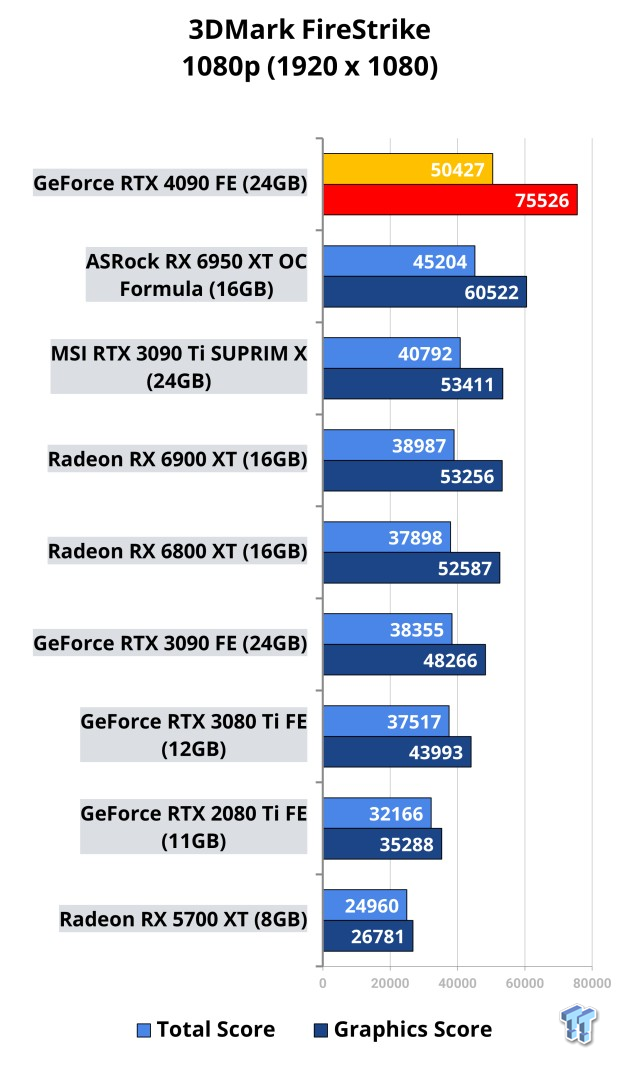

Benchmarks - Synthetic

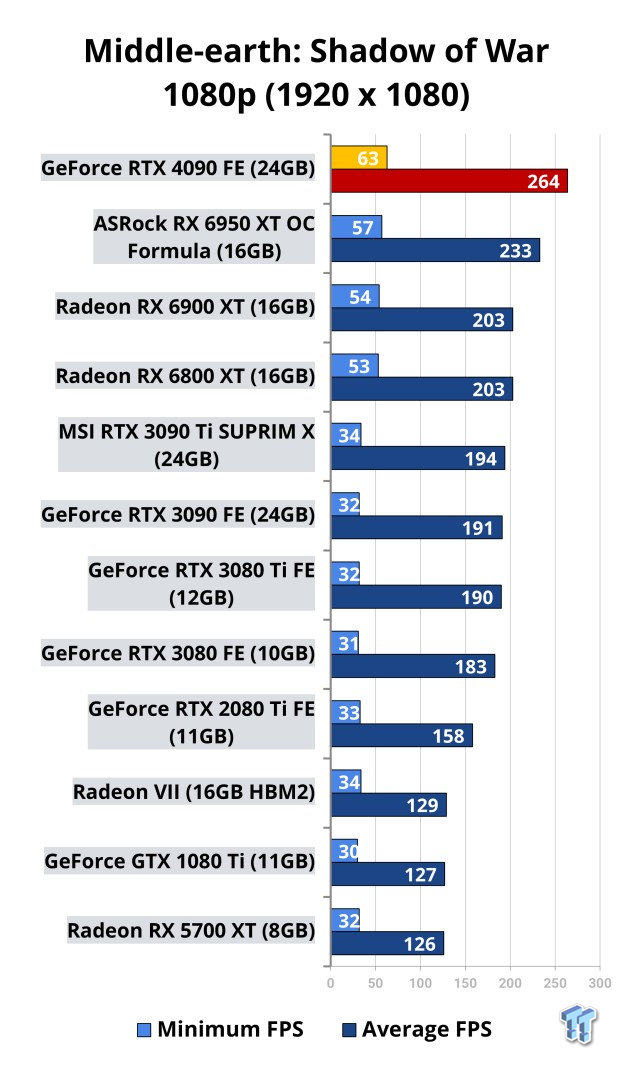

Benchmarks - 1080p

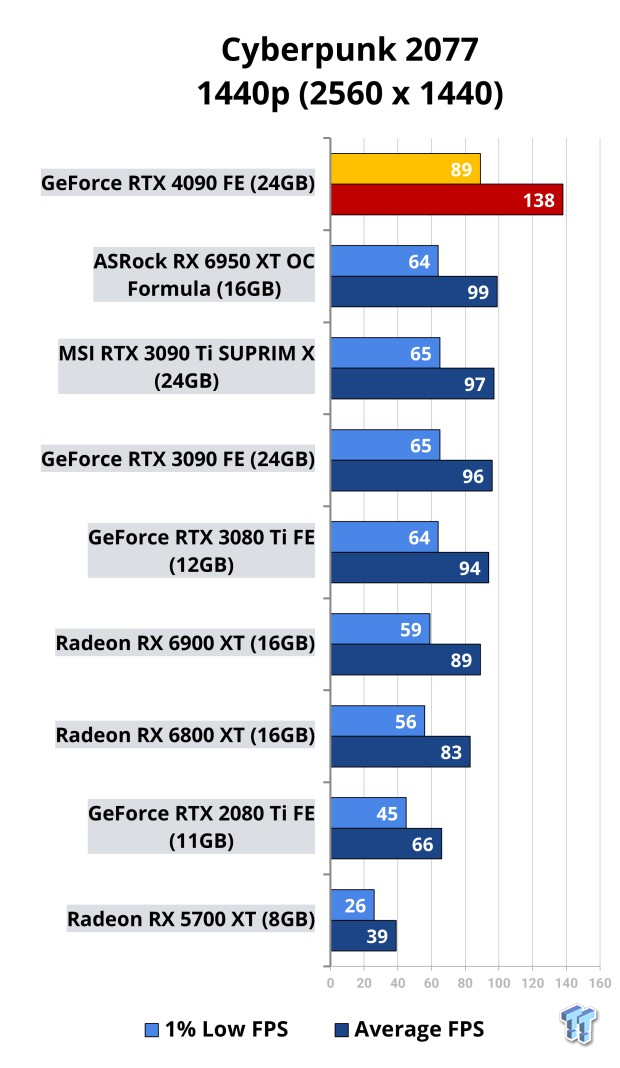

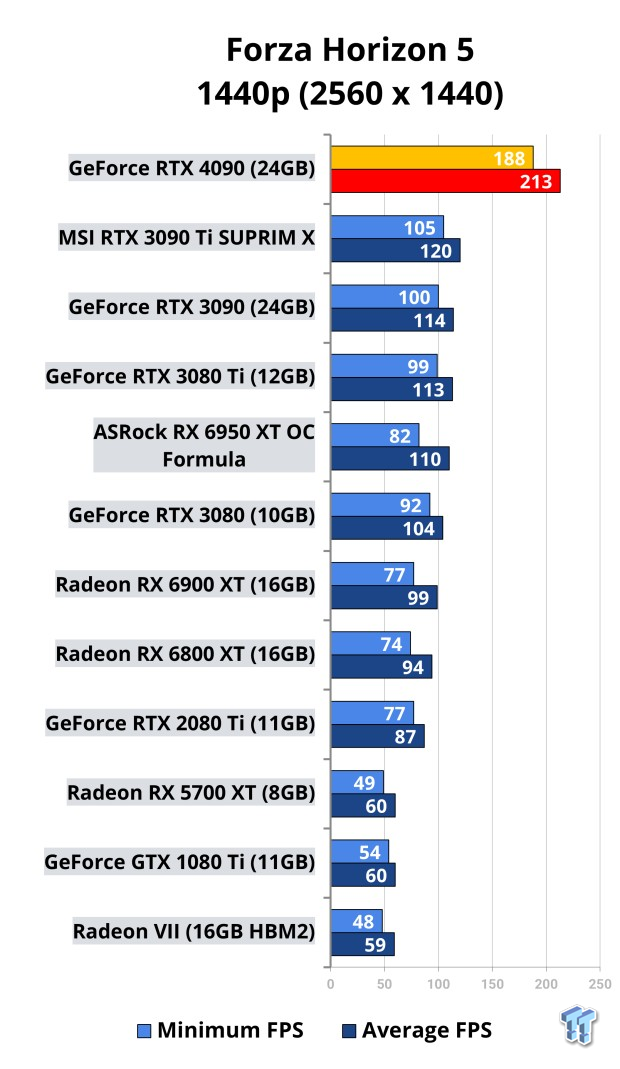

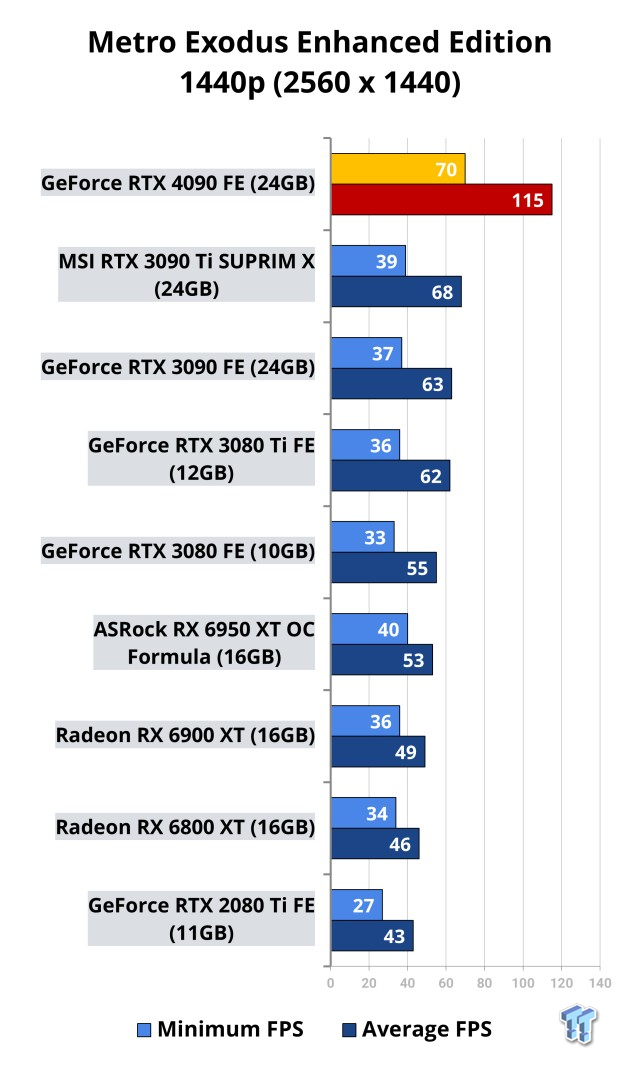

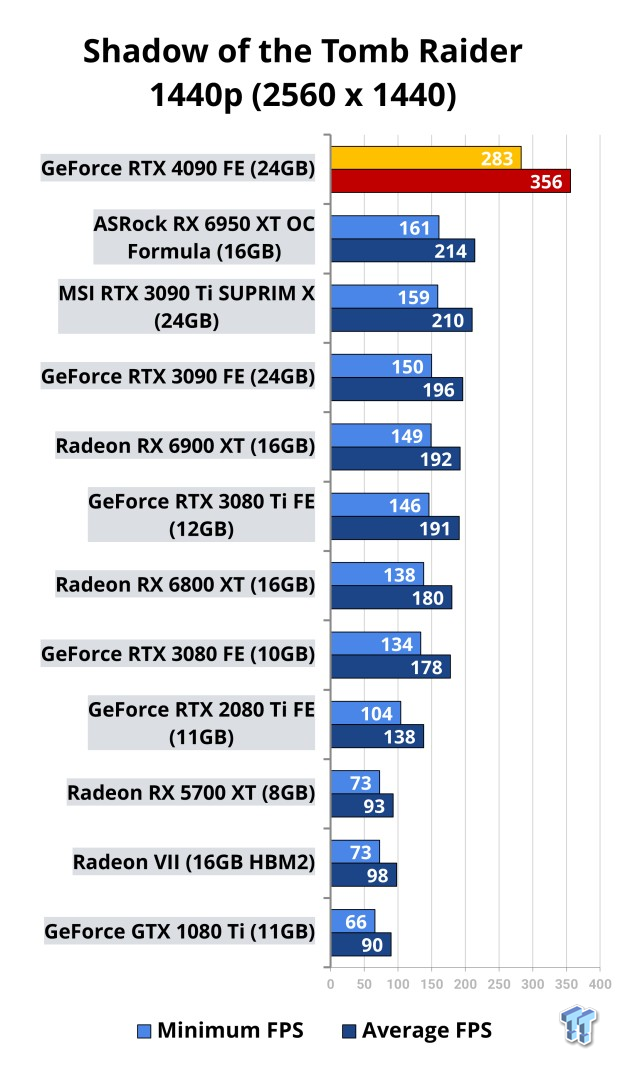

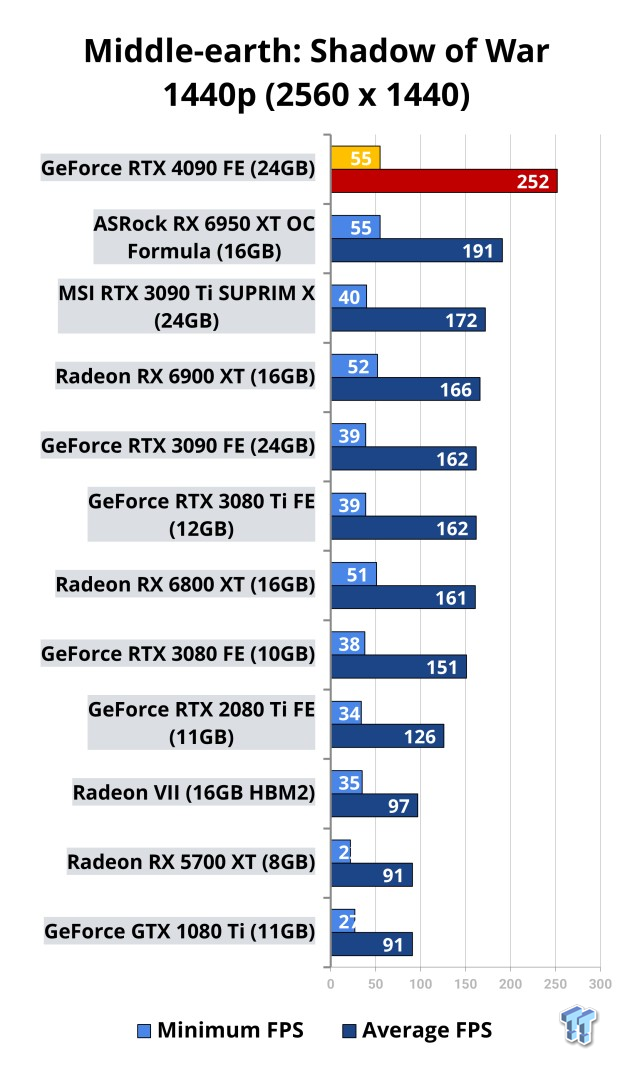

Benchmarks - 1440p

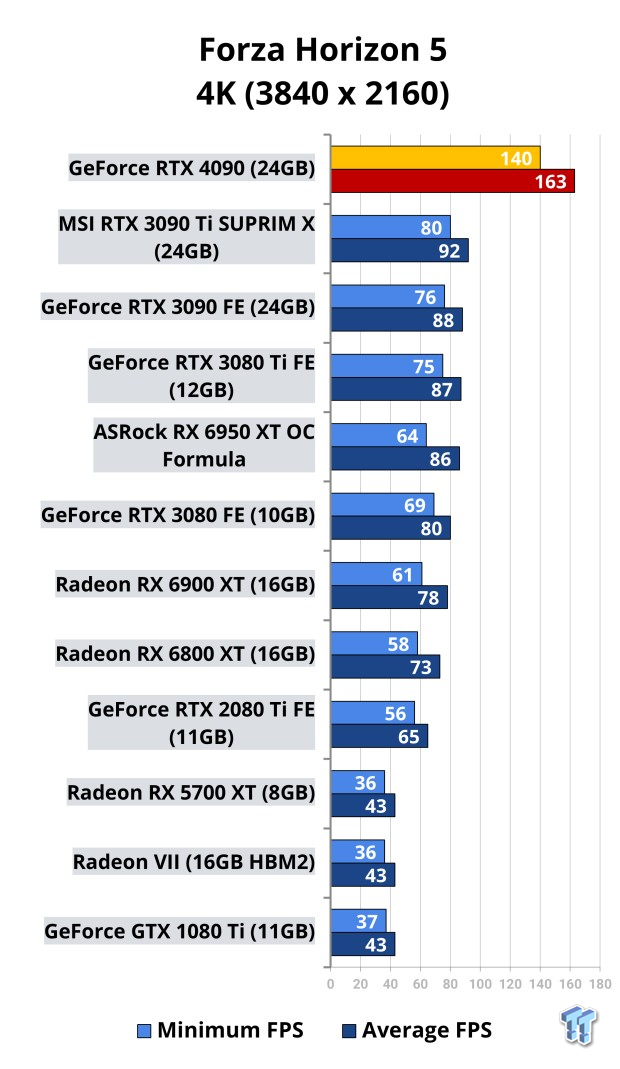

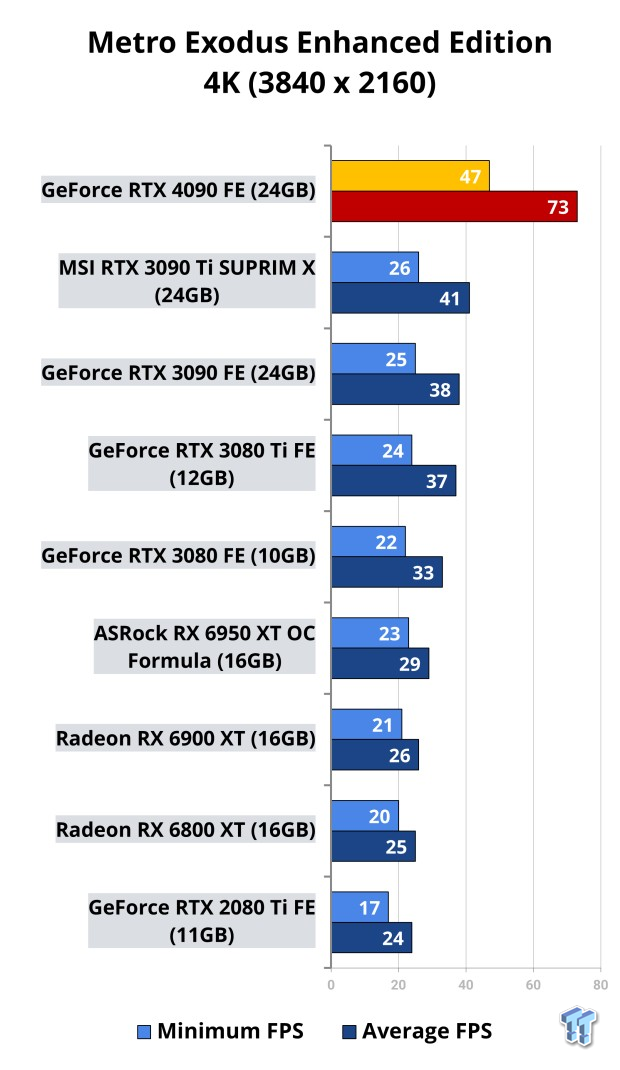

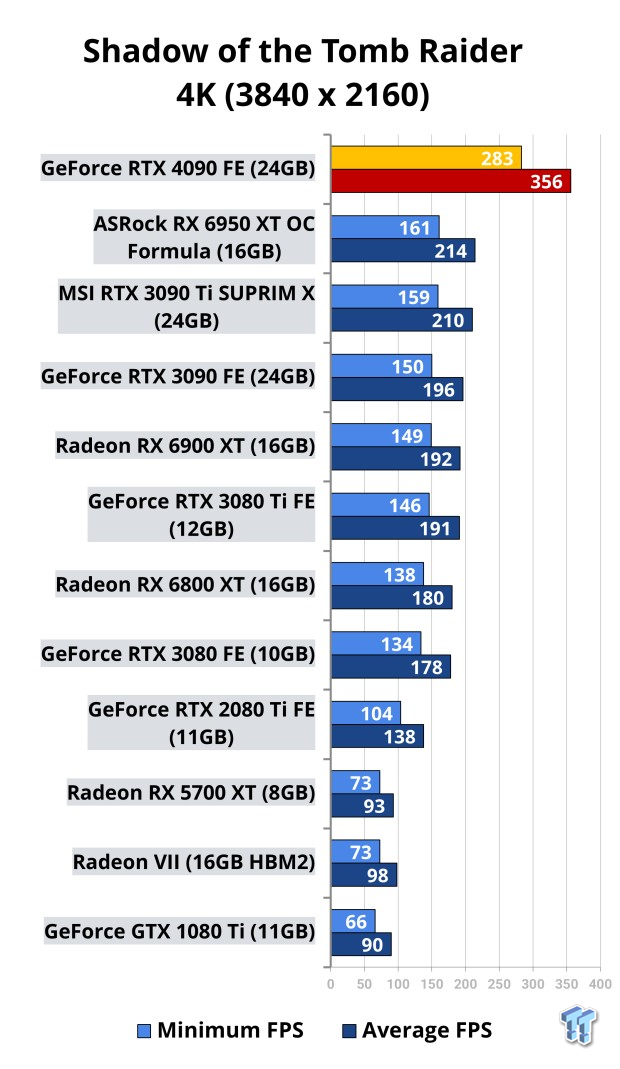

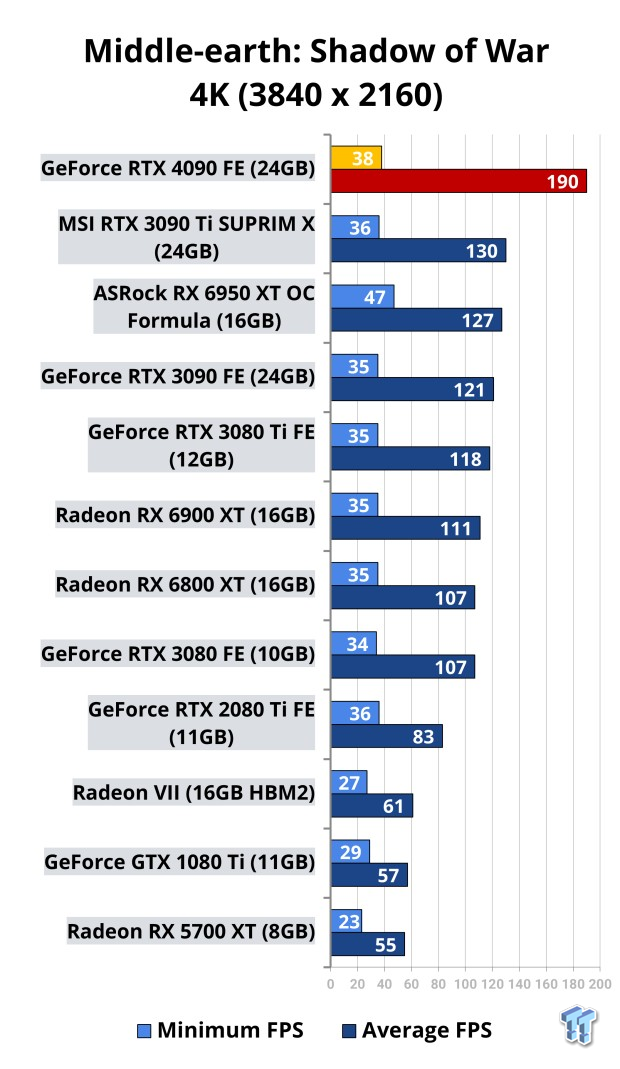

Benchmarks - 4K

Benchmarks - 8K

Coming soon... but these results, man... they'll be worth it.

Temperatures & Power Consumption

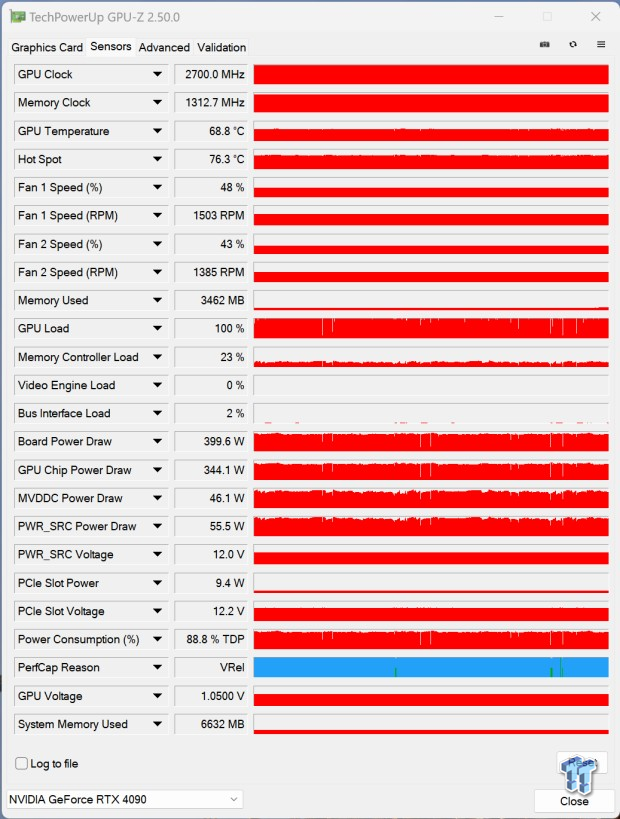

NVIDIA's new GeForce RTX 4090 Founders Edition was hitting an average of 2700MHz GPU clocks, while the GPU temps are pretty cool at under 70C during gaming and benchmark loads, with the GPU hotspot sitting at around 76C. The fans on the RTX 4090 FE were running at 48% (1500RPM or so) and 43% (1385RPM or so).

The entire board power was sitting at around 400W of power consumption, but the Ada Lovelace GPU is much more efficient (along with the RTX 40 FE board design and TSMC 4N process node) meaning you've got the power to turn down the power limit slider and maintain kick-ass next-gen GPU performance.

What's Hot, What's Not

What's Hot

- Fastest GPU money can buy: If you want the very fastest graphics card that money can buy, NVIDIA's new GeForce RTX 4090 Founders Edition is worthy of your hard-earned money. If you held out on buying the GeForce RTX 30 series GPUs, then the GeForce RTX 4090 is going to be a gigantic leap in gaming performance.

- 3.0GHz+ GPU clocks, a GeForce GPU first: Ada Lovelace + TSMC 4N process node has allowed NVIDIA to push the GPU clock to the limit on the GeForce RTX 4090, where you're easily able to overclock it up to 3.0GHz -- which is the first time NVIDIA has shipped a GeForce graphics card that has over 3.0GHz GPU clocks.

- Runs cooler, and uses less power than RTX 3090 Ti: Another big thing for the RTX 4090 is that it both runs cooler, and uses less power (50-70W+) than the custom RTX 3090 Ti graphics cards. That's not bad considering it'll also beat the pants off of the RTX 3090 Ti in virtually everything you throw at the RTX 4090.

- More stable, fewer power spikes than RTX 3090 Ti: NVIDIA has made some gigantic improvements here with Ada, as the previous Ampere-based RTX 3090 Ti was not as stable at high GPU clocks, and had major power spikes (up and down, up and down) compared to the RTX 4090 which is rock, freaking, solid.

- Uncompromising 4K 120FPS gaming performance: 4K 120FPS+ gaming continues to improve with each generation of GPU -- something that I concentrate on -- and with Ada Lovelace's brute rasterization power, 4K 120FPS gaming just got even better with NVIDIA's new flagship GeForce RTX 4090 graphics card.

- 8K 60FPS+ gaming is a reality: 8K gaming isn't quite mainstream yet, but there are people with 8K monitors (and more so, 8K TVs) and 8K 60FPS+ gaming is actually achievable on the RTX 4090. First off, the 24GB of GDDR6X is not just welcomed but required... you'll be using 20GB+ easily in today's titles at 8K.

- 24GB GDDR6X memory: You might not think you need 24GB of VRAM, but high-end 8K gaming and content creation can chew through that 24GB frame buffer in seconds. This is a premium GPU, so a premium amount of GDDR6X memory is here for your every need. I do wish it was faster bandwidth goodness, but we'll see that in 2023.

- TSMC 4N process node: NVIDIA has been limping along using a custom 8nm process node at Samsung, but now they've been unshackled with Ada Lovelace being made on TSMC's new 4N process node (which in reality, is 5nm, but "4N" is for NVIDIA).

- DLSS 3: I say this with every evolution, but DLSS is like black magik -- only this time, NVIDIA has sacrificed something (Jensen, maybe) to the AI upscaling technology gods. DLSS 3 is freaking incredible, truly offering double, triple, and sometimes even more performance over native rendering. It's simply amazing to sit back and watch Microsoft Flight Simulator or Cyberpunk 2077 go from 60FPS or so, to 150FPS+ with a few button presses enabling DLSS 3.

- Ray tracing + DLSS 3 = more FPS than no ray tracing: DLSS 3 super-powers any game that's blessed enough to feature Ada's exclusive frame generation technology, and in games like Cyberpunk 2077 turning DLSS 3 on allows you to run ray tracing with MORE performance, not less.

What's Not

- No DisplayPort 2.0 connector, WTF: NVIDIA not including the new display connector moving forward -- DP2.0 -- is a huge mistake. It means anyone buying a $1500+ graphics card in the tail end of 2022 is not going to be able to use next-gen DP2.0-capable gaming monitors that we'll begin seeing debut in the coming months, probably at CES 2023 in January. WTF, NVIDIA? Even Intel's is-it-even-real Arc GPUs have a DP2.0 port.

- Weird marketing: I really, REALLY don't like the whole BS "2x performance" and "4x performance" multiplier marketing that NVIDIA has used because it's SO specific (DLSS 3 + ray tracing, etc). It's not real, and not indicative of what I would want to push out to my audience.

- Jensen isn't even real anymore, yo: Jensen's appearance during GTC 2022 for the "GeForce Beyond" event when the RTX 40 series GPUs were unveiled, felt like he's deep faked there. Not that this goes against the RTX 4090, but Jensen and his leather jacket are just DLSS 4-powered future versions of the founder and CEO of NVIDIA.

Final Thoughts

NVIDIA's new GeForce RTX 4090 is an absolute powerhouse of a graphics card, and is purely for the ultra-enthusiast gamers and users out there... if you're not a 4K 120Hz+ monitor or TV owner (with HDMI 2.1) then this isn't for you. If you are one of those gamers or enthusiasts -- I am -- then man, you're in for a wild ride.

Slotting in the huge GeForce RTX 4090 graphics card inside of any gaming machine, even if you're taking out an already-fast GeForce RTX 3090... you're going to FEEL the performance improvement. Normally it's 10-20% but this is a monumental upgrade for 4K 120FPS+ gamers, with Ada Lovelace delivering FPS improvements at ALL resolutions, but 4K 120FPS+ is an important market for NVIDIA.

If you don't touch the power limit slider and leave it at the default 450W setting, you're going to be pleasantly surprised with NVIDIA's new GeForce RTX 4090. All that testing the company did with its not-so-old RTX 3090 Ti has paid off because the boards used here for the RTX 4090 are a serious step above.

You could easily throw a new GeForce RTX 4090 into a system that is using the GeForce RTX 3090 or RTX 3090 Ti, and not have to upgrade your PSU. You're not going to be using 600W+ on this card unless you've got LN2 cooling, spend days modding the card, and push it past 3.0GHz+ all day long. For 99% of people who don't do that, you're going to be pleasantly surprised with how good the RTX 4090 is on power and thermals (compared to the RTX 3090 Ti, RTX 3090).

The world of 3.0GHz+ GPU clocks on NVIDIA is here... which I didn't think we'd see this generation, especially after Ampere hit a wall at around 2.2GHz or so. I've got to say though, seeing 3015MHz GPU boost clocks on the GeForce RTX 4090 really is something else.

- Who the RTX 4090 is for: Ultra enthusiasts, hardcore gamers, performance users... whether you're breaking benchmark records, gaming at 1440p 120FPS+, or better yet gaming at 4K 120FPS+ (where the RTX 4090 is best suited), then the RTX 4090 is for you, without a doubt. Should you wait for Navi 31? Well, that's up to you. If you're playing games with DLSS (especially DLSS 3) then the RTX 4090 is mighty tasty (right now).

- Why you should buy the RTX 4090: If you want the best performance money can buy, in October 2022 that would be the GeForce RTX 4090. Nothing comes close. It makes AMD cry in shame, but that's with their current-gen RDNA 2-based Radeon RX 6000 series GPUs. We have another month to go until RDNA 3 debuts with the flagship Navi 31 GPU.

- Should you wait for AMD and RDNA 3? Well, that's up to you. If you're already a GeForce gamer and no matter what AMD releases in a next-gen Radeon, you're buying the next-gen GeForce... then your mind has been made up. GPU prices have fallen, so a $1599+ priced RTX 4090 is actually not too bad (you were paying more than this a year ago for half the GPU).

We heard rumors of 600W+ and even 850W+ insanity but for NVIDIA's new flagship GeForce RTX 4090... it's just not needed. 450W is perfectly fine for the GPU to hit a ceiling at, with none of my custom cards using more than that (on average, if you check the max slider, it will breach 450W easily). But you can power limit the GPU and it can use sub 350W and still kill everything else on the market.

I can't remember the last time I installed a new graphics card and had so many 'OMG' moments out loud; the performance of the new GeForce RTX 4090 is simply insane. If you've got it paired with a new Intel Core i9-13900K (or the previous-gen Core i9-12900K, and similar from Intel) or AMD's just-released Ryzen 9 7900X or 7950X "Zen 4" CPU, then you've got a match made in heaven.

Grab yourself a huge 4K 120Hz+ OLED TV or a decently sized (43-inch or bigger) 4K 120Hz+ gaming monitor, and you're going to be in gaming heaven... NVIDIA's new GeForce RTX 4090 is the graphics card you've been waiting for: capable of pumping 4K 120FPS in today's and even tomorrow's games if they have DLSS 3 support.

NVIDIA's new GeForce RTX 4090 Founders Edition is a beautiful card in its own right, one of the best-looking RTX 4090s that you can buy, and would look magnificent in any gaming PC (albeit, if it can fit). It's a mammoth-looking card, but all of those cooling chops underneath keep the AD102 GPU and 24GB of ultra-fast GDDR6X memory as cool as possible.

The cooling technology that NVIDIA has beefed up on the GeForce RTX 4090 Founders Edition might be invisible to the naked eye -- at least without ripping the cooler off -- but there is a LOT of work that the company has put into it. Gone are the days of super-hot FE cards, and here are the days of cool-operating RTX 40 series FE graphics cards.

NVIDIA moving away from using a custom 8nm process at Samsung and over to a new custom TSMC 4N process node (5nm) is helping here too.

From here, we have AIB partners with custom GeForce RTX 4090 graphics cards, where right now I have:

- MSI GeForce RTX 4090 SUPRIM X

- MSI GeForce RTX 4090 SUPRIM LIQUID X (AIO cooler)

- ASUS ROG Strix GeForce RTX 4090 OC Edition

- COLORFUL iGame GeForce RTX 4090 Vulcan OC-V

- GAINWARD GeForce RTX 4090 Phantom "GS"

The reviews will be in that order (as I received the samples) in the coming days, with MSI, ASUS, and COLORFUL up first. In between, and after, I'll take a closer look at 8K gaming performance, ray tracing performance, and as many DLSS 3-powered games as I can test.

Wrapping up, if you want the biggest and most bad-ass graphics card you can buy: NVIDIA's new GeForce RTX 4090 is a treasure. It's not for everyone, but not every $1599+ product is. Not everyone walks into a Tesla or Ferrari dealership and complains about the price of the cars... they know what customers they are going to get from the very start.

NVIDIA knows this is going to appeal to a particular part of the market, and they have nailed it with the GeForce RTX 4090. Yet another fantastic flagship next-gen GPU, with multiple improvements that make the RTX 4090 Founders Edition something truly special.