IBM Research has spent the last 8 years working on its first-ever AI-dedicated chip, which it just unveiled as NorthPole, with the tech giant saying it is 22x faster than any other industry offering.

IBM's new AI accelerator, codenamed NorthPole, has one aim: surpassing the computing performance of the industry leaders and meeting the insatiable demand for all things AI. With NorthPole, IBM is doing something a little different from competitors such as NVIDIA, AMD, and others.

The NorthPole project's leader, Dharmendra Modha, sees a bright future for the chip because its architecture is different. IBM Research has said that it is building the inference architecture into the chip itself, which is why Modha can refer to NorthPole as a "human brain". It pairs efficient CPU interconnectivity with an all-digital architecture that makes on-chip communication much faster, and that is where IBM says NorthPole's dominance in AI processing comes from.

IBM is using the older, proven 12nm process node, but the company still thinks NorthPole on 12nm surpasses current-gen AI GPUs that are made on 4nm over at TSMC (Taiwan Semiconductor Manufacturing Company). How? IBM says it comes down to its results with the ResNet-50 neural network model, which negates Moore's Law -- yeah -- and moves towards Huang's Law, where individual chip stacking is the objective rather than process shrinking.

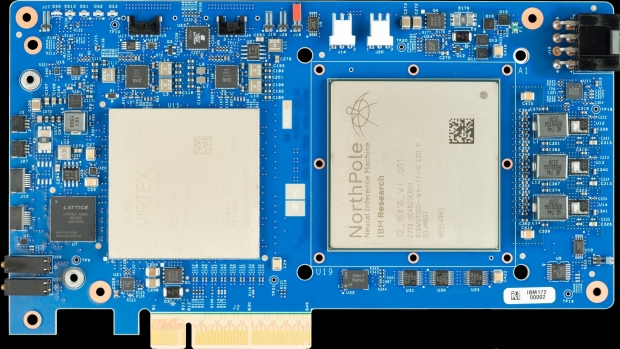

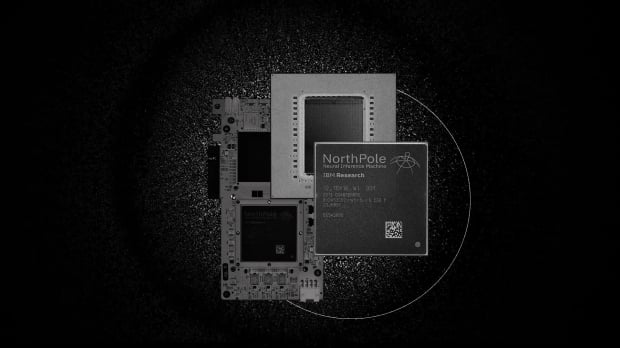

NorthPole measures 800mm², packing only 22 billion transistors -- compare that to NVIDIA's GH100 AI die, which measures a barely bigger 814mm² but packs an insane 80 billion transistors. NorthPole has 256 cores, each capable of performing 2048 operations per cycle at 8-bit precision, so if the AI model fits inside its memory, NorthPole is incredibly fast.
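To put those figures side by side, here is a rough back-of-the-envelope sketch in Python. It uses only the numbers quoted above; the function and constant names are made up for illustration, and the clock speed is left as a parameter because it isn't given here.

```python
# Back-of-the-envelope arithmetic using only the figures quoted in the article.
# All names below are illustrative, not IBM or NVIDIA terminology.

NORTHPOLE_CORES = 256
OPS_PER_CORE_PER_CYCLE = 2048          # at 8-bit precision

NORTHPOLE_AREA_MM2 = 800
NORTHPOLE_TRANSISTORS = 22_000_000_000
GH100_AREA_MM2 = 814
GH100_TRANSISTORS = 80_000_000_000


def ops_per_cycle(cores: int, ops_per_core: int) -> int:
    """Peak operations the whole chip can issue in a single clock cycle."""
    return cores * ops_per_core


def density_millions_per_mm2(transistors: int, area_mm2: float) -> float:
    """Transistor density in millions of transistors per square millimetre."""
    return transistors / area_mm2 / 1e6


def peak_ops_per_second(ops_cycle: int, clock_hz: float) -> float:
    """Peak throughput for a given clock; the clock is an input, not a quoted figure."""
    return ops_cycle * clock_hz


if __name__ == "__main__":
    total = ops_per_cycle(NORTHPOLE_CORES, OPS_PER_CORE_PER_CYCLE)
    print(f"NorthPole peak 8-bit ops per cycle: {total:,}")  # 524,288

    np_density = density_millions_per_mm2(NORTHPOLE_TRANSISTORS, NORTHPOLE_AREA_MM2)
    gh_density = density_millions_per_mm2(GH100_TRANSISTORS, GH100_AREA_MM2)
    print(f"NorthPole density: {np_density:.1f}M transistors/mm^2")  # 27.5
    print(f"GH100 density:     {gh_density:.1f}M transistors/mm^2")  # about 98.3
```

In other words, the two dies are nearly the same size, but GH100 crams in roughly 3.6 times as many transistors per square millimetre, which is about what you'd expect from the 12nm versus 4nm gap.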

IBM's new NorthPole AI processor can only perform AI inferencing, so unlike GPUs or CPUs from NVIDIA, AMD, and Intel, it can't be used to train large language models (LLMs). NorthPole cannot run GPT-4, in case you were wondering.

Modha said: "It's an entire network on a chip. Architecturally, NorthPole blurs the boundary between compute and memory. At the level of individual cores, NorthPole appears as memory-near-compute and from outside the chip, at the level of input-output, it appears as an active memory".

He added: "We can't run GPT-4 on this, but we could serve many of the models enterprises need. And, of course, NorthPole is only for inferencing".