TSMC is seeing its three biggest customers revise their orders all at the same time: AMD, Apple, and NVIDIA, for their next-gen RDNA 3, iPhone 14, and Ada Lovelace products, respectively.

NVIDIA is the big one that we'll tackle in this article because it's in a really precarious spot. NVIDIA has been in bed with Samsung for a while now, but it is leaving South Korea for Taiwan and going back to its old faithful, TSMC (Taiwan Semiconductor Manufacturing Company), for its next-gen Ada Lovelace GPU architecture and upcoming GeForce RTX 40 series graphics cards... and, well, it has hit a snag.

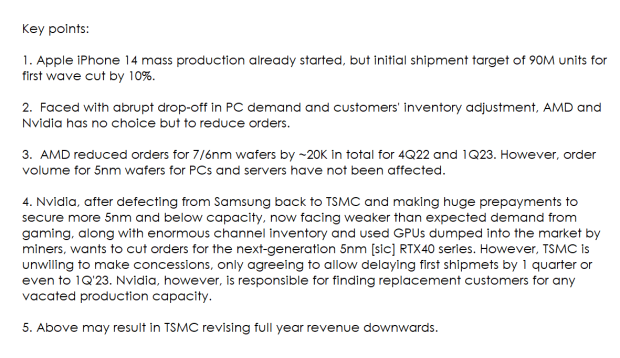

According to the latest report from DigiTimes, translated by @RetiredEngineer on Twitter: "Faced with abrupt drop-off in PC demand and customers' inventory adjustment, AMD and NVIDIA has no choice but to reduce orders".

If you remember, late last year NVIDIA coughed up big money to TSMC to ensure that it had enough wafers for its next-gen Ada Lovelace GPU architecture... and now, things are getting rocky. NVIDIA has a boatload of leftover inventory after cryptocurrency prices dropped and left crypto miners out in the cold.

That means lots of NVIDIA GeForce RTX 30 series graphics cards with nowhere to go and no customers to sell them to, in a harder economic time when there's no real reason to upgrade right now. RetiredEngineer continues, saying that NVIDIA, "along with enormous channel inventory and used GPUs dumped into the market by miners, wants to cut orders for the next-generation 5nm RTX 40 series".

But here's the important bit: TSMC is unwilling to make concessions.

The only concession TSMC is willing to give NVIDIA is to allow it to delay the first shipments by one quarter, or shift them into Q1 2023. But NVIDIA would then be responsible for finding replacement customers for any vacated production capacity.