Alright... this is a pretty exciting tease in the lead-up to Christmas: NVIDIA's mysterious new "GPU-N", which has been unveiled in a research paper published by the company.

The paper is titled "GPU Domain Specialization via Composable On-Package Architecture", and in it NVIDIA talks about a next-generation GPU design using a "Composable On-Package Architecture (COPA)" as the most practical way to keep the memory system in step with rapidly growing low-precision matrix math throughput for Deep Learning. Simulated performance is teased, which is the most exciting part.

But first, GPU-N specs:

- GPU-N: 134 SM units (108 SMs in A100)

- 8576 CUDA cores (24% more than A100)

- 60MB L2 cache (50% more than A100)

- DRAM bandwidth: freaking bonkers 2.68TB/sec (scales up to what-the-hell 6.3TB/sec)

- up to 100GB of HBM2e (expandable to 233GB with COPA implementations)

- 6144-bit memory bus @ 3.5 Gbps
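As a quick sanity check on those memory numbers: peak DRAM bandwidth is just bus width multiplied by the per-pin data rate, divided by 8 to go from bits to bytes. Here's a minimal Python sketch of that arithmetic (the function name is my own for illustration, not something from NVIDIA's paper):

```python
def peak_dram_bandwidth_gbs(bus_width_bits: int, pin_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s: every bus pin moves pin_rate_gbps gigabits per second."""
    return bus_width_bits * pin_rate_gbps / 8  # 8 bits per byte


# GPU-N figures from the spec list above: 6144-bit bus at 3.5 Gbps per pin
gpu_n = peak_dram_bandwidth_gbs(6144, 3.5)
print(f"GPU-N: {gpu_n:.0f} GB/s ({gpu_n / 1000:.3f} TB/s)")
```

That works out to 2688 GB/sec, which lines up with the 2.68TB/sec figure quoted for GPU-N.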

GPU-N has 24.2 TFLOPS of FP32 compute performance (a 24% increase over A100) and a truly next-gen leap of 779 TFLOPS of FP16 compute performance (2.5x the A100... yes, 150% faster). It's pretty bonkers to think about, but GPU-N -- whatever the hell it is -- is a beast. 2022 is going to be very exciting for GPUs.
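If you want to check the generational math yourself, here's a rough Python sketch. The GPU-N numbers come from the spec list above; the A100 numbers are my assumption based on NVIDIA's public A100 specs (108 SMs, 6912 CUDA cores, 40MB L2, 19.5 TFLOPS FP32, 312 TFLOPS FP16 Tensor Core), and the `uplift` helper is just an illustrative name:

```python
# A100 reference figures: an assumption, taken from NVIDIA's public A100 specs
A100 = {"sms": 108, "cuda_cores": 6912, "l2_mb": 40,
        "fp32_tflops": 19.5, "fp16_tflops": 312.0}

# GPU-N figures as listed in this article
GPU_N = {"sms": 134, "cuda_cores": 8576, "l2_mb": 60,
         "fp32_tflops": 24.2, "fp16_tflops": 779.0}


def uplift(new: float, old: float) -> tuple[float, float]:
    """Return (ratio, percent increase) of new over old."""
    ratio = new / old
    return ratio, (ratio - 1) * 100


for key in A100:
    ratio, pct = uplift(GPU_N[key], A100[key])
    print(f"{key:12s} {ratio:.2f}x  (+{pct:.0f}%)")
```

The thing to notice is the difference between "times" and "percent faster": a ratio of roughly 2.5x on FP16 is a roughly 150% increase, while the SM, CUDA core and FP32 figures all land at about 1.24x, or 24% more.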

NVIDIA would be using an MCM-based GPU design for its purported next-gen GH100 GPU, referred to in the paper as "GPU-N", with the multi-chip module packing some serious silicon power to take on AMD's upcoming MCM-based "Aldebaran" GPU, which Hopper will be battling.

NVIDIA's next-gen GPU-N would have up to 100GB of super-fast HBM2e memory at 3.5Gbps, which can be expanded to as much as 233GB of HBM2e memory if need be... all of this is on a 6144-bit memory bus that enables between 2.68TB/sec and 6.3TB/sec of memory bandwidth. I can't believe I'm typing those numbers, but that's the type of performance leap we're looking at here.

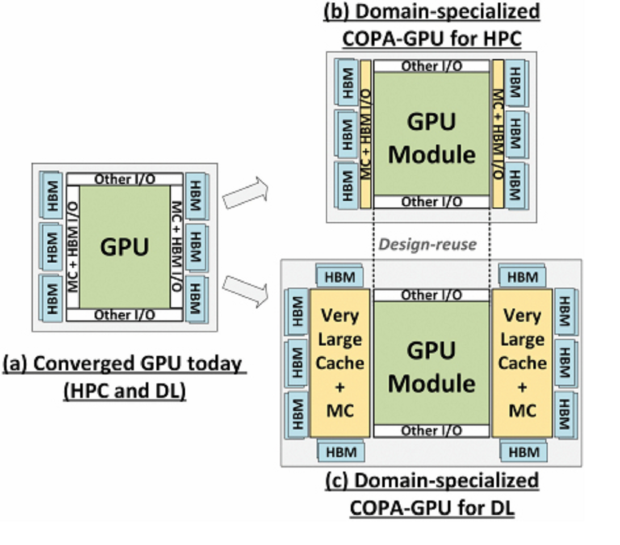

NVIDIA explains in the research paper -- which, I will note, was submitted on April 5, 2021 -- "As GPUs scale their low precision matrix math throughput to boost deep learning (DL) performance, they upset the balance between math throughput and memory system capabilities. We demonstrate that converged GPU design trying to address diverging architectural requirements between FP32 (or larger) based HPC and FP16 (or smaller) based DL workloads results in sub-optimal configuration for either of the application domains".

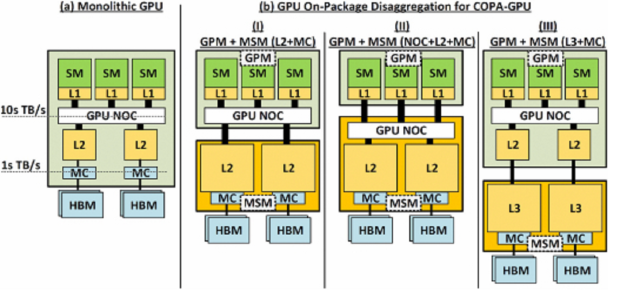

"We argue that a Composable On-PAckage GPU (COPAGPU) architecture to provide domain-specialized GPU products is the most practical solution to these diverging requirements. A COPA-GPU leverages multi-chip-module disaggregation to support maximal design reuse, along with memory system specialization per application domain. We show how a COPA-GPU enables DL-specialized products by modular augmentation of the baseline GPU architecture with up to 4x higher off-die bandwidth, 32x larger on-package cache, 2.3x higher DRAM bandwidth and capacity, while conveniently supporting scaled-down HPC-oriented designs".

"This work explores the microarchitectural design necessary to enable composable GPUs and evaluates the benefits composability can provide to HPC, DL training, and DL inference. We show that when compared to a converged GPU design, a DL-optimized COPA-GPU featuring a combination of 16x larger cache capacity and 1.6x higher DRAM bandwidth scales per-GPU training and inference performance by 31% and 35% respectively and reduces the number of GPU instances by 50% in scale-out training scenarios".