SK hynix showcased its next-gen memory solutions for AI at CES 2026, including its new 48GB HBM4, LPDDR6, SOCAMM2, and more for the AI platforms of the future.

SK hynix showed off its next-gen 16-Hi HBM4 with 48GB, which will succeed the upcoming 12-Hi HBM4 with 36GB that arrives this year. The 16-Hi HBM4 48GB modules are bloody fast, with 2TB/sec of memory bandwidth per stack, and are destined for NVIDIA's next-gen Vera Rubin AI platform.

The company had its new 16-Hi HBM4 48GB running at the industry's fastest speed of 11.7Gbps. The memory is still under development at SK hynix and will be released in the nearish future.
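
For a rough sense of how those figures relate, here is a minimal Python sketch of the back-of-the-envelope math. It assumes the 2048-bit per-stack interface of the JEDEC HBM4 standard, which the SK hynix announcement does not spell out, and the function names are purely illustrative. Under that assumption, 2TB/sec per stack lines up with an 8Gbps per-pin speed, while 11.7Gbps would work out to roughly 3TB/sec per stack.

```python
# Assumption (not stated in the article): HBM4 uses a 2048-bit interface
# per stack, as defined in the JEDEC HBM4 standard.
HBM4_INTERFACE_BITS = 2048


def stack_bandwidth_tb_per_s(pin_speed_gbps, interface_bits=HBM4_INTERFACE_BITS):
    """Peak per-stack bandwidth in TB/sec (decimal) for a per-pin speed in Gbps."""
    gigabits_per_s = pin_speed_gbps * interface_bits  # all pins combined
    gigabytes_per_s = gigabits_per_s / 8              # bits -> bytes
    return gigabytes_per_s / 1000                     # GB/sec -> TB/sec


def capacity_per_layer_gb(stack_capacity_gb, layers):
    """Capacity contributed by each DRAM layer in a stack, in GB."""
    return stack_capacity_gb / layers


if __name__ == "__main__":
    for speed in (8.0, 11.7):
        print(f"{speed} Gbps per pin: {stack_bandwidth_tb_per_s(speed):.2f} TB/sec per stack")
    print(f"16-Hi 48GB: {capacity_per_layer_gb(48, 16):.0f}GB per layer")
    print(f"12-Hi 36GB: {capacity_per_layer_gb(36, 12):.0f}GB per layer")
```

Both the 16-Hi 48GB and 12-Hi 36GB stacks come out to 3GB per layer, so on these numbers the extra capacity comes from stacking more layers rather than from denser dies.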

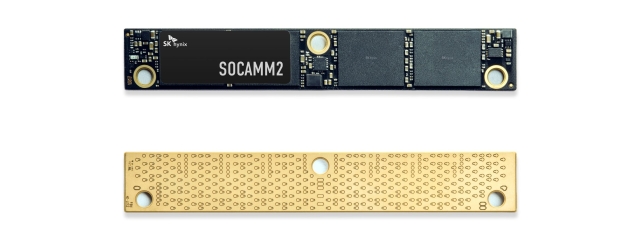

SK hynix also had its new SOCAMM2 at CES 2026, a low-power memory module specialized for AI servers. The company says it is demonstrating the competitiveness of its diverse product portfolio "in response to the rapidly growing demand for AI servers".

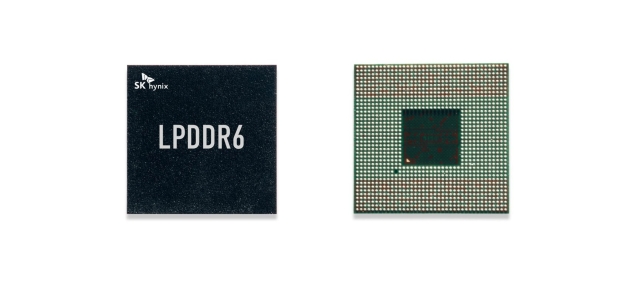

Another new product showcased at CES 2026 is SK hynix's new LPDDR6 memory, which is optimized for on-device AI and offers huge gains in data processing speed and power efficiency over the previous-gen LPDDR5 standard.