Next-generation HBM memory has been teased for the next 10+ years, including HBM4, which will appear on NVIDIA's new Rubin AI GPUs and AMD's just-announced Instinct MI400 AI accelerators. We also have details on HBM5, HBM6, HBM7, and HBM8, the last of which is expected in 2038.

In a new presentation published by KAIST (the Korea Advanced Institute of Science and Technology) and Tera (Terabyte Interconnection and Package Laboratory), the researchers showed off a lengthy HBM roadmap with details of the next-gen HBM memory standards. HBM4 will launch in 2026 with NVIDIA Rubin R100 and AMD Instinct MI400 AI chips, with the Rubin and Rubin Ultra AI GPUs using HBM4 and HBM4E, respectively.

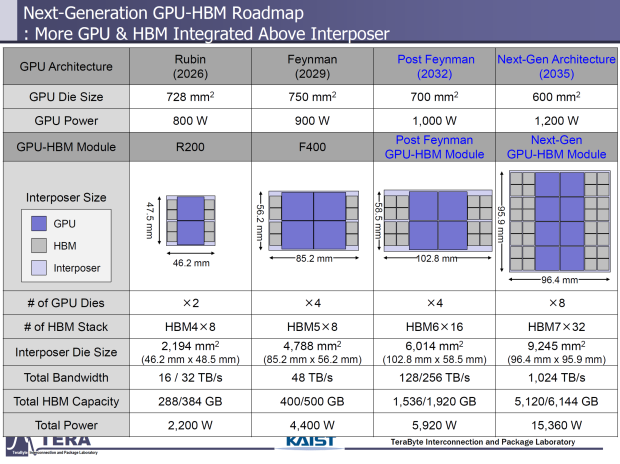

NVIDIA's new Rubin AI GPUs will feature 8 HBM4 sites, with Rubin Ultra doubling that to 16. There are two GPU die cross-sections for each variant, with Rubin Ultra featuring a larger cross-section that packs double the compute density of the regular Rubin AI GPU.

The researchers tease that NVIDIA's new Rubin AI chip has a GPU die size of 728mm² and will draw up to 800W of power per die, with the interposer measuring 2194mm² (46.2mm x 48.5mm). It will pack between 288GB and 384GB of HBM4 with 16-32TB/sec of memory bandwidth. Total chip power will hit 2200W, which is double that of the current-gen Blackwell B200 AI GPUs. AMD's upcoming Instinct MI400 AI chip has even more HBM4, with 432GB of capacity and up to 19.6TB/sec of memory bandwidth.
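The package-level numbers follow from multiplying per-stack figures by the number of HBM sites. Here is a minimal Python sketch of that arithmetic, assuming the per-stack HBM4 figures from the same roadmap (36-48GB and 2TB/sec per stack). The function name `package_totals` and the constants are illustrative, not taken from the presentation.

```python
# Per-stack HBM4 figures as quoted from the roadmap (assumed inputs).
HBM4_STACK_CAPACITY_GB = (36, 48)   # low and high per-stack capacity
HBM4_STACK_BANDWIDTH_TBPS = 2.0     # TB/sec per stack


def package_totals(sites: int) -> dict:
    """Aggregate capacity and bandwidth for a package with `sites` HBM4 stacks."""
    low, high = HBM4_STACK_CAPACITY_GB
    return {
        "capacity_gb": (sites * low, sites * high),
        "bandwidth_tbps": sites * HBM4_STACK_BANDWIDTH_TBPS,
    }


# Rubin is quoted with 8 HBM sites, Rubin Ultra with 16.
for name, sites in (("Rubin", 8), ("Rubin Ultra", 16)):
    print(name, package_totals(sites))
```

With 8 sites this gives 288-384GB and 16TB/sec, which matches the quoted capacity range and the low end of the quoted bandwidth range. The 16-site case is computed with the same HBM4 per-stack bandwidth, so treat it as a rough upper bound rather than a Rubin Ultra spec.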

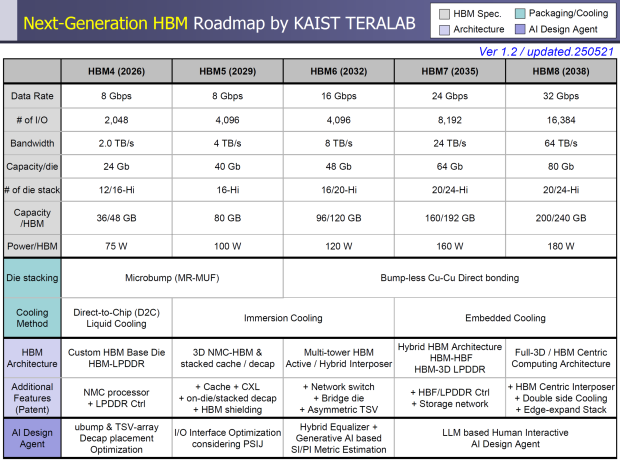

HBM4: The upcoming HBM4 memory standard will feature an 8Gbps data rate on a 2048-bit IO for 2TB/sec of memory bandwidth per stack, 24Gb capacity per die equalling 36-48GB of HBM4 memory capacity per stack, and a per-stack package power of 75W. HBM4 will use direct-to-chip (D2C) liquid cooling and a custom HBM base die (HBM-LPDDR).
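The per-stack bandwidth figure is just IO width times per-pin data rate, converted from bits to bytes. A quick sketch of that calculation in Python follows. The helper name `stack_bandwidth_tbps` is hypothetical, and dividing by 1024 (rather than 1000) to get TB/sec is an assumption chosen because it reproduces the round numbers quoted in the roadmap.

```python
def stack_bandwidth_tbps(io_width_bits: int, data_rate_gbps: float) -> float:
    """Peak per-stack bandwidth in TB/sec.

    io_width_bits:  number of IO pins on the stack interface
    data_rate_gbps: data rate per pin in Gbit/sec
    """
    gbytes_per_sec = io_width_bits * data_rate_gbps / 8  # bits -> bytes
    return gbytes_per_sec / 1024                         # GB/sec -> TB/sec (assumed binary)


print(stack_bandwidth_tbps(2048, 8))    # HBM4: 2048-bit IO at 8Gbps
print(stack_bandwidth_tbps(2048, 10))   # same IO width at 10Gbps
```

The first call returns 2.0, matching the quoted 2TB/sec per HBM4 stack. The second returns 2.5, which shows what a 10Gbps data rate on the same 2048-bit interface would deliver.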

HBM4E: The beefier HBM4E standard takes things up to a 10Gbps data rate, 2.5TB/sec of memory bandwidth per stack, and up to 32Gb capacity per die for 48-64GB of HBM4E memory capacity through 12-Hi and 16-Hi stacks, with a per-HBM package power of up to 80W.

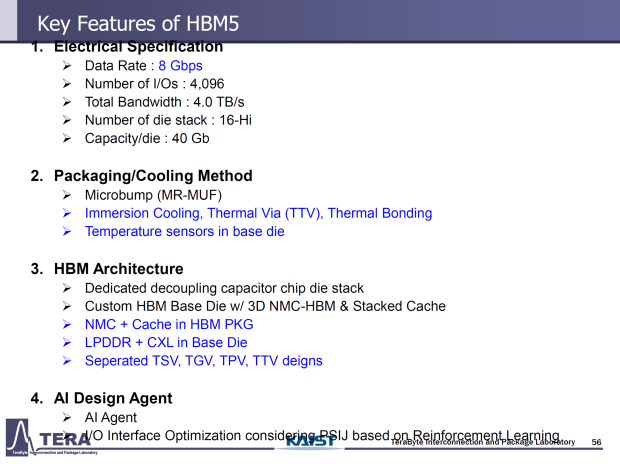

HBM5: We will see the next-next-gen HBM5 memory standard debut with NVIDIA's next-generation Feynman AI GPUs, ready for 2029. The IO width is boosted to 4096 bits, delivering 4TB/sec of memory bandwidth per stack, with 16-Hi stacks as the new baseline. We'll see 40Gb DRAM dies, with HBM5 driving up to 80GB of memory capacity per stack, and the per-stack package power increased to 100W.

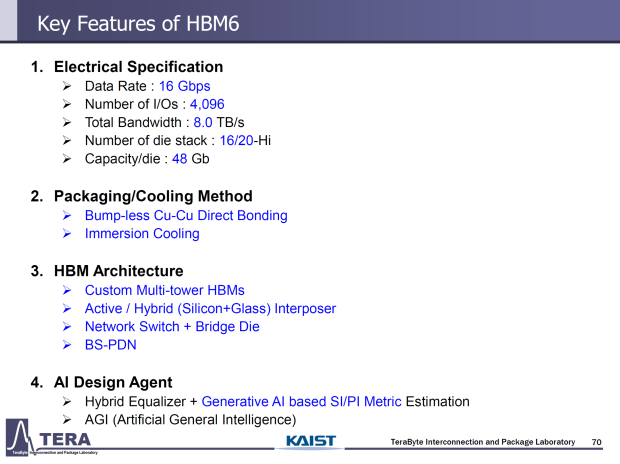

HBM6: After HBM5 is released, we'll see HBM6, which could debut with NVIDIA's next-gen Feynman Ultra AI GPU (not confirmed yet). The data rate doubles to 16Gbps over 4096-bit IO, so bandwidth doubles to 8TB/sec per stack, with 48Gb capacity per DRAM die. HBM6 will be the first time we see HBM stacking beyond 16-Hi, pushing things to 20-Hi stacks, with memory capacities rising to 96-120GB per stack and a per-stack power of 120W. HBM5 and HBM6 memory will both feature immersion cooling, with HBM6 using a multi-tower HBM (Active/Hybrid) interposer architecture. New features like Network Switch, Bridge Die, and Asymmetric TSV are in the research phase.

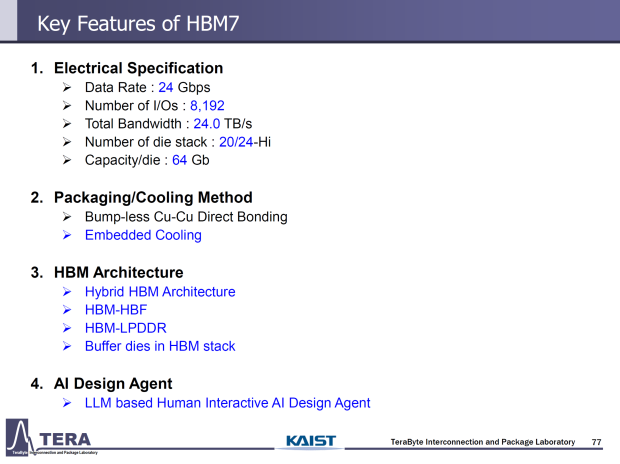

HBM7: Ooooh yeah, HBM7 will boast 24Gbps per-pin speeds with a far wider 8192-bit IO (a doubling over HBM6) and 64Gb capacity per DRAM die, offering a huge 160-192GB of HBM7 per stack thanks to the use of 20-Hi and 24-Hi memory stacks, and a per-stack package power of 160W.

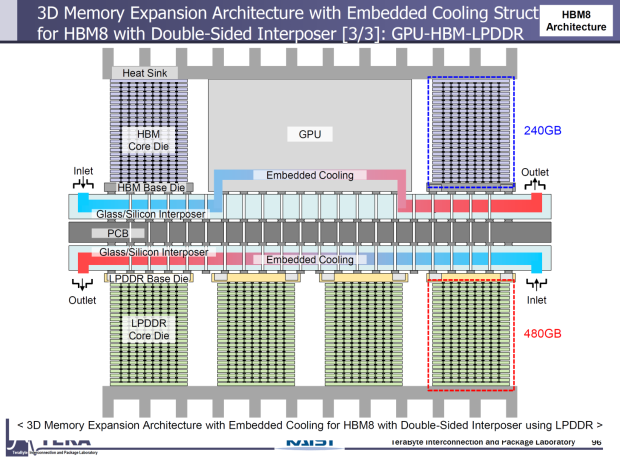

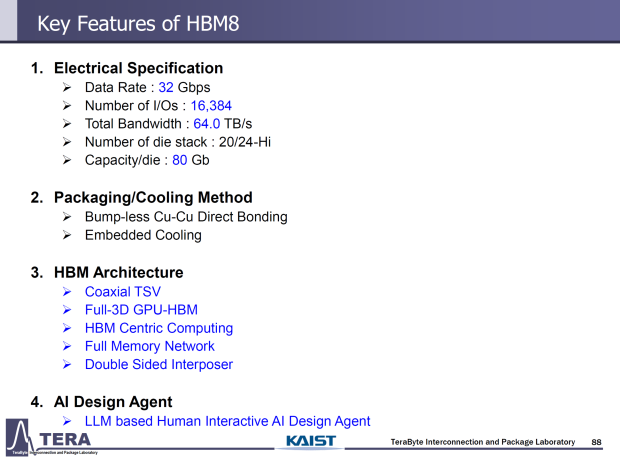

HBM8: We won't see HBM8 for 10+ more years, with an expected release of 2038, but we'll see a 32Gbps data rate and another doubling of the IO width to 16,384 bits. HBM8 will offer 64TB/sec of bandwidth per stack, with 80Gb capacity per DRAM die, up to an insane 200-240GB of HBM8 memory capacity per stack, and a much higher per-HBM-site package power of 180W.
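The per-stack capacities across the roadmap all come from the same rule: DRAM die density times stack height, divided by 8 to convert gigabits to gigabytes. The sketch below recomputes them in Python as a sanity check. The `HbmGen` class is purely illustrative, and where the text gives only a capacity range (HBM4 and HBM8), the stack heights are inferred from that range rather than quoted.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HbmGen:
    name: str
    die_gbit: int          # DRAM die density in gigabits, as quoted
    stack_heights: tuple   # dies per stack (quoted, or inferred from capacity)

    def capacity_range_gb(self) -> tuple:
        """Per-stack capacity in GB for each stack height (8 bits per byte)."""
        return tuple(self.die_gbit * h // 8 for h in self.stack_heights)


ROADMAP = [
    HbmGen("HBM4", 24, (12, 16)),   # heights inferred from 36-48GB
    HbmGen("HBM4E", 32, (12, 16)),
    HbmGen("HBM5", 40, (16,)),
    HbmGen("HBM6", 48, (16, 20)),
    HbmGen("HBM7", 64, (20, 24)),
    HbmGen("HBM8", 80, (20, 24)),   # heights inferred from 200-240GB
]

for gen in ROADMAP:
    print(gen.name, gen.capacity_range_gb())
```

Every computed range lines up with the capacities quoted for each generation, from 36-48GB for HBM4 through 200-240GB for HBM8.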