Meet the new 2nd gen Intel Xeon Scalable Processors

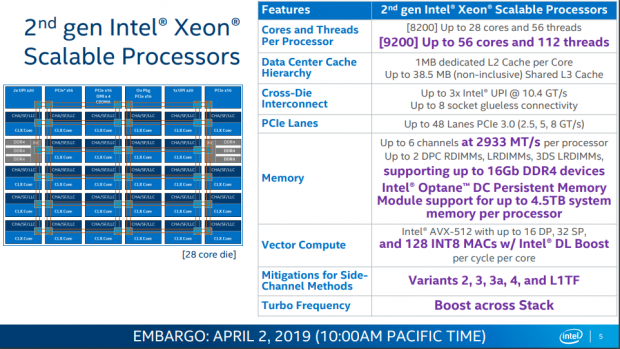

Cascade Lake is finally here, with several improvements over its predecessor that are highlighted in purple in the image above. The new 9200 series processors offer up to 56 cores and 112 threads, while the 8200 series (successor to the 8100 series) offers processors with up to 28 cores. Cache, interconnect, and PCI-E have stayed the same, but there are other improvements.

First, each processor supports memory at up to 2933MHz, 16Gb-density DDR4, and Intel's Optane DC Persistent Memory, for up to 4.5TB of system memory per processor. There is also a new instruction set enhancement for AI, which we will cover later. On top of that, there are many new mitigations for side-channel attacks, and Turbo frequencies have been increased across the stack.

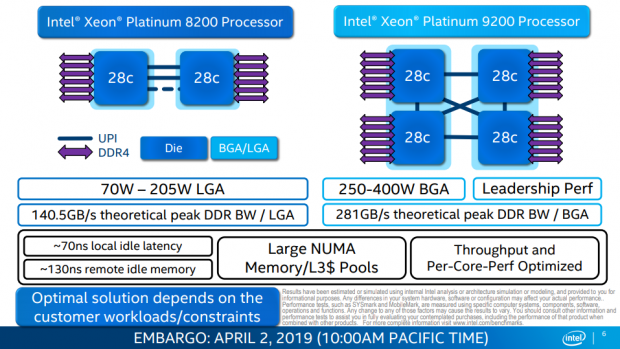

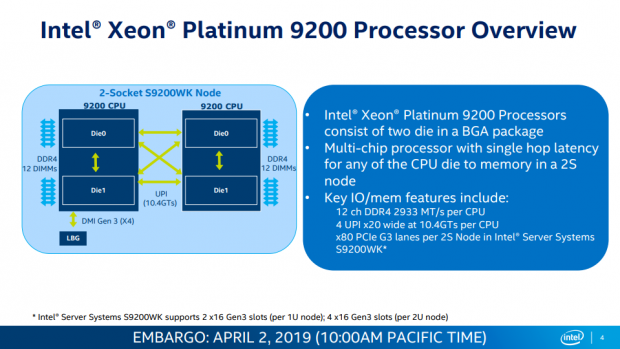

Yup, you see that right: Intel has put two dies into a single package for its 9200 series Xeon Platinum processors, and each of these dual-die CPUs supports 12-channel memory. UPI connects the dies both within the package and, in a two-socket system, across the PCB. However, there is no socket: these dual-die CPUs will only be sold soldered onto motherboards.

Each die has three UPI links operating at 10.4GT/s. One link joins the two dies inside a package, leaving each CPU with four UPI links to the other socket, so in a two-socket (2S) node every die is a single hop away from every other die. A 2S system provides 80 PCI-E 3.0 lanes. Intel is essentially taking two of its top-end 28-core dies and putting them on a single package.
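To make the link arithmetic concrete, here is a small Python sketch that models a 2S node as four dies and checks that three UPI links per die are enough for single-hop connectivity. It assumes one direct UPI link between every pair of dies (our reading of the single-hop claim); the `(package, die)` labels and helper names are hypothetical, not taken from Intel documentation.

```python
from collections import deque
from itertools import combinations

# Hypothetical model of a 2S Xeon Platinum 9200 node: 2 packages x 2 dies each.
# A die is identified as (package, die_index).
dies = [(pkg, idx) for pkg in range(2) for idx in range(2)]

# ASSUMPTION: one direct UPI link between every pair of dies (a full mesh).
links = list(combinations(dies, 2))

# Adjacency sets built from the link list.
neighbors = {d: set() for d in dies}
for a, b in links:
    neighbors[a].add(b)
    neighbors[b].add(a)

def hops_from(src):
    """Breadth-first search: fewest UPI hops from src to every die."""
    dist = {src: 0}
    queue = deque([src])
    while queue:
        cur = queue.popleft()
        for nxt in neighbors[cur]:
            if nxt not in dist:
                dist[nxt] = dist[cur] + 1
                queue.append(nxt)
    return dist

# UPI links attached to each die.
links_per_die = {d: len(neighbors[d]) for d in dies}
# Links with exactly one end on the given package, i.e. running across the PCB.
off_package = {pkg: sum((a[0] == pkg) != (b[0] == pkg) for a, b in links) for pkg in range(2)}
# Worst-case hop count between any two dies.
max_hops = max(max(hops_from(d).values()) for d in dies)

print("UPI links per die:", links_per_die)
print("links leaving each package:", off_package)
print("worst-case hops:", max_hops)
```

Under this assumption, six links in total cover all four dies, each die uses exactly three of them, and four of the six cross between the two packages.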

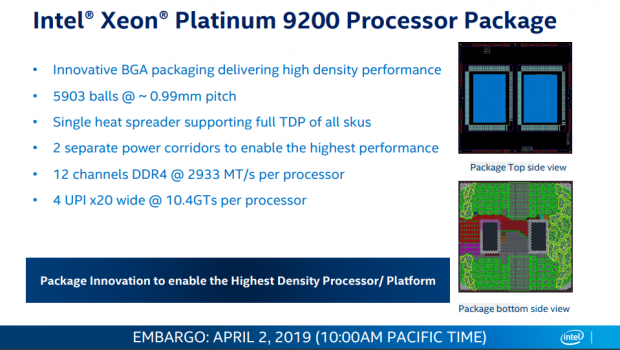

It's quite interesting that Intel went the solder route. It also seems to have reduced the ball count to 5903, fewer than you might have expected, since doubling the 3647 pins of the LGA 3647 socket would give 7294. While Intel was able to bring the ball count down, each die is powered separately, so we would expect two sets of VRMs for each CPU. Despite the separate power delivery, a single heat spreader covers both dies. The whole point of this move is to increase density in 1U and 2U systems, and putting 112 cores into a 1U system is quite impressive.

Performance and Configurations

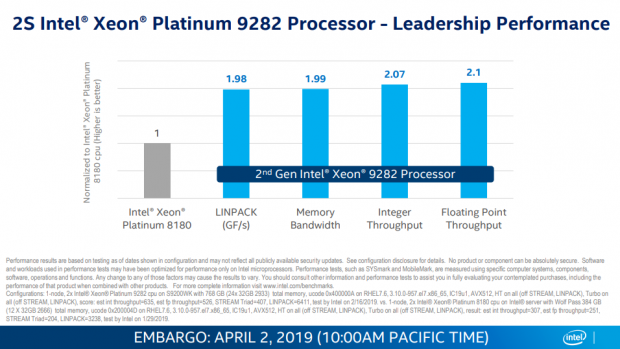

Here we see that the performance of these crazy CPUs is roughly the same as or better than that of a single Xeon Platinum 8180.

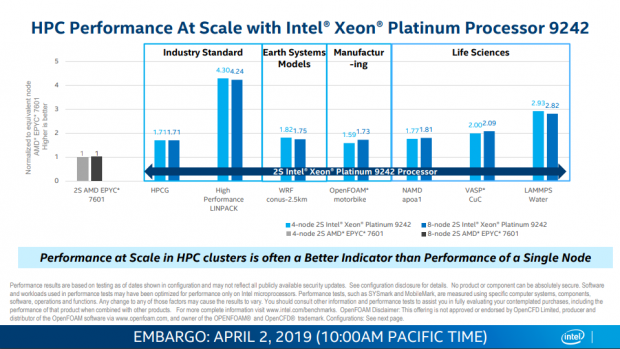

Here a two-socket AMD EPYC 7601 system (64C/128T) is pitted against a two-socket Intel Xeon Platinum 9242 system (96C/192T). The Xeon offers pretty solid performance, but these are Intel's own results, so we will wait for independent testing to see how things turn out.

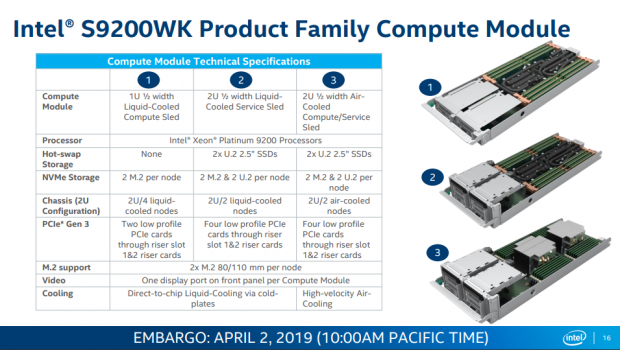

Intel mentioned that it will sell the S9200WK series together with the motherboard: the CPUs are soldered to the board and sold as a complete solution. The 1U solution looks absolutely insane, packing 112 cores and 224 threads into a small sled, which has to be water cooled. The 2U solution is a bit taller and allows for either air or liquid cooling.

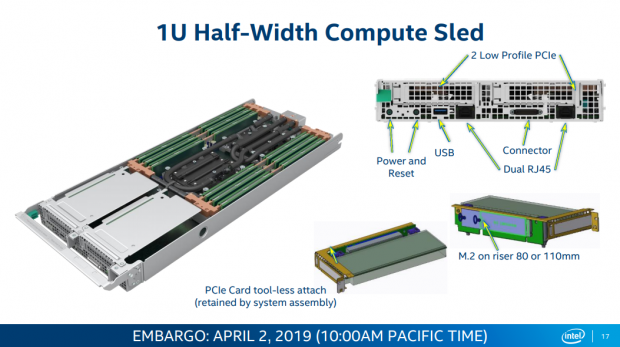

In the 1U solution, the M.2 cards sit on a riser card, and PCI-E cards get a tool-less attachment mechanism.

New Cascade Lake Technologies

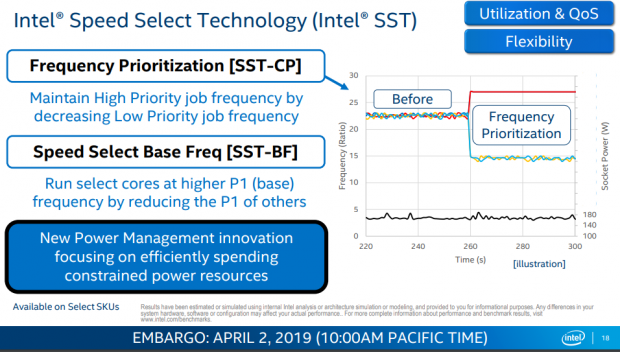

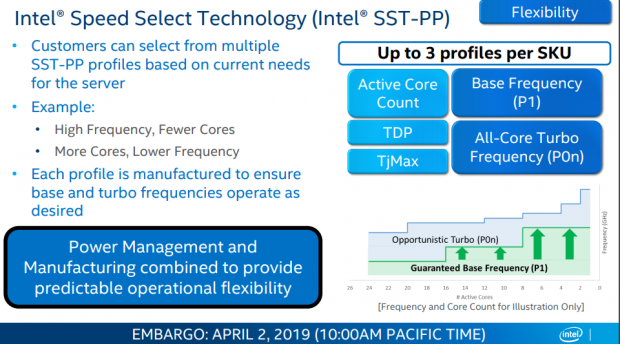

First off, we find Intel's Speed Select Technology (SST), which allows cores to be prioritized for certain jobs, for example lowering the frequency of cores running low-priority jobs so that a high-priority job can keep running at a high frequency. It can also run specific cores at higher power limits while reducing the power limits of other cores.

Intel's customers are free to customize this technology and can pick from SST-PP (Performance Profile) configurations. A system can be configured to run fewer cores at a higher frequency or more cores at a lower frequency, letting it make the most of its resources through power management and opportunistic turbo profiles.
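The core-count versus frequency trade-off can be illustrated with a toy power model. This Python sketch is not how SST-PP is actually implemented: it assumes per-core power grows roughly with the cube of frequency (a common first-order approximation, since dynamic power scales with C·V²·f and voltage tends to rise with frequency), and the constant `K_WATTS_PER_GHZ3`, the 200 W budget, and the core counts are made-up illustration values, not Intel data.

```python
# Toy model of the SST-PP trade-off: under a fixed package power budget,
# fewer active cores can sustain a higher frequency.
# ASSUMPTIONS (hypothetical, not Intel data):
#   * per-core power ~ K * f**3 with f in GHz (first-order approximation);
#   * K and the 200 W budget below are invented illustration values.
K_WATTS_PER_GHZ3 = 0.6
BUDGET_WATTS = 200.0

def sustainable_ghz(active_cores, budget=BUDGET_WATTS, k=K_WATTS_PER_GHZ3):
    """Highest all-core frequency (GHz) whose modeled power fits the budget."""
    return (budget / (active_cores * k)) ** (1.0 / 3.0)

def package_watts(active_cores, ghz, k=K_WATTS_PER_GHZ3):
    """Modeled package power for active_cores cores running at ghz."""
    return active_cores * k * ghz ** 3

# Hypothetical "profiles": trade active cores for frequency at the same budget.
profiles = {cores: round(sustainable_ghz(cores), 2) for cores in (28, 24, 20, 16)}

for cores, ghz in profiles.items():
    print(f"{cores} cores -> {ghz} GHz ({package_watts(cores, ghz):.0f} W modeled)")
```

The absolute numbers are meaningless; the point is only the shape of the trade-off, where every profile spends the same modeled power but distributes it across a different number of cores.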

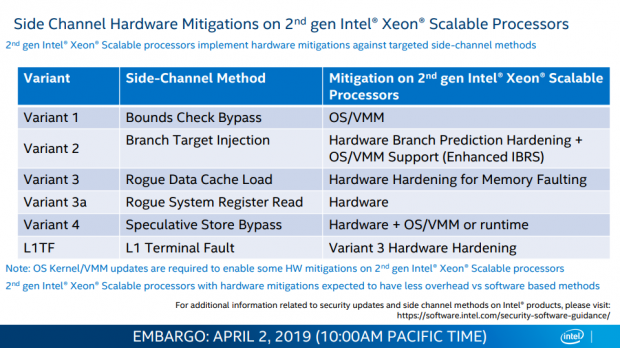

Intel has shown us some of the new hardware mitigations in its 2nd generation Intel Xeon Scalable Processors. Variant 2 (Spectre v2) gets some hardware hardening, as do variant 3 (Meltdown) and variant 4 (Speculative Store Bypass), while variant 3a is mitigated through hardware.

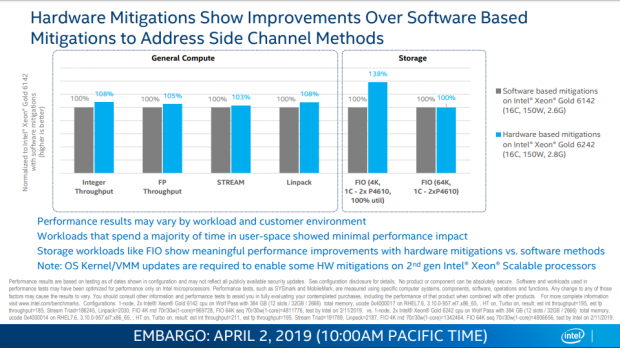

According to Intel, these hardware mitigations cost less performance than their software-based counterparts.

Intel's new Deep Learning Instruction Set

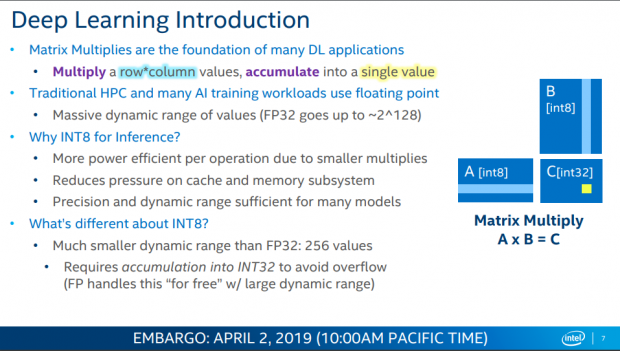

Many deep learning applications rely heavily on matrix multiplication, and Intel has found a way to use INT8 arithmetic to make deep learning more power efficient and more effective. Intel has been trying to break into the AI market while NVIDIA has been expanding in it as well, so these technologies are foundational to Intel's future in the datacenter and AI markets.

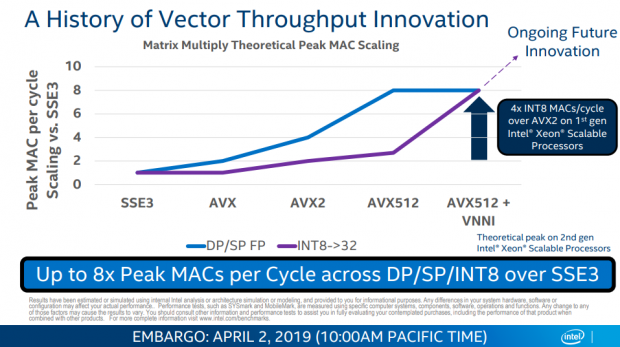

Vector-based matrix multiplication is traditionally done with floating-point FMA (fused multiply-add) operations, but with AVX-512 VNNI (Vector Neural Network Instructions) Intel is able to use INT8 and deliver performance on par with single- and double-precision floating-point operations.
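The general INT8 idea can be sketched in plain Python: quantize floating-point inputs to 8-bit integers, multiply and accumulate in integers (as int32 hardware would), then rescale the result. This is a minimal sketch assuming symmetric per-tensor quantization with a scale of `max|x| / 127`; the helper names are hypothetical, and for simplicity both operands are signed int8, whereas VNNI's main instruction multiplies unsigned by signed bytes.

```python
# Minimal INT8 matrix-multiplication sketch (hypothetical helpers, not an Intel API).
# ASSUMPTION: symmetric per-tensor quantization, scale = max|x| / 127.

def scale_for(matrix):
    """Per-tensor scale that maps the largest magnitude to 127."""
    peak = max(abs(v) for row in matrix for v in row)
    return peak / 127 if peak else 1.0

def quantize(matrix, scale):
    """Round each value to the nearest int8 step, clamped to [-128, 127]."""
    return [[max(-128, min(127, round(v / scale))) for v in row] for row in matrix]

def matmul(a, b):
    """Plain matrix product; with int inputs each sum is what an int32 accumulator holds."""
    inner, cols = len(b), len(b[0])
    return [[sum(row[k] * b[k][j] for k in range(inner)) for j in range(cols)] for row in a]

def int8_matmul(a, b):
    """Approximate a @ b using int8 operands, integer accumulation, then rescaling."""
    sa, sb = scale_for(a), scale_for(b)
    acc = matmul(quantize(a, sa), quantize(b, sb))
    return [[v * sa * sb for v in row] for row in acc]

A = [[0.5, -1.0], [0.25, 0.75]]
B = [[1.0, 0.5], [-0.5, 0.25]]

approx = int8_matmul(A, B)
exact = matmul(A, B)
print("int8:", approx)
print("fp:  ", exact)
```

The INT8 result differs from the floating-point one only by small rounding errors, which is why inference workloads can often tolerate the switch in exchange for much cheaper arithmetic.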

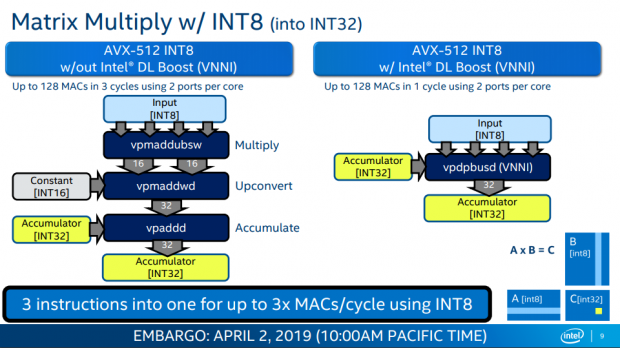

We should mention that VNNI is implemented in hardware. It is not a software trick: new hardware was added, just as Intel does for AVX. We can see that this reduces the work from three instructions to just a single instruction.
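The collapse into one instruction can be emulated lane by lane. To our understanding, the legacy AVX-512 sequence is VPMADDUBSW, then VPMADDWD against a vector of ones, then VPADDD, and VNNI replaces it with VPDPBUSD; the Python below is a sketch of their documented per-lane arithmetic, not a cycle-accurate model. It also shows one subtle difference: VPMADDUBSW saturates its intermediate 16-bit sums, while VPDPBUSD does not.

```python
# Lane-level emulation of one 512-bit operation: 64 input bytes, 16 int32 lanes out.
# a_u8: 64 unsigned bytes (0..255), b_s8: 64 signed bytes (-128..127), acc: 16 int32 lanes.

def sat16(x):
    """Clamp to the signed 16-bit range, like VPMADDUBSW's signed saturation."""
    return max(-32768, min(32767, x))

def wrap32(x):
    """Wrap to a signed 32-bit integer, like an int32 vector lane."""
    x &= 0xFFFFFFFF
    return x - (1 << 32) if x & 0x80000000 else x

def legacy_sequence(acc, a_u8, b_s8):
    """Pre-VNNI path: three instructions per accumulation step."""
    # 1) VPMADDUBSW: u8*s8 products, adjacent pairs summed, saturated to int16 (32 lanes)
    t16 = [sat16(a_u8[2 * i] * b_s8[2 * i] + a_u8[2 * i + 1] * b_s8[2 * i + 1]) for i in range(32)]
    # 2) VPMADDWD with a vector of int16 ones: adjacent int16 pairs summed to int32 (16 lanes)
    t32 = [t16[2 * j] + t16[2 * j + 1] for j in range(16)]
    # 3) VPADDD: add into the int32 accumulator
    return [wrap32(acc[j] + t32[j]) for j in range(16)]

def vpdpbusd(acc, a_u8, b_s8):
    """VNNI path: one instruction adds four u8*s8 products into each int32 lane."""
    return [wrap32(acc[j] + sum(a_u8[4 * j + k] * b_s8[4 * j + k] for k in range(4)))
            for j in range(16)]

# Small inputs: no saturation, so both paths agree.
a_small = list(range(64))
b_small = [(i % 21) - 10 for i in range(64)]
print(legacy_sequence([0] * 16, a_small, b_small) == vpdpbusd([0] * 16, a_small, b_small))  # True

# Extreme inputs: VPMADDUBSW saturates at 32767 per pair, VPDPBUSD does not.
a_big, b_big = [255] * 64, [127] * 64
print(legacy_sequence([0] * 16, a_big, b_big)[0])  # 65534
print(vpdpbusd([0] * 16, a_big, b_big)[0])         # 129540
```

Each 512-bit VPDPBUSD therefore performs 64 byte multiplications and accumulates them into 16 int32 lanes in one step, which is the work the three legacy instructions split between them.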

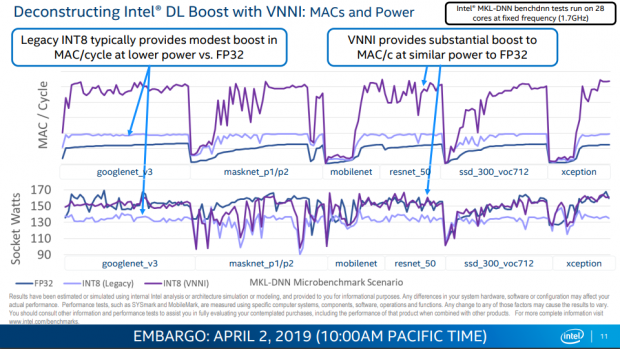

Here we can see how many multiply-accumulate operations (MACs) per cycle each approach achieves, and Intel also took note of power usage. INT8 delivers more performance than FP32 at lower power consumption, and a bigger performance boost than FP32 at the same power.

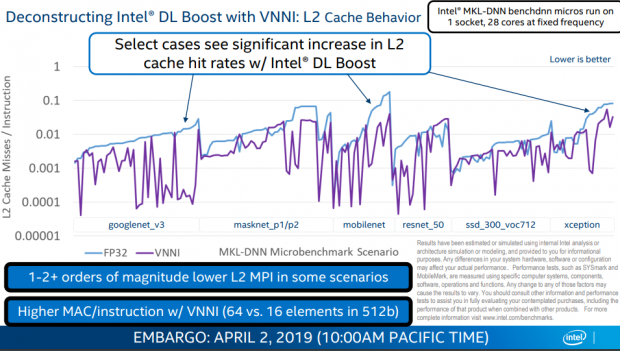

Other results show lower L2 cache miss rates in certain workloads, which is great for performance.

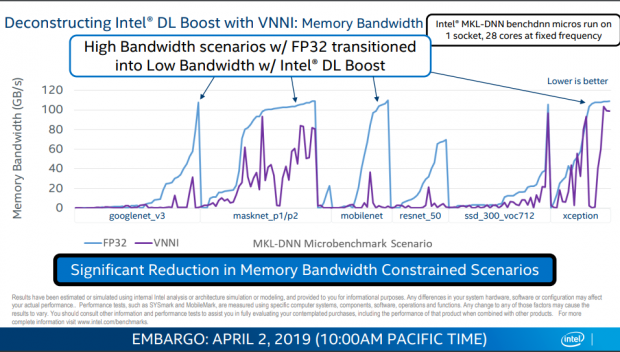

We also see that some workloads become less constrained by memory bandwidth.

2nd Generation Intel Xeon Scalable Processor SKUs

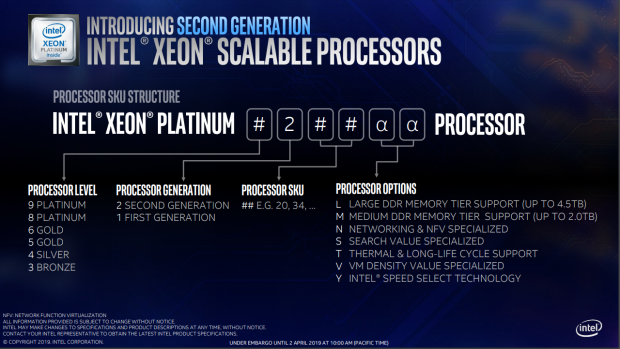

Here we see Intel's naming scheme for the new line of Xeon Scalable Processors, including suffixes for options such as large and medium memory tier support and Intel Speed Select Technology. We also see how Intel designates processor generation and processor level.
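As a rough illustration of how such a model number breaks down, here is a hypothetical Python decoder. It assumes the first digit gives the processor level, the second the generation, the last two the SKU, and any trailing letters the option suffixes; only the suffixes named above (L, M, Y) are mapped, and anything else is reported as unknown rather than guessed.

```python
# Hypothetical decoder for 2nd gen Xeon Scalable model numbers such as "8280L".
# ASSUMPTIONS: 4 digits = level, generation, 2-digit SKU; trailing letters = option suffixes.
# Only the suffixes mentioned in the article are mapped.

LEVELS = {"9": "Platinum", "8": "Platinum", "6": "Gold", "5": "Gold", "4": "Silver", "3": "Bronze"}
SUFFIXES = {
    "L": "large memory tier",
    "M": "medium memory tier",
    "Y": "Intel Speed Select Technology",
}

def decode_sku(model):
    """Split a model number into level, generation, SKU digits, and option suffixes."""
    digits, suffix = model[:4], model[4:]
    if len(digits) != 4 or not digits.isdigit() or digits[0] not in LEVELS:
        raise ValueError(f"not a recognised Xeon Scalable model number: {model!r}")
    return {
        "level": LEVELS[digits[0]],
        "generation": int(digits[1]),
        "sku": digits[2:],
        "options": [SUFFIXES.get(ch, f"unknown suffix {ch!r}") for ch in suffix.upper()],
    }

for model in ("9282", "8280L", "6240Y"):
    print(model, decode_sku(model))
```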

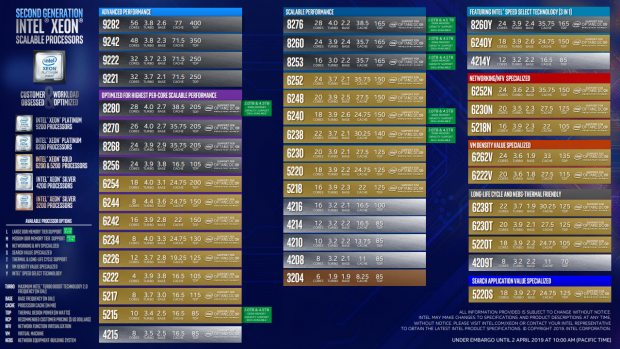

As you can see, there is a huge lineup of refreshed 2nd generation Xeon Scalable Processors, ranging from the 56-core 9282 monster down to the 6-core Bronze CPU. Check out that 400W TDP on the 9282.

Intel has also grouped the processors by the areas where it believes the individual SKUs make sense and offer the most performance, which is why many of the CPUs from the previous image appear in this one as well.
