Introduction, Specifications, and Pricing

Do you ever dream of having server-like storage capacity in your desktop PC without the ear-busting noise?

Icy Dock builds accessories for storage products. The product line consists primarily of drive cages, but there are some external enclosures as well. The company has enjoyed success with both small and, in recent years, large drive-count products that adapt hard disk drives and solid state drives to 3.5-inch and 5.25-inch bays.

Today we take a deep dive into two of the latest high capacity storage enclosures from the company. The MB998IP-B is the latest 5.25-inch bay 8-drive enclosure with mini-SAS connectivity. It's nearly identical to a previously released model that used eight standard SATA connectors to add drives to your system.

For those of you who don't remember and wonder what the covers on the front of your computer are for, 5.25-inch bays used to house optical drives, like CD-ROM and DVD-ROM drives.

The MB516SP-B uses a similar mini-SAS cable configuration but doubles the density to a massive sixteen drives that fit in just two 5.25-inch bays. The MB516SP-B is the densest storage we have ever tested.

Finding sixteen identical drives proved difficult. We didn't have any modern matching SSDs, so we reached out to Crucial to fulfill our 16-terabyte storage aspirations, and the company came through with the remarkable MX500 1TB, one of the best-selling and fastest SATA SSDs ever released.

Specifications

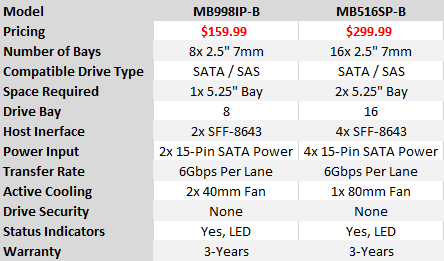

We're looking at two enclosures, but both are very similar. The MB998IP-B starts us off with eight drive bays with two SFF-8643 connections to the host system, and it fits in a single 5.25" bay. Take those specifications and double them for the MB516SP-B.

Power delivery doubles as well, with two 15-pin SATA power connectors on the MB998IP-B and four on the MB516SP-B. Fan cooling goes the other way: two 40mm fans on the back of the 8-drive enclosure and a single 80mm fan on the 16-drive enclosure. Both enclosures feature a switch to control fan speed. We didn't have any issues with noise, even with the fans running at full speed on either enclosure.

Pricing And Warranty

Like many other Icy Dock enclosures, the MB998IP-B and MB516SP-B come with a no-hassle 3-year warranty. Pricing starts at $159.99 for the 8-bay and $299.99 for the 16-bay enclosure.
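To put the two list prices in per-bay terms, here is a quick calculation using only the prices and bay counts above. The dictionary and function names are illustrative, not anything from Icy Dock.

```python
# Illustrative helper: spreads each enclosure's list price evenly across its bays.
PRICES_USD = {
    "MB998IP-B": (159.99, 8),   # (list price in USD, drive bays)
    "MB516SP-B": (299.99, 16),
}


def cost_per_bay(price_usd: float, bays: int) -> float:
    """Return the enclosure price divided by its number of drive bays."""
    return price_usd / bays


for model, (price, bays) in PRICES_USD.items():
    print(f"{model}: ${cost_per_bay(price, bays):.2f} per bay")
```

That works out to about $20.00 per bay for the MB998IP-B and $18.75 per bay for the MB516SP-B, so the 16-bay unit is slightly cheaper per drive slot.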

Icy Dock MB998IP-B

The freshest line of 2.5" drive enclosures features a few updates and upgrades over previous models. Users can install a plastic tag on each drive tray to keep them in order. On the back, the new SFF-8643 connectors eliminate the individual SATA data connectors. Each SFF-8643 carries data for four drives.
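Since each SFF-8643 connector carries four drives, the connector count follows directly from the bay count. The sketch below also maps a bay to a connector and lane, but that mapping assumes bays are wired to ports in sequential order, which is our assumption and not something this review confirms. The function names are hypothetical.

```python
import math

DRIVES_PER_SFF8643 = 4  # each SFF-8643 connector carries four drive lanes


def connectors_needed(drive_bays: int) -> int:
    """Number of SFF-8643 connectors required for a given number of bays."""
    return math.ceil(drive_bays / DRIVES_PER_SFF8643)


def bay_to_port(bay: int) -> tuple[int, int]:
    """Map a 0-based bay index to (connector index, lane index).

    Assumes sequential wiring: bays 0-3 on connector 0, bays 4-7 on connector 1, etc.
    """
    return divmod(bay, DRIVES_PER_SFF8643)


print(connectors_needed(8), connectors_needed(16))  # 2 4
print(bay_to_port(5))  # (1, 1)
```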

Icy Dock MB516SP-B

From the front, the 16-bay version looks like two 8-bay enclosures stacked together. Unlike the MB998IP-B, the MB516SP-B places the power and data connectors on different sides of the enclosure, which makes installation easier.

Accessories

Both enclosures ship with screws for installing 2.5" drives in the trays and screws to mount the enclosure in your case. The tabs for the drive sleds come attached to a sprue, like the parts of a plastic model kit.

Crucial MX500 1TB

We can't forget the Crucial MX500, the SATA SSD with the best balance between performance and cost shipping today. The MX500's biggest attribute comes from the Micron 64-layer TLC memory that delivers exceptional random read performance at low queue depths.

RAID Performance Testing

Testing Notes

It's possible to connect eight SATA SSDs to most modern enthusiast-class motherboards, but running sixteen requires an add-in adapter card. You could use an HBA (host bus adapter) card, but that option only presents the drives to your system; RAID configuration and management then happen in software under Windows.

A hardware RAID adapter, on the other hand, adds logic between the drive array and the operating system. A processor on the card runs the RAID, and a large DRAM cache accelerates performance without taxing other system components.

We pulled our Areca ARC-1883ix-24 RAID controller out of storage for this review. The ARC-1883 series features a dual-core RAID-on-Chip processor with a PCIe 3.0 x8 host interface. Our card was fitted with a 4GB DDR3-1866 buffer.
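For a rough sense of scale, the sketch below compares theoretical link rates: PCIe 3.0 runs at 8 GT/s per lane with 128b/130b encoding, and SATA 6Gb/s uses 8b/10b encoding, which works out to 600 MB/s per drive. These are line-rate ceilings before protocol overhead, so real throughput is lower, and they say nothing about the controller's own processing limits. The function names are illustrative.

```python
def pcie3_mbps(lanes: int) -> float:
    """PCIe 3.0 ceiling in MB/s: 8 GT/s per lane, 128b/130b encoding."""
    return lanes * 8e9 * (128 / 130) / 8 / 1e6


def sata3_mbps(drives: int) -> float:
    """Aggregate SATA 6Gb/s ceiling in MB/s: 8b/10b encoding, 600 MB/s per link."""
    return drives * 6e9 * (8 / 10) / 8 / 1e6


host = pcie3_mbps(8)
for n in (8, 16):
    print(f"{n} drives: SATA aggregate {sata3_mbps(n):.0f} MB/s vs PCIe 3.0 x8 {host:.0f} MB/s")
```

By this math, eight SATA links (4,800 MB/s) fit within a PCIe 3.0 x8 link (about 7,877 MB/s), while sixteen (9,600 MB/s) exceed it on paper.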

We tested arrays with eight and sixteen drives. The eight-drive arrays ran in RAID 0 and RAID 5 with a 64KB stripe size. The sixteen-drive arrays ran in RAID 0 and RAID 5 with a 64KB stripe size, plus RAID 0 with a 1024KB stripe size.
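A minimal sketch of how a RAID 0 stripe size decides where data lands, assuming a plain round-robin layout starting on drive 0 and treating "stripe size" as the chunk written to each member before moving to the next. Areca's actual on-disk layout may differ, and the function names are hypothetical. The capacity helper shows nominal usable space for 1TB drives like the ones used here.

```python
KIB = 1024


def raid0_locate(offset: int, stripe_size: int, drives: int) -> tuple[int, int]:
    """Map a logical byte offset to (member drive, byte offset on that drive).

    Assumes a plain round-robin RAID 0 layout that starts on drive 0.
    """
    stripe_index, within = divmod(offset, stripe_size)  # which chunk, and where inside it
    drive = stripe_index % drives                       # chunks rotate across members
    row = stripe_index // drives                        # completed passes across all members
    return drive, row * stripe_size + within


def usable_tb(drives: int, drive_tb: float, level: int) -> float:
    """Nominal usable capacity: RAID 0 uses every drive, RAID 5 gives one drive's worth to parity."""
    if level == 0:
        return drives * drive_tb
    if level == 5:
        return (drives - 1) * drive_tb
    raise ValueError("only RAID 0 and RAID 5 are modeled here")


print(raid0_locate(200 * KIB, 64 * KIB, 8))           # (3, 8192)
print(usable_tb(16, 1.0, 0), usable_tb(16, 1.0, 5))   # 16.0 15.0
```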

Sequential Read Performance

In theory, the 1024KB stripe should deliver outstanding sequential performance, but that isn't what we found in practice. The general rule with RAID has always been to use a smaller stripe size for better random performance and a larger stripe size for better sequential performance. We did see better sequential performance with a 512KB stripe in our HighPoint SSD6540 enclosure review.
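One way to think about the stripe-size trade-off is to count how many member drives a single request touches, assuming a simple round-robin RAID 0 layout. The 1 MiB request size is hypothetical, chosen only for illustration, and the function name is ours.

```python
KIB = 1024


def drives_touched(request: int, stripe_size: int, drives: int, offset: int = 0) -> int:
    """Count distinct RAID 0 members a single request touches (round-robin layout)."""
    first_chunk = offset // stripe_size
    last_chunk = (offset + request - 1) // stripe_size
    # Once a request spans more chunks than there are members, it wraps around.
    return min(last_chunk - first_chunk + 1, drives)


for stripe_kib in (64, 512, 1024):
    n = drives_touched(1024 * KIB, stripe_kib * KIB, 16)
    print(f"1 MiB request, {stripe_kib} KiB stripe: {n} drive(s)")
```

With a 1024KB stripe, an aligned 1 MiB request lands on a single drive, so spreading work across the array depends on having many requests in flight. That is one plausible factor in these results, not a diagnosis of the Areca firmware.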

The Areca RAID controller is the bottleneck in this storage system, so that's essentially what we are testing today, although the other components still play a role. The additional hardware simply shows what's possible with the Icy Dock enclosures.

Sequential Write Performance

The difference between eight and sixteen drives shows up when writing data. There is a small difference in reads, but writes show a much larger gap between the eight-drive and sixteen-drive arrays. What stands out is the return on investment. Eight drives in RAID 0 deliver 2,769 MB/s in sequential writes at queue depth 2, but doubling the drive count adds only about 1,000 MB/s. The gap between the two grows at higher queue depths. This is a case where you need the right workload to take advantage of the available performance.
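Here is the scaling math behind that observation, using the queue depth 2 RAID 0 sequential write figures from the text. The sixteen-drive figure is approximate, since the text only says doubling the drives adds about 1,000 MB/s.

```python
# QD2 RAID 0 sequential writes; the 16-drive value is approximate ("about 1,000 MB/s more").
eight_drive = 2769.0
sixteen_drive = eight_drive + 1000.0

per_drive_8 = eight_drive / 8
per_drive_16 = sixteen_drive / 16
efficiency = sixteen_drive / (2 * eight_drive)  # fraction of ideal linear scaling

print(f"{per_drive_8:.0f} MB/s per drive with 8 drives")
print(f"{per_drive_16:.0f} MB/s per drive with 16 drives")
print(f"{efficiency:.0%} of ideal linear scaling")
```

Per-drive write throughput falls from roughly 346 MB/s to roughly 236 MB/s, or about 68% of ideal linear scaling at this queue depth.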

Random Read Performance

When there wasn't a solid state option, and the world ran on hard disk drives, strong random read performance was right around 200 IOPS at queue depth 1. It was easy to increase low queue depth random read performance; the bar was already very low.

With flash, baseline performance has increased dramatically. RAID doesn't increase random read performance over a single drive at low queue depths. In fact, RAID controllers are not as efficient as Intel's PCH SATA ports, so queue depth 1 performance actually decreases.
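Little's law explains why queue depth 1 is so sensitive to added latency: with one request outstanding, IOPS is simply the inverse of per-request latency, and striping cannot help because there is nothing to run in parallel. The 200 IOPS hard drive figure comes from the text; the SSD latency and the extra controller latency below are hypothetical numbers chosen only for illustration.

```python
def iops(outstanding: int, latency_s: float) -> float:
    """Little's law: throughput = outstanding requests / average latency."""
    return outstanding / latency_s


# A 200 IOPS hard drive at QD1 implies about 5 ms per random read.
hdd_latency_ms = 1 / 200 * 1000

# Hypothetical SSD figures: 100 microseconds per read when attached directly,
# plus 20 microseconds of extra latency when the same drive sits behind a RAID card.
direct = iops(1, 100e-6)
behind_raid = iops(1, 120e-6)

print(f"HDD: {hdd_latency_ms:.1f} ms per I/O")
print(f"SSD direct: {direct:,.0f} IOPS, behind RAID: {behind_raid:,.0f} IOPS")
```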

Random Write Performance

The random write tests expose the RAID 5 write penalty, where the extra reads and writes needed to keep parity current reduce performance. The large DRAM buffer on our Areca controller card mitigates the issue, so our loss is smaller than it would be with some other cards on the market.
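RAID 5 stores a parity block in each stripe, so a small random write cannot simply overwrite data: the controller must read the old data and old parity, then write the new data and the recomputed parity. The sketch below models parity as a byte-wise XOR, the standard RAID 5 scheme, and checks that the read-modify-write update matches a full recomputation. The function names are illustrative, and the model ignores caching, which is what a large controller DRAM buffer helps with.

```python
from functools import reduce


def xor_blocks(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length blocks."""
    if len(a) != len(b):
        raise ValueError("blocks must be the same length")
    return bytes(x ^ y for x, y in zip(a, b))


def full_stripe_parity(data_blocks: list[bytes]) -> bytes:
    """RAID 5 parity for one stripe: XOR of every data block."""
    return reduce(xor_blocks, data_blocks)


def updated_parity(old_parity: bytes, old_data: bytes, new_data: bytes) -> bytes:
    """Read-modify-write: new parity = old parity XOR old data XOR new data."""
    return xor_blocks(xor_blocks(old_parity, old_data), new_data)


# One small write costs four member I/Os: read old data, read old parity,
# write new data, write new parity.
RAID5_SMALL_WRITE_IOS = 4

stripe = [b"\x01\x02", b"\x10\x20", b"\xff\x00"]
parity = full_stripe_parity(stripe)
new_block = b"\xaa\xbb"
parity = updated_parity(parity, stripe[1], new_block)  # update without touching other blocks
stripe[1] = new_block
assert parity == full_stripe_parity(stripe)
```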

70% Read Sequential Performance

Mixing reads and writes is a difficult workload for consumer-grade storage. This is one of the tests where more drives give users access to higher performance. Even with just 30% of the workload coming from writes, there is a very large performance gap between the eight- and sixteen-drive arrays.

70% Read Random Performance

The number of drives has less of an impact in random mixed data than the RAID level used to build the array.
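A rough way to see why RAID level matters more than drive count in random mixed workloads is to count member-drive I/Os. This sketch assumes every write is a small random write (one member I/O on RAID 0, four on RAID 5 because of read-modify-write) and ignores the controller cache; the function name is illustrative.

```python
def backend_ios(host_ios: float, read_fraction: float, write_cost: int) -> float:
    """Member-drive I/Os needed to serve a random mix of small host I/Os."""
    reads = host_ios * read_fraction
    writes = host_ios * (1 - read_fraction)
    return reads + writes * write_cost


# 70% read / 30% write; RAID 0 writes cost 1 member I/O, RAID 5 small writes cost 4.
for level, cost in (("RAID 0", 1), ("RAID 5", 4)):
    print(f"{level}: {backend_ios(100, 0.7, cost):.0f} member I/Os per 100 host I/Os")
```

Under those assumptions, a 70/30 mix generates about 190 member I/Os per 100 host requests on RAID 5 versus 100 on RAID 0, so the RAID 5 array does nearly twice the work before drive count enters the picture.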

Final Thoughts

We've tested a number of products from Icy Dock over the last decade and found that sometimes the enclosures have little issues that we have to work around but can live with, and other times they are just perfect right out of the box. The two units we tested today fit in the latter category where we couldn't ask for anything more and wouldn't ask for anything to change in the design.

That doesn't mean we can't find anything to complain about. The MB998IP-B at $159.99 and MB516SP-B at $299.99 are extremely expensive. There are very few alternatives, and you would have a difficult time finding one with equal build quality at a lower price.

The real question is who needs to stack sixteen, or even eight, SSDs together. That takes us to the outer edges of computing when talking about flash storage. The enclosures are small enough to build a powerful NAS in a small form factor system, or to build extreme-performance storage without dealing with rackmount hardware and the drawbacks that come with it, like server-level noise and power consumption. The two Icy Dock enclosures we tested today give you more flexibility in your system design and eliminate obstacles that previously might have added expense or complexity to an otherwise simple task, like adding a lot of storage space to your system.
