AMD launched its new flagship AI GPU and accelerator, the Instinct MI300X, earlier this month. During the launch event, AMD provided charts and data indicating that the MI300X outperformed NVIDIA's powerful H100 GPU in several tests.

It was up to 60% faster in a direct 8-to-8 server comparison; however, NVIDIA followed up with its own data. NVIDIA's report shows that AMD did not use the latest NVIDIA TensorRT-LLM kernel optimizations for NVIDIA Hopper, nor did it show real-world MLPerf server scenarios where DGX H100 hardware delivers impressive "inferences per second" results.

Long story short, NVIDIA's response claims that if AMD had benchmarked the H100 GPU properly, it would have shown that Team Green's flagship was 2X faster. So, where does that leave us? AMD has reached out with an update showing that the MI300X's performance advantage has increased thanks to its own optimizations, and its results now account for latency.

In a new blog post titled "Competitive performance claims and industry-leading Inference performance on AMD Instinct MI300X," AMD confirms that it did not use TensorRT-LLM optimizations in its original benchmarks because the data was recorded in November, before the update.

"We are at a stage in our product ramp where we are consistently identifying new paths to unlock performance with our ROCM software and AMD Instinct MI300 accelerators," AMD writes. "The data that was presented in our launch event was recorded in November. We have made a lot of progress since we recorded data in November that we used at our launch event and are delighted to share our latest results highlighting these gains."

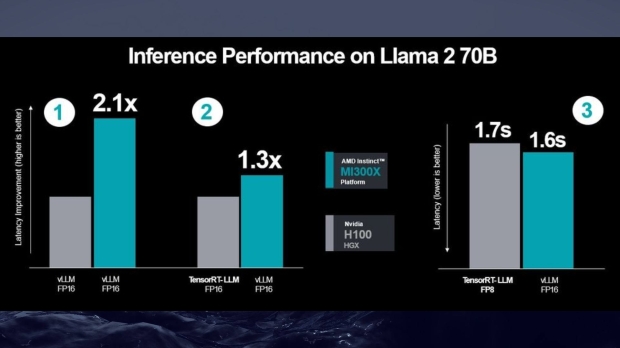

This is where it all gets a little technical, but AMD's new results show that its "performance advantage has increased to 2.1X" in favor of the MI300X using AMD's new-found optimizations. However, throw in NVIDIA's optimized TensorRT-LLM, and that figure drops to 1.3X - still favoring the MI300X.
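For readers who want to see how an "NX faster" figure like these is typically derived, here is a minimal sketch in Python. It assumes the figure is a simple ratio of latencies (baseline divided by candidate). The `speedup` helper and every number in it are hypothetical, chosen only so the arithmetic lands on 2.1X and 1.3X; they are not AMD's or NVIDIA's measured values.

```python
def speedup(baseline_latency_s: float, candidate_latency_s: float) -> float:
    """Return how many times faster the candidate is than the baseline.

    Lower latency is better, so the ratio is baseline / candidate.
    """
    if baseline_latency_s <= 0 or candidate_latency_s <= 0:
        raise ValueError("latencies must be positive")
    return baseline_latency_s / candidate_latency_s


# Hypothetical latencies in seconds, for illustration only.
# These are NOT figures from AMD's or NVIDIA's published results.
h100_vllm_s = 4.2      # assumed: H100 running vLLM
h100_trtllm_s = 2.6    # assumed: H100 running TensorRT-LLM
mi300x_vllm_s = 2.0    # assumed: MI300X running vLLM

print(f"MI300X vs H100 (vLLM): {speedup(h100_vllm_s, mi300x_vllm_s):.1f}X")
print(f"MI300X vs H100 (TensorRT-LLM): {speedup(h100_trtllm_s, mi300x_vllm_s):.1f}X")
```

The point of the sketch is that the MI300X number stays fixed while the H100 baseline changes: a better-optimized baseline shrinks the ratio without the MI300X getting any slower.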

Here are AMD's latest results.

- MI300X vs. H100 using vLLM for both

- MI300X using vLLM vs. H100 using NVIDIA's optimized TensorRT-LLM

- Measured latency results for MI300X using vLLM and the FP16 datatype vs. H100 using TensorRT-LLM and the FP8 datatype

AMD states that NVIDIA's benchmarks compared "FP16 datatype on AMD Instinct MI300X GPUs to FP8 datatype on H100," while its new results compare FP16 and FP16. "MI300X continues to demonstrate a performance advantage when measuring absolute latency," AMD adds. "Even when using lower precisions FP8 and TensorRT-LLM for H100 vs. vLLM and the higher precision FP16 datatype for MI300X."

FP8 only works with TensorRT-LLM, which is why it appears only in the latency comparison. So what's the takeaway? AI hardware, benchmarks, and optimizations are evolving very quickly, and across various tests and scenarios, both AMD's MI300X and NVIDIA's H100 are powerful bits of hardware. However, these new results from AMD do show the MI300X has the advantage in these specific tests.

We've reached out to NVIDIA for a statement on AMD's latest results.