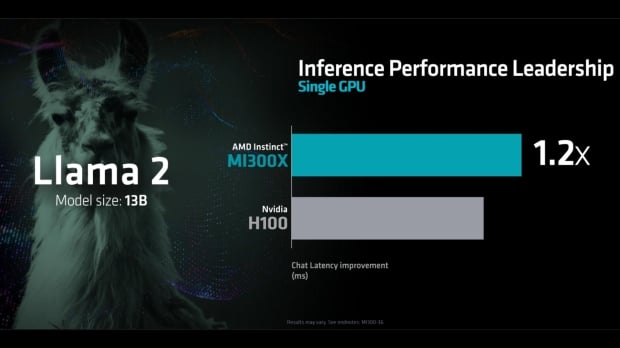

[UPDATE: AMD has responded to the below with updated benchmark results and new optimizations of its own to show that the MI300X still has the performance advantage.]

AMD, like all of the big players in the chip game, is going all in on AI hardware, and with the recent launch of its flagship MI300X GPU, the company made some bold claims comparing the MI300X's performance with that of NVIDIA's powerful H100 GPU. AMD says the MI300X is up to 20% faster than the H100 in a direct one-to-one comparison and up to 60% faster in an eight-GPU versus eight-GPU server comparison.

Every slide in AMD's 'Advancing AI' presentation that compared the performance of the AMD Instinct MI300X with the NVIDIA H100 Tensor Core GPU shows the MI300X coming out on top or, at worst, performing on par. In response, NVIDIA has presented its own results showing that the H100 GPU is 2X faster than the MI300X.

"At a recent launch event, AMD talked about the inference performance of the H100 GPU compared to that of its MI300X chip," NVIDIA's Dave Salvator and Ashraf Eassa write. "The results shared did not use optimized software, and the H100, if benchmarked properly, is 2X faster."

Benchmarking "properly" means using NVIDIA's latest TensorRT-LLM kernel optimizations for the Hopper architecture, which significantly alter the results AMD displayed during its presentation.

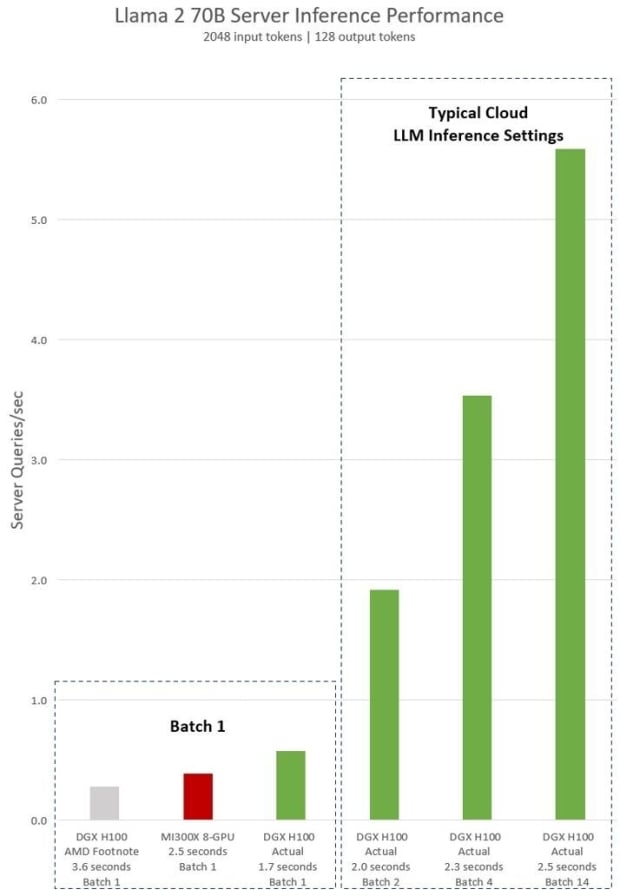

Llama 2 70B, a model used in AMD's presentation, is greatly accelerated by these optimizations. NVIDIA's response throws no shade; it is a matter-of-fact article titled 'Achieving Top Inference Performance with the NVIDIA H100 Tensor Core GPU and NVIDIA TensorRT-LLM.' The chart above shows "Llama 2 70B server inference performance in queries per second with 2,048 input tokens and 128 output tokens for 'Batch 1' and various fixed response time settings," with a clear victory for the H100 GPU.

The chart also covers typical cloud settings for AI, where inference requests are handled in larger batches, an industry-standard practice. AMD didn't include MI300X results for this particular real-world use case, so NVIDIA has supplied its own H100 figures, showcasing that the "8-GPU DGX H100 server can process over five Llama 2 70B inferences per second." Naturally, NVIDIA didn't put the MI300X to the test here, which makes the H100's performance look even more impressive.

NVIDIA's response does imply that not using the "publicly available NVIDIA TensorRT-LLM" update was a deliberate move on AMD's part. Was it meant to make AMD's flagship AI GPU, the MI300X, look better than it is? Possibly.

Either way, this is one of those mess-around-and-find-out situations that come with using cherry-picked benchmarks in a presentation.