NVIDIA has unveiled the new Vera Rubin AI platform, designed to advance AI into its next phase of reasoning, automation, agentic workloads, and continuous responses. However, not all of that power will go to the many AI server farms around the world; some of it is destined for off-planet use.

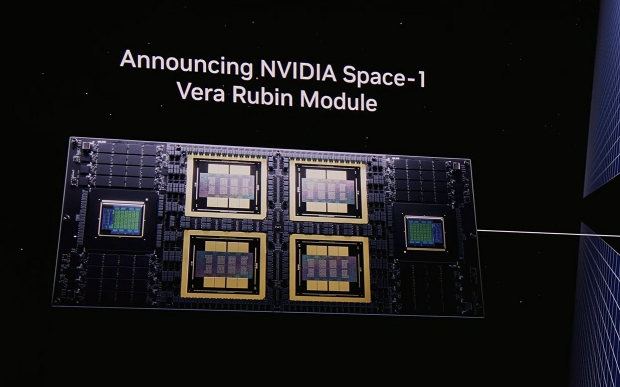

During NVIDIA's GTC 2026 presentation, CEO Jensen Huang unveiled the Vera Rubin Space Module, which Huang says will deliver up to 25x more AI compute than the Hopper-generation H100. According to the announcement, six commercial space companies have already adopted the new module, which NVIDIA says is designed specifically for orbital data centers running LLMs.

Huang explained during the keynote that NVIDIA wants to expand its AI computing stack beyond Earth, leading to the creation of "AI factories in space". The Vera Rubin Space Module is designed to solve a current data-transfer problem in space: satellites capture large volumes of data and then have to send it back to Earth for processing. That round trip adds latency and demands downlink bandwidth. With the Vera Rubin Space Module, the idea is to put inference compute much closer to where the data is generated, reducing latency and overall processing time.
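To make the bandwidth argument concrete, here is a rough back-of-the-envelope sketch in Python. Every number in it (capture size, result size, link rate) is a hypothetical placeholder chosen for illustration, not a figure from NVIDIA or the keynote, and the helper name `downlink_seconds` is invented for this example.

```python
def downlink_seconds(data_bytes: float, link_bits_per_second: float) -> float:
    """Time needed to transmit data_bytes over a link of the given bit rate."""
    return data_bytes * 8 / link_bits_per_second


# Hypothetical, illustrative values only (not from the announcement).
RAW_CAPTURE_BYTES = 50e9   # assumed raw sensor capture: 50 GB
RESULT_BYTES = 5e6         # assumed on-orbit inference output: 5 MB
LINK_BPS = 1e9             # assumed downlink rate: 1 Gbit/s

# Option A: send everything to the ground and process it there.
raw_time = downlink_seconds(RAW_CAPTURE_BYTES, LINK_BPS)

# Option B: run inference in orbit and send only the results.
result_time = downlink_seconds(RESULT_BYTES, LINK_BPS)

print(f"Downlink raw capture:  {raw_time:.2f} s")
print(f"Downlink results only: {result_time:.2f} s")
```

Under these assumed values, transmission time scales directly with the amount of data sent, so shrinking the payload is where the saving comes from. The sketch deliberately ignores the time spent running inference in orbit, ground-station availability, and link overhead, all of which would matter in a real estimate.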

Lower latency would mean answers arrive much faster than they do today, allowing decisions to be made more quickly. That could affect Earth observation, communications, autonomous spacecraft systems, and the pace of scientific discovery.
