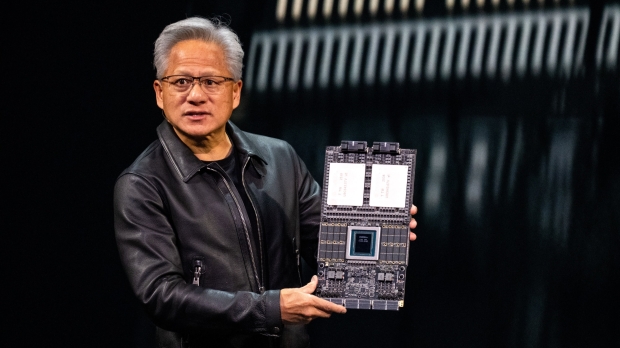

NVIDIA CEO Jensen Huang has officially unveiled the company's next-generation AI platform, which includes seven chips and six individual racks.

Vera Rubin was unveiled at GTC 2026, where Huang broke down the components that make up NVIDIA's new flagship AI platform. NVIDIA has positioned Vera Rubin as the foundation of a new era of AI infrastructure, with the platform designed specifically around AI inference and agent-based workloads. At its core, Vera Rubin is a full-stack AI supercomputing platform that combines a set of tightly integrated components, including the Vera CPU, Rubin GPU, NVLink interconnect, networking, and data processing units.

Huang explained during the GTC keynote that AI is moving toward continuous generation of responses, decision-making, and action-taking, and said Vera Rubin has been specifically designed to support this shift in AI inference. In NVIDIA's framing, Vera Rubin is built for AI systems that reason, plan, and act autonomously. As for performance, NVIDIA has said Vera Rubin will cut inference token costs by up to 10x and reduce the number of GPUs required for complex models.

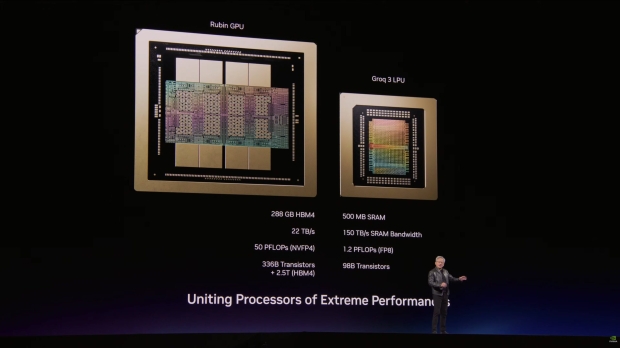

Each NVIDIA Rubin GPU features 288GB of HBM4 memory, providing up to 22 TB/s of total bandwidth, along with 50 PFLOPS of NVFP4 compute performance. Each Rubin GPU has 336 billion transistors, with an additional 2.5 trillion transistors in its HBM4 memory. The Vera CPU, meanwhile, is NVIDIA's first fully custom, next-generation Arm-based CPU designed solely for AI data centers. Its purpose is to keep the massive GPU cluster running at maximum efficiency 24/7.
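To put the per-GPU figures in perspective, here is a rough back-of-the-envelope sketch in Python. It uses only the three headline numbers quoted above (288GB, 22 TB/s, 50 PFLOPS) and assumes decimal units (1 GB = 10^9 bytes, 1 TB = 10^12 bytes) and peak rates. The function names are illustrative, not anything NVIDIA publishes, and real workloads will not hit these peaks.

```python
# Headline per-GPU figures quoted in the article (peak values).
HBM4_CAPACITY_GB = 288      # HBM4 capacity per Rubin GPU
HBM4_BANDWIDTH_TBPS = 22    # total memory bandwidth, TB/s
NVFP4_PFLOPS = 50           # NVFP4 compute, PFLOPS


def sweep_time_ms(capacity_gb: float, bandwidth_tbps: float) -> float:
    """Time in milliseconds to read the full memory once at peak bandwidth.

    Assumes decimal units: 1 GB = 1e9 bytes, 1 TB = 1e12 bytes.
    """
    seconds = (capacity_gb * 1e9) / (bandwidth_tbps * 1e12)
    return seconds * 1e3


def flops_per_byte(pflops: float, bandwidth_tbps: float) -> float:
    """Peak compute available per byte moved from memory (FLOP/byte)."""
    return (pflops * 1e15) / (bandwidth_tbps * 1e12)


if __name__ == "__main__":
    t = sweep_time_ms(HBM4_CAPACITY_GB, HBM4_BANDWIDTH_TBPS)
    ratio = flops_per_byte(NVFP4_PFLOPS, HBM4_BANDWIDTH_TBPS)
    print(f"Full HBM4 sweep at peak bandwidth: {t:.2f} ms")
    print(f"Peak NVFP4 FLOPs per byte of bandwidth: {ratio:.0f}")
```

Under these assumptions, reading all 288GB once takes about 13 ms, and the GPU can perform roughly 2,270 NVFP4 operations for every byte it pulls from memory. That ratio is one way to see why memory bandwidth, not raw compute, often sets the pace for inference.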