With the release of NVIDIA CUDA 13.1, the company is introducing the "largest and most comprehensive update to the CUDA platform since it was invented two decades ago." Alongside new features and performance improvements, the arrival of NVIDIA CUDA Tile is set to be a game-changer for AI programming.

The initial release is limited to the current Blackwell generation of GPU hardware, with support for more architectures planned for future versions. CUDA Tile programming lets users write their code one level of abstraction higher, in terms of chunks of data called tiles. From there, the compiler and runtime determine "the best way to launch that work onto individual threads," including using hardware such as tensor cores.

By removing the need to define each thread's "path of execution," the new CUDA Tile programming model reduces the effort required to write code that performs well across various GPU architectures.

Here are NVIDIA's Jonathan Bentz and Tony Scudiero explaining how it works.

With the evolution of computational workloads, especially in AI, tensors have become a fundamental data type. NVIDIA has developed specialized hardware to operate on tensors, such as NVIDIA Tensor Cores (TC) and NVIDIA Tensor Memory Accelerators (TMA), which are now integral to every new GPU architecture.

With more complex hardware, more software is needed to help harness these capabilities. CUDA Tile abstracts away tensor cores and their programming models so that code using CUDA Tile is compatible with current and future tensor core architectures.

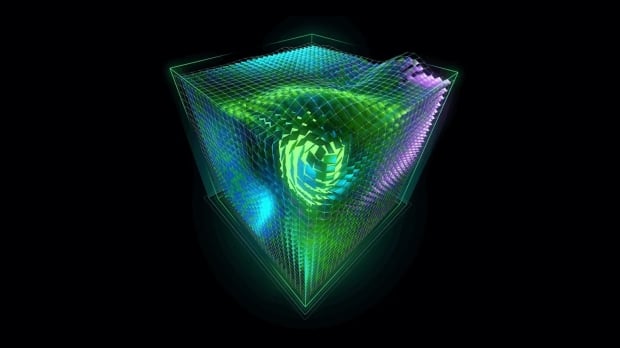

Tile-based programming enables you to program your algorithm by specifying chunks of data, or tiles, and then defining the computations performed on those tiles. You don't need to set how your algorithm is executed at an element-by-element level: the compiler and runtime will handle that for you.
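To make the contrast concrete, here is a minimal plain-Python sketch of the two styles applied to a vector addition. It is only an illustration of the idea and does not use the cuTile Python API or CUDA Tile IR: the names `TILE_SIZE`, `add_elementwise`, `tile_add`, and `add_tiled` are hypothetical, and `tile_add` merely stands in for the part that the compiler and runtime would decide how to execute.

```python
TILE_SIZE = 4  # hypothetical tile length, chosen only for illustration


def add_elementwise(x, y):
    """Element-level style: the code spells out the work for every index."""
    out = [0.0] * len(x)
    for i in range(len(x)):
        out[i] = x[i] + y[i]
    return out


def tile_add(x_tile, y_tile):
    """Stand-in for a tile operation; how it runs is hidden from the caller."""
    return [a + b for a, b in zip(x_tile, y_tile)]


def add_tiled(x, y, tile_size=TILE_SIZE):
    """Tile-level style: pick out tiles, then apply one operation per tile."""
    out = [0.0] * len(x)
    for start in range(0, len(x), tile_size):
        x_tile = x[start:start + tile_size]  # "load" a tile of x
        y_tile = y[start:start + tile_size]  # "load" a tile of y
        # "store" the result tile; the last tile may be shorter than tile_size
        out[start:start + tile_size] = tile_add(x_tile, y_tile)
    return out


x = [float(i) for i in range(10)]
y = [2.0 * i for i in range(10)]

# Both styles compute the same result; only the level of description differs.
assert add_tiled(x, y) == add_elementwise(x, y)
```

In the tiled version, the algorithm only says which chunks of data to operate on and what to compute on them. Everything inside `tile_add` could change without touching `add_tiled`, which is roughly the separation the tile model is described as providing.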

This higher-level code will be powered by CUDA Tile IR, a virtual instruction set that enables programming tile operations. NVIDIA notes that it isn't a replacement for traditional single-instruction, multiple-thread (SIMT) hardware and programming, as the two will coexist. In addition to CUDA Tile IR, the company has introduced NVIDIA cuTile Python, which brings CUDA Tile programming to Python, a language widely used for AI development.