The path toward combining GPUs and HBM chips is here, with NVIDIA and Meta "reviewing plans" to mount GPU cores on HBM.

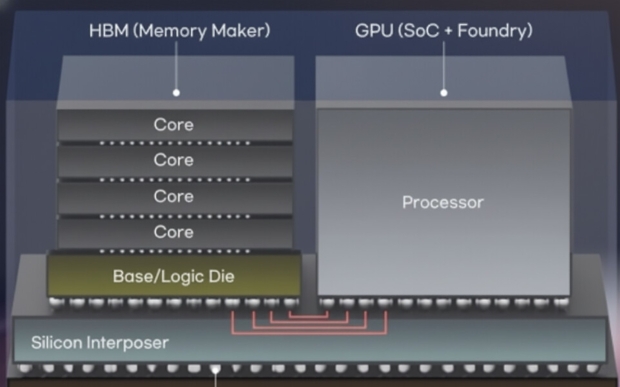

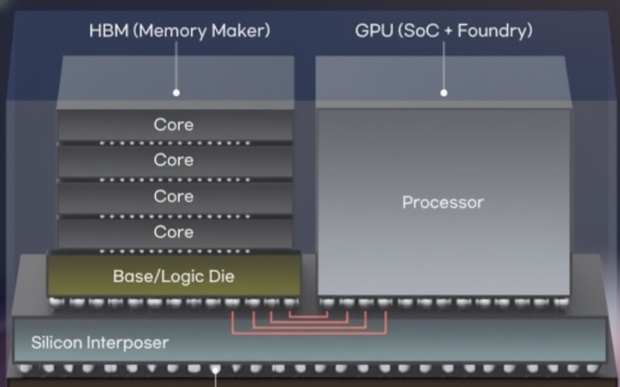

The general concept is to place GPU cores into the base die at the bottom of the HBM stack, with Meta and NVIDIA working with HBM leaders SK hynix and Samsung. A new report from Korean outlet SEDaily cites multiple industry insiders "familiar with the matter" as saying that "next-generation 'custom HBM' architectures are being discussed, and among them, a structure that directly integrates GPU cores into the HBM base die is being pursued".

HBM (High Bandwidth Memory) is widely used on AI GPUs from both NVIDIA and AMD, with next-gen HBM4 right around the corner and HBM4E not far behind it. Its incredibly high memory bandwidth makes it a natural fit for AI applications that move massive amounts of data.

The base die is responsible for communication between the memory and everything else attached to it, with the next step for HBM being a "controller" that is featured on HBM4 in 2026. The industry wants to see HBM4 boost performance and efficiency by adding a semiconductor capable of controlling the memory.

Placing GPU cores onto the base die with HBM is considered "technology several steps ahead of the HBM4 controller". When it comes to GPUs and CPUs, a core is the basic unit capable of independent computation: a 4-core GPU has four cores that can each perform computation, so more cores generally means more computing performance. That applies to consumer gaming GPUs and CPUs alike, while the most insane amount of computational power comes from the very latest AI GPUs.

However, putting GPU cores on the HBM base die would distribute some of the computational functions that were previously handled only in the GPU to the memory, which would reduce data movement and lower the load on the main GPU body.

An industry official explains this process: "In AI computation, an important factor is not just speed but also energy efficiency. Reducing the physical distance between memory and computational units can reduce both data transfer latency and power consumption".
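As a rough illustration of the official's point, the toy model below estimates how much data-movement energy is saved when part of the traffic is handled by cores in the HBM base die instead of crossing over to the main GPU. The per-bit energy figures and the function names are hypothetical placeholders chosen for this sketch, not measured values for any real HBM generation or GPU.

```python
# Toy model: energy spent moving data between memory and compute.
# ASSUMPTION: both pJ/bit figures below are made-up placeholders for
# illustration only; they are not taken from the report or any datasheet.
OFF_STACK_PJ_PER_BIT = 4.0  # hypothetical cost of moving a bit HBM -> main GPU
IN_STACK_PJ_PER_BIT = 1.0   # hypothetical cost of moving a bit HBM -> base-die core


def transfer_energy_joules(num_bytes: float, picojoules_per_bit: float) -> float:
    """Energy needed to move `num_bytes` at a given per-bit cost."""
    return num_bytes * 8 * picojoules_per_bit * 1e-12


def near_memory_energy_joules(
    num_bytes: float,
    fraction_in_stack: float,
    off_stack_pj: float = OFF_STACK_PJ_PER_BIT,
    in_stack_pj: float = IN_STACK_PJ_PER_BIT,
) -> float:
    """Energy when a fraction of the traffic stays inside the HBM stack."""
    if not 0.0 <= fraction_in_stack <= 1.0:
        raise ValueError("fraction_in_stack must be between 0 and 1")
    in_stack_bytes = num_bytes * fraction_in_stack
    off_stack_bytes = num_bytes - in_stack_bytes
    return (
        transfer_energy_joules(in_stack_bytes, in_stack_pj)
        + transfer_energy_joules(off_stack_bytes, off_stack_pj)
    )


def savings_fraction(num_bytes: float, fraction_in_stack: float) -> float:
    """Share of data-movement energy saved versus sending everything to the GPU."""
    baseline = transfer_energy_joules(num_bytes, OFF_STACK_PJ_PER_BIT)
    combined = near_memory_energy_joules(num_bytes, fraction_in_stack)
    return 1.0 - combined / baseline


if __name__ == "__main__":
    one_gigabyte = 1e9
    saved = savings_fraction(one_gigabyte, fraction_in_stack=0.5)
    print(f"Estimated data-movement energy saved: {saved:.1%}")
```

The model only counts the energy of moving bits and ignores the power drawn by the base-die cores themselves, so it should be read as a way to see the shape of the trade-off rather than as a prediction.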

It's not an easy road for semiconductor companies and tech giants, but with the companies involved -- NVIDIA, SK hynix, Samsung, and Meta, which wants this for its AI infrastructure -- it is a great goal to see evolve in the months and years ahead. The technical challenges involve the Through-Silicon Via (TSV) process, which leaves very limited space for GPU cores in the HBM base die.

GPU computational cores use a lot of power and generate significant heat -- NVIDIA's next-next-gen Rubin Ultra AI GPUs will be consuming an incredible 2300W of power each, and the same goes for AMD's future Instinct MI450X that will use 2300W -- so adding that kind of power and heat load to a memory stack could obviously cause trouble.

The new development could be a massive benefit to the industry, but it's quite the task for semiconductor foundries, and there aren't a lot of them. TSMC is the absolute dominant force in the semiconductor industry, with Samsung Foundry and Intel Foundry in the mix as well.

Kim Joung-ho, a professor in the School of Electrical Engineering at KAIST, said: "The speed of technological transition where the boundary between memory and system semiconductors collapses for AI advancement will accelerate. Domestic companies must expand their ecosystem beyond memory into the logic sector to preempt the next-generation HBM market".