AMD has confirmed that it will launch its new Radeon AI PRO R9700 workstation graphics card on July 23. The card is built on the full-fat Navi 48 GPU die and features 32GB of GDDR6 memory.

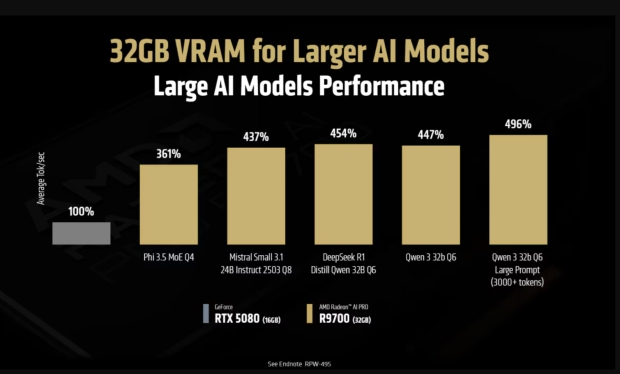

The company has specifically tuned the Radeon AI PRO R9700 for lower-precision calculations and demanding AI workloads, with AMD claiming up to 496% faster inference on large transformer models than NVIDIA's Blackwell-based GeForce RTX 5080.

The Radeon AI PRO R9700 will also cost around half as much as NVIDIA's new RTX PRO Blackwell GPUs. At launch, however, it will only be offered inside turnkey workstations from OEM partners like Boxx and Velocity Micro.

Enthusiasts who want to get their hands on the RDNA 4-powered Radeon AI PRO R9700 as a standalone card will have to wait until later in Q3 2025, when AIB partners like ASRock, PowerColor, and others release their own versions. Early listings point to a price of around $1,250 for the Radeon AI PRO R9700, considerably more than the $599 Radeon RX 9070 XT, yet well below NVIDIA's workstation GPUs.

AMD pitches the Radeon AI PRO R9700 at natural language processing, text-to-image generation, generative design, and other high-end workloads that rely on large models and memory-intensive pipelines. Its 32GB of VRAM allows production-scale inference, local fine-tuning, and multi-modal workflows to run entirely on-premises, without reaching out to the cloud. Compared with cloud-based solutions, this means more performance, lower latency, and increased data security, since the data never has to leave the workstation.
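To put that 32GB figure into perspective, here's a rough, back-of-the-envelope sketch of which model sizes fit on a single card. It assumes that weight memory is simply the parameter count multiplied by the bytes per parameter at a given precision, and it ignores the KV cache, activations, and runtime overhead, so real-world usage will be higher. The model sizes and the `weights_gb`/`fits` helpers are illustrative and hypothetical, not AMD figures or tools.

```python
# Rough, weights-only VRAM estimate (hypothetical helper, not an AMD tool).
# Assumption: weight memory ~= parameter count x bytes per parameter.
# KV cache, activations and runtime overhead are ignored, so real usage is higher.
BYTES_PER_PARAM = {"fp16": 2.0, "fp8": 1.0, "int4": 0.5}

VRAM_GB = 32  # Radeon AI PRO R9700 memory capacity


def weights_gb(params_billions: float, precision: str) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    # Billions of parameters x bytes per parameter = billions of bytes = GB.
    return params_billions * BYTES_PER_PARAM[precision]


def fits(params_billions: float, precision: str, vram_gb: float = VRAM_GB) -> bool:
    """True if the weights alone fit within the given VRAM budget."""
    return weights_gb(params_billions, precision) <= vram_gb


if __name__ == "__main__":
    # Illustrative model sizes, in billions of parameters.
    for params in (8, 14, 32, 70):
        for prec in ("fp16", "fp8", "int4"):
            size = weights_gb(params, prec)
            verdict = "fits" if fits(params, prec) else "does not fit"
            print(f"{params}B @ {prec}: ~{size:.0f} GB -> {verdict} in {VRAM_GB} GB")
```

Under these simplified assumptions, an 8B model at FP16 needs about 16GB, a 32B model only leaves real headroom once it's quantized to 4-bit (about 16GB), and a 70B model won't fit on a single card even at 4-bit (about 35GB).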

There's also full compatibility with AMD's open ROCm 6.3 platform, which gives developers access to leading frameworks like PyTorch, ONNX Runtime, and TensorFlow. This means AI models can be built, tested, and deployed efficiently on local workstations, again without relying on the cloud.

The Radeon AI PRO R9700 debuts in a sleek dual-slot design with a blower-style cooler, which exhausts hot air out the rear of the card so that several cards can sit side by side. That makes multi-GPU configurations easy to build, and with them it's simple to expand memory capacity, deploy parallel inference pipelines, and sustain high-throughput AI infrastructure in enterprise environments.