NVIDIA has published a new blog post providing some more details about the next level of performance offered by its new Blackwell GPU architecture.

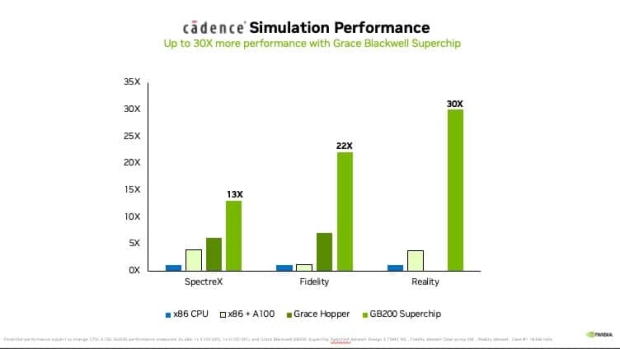

The new blog post by NVIDIA shows the gigantic performance leap that Blackwell will deliver for the research industry, including quantum computing, drug discovery, fusion energy, physics-based simulations, weather simulations, scientific computing, and more.

NVIDIA has another major goal with Blackwell beyond industry-leading AI performance: Blackwell can simulate weather patterns at 200x lower cost than Hopper while using 300x less energy, and it can run digital twin simulations encompassing the entire globe at 65x lower cost and with 58x less energy. Absolutely astonishing numbers from Blackwell.

The new NVIDIA Blackwell AI GPUs will also deliver roughly 30% more FP64 TFLOPs than Hopper: a single Hopper H100 AI GPU offers 34 TFLOPs of FP64 compute performance, while a single Blackwell B100 (not even the B200) will offer 45 TFLOPs. The GB200 Superchip, however, packs not one but two Blackwell GPUs for around 90 TFLOPs of FP64 compute performance.
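To sanity-check those FP64 figures, here is a minimal Python sketch of the arithmetic. It uses only the numbers quoted above; the constant and function names are illustrative, and the Superchip total assumes a simple sum of two B100-class GPUs, which is an assumption rather than a measured result.

```python
# FP64 figures quoted in the article, in TFLOPs.
H100_FP64_TFLOPS = 34.0
B100_FP64_TFLOPS = 45.0

# The GB200 Superchip pairs two Blackwell GPUs (per the article).
GPUS_PER_GB200 = 2


def percent_uplift(new: float, old: float) -> float:
    """Return the percentage increase of `new` over `old`."""
    return (new / old - 1.0) * 100.0


uplift = percent_uplift(B100_FP64_TFLOPS, H100_FP64_TFLOPS)

# Assumption: per-GPU throughput simply adds up, with no scaling losses.
gb200_total = GPUS_PER_GB200 * B100_FP64_TFLOPS

print(f"B100 vs H100 FP64 uplift: {uplift:.1f}%")              # 32.4%
print(f"GB200 Superchip FP64 (simple sum): {gb200_total:.0f} TFLOPs")  # 90 TFLOPs
```

The per-GPU uplift works out to about 32%, which lines up with the "30% more" headline figure, and doubling the B100 number gives the roughly 90 TFLOPs quoted for the GB200 Superchip.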

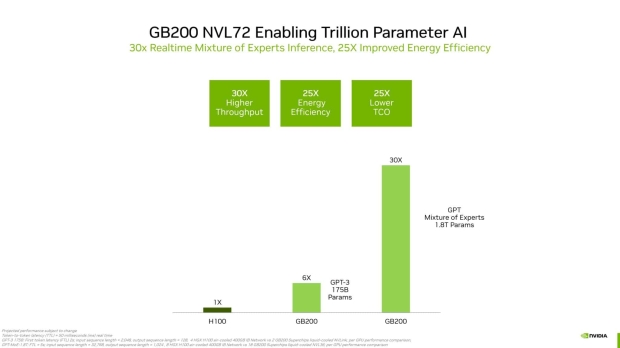

NVIDIA shared some new numbers for its B200 AI GPU platform: a gigantic 30x higher throughput while using 25x less energy and delivering a 25x lower TCO (Total Cost of Ownership). NVIDIA also compared the GB200 NVL72 system against 72 x86 CPUs, showing an 18x performance gain over the CPUs and a 3.27x lead over the current-gen GH200 NVL72 system in a database join query.