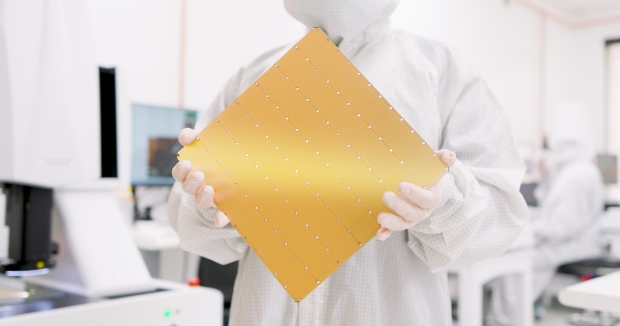

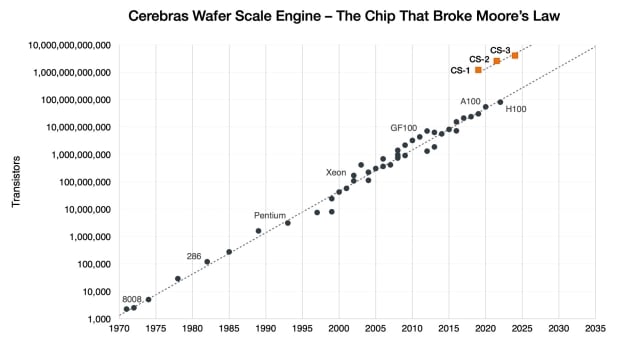

Cerebras Systems has just revealed its third-generation wafer-scale engine (WSE) chip, WSE-3, which packs 4 trillion transistors and 900,000 AI-optimized cores.

The company keeps pushing out new AI processors, and the specifications of Cerebras' new WSE-3 chip are truly crazy: 4 trillion transistors, 900,000 AI-optimized cores, 125 petaflops of peak AI performance, and 44GB of on-chip SRAM, all made on the 5nm process node at TSMC.

WSE-3 also features either 1.5TB, 12TB, or 1.2PB of external memory (yeah, 1.2 petabytes of memory), making it capable of training AI models with up to 24 trillion parameters. Cerebras says its new WSE-3 has a die size of 46,225mm², which is an insane 57x larger than NVIDIA's current H100 AI GPU, which measures 814mm².

NVIDIA's current H100 AI GPU features 16,896 cores and 528 Tensor Cores, but the new Cerebras WSE-3 features an insane 900,000 AI-optimized cores (per chip), which is a massive 52x increase over the H100. Incredible stuff, truly incredible stuff.
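As a quick sanity check on that multiplier, here is a minimal sketch using only the core counts quoted above. It assumes the 52x figure compares WSE-3's cores against the H100's regular cores and Tensor Cores added together; that reading is an inference from the arithmetic, not something Cerebras has confirmed here.

```python
# Core counts as quoted in the article.
wse3_cores = 900_000
h100_cores = 16_896
h100_tensor_cores = 528

# Ratio against the regular H100 cores only.
ratio_vs_cores = wse3_cores / h100_cores

# Ratio against regular cores plus Tensor Cores (assumed basis for "52x").
ratio_vs_combined = wse3_cores / (h100_cores + h100_tensor_cores)

print(f"vs. H100 cores only:          {ratio_vs_cores:.1f}x")
print(f"vs. H100 cores + Tensor Cores: {ratio_vs_combined:.1f}x")
```

Only the combined count rounds to the quoted 52x; against the 16,896 cores alone, the ratio comes out closer to 53x.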

Now, let's jump into memory: WSE-3 has 21 petabytes per second of memory bandwidth, which is a mind-boggling 7000x more than the H100, along with 214 petabits per second of fabric bandwidth (3715x more than the H100). Cerebras also slaps on 44GB of on-chip memory, which is 880x more than NVIDIA's current H100 AI GPU. Every number Cerebras has puts its competitors to shame.
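To put those multipliers in context, the short sketch below works backwards from the quoted WSE-3 figures to the H100 baselines they imply. It assumes decimal units (1GB = 10^9 bytes) and that each multiplier is a plain ratio of the two chips' figures; the variable names are purely illustrative.

```python
# Decimal unit prefixes (an assumption; the article does not say which it uses).
PETA, TERA, GIGA, MEGA = 10**15, 10**12, 10**9, 10**6

# (quoted WSE-3 figure, quoted multiple over the H100)
claims = {
    "memory_bandwidth_bytes_per_s": (21 * PETA, 7000),
    "fabric_bandwidth_bits_per_s": (214 * PETA, 3715),
    "on_chip_memory_bytes": (44 * GIGA, 880),
}

# Divide each WSE-3 figure by its multiple to get the implied H100 baseline.
implied_h100 = {name: value / multiple for name, (value, multiple) in claims.items()}

print(f"Implied H100 memory bandwidth: {implied_h100['memory_bandwidth_bytes_per_s'] / TERA:.1f} TB/s")
print(f"Implied H100 fabric bandwidth: {implied_h100['fabric_bandwidth_bits_per_s'] / TERA:.1f} Tb/s")
print(f"Implied H100 on-chip memory:   {implied_h100['on_chip_memory_bytes'] / MEGA:.0f} MB")
```

Under those assumptions, the comparison works out to roughly 3 TB/s of memory bandwidth, about 57.6 Tb/s of fabric bandwidth, and 50MB of on-chip memory on the H100 side. Note that the memory bandwidth comparison pits WSE-3's on-chip SRAM against what appears to be the H100's off-chip memory bandwidth.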

How much is the new WSE-3 AI chip? That's something we don't know, but don't expect it to be cheap.

Andrew Feldman, CEO and co-founder of Cerebras, explained: "When we started on this journey eight years ago, everyone said wafer-scale processors were a pipe dream. We could not be more proud to be introducing the third-generation of our groundbreaking wafer-scale AI chip. WSE-3 is the fastest AI chip in the world, purpose-built for the latest cutting-edge AI work, from mixture of experts to 24 trillion parameter models. We are thrilled to bring WSE-3 and CS-3 to market to help solve today's biggest AI challenges."

Cerebras WSE-3 specification highlights:

- 4 trillion transistors

- 900,000 AI cores

- 125 petaflops of peak AI performance

- 44GB on-chip SRAM

- 5nm TSMC process

- External memory: 1.5TB, 12TB, or 1.2PB

- Trains AI models up to 24 trillion parameters

- Cluster size of up to 2048 CS-3 systems

Rick Stevens, Argonne National Laboratory Associate Laboratory Director for Computing, Environment and Life Sciences, said: "As a long-time partner of Cerebras, we are interested to see what's possible with the evolution of wafer-scale engineering. CS-3 and the supercomputers based on this architecture are powering novel scale systems that allow us to explore the limits of frontier AI and science. Cerebras' audacity continues to pave the way for the future of AI."