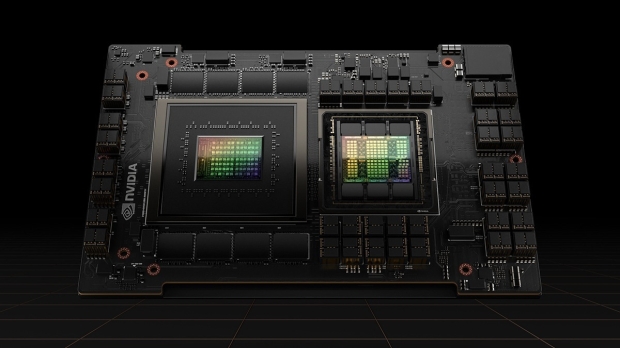

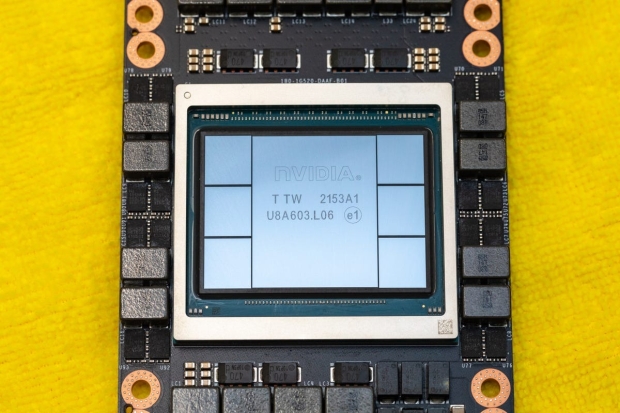

NVIDIA has greatly improved AI GPU shipments over the last few months: lead times had swollen to 8-11 months and are now down to a much better 3-4 months. But some AI GPU customers are offloading their high-end AI chips... yeah, they're selling the H100 AI GPUs they've paid for.

Why? Some companies purchased oodles of NVIDIA's flagship H100 Tensor Core AI GPUs and are now finding that it's easier to rent AI processing power from AI cloud providers like Amazon Web Services, Google Cloud, and Microsoft Azure.

A new report from The Information states that some companies are reselling their NVIDIA H100 AI GPUs or reducing their future AI GPU orders because AI GPUs are no longer as scarce and the costs of maintaining unused hardware are huge. If we rewind to just 6 months ago, companies were tripping over themselves trying to get as many of the most powerful AI GPUs as they could. Now, with increased AI GPU availability, reduced waiting times, and next-gen AI GPU hardware on the horizon, some of them are selling their AI GPUs.

AWS recently made it easier to rent NVIDIA's high-end H100 AI GPUs with a new service that lets customers schedule GPU rentals for shorter periods, which helps with both the availability of AI GPU hardware and the location of the chips. Why buy an expensive AI GPU when you can rent one? You'll own nothing, and you'll be happy, I guess.

Chip supply constraints aren't hindering the AI business the way they were last year, and NVIDIA is still powering through, holding an estimated 90%+ of the AI GPU market with its H100 AI GPU. It has its beefed-up H200 coming soon, and the next-gen Blackwell B100 AI GPU is also around the corner. AMD has its Instinct MI300 AI GPU out in the wild now, and other companies are going all-in on AI chip production.

But selling expensive NVIDIA H100 AI GPUs... we're in weird times, my friends.