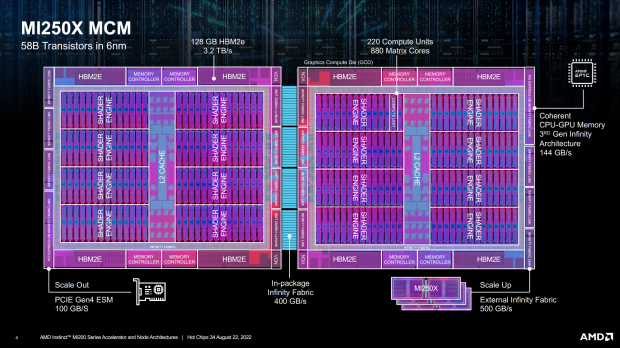

We're seeing some juicy silicon news coming out of the Hot Chips 34 conference, where AMD showed off the GPU block diagram for its upcoming MI250X MCM GPU.

AMD detailed the MI250X at Hot Chips 34, sharing a GPU block diagram that lays out all the good stuff in terms of specifications. The MI250X features not one but two GPU dies for a total of 58 billion transistors, with TSMC making the GPU on its 6nm process node.

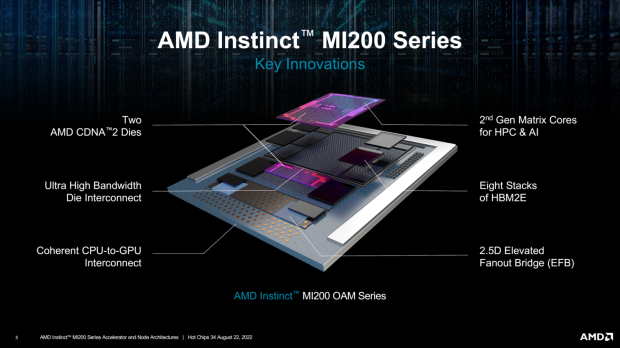

We also have 128GB of HBM2e memory with a huge 3.2TB/sec of memory bandwidth, plus in-package Infinity Fabric with 400GB/sec of bandwidth between the two GPUs. All in all, 10 chips make up the MI250X "Aldebaran" GPU, a mix of GPU dies and HBM2e memory stacks (4 x HBM2e stacks per GPU for 8 x HBM2e stacks total + 2 x GPUs = 10 chips in total).
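The chip count above is easy to sanity-check. This is only a rough sketch using the figures quoted in this article; the even split of bandwidth across the stacks is an assumption, and the variable names are made up for illustration.

```python
# Package composition of the MI250X, using the figures quoted in the article.
GPU_DIES = 2
HBM_STACKS_PER_GPU = 4
TOTAL_BANDWIDTH_TB_S = 3.2  # aggregate memory bandwidth in TB/sec

hbm_stacks = GPU_DIES * HBM_STACKS_PER_GPU
total_chips = GPU_DIES + hbm_stacks

# Assumption: the aggregate bandwidth is shared evenly by all HBM2e stacks.
bandwidth_per_stack_gb_s = TOTAL_BANDWIDTH_TB_S * 1000 / hbm_stacks

print(f"HBM2e stacks: {hbm_stacks}")
print(f"Chips on the package: {total_chips}")
print(f"Bandwidth per stack: {bandwidth_per_stack_gb_s:.0f} GB/sec")
```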

Each GPU die has 110 Compute Units, giving the MCM GPU 220 Compute Units in total. That works out to 7,040 stream processors per GPU die for a total of 14,080 stream processors on the MI250X GPU.
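Here is a quick way to check the stream processor math. The 64 stream processors per Compute Unit is an assumption that the article does not state directly; it is what the 14,080 total divided by 220 Compute Units implies.

```python
# Compute Unit and stream processor totals for the MI250X.
COMPUTE_UNITS_PER_DIE = 110
GPU_DIES = 2
# Assumption: 64 stream processors per Compute Unit (14,080 / 220 = 64).
STREAM_PROCESSORS_PER_CU = 64

total_compute_units = COMPUTE_UNITS_PER_DIE * GPU_DIES
stream_processors_per_die = COMPUTE_UNITS_PER_DIE * STREAM_PROCESSORS_PER_CU
total_stream_processors = stream_processors_per_die * GPU_DIES

print(f"Compute Units: {total_compute_units}")
print(f"Stream processors per die: {stream_processors_per_die:,}")
print(f"Stream processors in total: {total_stream_processors:,}")
```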

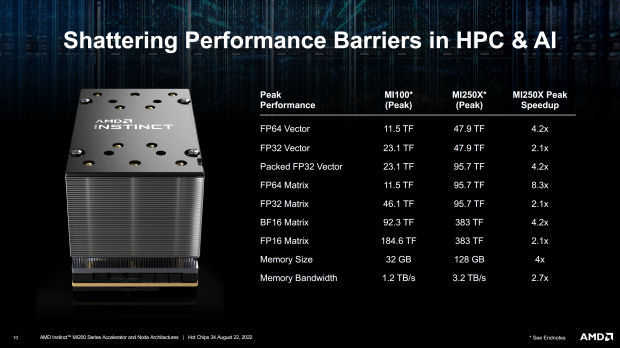

AMD compares its new MI250X MCM GPU against its current-gen MI100 GPU, where the MI100 gets destroyed across the board in terms of performance and memory bandwidth. We're talking about 2.1x gains at a minimum (FP32 Matrix and FP16 Matrix performance) while there's a much larger 8.3x increase in FP64 Matrix performance. The new MI250X GPU is a monster compared to the current MI100 GPU.
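For the curious, the speedups can be roughly reproduced from peak throughput figures. The TFLOPs values below are not from this article; they are assumed from AMD's public spec sheets, and the MI100 FP64 entry is its vector rate, since that is what the 8.3x figure appears to be measured against.

```python
# Peak throughput in TFLOPs. These values are assumptions taken from public
# spec sheets, not from the article itself.
MI100_TFLOPS = {"FP64": 11.5, "FP32 Matrix": 46.1, "FP16 Matrix": 184.6}
MI250X_TFLOPS = {"FP64": 95.7, "FP32 Matrix": 95.7, "FP16 Matrix": 383.0}

# Speedup of the MI250X over the MI100 for each data type, to one decimal.
speedups = {
    name: round(MI250X_TFLOPS[name] / MI100_TFLOPS[name], 1)
    for name in MI100_TFLOPS
}

for name, factor in speedups.items():
    print(f"{name}: {factor}x")
```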

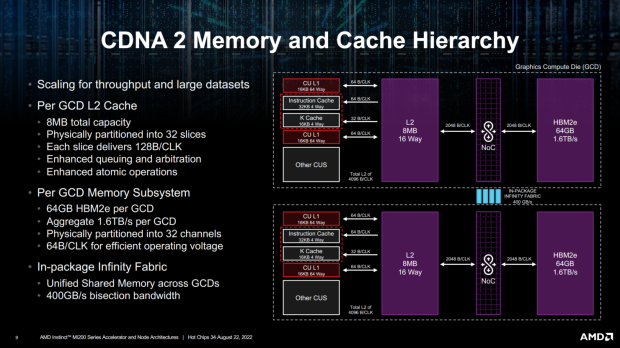

The new AMD Instinct MI250X and its MCM GPU is joined by 128GB of HBM2e memory, with AMD tapping 8 HBM2e stacks that each sit on their own 1024-bit interface (1024-bit x 8 = 8192-bit memory bus). Each stack is built from 2GB HBM2e DRAM dies, eight of them per stack, which makes 16GB of HBM2e memory capacity per stack x 8 stacks = 128GB of HBM2e memory in total.
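The memory numbers can be worked through in a few lines. Treat this as a sketch: the eight dies per stack is implied by the 16GB-per-stack figure rather than stated, and the 3.2Gbps per-pin data rate is an assumption that is not in this article.

```python
# HBM2e bus width, capacity and bandwidth for the MI250X.
STACKS = 8
BUS_WIDTH_PER_STACK_BITS = 1024
DIE_CAPACITY_GB = 2
DIES_PER_STACK = 8    # assumption: implied by 16GB per stack / 2GB per die
PIN_SPEED_GBPS = 3.2  # assumption: per-pin data rate in gigabits/sec

bus_width_bits = STACKS * BUS_WIDTH_PER_STACK_BITS
capacity_per_stack_gb = DIE_CAPACITY_GB * DIES_PER_STACK
total_capacity_gb = capacity_per_stack_gb * STACKS

# Bandwidth = bus width in bits x per-pin rate, divided by 8 bits per byte.
bandwidth_gb_s = bus_width_bits * PIN_SPEED_GBPS / 8

print(f"Memory bus: {bus_width_bits}-bit")
print(f"Capacity: {capacity_per_stack_gb}GB per stack, {total_capacity_gb}GB total")
print(f"Bandwidth: {bandwidth_gb_s:.1f} GB/sec")
```

Under those assumptions the bandwidth lands at roughly the 3.2TB/sec that AMD quotes.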

NVIDIA's current-gen Ampere A100 GPU has 80GB of HBM2e memory with 2TB/sec of memory bandwidth, while AMD destroys that with its MI250X MCM GPU featuring 128GB of HBM2e memory (48GB more) at 3.2TB/sec of memory bandwidth (1.2TB/sec more than the A100).
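Put side by side, the gap looks like this. It is a simple sketch using only the capacity and bandwidth figures quoted above; the dictionary layout is just for illustration.

```python
# Memory capacity (GB) and bandwidth (TB/sec) as quoted in the article.
A100 = {"capacity_gb": 80, "bandwidth_tb_s": 2.0}
MI250X = {"capacity_gb": 128, "bandwidth_tb_s": 3.2}

capacity_gain_gb = MI250X["capacity_gb"] - A100["capacity_gb"]
# Rounded to one decimal to hide floating-point noise in the subtraction.
bandwidth_gain_tb_s = round(MI250X["bandwidth_tb_s"] - A100["bandwidth_tb_s"], 1)

capacity_ratio = MI250X["capacity_gb"] / A100["capacity_gb"]
bandwidth_ratio = MI250X["bandwidth_tb_s"] / A100["bandwidth_tb_s"]

print(f"Capacity: +{capacity_gain_gb}GB ({capacity_ratio:.1f}x)")
print(f"Bandwidth: +{bandwidth_gain_tb_s}TB/sec ({bandwidth_ratio:.1f}x)")
```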

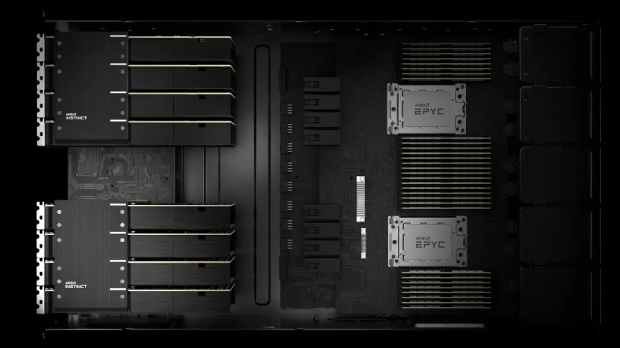

AMD already has its new Instinct MI200 CDNA 2 "Aldebaran" MCM GPU inside the world's fastest supercomputer: Frontier, which the Department of Energy (DOE) operates at Oak Ridge National Laboratory (ORNL) in Tennessee, USA. The system, costing up to $1.8 billion to build, is now the world's fastest supercomputer, overtaking the Fugaku supercomputer in Japan. ORNL's new supercomputer is powered by AMD 3rd Gen EPYC CPUs and AMD's newest Instinct MI250X GPUs.

- 9408 AMD 3rd Gen EPYC "Trento" CPUs

- 37,632 AMD Instinct MI250X GPUs

- 9.2 petabytes memory split between HBM and DDR4

- 37 petabytes of node-local storage

- 716 petabytes of center-wide storage

- 100% liquid cooled (using warm 85°F water)

Frontier is entirely water-cooled, with a huge 6000 gallons (22,700+ liters) of water being pumped through the supercomputer every 60 seconds, while there's a huge 90 miles (around 145km) of networking cables inside of Frontier. Astonishing numbers.
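Those Frontier numbers hold up to a quick check. This sketch uses the counts from the list above along with standard conversion factors (US gallons to liters, miles to kilometers); nothing here comes from ORNL beyond what the article quotes.

```python
# Frontier figures as quoted in the article.
CPUS = 9408
GPUS = 37632
WATER_GALLONS_PER_MINUTE = 6000
CABLE_MILES = 90

# Standard conversion factors.
LITERS_PER_US_GALLON = 3.785411784
KM_PER_MILE = 1.609344

gpus_per_cpu = GPUS / CPUS
water_liters_per_minute = WATER_GALLONS_PER_MINUTE * LITERS_PER_US_GALLON
cable_km = CABLE_MILES * KM_PER_MILE

print(f"GPUs per CPU: {gpus_per_cpu:.0f}")
print(f"Water flow: {water_liters_per_minute:,.0f} liters per minute")
print(f"Networking cable: {cable_km:.1f} km")
```

So each EPYC CPU is paired with four MI250X GPUs, and the metric conversions match the rounded figures above.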

AMD Instinct MI250X "Aldebaran" CDNA 2 GPU specs:

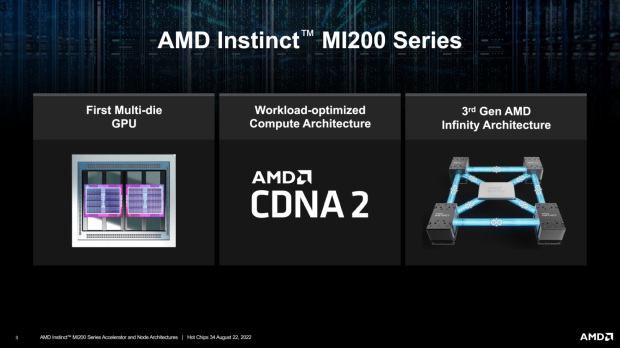

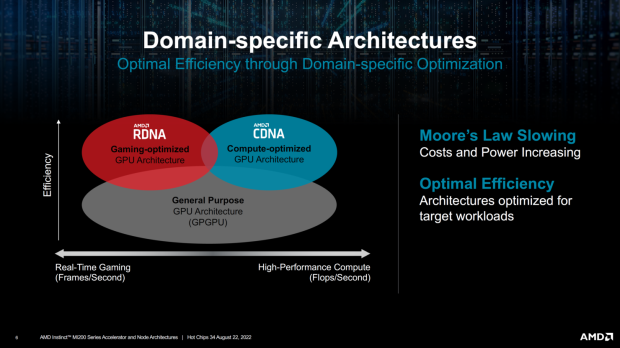

- GPU architecture: CDNA 2

- GPU process node: 6nm TSMC

- GPU chiplets: 2 (MCM = multi-chip module aka GPU chiplets)

- GPU cores: 14,080

- GPU clock speed: 1700MHz

- VRAM: 128GB HBM2e

- GPU memory bandwidth: 3.2TB/sec

- GPU memory bus: 8192-bit

- FP32 compute (matrix): 95.7 TFLOPs

- FP64 compute (vector): 47.9 TFLOPs

- TDP: 560W

- AMD CDNA 2 architecture - 2nd Gen Matrix Cores accelerating FP64 and FP32 matrix operations, delivering up to 4X the peak theoretical FP64 performance vs. AMD previous-gen GPUs.

- Leadership Packaging Technology - Industry-first multi-die GPU design with 2.5D Elevated Fanout Bridge (EFB) technology delivers 1.8X more cores and 2.7X higher memory bandwidth vs. AMD previous-gen GPUs, offering the industry's best aggregate peak theoretical memory bandwidth at 3.2 terabytes per second.

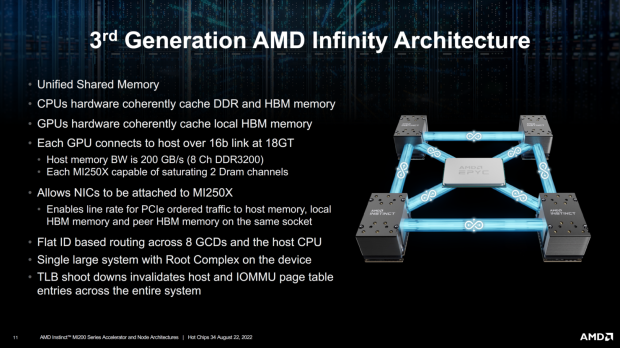

- 3rd Gen AMD Infinity Fabric technology - Up to 8 Infinity Fabric links connect the AMD Instinct MI200 with 3rd Gen EPYC CPUs and other GPUs in the node to enable unified CPU/GPU memory coherency and maximize system throughput, allowing for an easier on-ramp for CPU codes to tap the power of accelerators.
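The FP32 and FP64 figures in the spec list can be roughly derived from the Compute Unit count and clock speed. Consider this a sketch under assumptions: the 220 Compute Units and 1700MHz clock come from this article, but the FLOPs-per-clock values per Compute Unit are assumed (64 lanes x 2 for a fused multiply-add on the vector path, and double that on the matrix path) and are not stated in the source. The `peak_tflops` helper is hypothetical.

```python
# Peak theoretical throughput = Compute Units x clock x FLOPs per clock per CU.
COMPUTE_UNITS = 220
CLOCK_HZ = 1.7e9  # 1700MHz

# Assumptions (not from the article): FLOPs issued per clock by one CU.
FLOPS_PER_CLOCK_PER_CU = {
    "FP64 vector": 128,  # 64 lanes x 2 FLOPs per fused multiply-add
    "FP32 matrix": 256,  # assumed to be twice the vector rate
}


def peak_tflops(flops_per_clock_per_cu: int) -> float:
    """Return peak theoretical TFLOPs for the whole MCM GPU."""
    return COMPUTE_UNITS * CLOCK_HZ * flops_per_clock_per_cu / 1e12


for name, flops in FLOPS_PER_CLOCK_PER_CU.items():
    print(f"{name}: {peak_tflops(flops):.1f} TFLOPs")
```

These are peak theoretical numbers, so real workloads will land below them.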