NVIDIA has just announced its new second-gen DGX Station A100 server, which is powered by the new Ampere A100 Tensor Core GPUs. Check out the awesome video on it below:

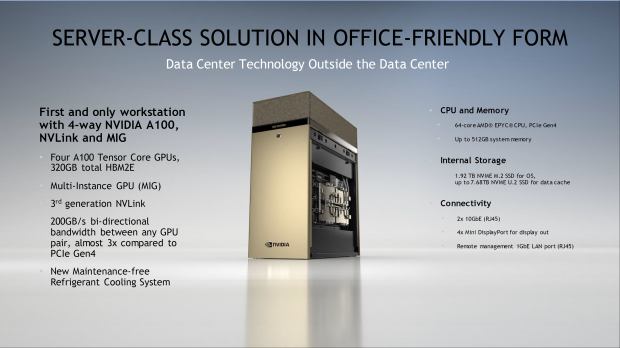

The newly upgraded NVIDIA DGX Station A100 is for those working with AI, machine learning and data science workloads. It is the fastest server in a box dedicated to AI research. Inside, we're looking at up to 4 x A100 Tensor Core GPUs with 80GB of HBM2e each -- so 320GB of HBM2e memory in total.

We also have a 64-core, 128-thread AMD EPYC CPU, up to 512GB of system memory, and up to 7.68TB of NVMe M.2 SSDs for storage. You'll also get 3rd-gen NVLink with 200GB/sec of bi-directional bandwidth between any GPU pair, and a kick-ass new maintenance-free refrigerant cooling system.

Organizations already using DGX Station include:

- BMW Group Production is using NVIDIA DGX Stations to explore insights faster as it develops and deploys AI models that improve operations.

- DFKI, the German Research Center for Artificial Intelligence, is using DGX Station to build models that tackle critical challenges for society and industry, including computer vision systems that help emergency services respond rapidly to natural disasters.

- Lockheed Martin is using DGX Station to develop AI models that use sensor data and service logs to predict the need for maintenance to improve manufacturing uptime, increase safety for workers, and reduce operational costs.

- NTT Docomo, Japan's leading mobile operator with over 79 million subscribers, uses DGX Station to develop innovative AI-driven services such as its image recognition solution.

- Pacific Northwest National Laboratory (PNNL) is using NVIDIA DGX Stations to conduct federally funded research in support of national security. PNNL focuses on technological innovation in energy resiliency and national security, and is a leading U.S. HPC center for scientific discovery, chemistry, Earth science, and data analytics.